Clear Sky Science

Task-irrelevant human and robot head movements bias gaze in humans who follow them through virtual reality

Why other people’s glances quietly steer your own

Imagine walking down a hallway behind someone and noticing them tilt their head toward a poster on the wall. Without thinking, you may find your own eyes drifting in the same direction, even if you don’t really care what they’re looking at. This study explores that everyday phenomenon in a virtual reality setting, asking a simple question: do we automatically follow where others look, even when it has nothing to do with what we’re trying to do—and does it matter if that “other” is a human or a robot?

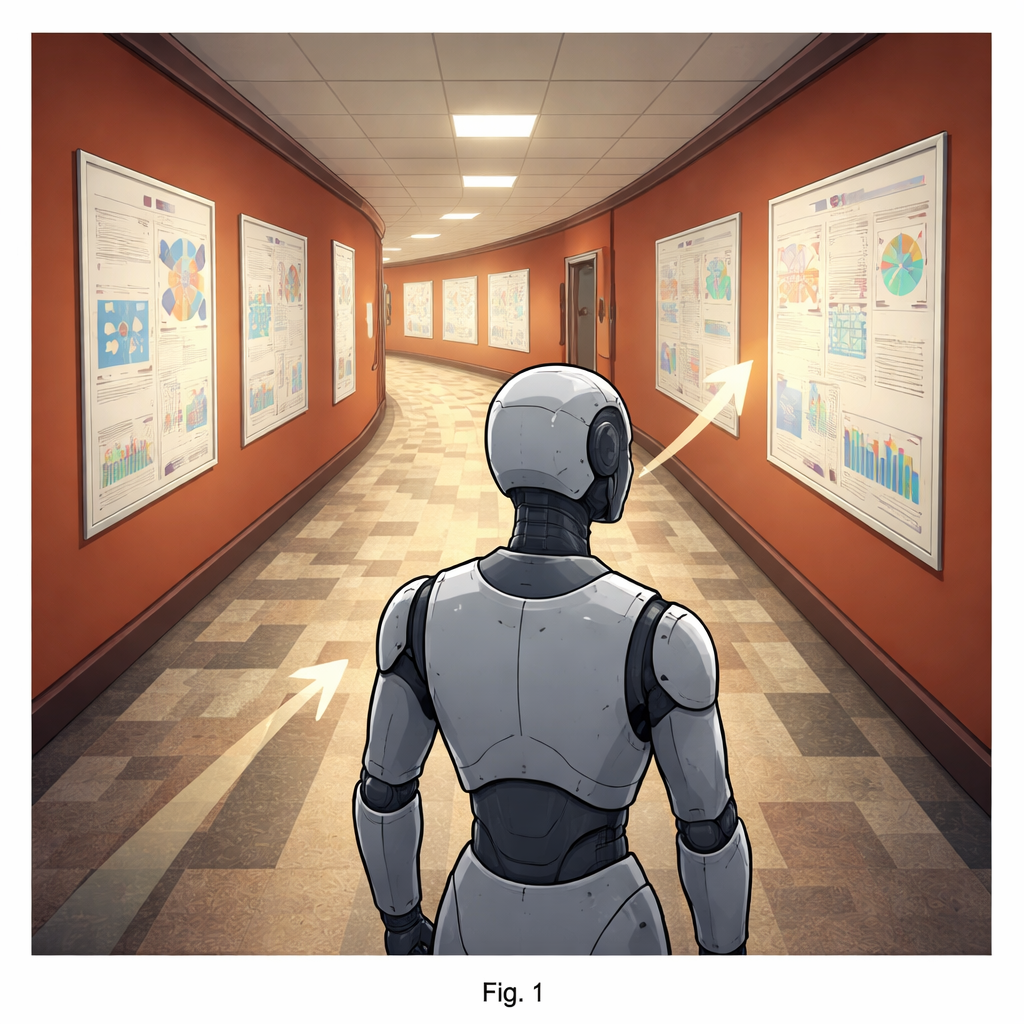

A virtual walk down the corridor

The researchers invited volunteers to put on a virtual reality headset and walk through a series of virtual corridors modeled on a university hallway. On both sides of each corridor, participants saw rows of realistic-looking scientific posters and a few closed doors, much like in an actual academic building. In front of each participant, an avatar led the way: sometimes a human figure with a natural walking motion, sometimes a robot that glided smoothly along. Participants could control their own speed and side-to-side position, but they were simply told to walk behind the avatar; nobody mentioned the posters or where the avatar was looking.

Head turns that should not matter—but do

Across the corridors, the key thing that changed was how the avatar moved its head. In some corridors, it never looked at the posters at all. In others, it briefly turned its head toward three different posters, sometimes all on one side of the hallway, sometimes split between left and right. Crucially, these head turns gave the participant no useful information about what to do: the posters were neutral and equally interesting (or uninteresting), and the avatar’s gaze did not signal where to walk or when to turn. From the participant’s point of view, these glances were completely irrelevant to the simple task of following along.

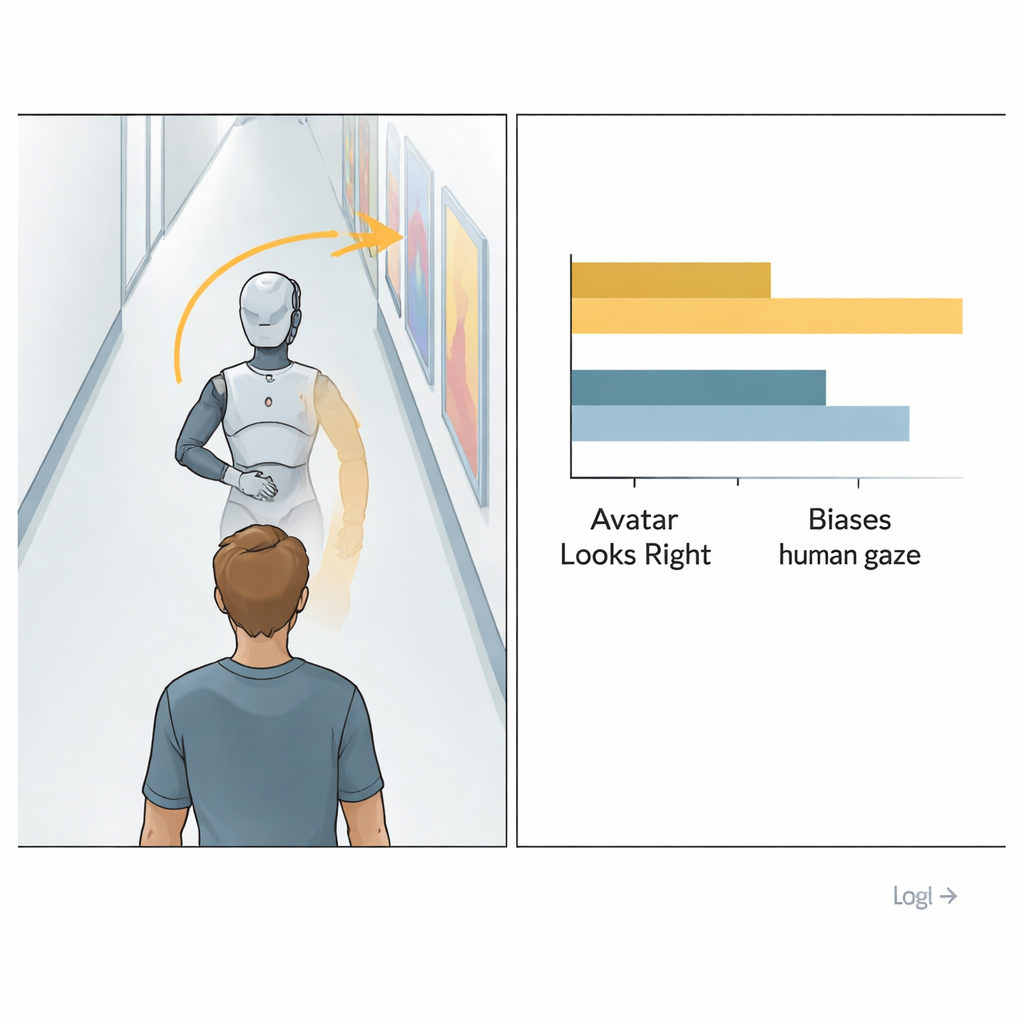

Eyes that echo another’s gaze

Using eye tracking built into the headset, the team measured where in the corridor participants were looking over time. They then compared how much time each person's gaze was directed to the left versus the right side. Although participants were told nothing about the avatar's gaze, their own looking direction clearly shifted toward the side where the avatar looked more often. When the avatar focused its head turns on the left-hand posters, participants tended to look more to the left; when it favored the right, their gaze followed suit. This bias appeared even though the overall amount of time people spent looking at any posters varied widely from person to person, suggesting a robust pull of social gaze on our attention.
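For readers curious how a left-versus-right comparison like this might be computed, here is a minimal sketch. It is not the authors' analysis: the function name `side_bias`, the 5-degree central dead zone, and the sample values are all hypothetical. It assumes gaze is recorded at a fixed rate as a horizontal angle in degrees (negative for left, positive for right), so that sample counts are proportional to looking time.

```python
from typing import Sequence


def side_bias(azimuths_deg: Sequence[float], dead_zone_deg: float = 5.0) -> float:
    """Return (right - left) / (right + left) for gaze samples outside a central zone.

    -1.0 means all sideways looking went left, +1.0 means all went right,
    and 0.0 means balanced (or no sideways looking at all).
    The dead zone is an illustrative assumption, not a value from the study.
    """
    left = sum(1 for a in azimuths_deg if a < -dead_zone_deg)
    right = sum(1 for a in azimuths_deg if a > dead_zone_deg)
    total = left + right
    if total == 0:
        return 0.0
    return (right - left) / total


# Made-up samples for illustration only (not data from the study).
left_cue = [-20.0, -15.0, 0.0, 12.0, -30.0, 2.0]
right_cue = [18.0, 25.0, -10.0, 0.0, 14.0, 1.0]

print(side_bias(left_cue))   # negative value: more time looking left
print(side_bias(right_cue))  # positive value: more time looking right
```

An index of this kind normalizes by each person's total sideways looking time, which is one way to compare participants who differ widely in how much they look at the walls at all.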

Humans and robots sway us in similar ways

An important twist was that the avatar was sometimes a human figure and sometimes a robot. One might expect a metal body and a smooth "sliding" motion to feel less social and thus less influential. Yet the data told a different story: the sideways pull on gaze was about as strong whether the leading figure was human or robot. Participants' eyes were biased by the direction of the robot's head turns to about the same degree as by the human avatar's. While the study did not find a strong effect on whether people looked at the exact same posters the avatar did, it did show a reliable shift in overall viewing toward the avatar's preferred side of the hallway.

What this means for everyday life and future machines

These results suggest that following someone else's line of sight is not just a deliberate, goal-driven act; it is a deep-seated tendency that kicks in even when it offers no clear benefit. Simply walking behind a figure whose head turns to one side is enough to bias where we look, and a robot's head movements can trigger much the same response as a person's. As robots and other autonomous machines increasingly share our public spaces, such as hallways, streets, or train stations, designers may be able to harness this built-in social habit: subtle head turns or "gaze" cues could help guide human attention and behavior in a natural, low-effort way, improving safety and communication without a word being spoken.

Citation: Schmitz, I., Miksch, J. & Einhäuser, W. Task-irrelevant human and robot head movements bias gaze in humans who follow them through virtual reality. Sci Rep 16, 5563 (2026). https://doi.org/10.1038/s41598-026-39130-1

Keywords: gaze following, virtual reality, human-robot interaction, social attention, eye tracking