Clear Sky Science

AI-driven diagnosis of acute aortic syndrome based on multi-modal information fusion

Why this matters for people with chest pain

Acute aortic syndrome is a medical emergency in which the body’s main artery can suddenly tear, leading to death within hours if missed. Yet its symptoms often masquerade as a heart attack, muscle strain, or even indigestion, making it notoriously easy to misdiagnose. This study describes a new artificial intelligence system that combines CT scans and blood tests to help doctors recognize these silent arterial catastrophes earlier and more accurately, and to flag borderline cases that need a second look.

A dangerous tear that hides in plain sight

Acute aortic syndrome (AAS) includes several related problems in the wall of the aorta, such as classic dissection, intramural hematoma, and penetrating ulcers. They all share a common danger: blood forces its way into or through the vessel wall, which can quickly lead to rupture or loss of blood flow to vital organs. The risk is highest in the first day or two after symptoms begin, when mortality may climb toward 70% without rapid treatment. Doctors use CT angiography to view the aorta and blood tests like D-dimer and inflammatory markers to gauge clotting and immune activity. But patients’ complaints are often vague, physical exams can be deceptively normal, and CT images may be subtle or degraded by motion or artifacts, so roughly one in three cases is initially missed in routine practice.

What current AI tools miss

Recent years have brought powerful image-recognition systems that can scan CT or X-ray images for signs of aortic tears. However, most of these tools look only at pictures and ignore blood tests, or they simply bolt separate data streams together without really learning how they interact. That is at odds with how clinicians think: they mentally weave together what they see on the scan with lab values and the patient’s history. Simple “stacking” of image features and lab numbers can even make matters worse, because blood-test data are noisy, incomplete, and mathematically intertwined. Many AI models also operate as black boxes, offering a verdict without exposing the reasoning process, which makes emergency physicians hesitant to rely on them when lives are on the line.

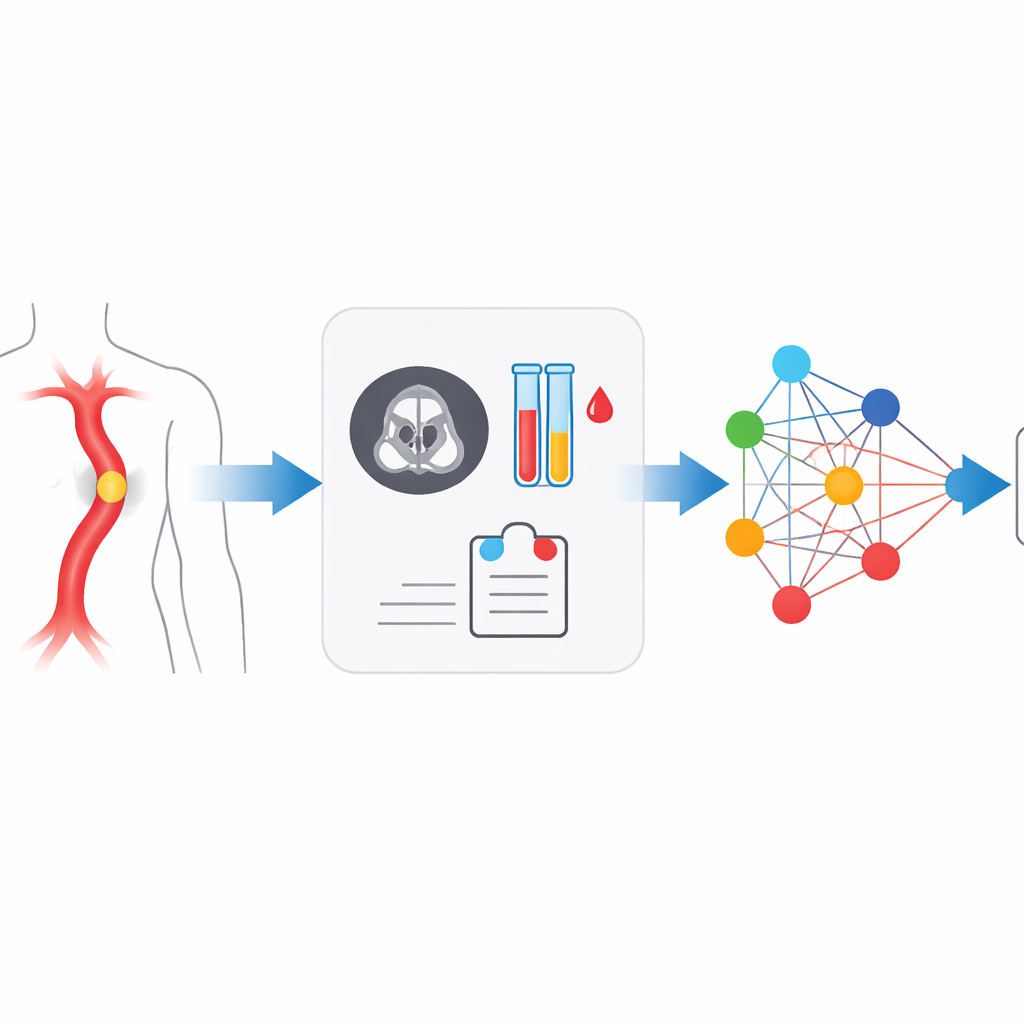

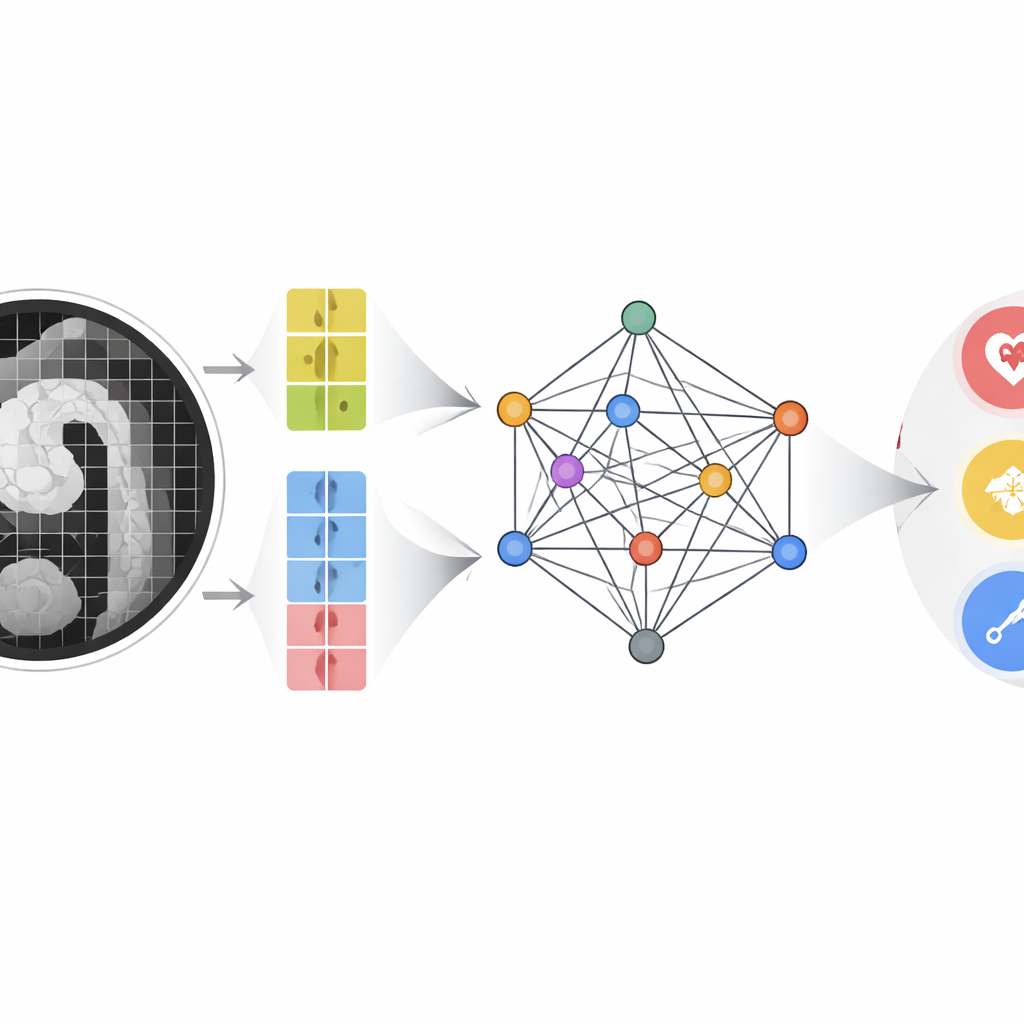

A new way to fuse scans and blood tests

The authors built a multimodal multi-scale fusion (MMMF) model designed to mimic the way experienced radiologists and cardiologists reason. First, a dual-branch image encoder looks at the CT angiography scan at two levels of detail: large patches capture the overall shape and course of the aorta, while smaller patches focus on fine details such as tiny intimal tears or small pockets of blood in the wall. At the same time, key blood indicators, including D-dimer and a panel of inflammatory markers derived from white cell counts and platelets, are converted into numerical features. These image features and lab features become nodes in a graph-like structure, where a transformer-based graph neural network passes “messages” between them, learning how certain blood patterns reinforce or contradict subtle imaging findings.
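
For readers who think in code, the idea can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not the authors' implementation: a 4x4 grid stands in for a CT slice, each patch is summarised by its mean rather than by a learned encoder, and one round of attention-style message passing runs over a fully connected graph. The function names (`patch_means`, `message_pass`) and the lab values are hypothetical.

```python
import math

def patch_means(image, size):
    """Split a square 2D grid into non-overlapping size x size patches
    and return the mean intensity of each patch (row-major order)."""
    n = len(image)
    means = []
    for r in range(0, n, size):
        for c in range(0, n, size):
            vals = [image[i][j]
                    for i in range(r, r + size)
                    for j in range(c, c + size)]
            means.append(sum(vals) / len(vals))
    return means

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def message_pass(nodes):
    """One round of attention-style message passing on a fully connected
    graph of scalar nodes: each node becomes a softmax-weighted average
    of all nodes, weighted by product similarity (an assumption made
    for this sketch, standing in for learned attention)."""
    updated = []
    for q in nodes:
        weights = softmax([q * k for k in nodes])
        updated.append(sum(w * v for w, v in zip(weights, nodes)))
    return updated

# Toy 4x4 "scan" (made-up intensities, not real CT data).
image = [[0.0, 0.0, 1.0, 1.0],
         [0.0, 0.0, 1.0, 1.0],
         [0.0, 0.0, 0.0, 0.0],
         [0.0, 0.0, 0.0, 4.0]]

coarse = patch_means(image, 4)   # one large patch: overall shape
fine = patch_means(image, 2)     # four small patches: local detail
labs = [0.8, 0.3]                # hypothetical normalised lab values

nodes = coarse + fine + labs     # image and lab features share one graph
fused = message_pass(nodes)      # each node now reflects the others
```

In this sketch every node, whether it came from the scan or from the blood panel, is updated using information from all the others, which is the sense in which lab patterns can reinforce or contradict an imaging finding.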

How well the system performs

The team trained and tested the MMMF model on CT scans and concurrent blood tests from 493 patients examined between 2019 and 2024, covering confirmed AAS of various types and non-AAS controls. They compared their approach with well-known image-only models, lab-data-only models, and several state-of-the-art multimodal systems originally designed for pairing images with text. Across accuracy, precision, recall, and F1 score, the MMMF model came out on top. Its overall area under the receiver operating characteristic curve exceeded 0.9, indicating strong ability to distinguish between normal aortas, classic dissections involving the ascending or descending aorta, and atypical forms. Image data remained the strongest single source of information, but carefully structured fusion with lab data provided a measurable boost, especially for difficult or borderline cases. Ablation experiments showed that two elements were crucial: the dual-scale imaging pathway and the transformer-based graph that models long-range relationships between features.
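
The scores named above have simple definitions, shown here as a short Python sketch. The labels and predictions are invented toy data, not results from the study, and the class names (`normal`, `dissection`, `atypical`) and function names are assumptions chosen for illustration. The AUC is computed for a single two-class comparison as the fraction of positive and negative pairs that the scores rank correctly.

```python
def accuracy(y_true, y_pred):
    """Fraction of cases where the predicted label matches the truth."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)

def precision_recall_f1(y_true, y_pred, label):
    """Precision, recall and F1 for one class, treated one-vs-rest."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1

def pairwise_auc(pos_scores, neg_scores):
    """Area under the ROC curve as the share of (positive, negative)
    pairs where the positive case scores higher; ties count as half."""
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))

# Toy labels only; these are NOT the study's data.
y_true = ["normal", "normal", "dissection", "dissection", "atypical", "atypical"]
y_pred = ["normal", "normal", "dissection", "atypical", "atypical", "atypical"]

overall_accuracy = accuracy(y_true, y_pred)
dissection_scores = precision_recall_f1(y_true, y_pred, "dissection")

# Hypothetical model scores for diseased vs. healthy cases.
auc = pairwise_auc([0.9, 0.6], [0.7, 0.2, 0.1])
```

Recall is the number that matters most for a condition like this one, since a missed dissection is far more costly than a false alarm.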

Toward a partnership between doctors and machines

Beyond headline accuracy numbers, a key contribution of this work is its emphasis on collaboration rather than replacement. The system is particularly adept at rapidly clearing obvious normal scans and clearly diseased atypical cases, acting as a kind of intelligent front-line screener. Equally important, it can recognize when its own confidence is low—often in cases that human experts also find tricky, such as early or milder forms of dissection—so it can flag those patients for urgent re-review, extra imaging, or senior consultation. In essence, the study shows that when image details and blood-test clues are woven together in a structured, clinically inspired way, AI can both sharpen early diagnosis of acute aortic syndrome and provide a safety net against missed emergencies, all while keeping physicians firmly in charge of final decisions.
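
One simple way to picture this kind of flagging is a confidence threshold, sketched below in Python. The article does not say how the system measures its own confidence, so the rule here (flag any case whose top class probability falls below 0.8) is purely an assumption, as are the function name `triage`, the class names, and the probabilities.

```python
def triage(probs, threshold=0.8):
    """Pick the most likely class and decide how the case is routed.

    probs: dict mapping class name to predicted probability.
    threshold: hypothetical cut-off below which a case is flagged.
    """
    label = max(probs, key=probs.get)
    confidence = probs[label]
    if confidence >= threshold:
        action = "routine read"
    else:
        action = "urgent re-review"
    return label, confidence, action

# Made-up model outputs for two patients.
clear_case = {"normal": 0.95, "dissection": 0.03, "atypical": 0.02}
borderline_case = {"normal": 0.40, "dissection": 0.45, "atypical": 0.15}

clear_result = triage(clear_case)
borderline_result = triage(borderline_case)
```

In both routes a physician still reads the scan; the threshold only changes how quickly and by whom.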

Citation: Yang, Z., Xu, S., Wang, B. et al. AI-driven diagnosis of acute aortic syndrome based on multi-modal information fusion. Sci Rep 16, 8332 (2026). https://doi.org/10.1038/s41598-026-39111-4

Keywords: acute aortic syndrome, aortic dissection, medical AI, multimodal diagnosis, graph neural network