Clear Sky Science

A randomized controlled trial of artificial intelligence-based analytics for clinical deterioration

Why Keeping Hospital Patients Safe Is So Hard

When people are admitted to the hospital, doctors and nurses work hard to spot early warning signs that someone is about to get much sicker. But human eyes can miss subtle changes in heart rate, breathing, or blood pressure, especially on busy wards. This study asked a pressing question: can an artificial intelligence (AI) system that quietly watches patients’ vital signs in the background actually help prevent serious emergencies such as cardiac arrest, breathing failure, or rushed transfers to intensive care?

A New Kind of "Weather Radar" for Patients

The research team tested a system called CoMET, which turns streams of bedside monitor data, lab results, and nurse-charted vital signs into an easy-to-read visual picture of risk. Each patient appears on a large screen as a bright comet icon whose “head” shows current risk and whose “tail” shows how that risk has changed over the last three hours. A score of 1 means the average chance of a serious event in the next day; higher scores mean higher risk. Unlike loud alarms, this system simply displays information all the time. The idea was that a quiet, always-on view of risk would help staff notice worrisome trends early and check on patients before they crashed.

Putting AI to the Test in Real Hospital Wards

To see if this display truly made a difference, the team ran a large randomized controlled trial on an 85-bed cardiology and cardiac surgery ward in a university hospital. More than ten thousand hospital stays were included over almost two years, during the COVID-19 era. Instead of randomizing individual patients, the researchers randomized clusters of rooms. Some room groups had the CoMET display turned on; others followed usual care without the display. Everyone received standard medical care; the only difference was whether staff could see the risk trajectories on big monitors and in the electronic record. No specific actions were forced—clinicians were encouraged, but not required, to respond when scores rose.
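To make the idea of randomizing room clusters rather than patients concrete, here is a minimal sketch. The cluster names, room names, even split between arms, and fixed random seed are all assumptions for illustration; the trial's actual randomization procedure is not described here and may have differed.

```python
import random


def randomize_clusters(cluster_ids, seed=0):
    """Randomly assign each room cluster to one of two arms.

    Illustrative only: the even split and the seed are assumptions,
    not details taken from the trial.
    """
    rng = random.Random(seed)      # seeded so the allocation is reproducible
    shuffled = list(cluster_ids)   # copy so the caller's list is untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        cid: ("display_on" if i < half else "display_off")
        for i, cid in enumerate(shuffled)
    }


def arm_for_room(room, room_to_cluster, assignment):
    """A patient's arm is determined by the room's cluster, not by the patient."""
    return assignment[room_to_cluster[room]]


# Hypothetical ward layout: four clusters of two rooms each.
room_to_cluster = {
    "room_1": "cluster_A", "room_2": "cluster_A",
    "room_3": "cluster_B", "room_4": "cluster_B",
    "room_5": "cluster_C", "room_6": "cluster_C",
    "room_7": "cluster_D", "room_8": "cluster_D",
}
assignment = randomize_clusters(sorted(set(room_to_cluster.values())), seed=42)
```

Because the unit of randomization is the cluster, every room in a cluster shares the same arm, which is why moving a patient to a different bed can also move them to a different arm.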

What Happened to Patients’ Outcomes

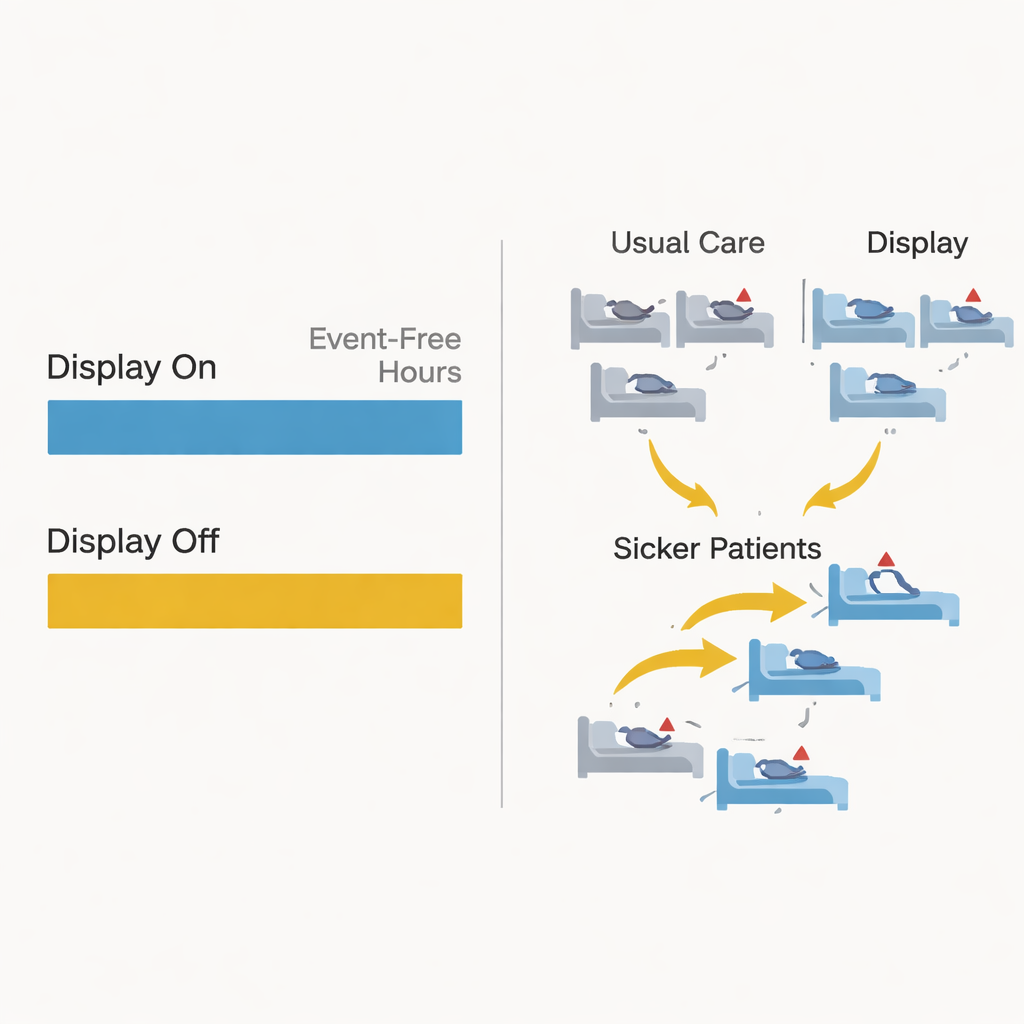

The main yardstick was how many hours in the first 21 days of a hospital stay patients remained free of serious deterioration: events like death, emergency transfer to the intensive care unit, emergency breathing tube placement, cardiac arrest, or rushed surgery. Most patients never had any such event, so they received the maximum score of 504 event-free hours (the full 21 days). Overall, about 5% of patients experienced a serious event. The AI system’s underlying prediction models worked well and even outperformed a common early warning score, yet when the researchers compared the display-on group to the display-off group, they found no meaningful difference in event-free hours or deaths. Among the smaller group of patients who did have an event, those in the display-on arm tended to have more stable hours beforehand, but this pattern was not strong enough to be statistically convincing.
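As a rough illustration of this yardstick, the sketch below counts event-free hours within a 21-day window. It is one simple reading of the outcome described above, not the trial's exact definition; the function name and its input (hours from admission to the first serious event) are assumptions.

```python
HOURS_PER_DAY = 24
WINDOW_DAYS = 21
MAX_EVENT_FREE_HOURS = WINDOW_DAYS * HOURS_PER_DAY  # 21 days = 504 hours


def event_free_hours(hours_to_first_event=None):
    """Return event-free hours within the 21-day window.

    hours_to_first_event: hours from admission to the first serious event,
    or None if the patient never had one (hypothetical input format).
    """
    if hours_to_first_event is None:
        # No event at all: the patient receives the maximum score.
        return MAX_EVENT_FREE_HOURS
    if hours_to_first_event < 0:
        raise ValueError("hours_to_first_event must be non-negative")
    # Events after the window closes do not reduce the score.
    return min(hours_to_first_event, MAX_EVENT_FREE_HOURS)
```

Under this reading, a patient with no event scores 504, and a patient whose first event comes 36 hours after admission scores 36. With most patients at the maximum, differences between the two arms can only come from the small share of patients who had an event.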

How Human Decisions Blurred the Experiment

One of the most striking findings had less to do with the math and more to do with human behavior. During the trial, clinicians frequently moved patients between beds: hundreds went from usual-care beds into display-on beds and vice versa. A closer look showed that sicker patients were more likely to be transferred into rooms with the AI display. In other words, staff seemed to view CoMET as helpful and tried to give higher-risk patients the benefit of extra monitoring, even though the trial design had aimed to keep assignments random. These bed moves had to be treated as censoring events in the analysis, meaning a patient's follow-up stopped counting at the moment of the move, and they likely diluted any real effect the system might have had. The study also took place amid the strains of the COVID-19 pandemic, which lowered event rates and added further complexity.
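The sketch below shows, under simplifying assumptions, what treating a bed move as a censoring event means for one patient's record. The function name, the input format, and the tie-breaking rule are hypothetical and are not taken from the trial's analysis plan.

```python
def observe(hours_to_event=None, hours_to_crossover=None, window_hours=504):
    """Return (observed_hours, reason) for one hospital stay.

    Follow-up ends at whichever comes first: the end of the window,
    a serious event, or a move into the other trial arm (censoring).
    Ties resolve in the order listed below (an arbitrary choice here).
    """
    candidates = [(window_hours, "window_end")]
    if hours_to_event is not None:
        candidates.append((hours_to_event, "event"))
    if hours_to_crossover is not None:
        candidates.append((hours_to_crossover, "censored"))
    # min() returns the first of any tied candidates, so list order breaks ties.
    return min(candidates, key=lambda c: c[0])
```

For example, a patient who moves to the other arm at hour 40 and has an event at hour 100 contributes only 40 hours, with no event counted. If sicker patients are the ones most often moved, many of the events that might have distinguished the two arms fall outside the observed follow-up.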

What This Means for the Future of AI in Hospitals

For patients and families, the bottom line is both cautious and hopeful. This well-designed real-world trial showed that simply adding a passive AI risk display, without alarms or strict response rules, did not clearly improve outcomes like death or emergency transfers on these wards. Yet the way clinicians steered sicker patients toward AI-equipped beds suggests they saw value in the information. The authors conclude that future studies of hospital AI tools must go beyond accuracy and trial size: they should track how clinicians interpret risk scores, how teams communicate and act on them, and how bed assignments, workloads, and rare events shape results. AI may still help catch trouble early, but to truly make patients safer, designers and researchers will need to blend smart algorithms with equally thoughtful attention to human judgment, workflow, and hospital culture.

Citation: Keim-Malpass, J., Ratcliffe, S.J., Clark, M.T. et al. A randomized controlled trial of artificial intelligence-based analytics for clinical deterioration. Sci Rep 16, 7345 (2026). https://doi.org/10.1038/s41598-026-39051-z

Keywords: clinical deterioration, predictive monitoring, hospital AI, early warning systems, cardiology ward