Clear Sky Science

Research on intelligent recognition method of mechanical parts with high feature similarity in industrial field environment

Why spotting look‑alike parts matters

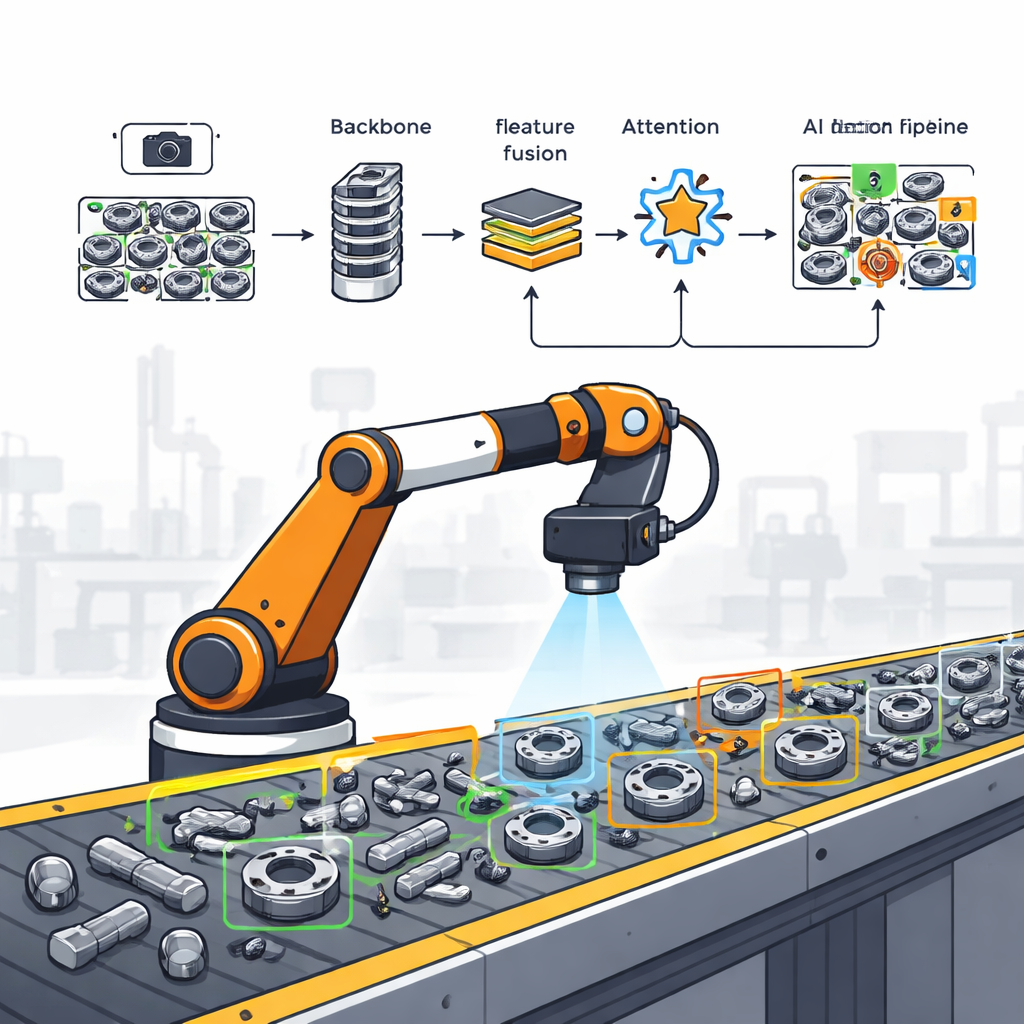

Modern factories depend on robots to find, pick up, and assemble thousands of tiny metal parts. Many of these pieces—gears, bearings, sprockets, nuts, and screws—look confusingly alike, especially under harsh lighting or when they overlap on a conveyor belt. If a robot mistakes one for another, the result can be jams, defects, or even damaged machines. This study tackles a deceptively simple question with big industrial consequences: how can a compact, fast vision system reliably tell nearly identical parts apart in real workshop conditions?

Challenges in real factory vision

On a factory floor, cameras rarely enjoy the clean views used in demo videos. Lighting is uneven, causing strong reflections on shiny metal and deep shadows elsewhere. Parts are poured into bins or scattered on belts, often partially hiding one another. To make matters worse, many metal components share similar shapes, colors, and textures, leaving very few obvious visual clues. Traditional software that matches templates or hand‑crafted features struggles under these conditions: it is slow, brittle under changing light, and often fails when parts overlap or are rotated in unexpected ways.

Building on fast one‑shot detectors

In recent years, a family of artificial‑intelligence models called YOLO (for “You Only Look Once”) has become popular for detecting objects in images in a single, rapid pass. YOLOv8, one of the latest versions, already balances accuracy and speed well and can run in real time. However, when different parts look almost the same, even YOLOv8 can miss subtle cues or draw imprecise boxes. Earlier attempts to shrink YOLO models for small devices tended to cut parameters but also weakened their ability to represent fine details, which is precisely what is needed to distinguish look‑alike mechanical parts.

A leaner yet sharper detection network

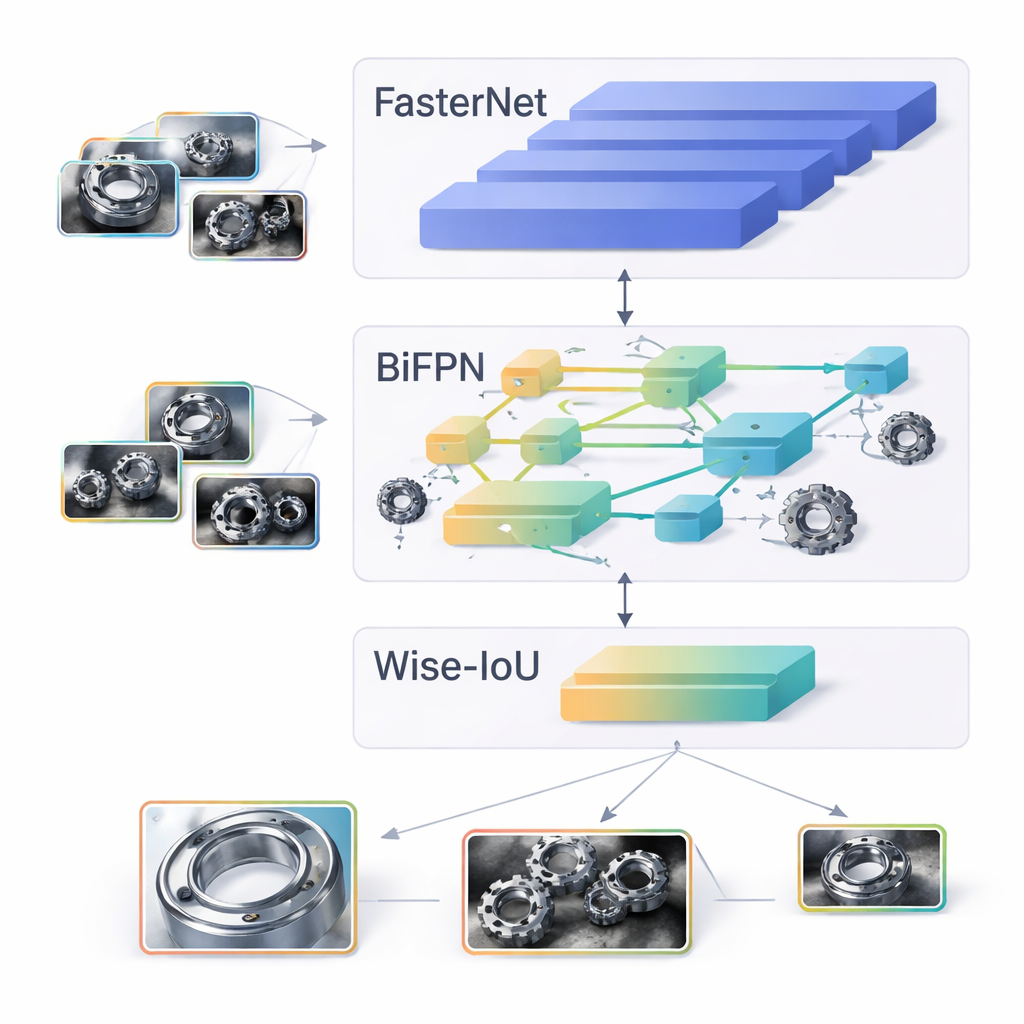

The authors propose an upgraded version of YOLOv8n, the smallest YOLOv8 model, tailored specifically for confusing industrial parts and for hardware with limited computing power. First, they swap the network's standard core (its “backbone”) for a newer design named FasterNet, which uses a “partial” convolution that filters only a subset of the feature channels at each step and passes the rest through unchanged. This cuts redundant computation and memory access without losing key visual information. Second, they redesign the middle “neck” of the network to use a bidirectional feature pyramid network (BiFPN), which lets information flow both from coarse, global views down to fine details and from fine details back up, so that small, occluded parts benefit from context and vice versa.
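To make the savings concrete, here is a minimal Python sketch of the two ideas in this section. It assumes the partial convolution filters only a quarter of the feature channels and that the neck blends feature maps with the normalized, learnable weights commonly used with BiFPN. The ratio, the layer size, the weights, and all function names are illustrative assumptions, not values taken from the study.

```python
def conv_flops(height, width, kernel, c_in, c_out):
    """Multiply-accumulate count of one standard convolution layer."""
    return height * width * kernel * kernel * c_in * c_out


def partial_conv_flops(height, width, kernel, channels, ratio=0.25):
    """Cost when only `ratio` of the channels are convolved.

    The remaining channels are passed through untouched, so they add
    no arithmetic. `ratio=0.25` is an assumed, illustrative setting.
    """
    active = int(channels * ratio)
    return conv_flops(height, width, kernel, active, active)


def fuse(features, weights, eps=1e-4):
    """BiFPN-style weighted fusion of equally sized feature vectors.

    Negative weights are clipped to zero, then all weights are divided
    by their sum so each input contributes a share between 0 and 1.
    """
    clipped = [max(0.0, w) for w in weights]
    total = sum(clipped) + eps
    length = len(features[0])
    return [
        sum(w * f[i] for w, f in zip(clipped, features)) / total
        for i in range(length)
    ]


# A hypothetical 56x56 layer with 64 channels and a 3x3 kernel.
full = conv_flops(56, 56, 3, 64, 64)
partial = partial_conv_flops(56, 56, 3, 64)
print(partial / full)  # 0.0625

coarse = [1.0, 1.0, 1.0]  # context from a low-resolution level
fine = [0.0, 2.0, 4.0]    # detail from a high-resolution level
print(fuse([coarse, fine], weights=[1.0, 3.0]))
```

The sixteen-fold reduction applies only to that single layer; a real backbone surrounds it with other layers that add their own cost, so the saving for a whole network is smaller.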

Teaching the network to ignore bad hints

Beyond the structure of the network, the way it learns to adjust its internal settings, guided by a loss function, strongly affects what it pays attention to. Standard training methods treat all examples more or less equally, which means low‑quality training boxes (poorly aligned or ambiguous) can mislead the model. The authors replace the usual box‑regression loss with a method called Wise‑IoU. In simple terms, this approach scores each training example not just by how well the predicted and labeled boxes overlap, but also by how much of an “outlier” it is, and then turns down the influence of the unreliable examples. Over time, the system learns mostly from clear, well‑labeled parts, leading to tighter and more trustworthy detection boxes, especially when parts overlap or appear under difficult lighting.
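As a rough illustration of this down-weighting, the sketch below follows the general form of the third variant of Wise‑IoU as published by its original authors: an overlap loss, a penalty based on the distance between box centers, and a gain that depends on how far an example's loss sits from the running average. This summary does not state which variant or settings the study used, so `alpha=1.9`, `delta=3.0`, the `mean_iou_loss` argument, and all function names are assumptions.

```python
import math


def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def focusing_gain(beta, alpha=1.9, delta=3.0):
    """Non-monotonic gain: rises for small beta, decays for large beta."""
    return beta / (delta * alpha ** (beta - delta))


def wise_iou_loss(pred, target, mean_iou_loss, alpha=1.9, delta=3.0):
    """Box loss scaled by a center-distance penalty and an outlier gain.

    `mean_iou_loss` stands in for a running average of the plain IoU
    loss over recent training examples and must be positive.
    """
    iou_loss = 1.0 - iou(pred, target)
    # Squared distance between the two box centers.
    dx = (pred[0] + pred[2]) / 2 - (target[0] + target[2]) / 2
    dy = (pred[1] + pred[3]) / 2 - (target[1] + target[3]) / 2
    # Size of the smallest box enclosing both boxes.
    enc_w = max(pred[2], target[2]) - min(pred[0], target[0])
    enc_h = max(pred[3], target[3]) - min(pred[1], target[1])
    penalty = math.exp((dx * dx + dy * dy) / (enc_w ** 2 + enc_h ** 2 + 1e-9))
    # Outlier degree: this example's loss relative to the average loss.
    beta = iou_loss / mean_iou_loss
    return focusing_gain(beta, alpha, delta) * penalty * iou_loss


target = (0.0, 0.0, 10.0, 10.0)
candidates = {"close": (1.0, 1.0, 11.0, 11.0), "far": (8.0, 8.0, 18.0, 18.0)}
for name, box in candidates.items():
    beta = (1.0 - iou(box, target)) / 0.3
    print(name, "gain:", round(focusing_gain(beta), 3),
          "loss:", round(wise_iou_loss(box, target, mean_iou_loss=0.3), 3))
```

With these assumed settings the gain equals 1 when an example's loss is `delta` times the average and shrinks toward zero for extreme outliers, which is the behavior the paragraph above describes. In an actual training loop the outlier degree and the enclosing-box size would normally be treated as constants rather than quantities to optimize.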

Putting the system to the test

To evaluate their design, the team built their own image collection of six common mechanical parts, each captured 1,250 times under different light levels and with varying degrees of mutual occlusion. They compared their improved model with the standard YOLOv8n and several other lightweight detectors. The new system achieved higher overall detection quality while using less than two‑thirds of the computation and about 42% fewer parameters. In particular, it raised mean average precision (mAP), a standard detection score measured here at a commonly used overlap threshold, by 1.5 percentage points, while still running efficiently enough for real‑time use on modest hardware.
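For readers unfamiliar with the metric, the following sketch shows one common way to compute average precision for a single part class; averaging that value over all classes gives mAP. The study's exact evaluation protocol is not described here, so the all-point interpolation, the function name, and the toy detections are assumptions for illustration only.

```python
def average_precision(scored_hits, num_ground_truth):
    """Area under the precision-recall curve for one class.

    `scored_hits` is a list of (confidence, is_true_positive) pairs,
    where a detection counts as a true positive if it overlaps an
    unmatched labeled box by at least the chosen threshold.
    """
    if num_ground_truth == 0:
        return 0.0
    ranked = sorted(scored_hits, key=lambda d: d[0], reverse=True)
    true_pos = false_pos = 0
    precisions, recalls = [], []
    for _, is_hit in ranked:
        if is_hit:
            true_pos += 1
        else:
            false_pos += 1
        precisions.append(true_pos / (true_pos + false_pos))
        recalls.append(true_pos / num_ground_truth)
    # Smooth the curve: precision at a recall level is the best
    # precision achieved at that recall or any higher one.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum the rectangles under the smoothed curve.
    area, previous_recall = 0.0, 0.0
    for precision, recall in zip(precisions, recalls):
        area += (recall - previous_recall) * precision
        previous_recall = recall
    return area


# Three detections of a hypothetical "gear" class, two labeled gears.
detections = [(0.9, True), (0.8, False), (0.7, True)]
print(round(average_precision(detections, num_ground_truth=2), 3))  # 0.833
```

A gain of 1.5 percentage points therefore means that this area, averaged over the six part classes, grew by 0.015 on a 0-to-1 scale.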

What this means for smart factories

In everyday terms, the study shows that factory robots can become both smarter and leaner. With the redesigned network core, smarter feature fusion, and a more selective learning rule, a small AI model can more reliably distinguish between look‑alike gears, bearings, and other parts in messy, real‑world scenes, even when the lighting is poor and parts overlap. This combination of higher accuracy and lower computational load makes it easier to deploy robust vision on low‑cost edge devices, paving the way for more flexible, fully automated production lines without needing massive servers or perfectly controlled environments.

Citation: Lu, C., Ye, X., Wu, J. et al. Research on intelligent recognition method of mechanical parts with high feature similarity in industrial field environment. Sci Rep 16, 7640 (2026). https://doi.org/10.1038/s41598-026-39036-y

Keywords: industrial object detection, mechanical parts, lightweight deep learning, YOLOv8, factory automation