Clear Sky Science

A humanoid control strategy based on deep reinforcement learning for enhanced comfort in lower limb rehabilitation robots

Robots That Help People Walk Again

When someone has trouble walking after a stroke or spinal injury, therapy can be slow, tiring, and uncomfortable. Lower limb rehabilitation robots are designed to support and guide a patient’s legs during practice, but today’s machines often feel stiff and “robotic.” This study explores how giving these robots a more human-like brain—using advanced learning algorithms—can make training gentler, more natural, and ultimately more effective for patients.

Why Walking Practice Needs to Feel Natural

As populations age, more people live with serious walking problems, and many turn to robot-assisted rehabilitation. Traditional robots follow pre-programmed leg paths and use simple control rules to move the joints. While reliable, these methods struggle with the messy reality of human movement: everyone’s gait is a bit different, and a rigid robot can pull or push in ways that feel awkward or even painful. The authors argue that for rehabilitation to work well, the robot must not only keep the patient upright and moving, but also adapt to natural walking patterns and minimize the forces it exerts on the body.

Learning from Real Human Steps

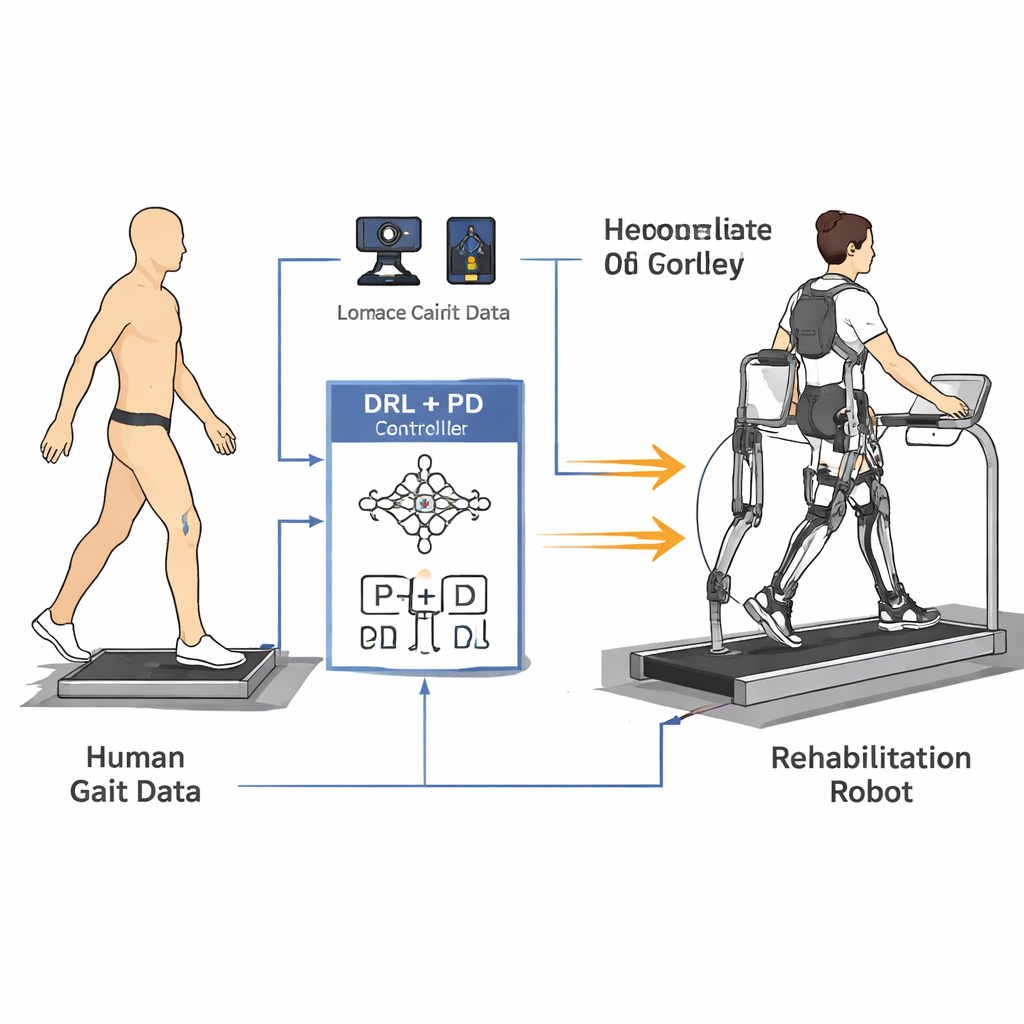

To teach the robot how people really walk, the researchers first built a simplified mathematical model of the legs and torso. They then recorded gait data from five healthy volunteers using a high-precision 3D motion capture system and force plates in the floor. Reflective markers on the hips, knees, ankles, and trunk allowed them to calculate how each joint moved through a full step, while sensors under the feet measured how hard each leg pressed against the ground. From these measurements, they created smooth reference curves for hip and knee angles and tracked how joint forces changed over time, capturing both the shape and rhythm of normal walking.
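For readers who like to see the idea in code, here is a minimal sketch of one way to turn sampled joint angles into a smooth, repeating reference curve. The article does not say which smoothing method the authors used. The truncated Fourier series below is an assumption chosen for illustration, and the function names `fit_fourier` and `evaluate` are hypothetical. The input is assumed to be one gait cycle of evenly spaced angle samples in radians.

```python
import math

def fit_fourier(samples, n_harmonics):
    """Fit a truncated Fourier series to one evenly sampled gait cycle.

    Assumption: this is an illustrative smoothing method, not the
    procedure described in the paper.
    """
    n = len(samples)
    if n < 2 * n_harmonics + 1:
        raise ValueError("need at least 2 * n_harmonics + 1 samples")
    mean = sum(samples) / n
    coeffs = []
    for k in range(1, n_harmonics + 1):
        # Project the samples onto the k-th cosine and sine waves.
        a = 2.0 / n * sum(s * math.cos(2 * math.pi * k * i / n)
                          for i, s in enumerate(samples))
        b = 2.0 / n * sum(s * math.sin(2 * math.pi * k * i / n)
                          for i, s in enumerate(samples))
        coeffs.append((a, b))
    return mean, coeffs

def evaluate(mean, coeffs, phase):
    """Return the reference angle at a gait phase between 0 and 1."""
    return mean + sum(
        a * math.cos(2 * math.pi * k * phase) + b * math.sin(2 * math.pi * k * phase)
        for k, (a, b) in enumerate(coeffs, start=1)
    )
```

Because the fitted curve is periodic, the value at phase 1 equals the value at phase 0, so the reference repeats cleanly from one step to the next.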

A Smarter Controller That Still Plays It Safe

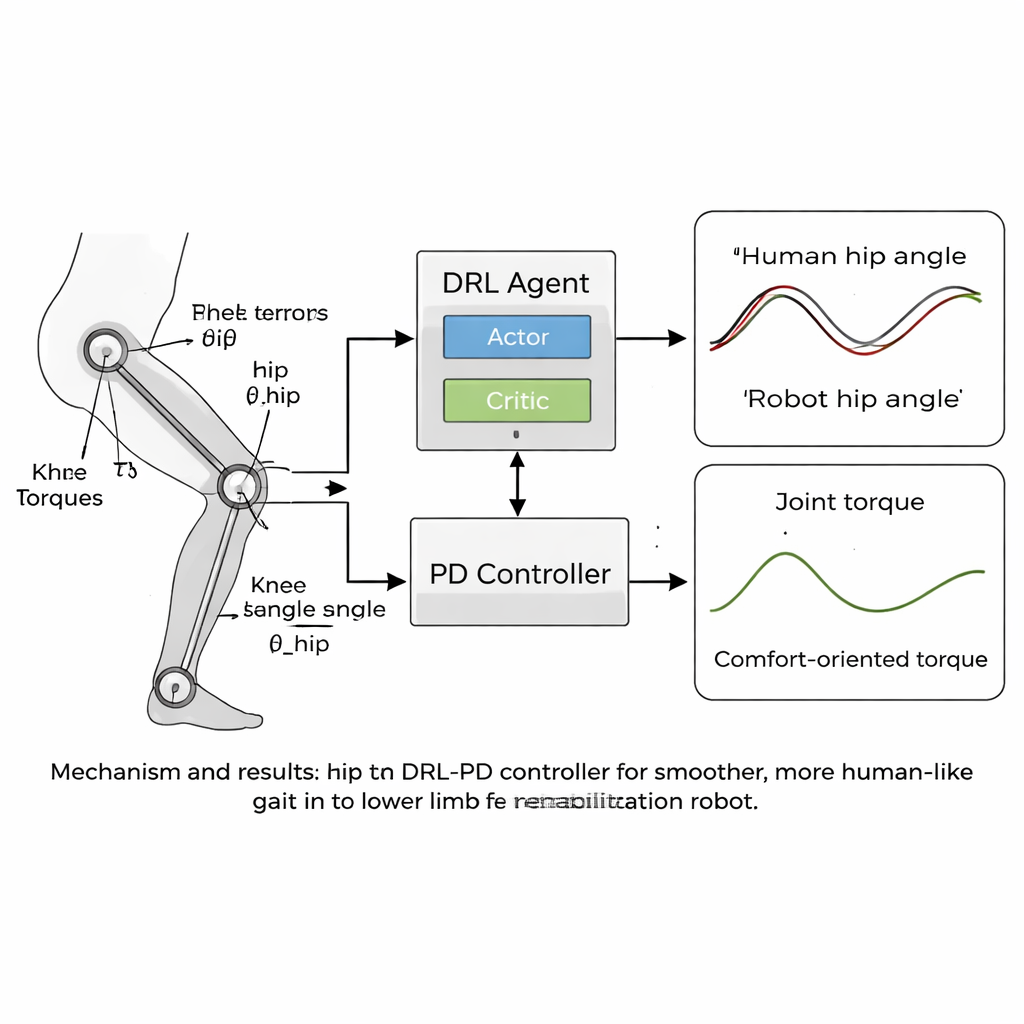

The heart of the paper is a new “humanoid” control strategy that combines deep reinforcement learning (DRL) with a classic proportional-derivative (PD) controller. DRL is a type of artificial intelligence in which a virtual agent tries actions, observes the results, and gradually discovers what works best by maximizing a reward signal. In this case, the agent sits on top of the PD controller: it sees the robot’s joint angles and speeds and decides what torques to apply, while the PD layer makes sure the joints do not drift far from safe, human-like target angles. The reward function is carefully crafted to encourage stable forward walking while penalizing anything that would feel bad to a patient—such as jerky motion, large forces at the joints, or unsafe postures like excessive leaning or low foot clearance.
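The layering described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the authors' implementation. It assumes the learned torque is simply added to the PD torque and then clipped to a limit. The reward terms mirror the ones named in the text, but every weight, threshold, and name here (`PDGains`, `combined_torque`, `comfort_reward`, `max_lean`, `min_clearance`) is hypothetical.

```python
from dataclasses import dataclass

@dataclass
class PDGains:
    kp: float  # proportional gain (hypothetical value supplied by the caller)
    kd: float  # derivative gain (hypothetical value supplied by the caller)

def pd_torque(gains, q_ref, q, dq_ref, dq):
    """Classic PD law: pull the joint toward the reference angle and speed."""
    return gains.kp * (q_ref - q) + gains.kd * (dq_ref - dq)

def combined_torque(gains, q_ref, q, dq_ref, dq, agent_torque, limit):
    """Assumed structure: PD torque plus learned torque, clipped to +/- limit."""
    tau = pd_torque(gains, q_ref, q, dq_ref, dq) + agent_torque
    return max(-limit, min(limit, tau))

def comfort_reward(forward_speed, tau, tau_prev, dt, trunk_lean, foot_clearance,
                   w_speed=1.0, w_torque=0.01, w_rate=0.001,
                   w_lean=1.0, w_clear=1.0,
                   max_lean=0.2, min_clearance=0.02):
    """Reward forward walking; penalize large, jerky, or unsafe behavior.

    All weights and thresholds are illustrative assumptions.
    """
    torque_rate = (tau - tau_prev) / dt              # how fast the torque changes
    lean_excess = max(0.0, abs(trunk_lean) - max_lean)
    clearance_deficit = max(0.0, min_clearance - foot_clearance)
    return (w_speed * forward_speed
            - w_torque * tau ** 2                    # large joint torque
            - w_rate * torque_rate ** 2              # jerky torque changes
            - w_lean * lean_excess                   # excessive leaning
            - w_clear * clearance_deficit)           # foot too close to the floor
```

In a sketch like this, two steps with the same forward speed earn different rewards if one of them swings the torque sharply, which is how a learning agent could be nudged toward gentler motion.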

Smoother Motion, Closer to a Human Gait

The team tested their approach in computer simulations using a lower limb rehabilitation robot model with hip and knee joints that matched their gait data. Over thousands of training episodes, the DRL-PD controller learned to produce a repeating walking cycle in which joint angles closely followed the human reference patterns. The robot’s hips and knees moved in regular, stable loops, a sign of reliable, repeatable gait. Crucially, the torques needed to drive the joints became smoother and smaller than with a standard PD controller. Quantitative measures showed that tracking errors dropped to just a few hundredths of a radian (for scale, 0.01 radian is about 0.57 degrees), and the rate at which joint torques changed, a proxy for how “jerky” the forces would feel to a patient, was reduced by more than half. The controller also remained stable even when the model’s leg masses were varied by several percent, suggesting it could tolerate real-world differences between users.
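The two kinds of measurement mentioned here, tracking error and torque rate of change, could be computed along the following lines. The article does not give the exact formulas used in the paper, so the root-mean-square error and mean absolute torque rate below are assumed definitions, and the function names are hypothetical.

```python
import math

def rms_tracking_error(actual, reference):
    """Root-mean-square gap between actual and reference joint angles (radians)."""
    if not actual or len(actual) != len(reference):
        raise ValueError("need two non-empty sequences of equal length")
    squared = sum((a - r) ** 2 for a, r in zip(actual, reference))
    return math.sqrt(squared / len(actual))

def mean_abs_torque_rate(torques, dt):
    """Average absolute change in torque per second between successive samples."""
    if len(torques) < 2:
        raise ValueError("need at least two torque samples")
    total_change = sum(abs(b - a) for a, b in zip(torques, torques[1:]))
    return total_change / ((len(torques) - 1) * dt)
```

Comparing `mean_abs_torque_rate` for two controllers over the same walking cycle gives a single number for how much smoother one is than the other.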

What This Means for Future Rehab Robots

For non-specialists, the take-home message is straightforward: by letting a robot learn the rhythms and limits of human walking from real data, and by rewarding it for being smooth and gentle, we can design machines that help people practice walking in a way that feels more natural and less stressful. Patients may be more willing to engage in longer, more frequent sessions if the robot moves with them rather than against them. Although the current results come from simulations and require powerful computers for training, once the learning is complete the controller can run efficiently on real devices. The authors see this work as a step toward personalized, adaptive rehabilitation robots that adjust to each patient’s unique gait and comfort needs, potentially improving both recovery and quality of life.

Citation: Jin, Y., Zhang, J., Li, W. et al. A humanoid control strategy based on deep reinforcement learning for enhanced comfort in lower limb rehabilitation robots. Sci Rep 16, 7370 (2026). https://doi.org/10.1038/s41598-026-39011-7

Keywords: rehabilitation robots, gait training, deep reinforcement learning, exoskeleton, patient comfort