Clear Sky Science · en

Enhanced diabetes prediction using pre-trained CNNs, LSTM, and conditional GAN on transformed numerical data

Why smarter diabetes checks matter

Type 2 diabetes is often called a silent disease because it can quietly damage the heart, kidneys, eyes, and nerves long before symptoms become obvious. Doctors already collect simple measurements—such as blood sugar, blood pressure, weight, and age—to judge a person’s risk. But turning these few numbers into an accurate early warning system is surprisingly hard, especially when the available data are limited. This study explores an inventive way to squeeze more information out of small, routine datasets so that computers can spot who is most likely to develop diabetes, potentially allowing earlier care and fewer complications.

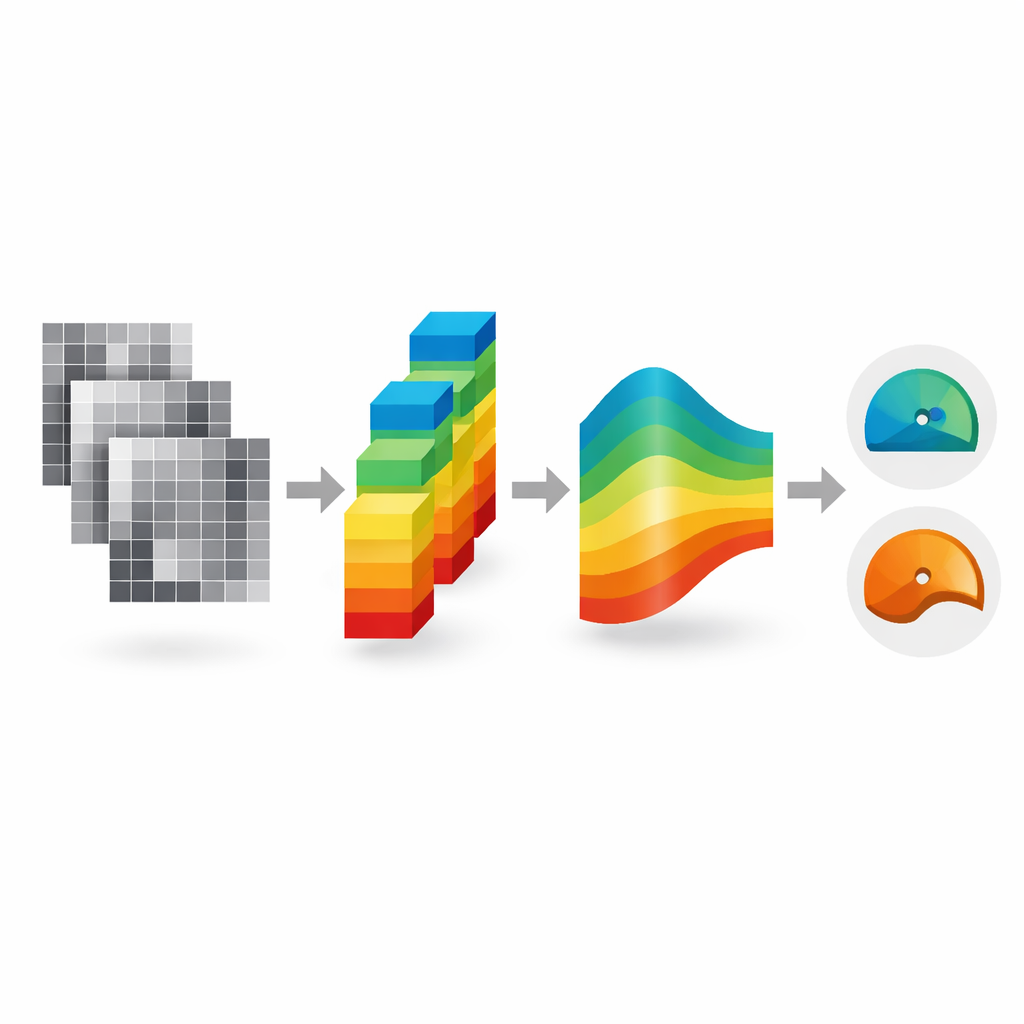

Turning numbers into pictures

Most medical records are stored as rows of numbers in a table. Modern image-based deep-learning systems, however, work best on pictures. The researchers bridge this gap by converting each person’s eight routine measurements from a well-known diabetes dataset into a small artificial image. Features that tend to change together—like blood sugar and body mass index—are placed close to one another in the image, and more important features are given larger areas. In effect, each patient’s health profile becomes a simple patchwork picture whose patterns can be read by image-recognition networks. This kind of “tabular-to-image” conversion lets the team reuse powerful tools originally developed for tasks like object recognition and medical imaging.

Teaching machines from too little data

A major obstacle in diabetes prediction is that public datasets are modest in size and often unbalanced, with fewer people in the diabetic group than in the non-diabetic group. Training large neural networks on such small, skewed samples can lead to models that perform well on paper but fail on new patients. To counter this, the authors first rebalance the data so that both outcomes are equally represented. They then use a type of generative model, a conditional GAN, to create many additional synthetic images that resemble real patients from each group. These artificial examples expand the training pool from 1,000 to 9,000 images while preserving the overall statistical structure, giving the learning algorithms far more variety to practice on.

Layered networks that read patterns and context

Once the numeric records have been turned into images and expanded with synthetic examples, the pictures are passed through several advanced image-recognition networks that were originally trained on large general-purpose image collections. These pre-trained models—such as DenseNet, ResNet, Xception, and EfficientNet—act like highly experienced feature detectors, extracting hundreds of subtle visual patterns from each image. Instead of making a decision directly, their outputs are treated as ordered sequences and fed into a second type of network called an LSTM, which is good at finding dependencies in sequences. By stacking these two stages, the system can capture both local patterns (how related measurements cluster together) and broader relationships (how groups of measurements jointly signal risk) before deciding whether a person is likely to have diabetes.

How well does the system work?

Evaluated on the augmented version of the classic Pima Indians Diabetes Dataset, the best-performing configuration—a ResNet-based feature extractor combined with an LSTM and a fusion of features from all four image models—correctly classified about 94% of cases and achieved an area-under-the-curve score of 98%, a common measure of how well a test separates two groups. These numbers are higher than many previously reported results based on traditional machine-learning methods that work directly on the raw table of numbers. To check whether the approach might generalize beyond a single study population, the authors also tested it on an independent dataset from a German hospital. There, the system reached similar accuracy and discrimination, despite differences in age, sex, and background between the two groups of patients.

Promise and caution for real-world use

For non-specialists, the key takeaway is that familiar, low-cost clinical measurements can be made more informative by reimagining them as simple images and letting mature image-analysis tools do the heavy lifting. The study suggests that this strategy, combined with realistic synthetic data and layered neural networks, can sharpen computerized screening for diabetes and possibly other diseases that rely on structured records. At the same time, the authors stress important caveats: part of the strong performance may stem from the synthetic data, and both datasets are limited in size and demographics. Before such a system guides care in clinics, it must be tested on much larger and more diverse patient groups and paired with explanations that clinicians can trust. Still, the work points toward a future in which even small, routine datasets can fuel more reliable early warnings for chronic disease.

Citation: Singh, K.R., Dash, S., Liu, H. et al. Enhanced diabetes prediction using pre-trained CNNs, LSTM, and conditional GAN on transformed numerical data. Sci Rep 16, 8081 (2026). https://doi.org/10.1038/s41598-026-38942-5

Keywords: type 2 diabetes, medical AI, deep learning, risk prediction, synthetic data