Clear Sky Science

DQN-empowered energy optimization for wireless powered communication networks

Powering Tiny Devices Through the Air

From smart streetlights to fire alarms hidden in forests, countless tiny devices now make up the Internet of Things. Keeping all of them supplied with energy is a major headache: batteries run out, and running power cables everywhere is impractical. This paper explores a way to beam energy wirelessly to such devices and use artificial intelligence to share that energy wisely, so that critical sensors stay alive longer and the whole network works more smoothly.

Why Wireless Power Needs Smarter Control

Wireless powered communication networks send out radio waves that devices can convert into electricity while also using them to send data. In most earlier studies, this energy conversion was treated as if it behaved in a simple, straight-line way: more signal always meant proportionally more power. In reality, energy-harvesting circuits begin to “flatten out” when the incoming signal is strong, wasting part of the power. At the same time, real environments are messy: sunlight for solar panels can swing up and down, buildings block signals, and sudden events such as fires can create urgent data needs at particular nodes. Static rules that ignore these twists and turns can leave some sensors starved of energy and cause others to waste it, shortening the overall network lifetime.
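The gap between the straight-line assumption and a circuit that flattens out can be illustrated with a small sketch. The exponential saturation curve, the function names, and all parameter values here are assumptions chosen for illustration; the summary does not state which harvesting model the paper uses.

```python
import math

def linear_harvest(p_in_mw, efficiency=0.5):
    """Idealized model: harvested power is proportional to input power."""
    return efficiency * p_in_mw

def saturating_harvest(p_in_mw, efficiency=0.5, p_max_mw=10.0):
    """Illustrative saturating model (assumed form, not the paper's).

    For weak signals it matches the linear model's slope; for strong
    signals the output levels off below p_max_mw.
    """
    return p_max_mw * (1.0 - math.exp(-efficiency * p_in_mw / p_max_mw))

# Compare the two models as the incoming signal gets stronger.
for p_in in (1, 5, 20, 50):
    lin = linear_harvest(p_in)
    sat = saturating_harvest(p_in)
    print(f"input {p_in:>2} mW  linear {lin:6.2f} mW  saturating {sat:6.2f} mW")
```

Under these assumed numbers the two models agree closely for weak signals and diverge as the input grows, which is why a controller that plans with the linear model would overestimate what strongly illuminated nodes actually receive.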

A Learning Brain for the Power Network

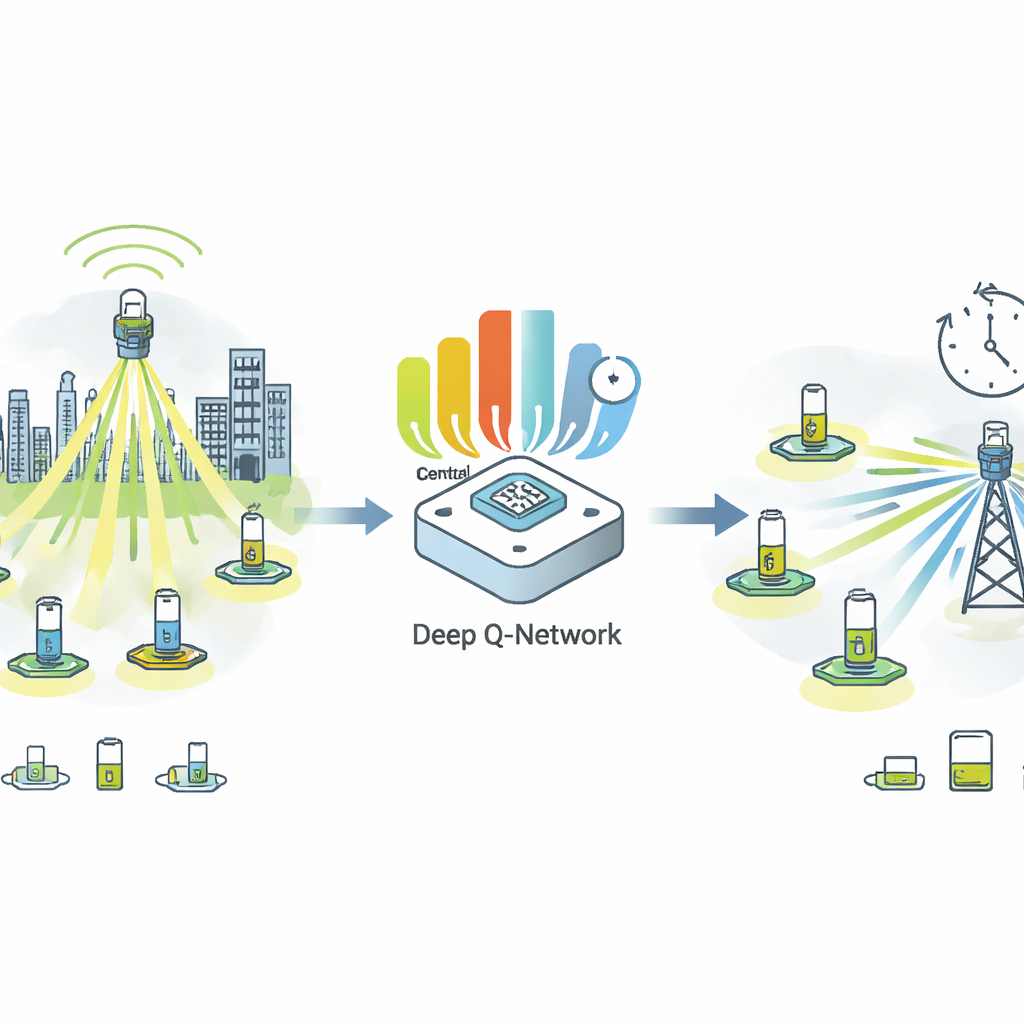

To tackle this, the authors design a learning-based controller built on a technique called Deep Q-Networks, a form of reinforcement learning. Instead of relying on fixed mathematical formulas, this controller treats the network as a game played over time. In each round, it observes the energy left in every node, the quality of the radio links, and how urgent each task is (for example, fire monitoring versus routine temperature checks). Based on these observations, it decides how much power to beam to each node. After each decision, it receives feedback that mixes several goals: sending as much useful data as possible, sharing energy fairly so that no device is consistently neglected, and avoiding wasteful overuse of the shared power source. Over many rounds, the controller learns which patterns of energy sharing lead to the best long-term performance.
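The observe, decide, and learn-from-feedback loop described above can be sketched in a few lines. This is a deliberately simplified stand-in, not the authors' implementation: it replaces the deep neural network with one linear weight vector per action and omits the replay buffer and target network that a full Deep Q-Network normally uses. The state layout, the action meaning ("favor node i"), and the constants are all hypothetical.

```python
import random

GAMMA = 0.9   # discount factor for future rewards (assumed value)
LR = 0.01     # learning rate (assumed value)

def q_values(weights, state):
    """Estimate Q(s, a) for every action with one linear weight vector per action."""
    return [sum(w * x for w, x in zip(w_a, state)) for w_a in weights]

def choose_action(weights, state, epsilon, rng):
    """Epsilon-greedy: explore with probability epsilon, otherwise take the best action."""
    if rng.random() < epsilon:
        return rng.randrange(len(weights))
    q = q_values(weights, state)
    return q.index(max(q))

def td_update(weights, state, action, reward, next_state):
    """One Q-learning step toward the target r + gamma * max_a' Q(s', a')."""
    target = reward + GAMMA * max(q_values(weights, next_state))
    error = target - q_values(weights, state)[action]
    weights[action] = [w + LR * error * x for w, x in zip(weights[action], state)]
    return error

# Hypothetical state for two nodes: [battery1, battery2, link1, link2, urgency1, urgency2]
state = [0.5, 0.2, 0.8, 0.6, 1.0, 0.0]
next_state = [0.6, 0.2, 0.8, 0.6, 1.0, 0.0]
n_actions = 2  # action i means "send the larger power share to node i" (assumed)
weights = [[0.0] * len(state) for _ in range(n_actions)]

rng = random.Random(0)
action = choose_action(weights, state, epsilon=0.1, rng=rng)
td_update(weights, state, action, reward=1.0, next_state=next_state)
```

In a real Deep Q-Network the linear `q_values` function would be a neural network trained on batches of stored experience, but the target it learns toward has the same form as in `td_update`.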

Seeing Ahead and Balancing Competing Goals

One key ingredient in the framework is prediction. The system uses a statistical method called Gaussian Process Regression to forecast how much energy nodes are likely to harvest in the near future, for example as sunlight conditions change. It also uses a flexible model of how radio signals fade and bounce in realistic city-like environments. These pieces feed into a decision process that updates every few seconds, allowing the controller to respond quickly when network conditions shift. The reward signal guiding the learning blends three simple ideas: efficiency (how many bits of information are delivered per unit of energy), fairness (how evenly energy is spread across nodes), and priority (making sure high-urgency tasks get what they need). By tuning the relative importance of these three ingredients, network operators can choose between maximum lifetime, strict fairness, or peak data rates.
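A blended reward of this kind can be sketched as a weighted sum of three scores. The exact formulas are not given in this summary, so everything below is an assumption: Jain's index stands in for fairness, the priority score is the urgency-weighted share of the energy handed out, efficiency is normalized by a hypothetical reference value, and the default weights are arbitrary.

```python
def jain_fairness(values):
    """Jain's fairness index: 1.0 when all values are equal, 1/n when one node gets everything."""
    squares = sum(v * v for v in values)
    if squares == 0:
        return 1.0  # nothing allocated, so nothing is unfair
    total = sum(values)
    return total * total / (len(values) * squares)

def blended_reward(bits, energy_j, allocations, urgency,
                   w_eff=0.4, w_fair=0.3, w_pri=0.3, ref_bits_per_joule=1e6):
    """Weighted mix of efficiency, fairness, and priority (all formulas assumed).

    allocations: energy given to each node this round
    urgency:     per-node urgency scores, assumed to lie in [0, 1]
    """
    # Efficiency: bits delivered per joule, scaled into [0, 1] by a reference value.
    efficiency = min(1.0, (bits / energy_j) / ref_bits_per_joule) if energy_j > 0 else 0.0
    # Fairness: how evenly the energy was spread across nodes.
    fairness = jain_fairness(allocations)
    # Priority: share of the allocated energy that reached urgent nodes.
    total = sum(allocations)
    priority = sum(u * a for u, a in zip(urgency, allocations)) / total if total > 0 else 0.0
    return w_eff * efficiency + w_fair * fairness + w_pri * priority

# Same data and energy budget, but the second split starves two nodes.
even = blended_reward(5e5, 1.0, [1.0, 1.0, 1.0], [0.5, 0.5, 0.5])
uneven = blended_reward(5e5, 1.0, [3.0, 0.0, 0.0], [0.5, 0.5, 0.5])
print(even > uneven)
```

Raising `w_fair` pushes the learner toward even splits, while raising `w_pri` lets urgent nodes take a larger share, which is the kind of trade-off the tuning described above controls.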

What the Simulations Reveal

Because real-world experiments are still in progress, the authors evaluate their method in detailed computer simulations of a network with 30 wirelessly powered devices, and also explore scenarios up to 100 nodes. Compared with a simple fixed split of energy and a more traditional learning method, the new controller keeps the network running for much longer: roughly 50 percent more rounds before nodes shut down. It also keeps the spread of energy levels between devices much tighter, meaning far fewer “dead spots” where nodes fail early. The learned strategy adapts several times faster to sudden changes, such as a drop in signal quality or a jump in task urgency, and it maintains higher data throughput across a wide range of radio conditions. Importantly, the authors pay attention to practical details, showing that a compact version of the learning model can run on low-cost microcontrollers used in many IoT devices, with decision times on the order of tens of milliseconds.

From Simulation to Real-World Grids of Sensors

The study concludes that pairing wireless power with a learning-based controller can significantly extend the life and reliability of sensor networks, especially when conditions are unpredictable and tasks differ in urgency. By acknowledging that harvesting circuits saturate, that the radio environment fluctuates, and that some sensors matter more than others at any given moment, the proposed approach learns to juggle competing needs better than static rules can. The authors are clear that their results so far come from simulations and that the exact gains will need to be confirmed on real hardware. Still, their work points toward a future in which vast networks of small devices can run for long periods with minimal human attention, intelligently sipping power from the air while keeping vital data flowing.

Citation: Chen, H., Wang, X., Yuan, L. et al. DQN-empowered energy optimization for wireless powered communication networks. Sci Rep 16, 7987 (2026). https://doi.org/10.1038/s41598-026-38904-x

Keywords: wireless power, Internet of Things, energy harvesting, reinforcement learning, sensor networks