Clear Sky Science

Research on improved models for facial expression recognition in mice with abnormal glucose metabolism

Reading Health in Tiny Faces

Abnormal blood sugar is best known for its role in diabetes, but it also quietly affects the brain, mood, and overall well‑being. This study shows that even mice wear their metabolic health on their faces. By watching subtle shifts in whiskers, ears, and eyes and feeding them to a compact computer‑vision model, the researchers demonstrate a new way to track blood sugar problems and treatment effects that, once the model has been trained on blood‑tested animals, needs no needle sticks.

Building a Mouse Version of Prediabetes and Diabetes

To explore how blood sugar changes show up on the face, the team first needed mice that reliably moved from normal metabolism into disease and then into recovery. They used a well‑established recipe: a high‑fat diet combined with streptozotocin, a compound that damages the insulin‑producing cells of the pancreas. Male C57BL/6J mice were split into five groups. One stayed on a standard diet, while the other four received the high‑fat diet plus streptozotocin to trigger high blood sugar. Three of these hyperglycemic groups were then given different doses of a mushroom‑derived substance, Sparassis latifolia polysaccharides (SLPs). Over several months, repeated blood tests showed a clear pattern: blood sugar rose from normal to pre‑abnormal, then to full hyperglycemia, and finally dropped again in the high‑dose SLP group, pointing to a dose‑dependent improvement.

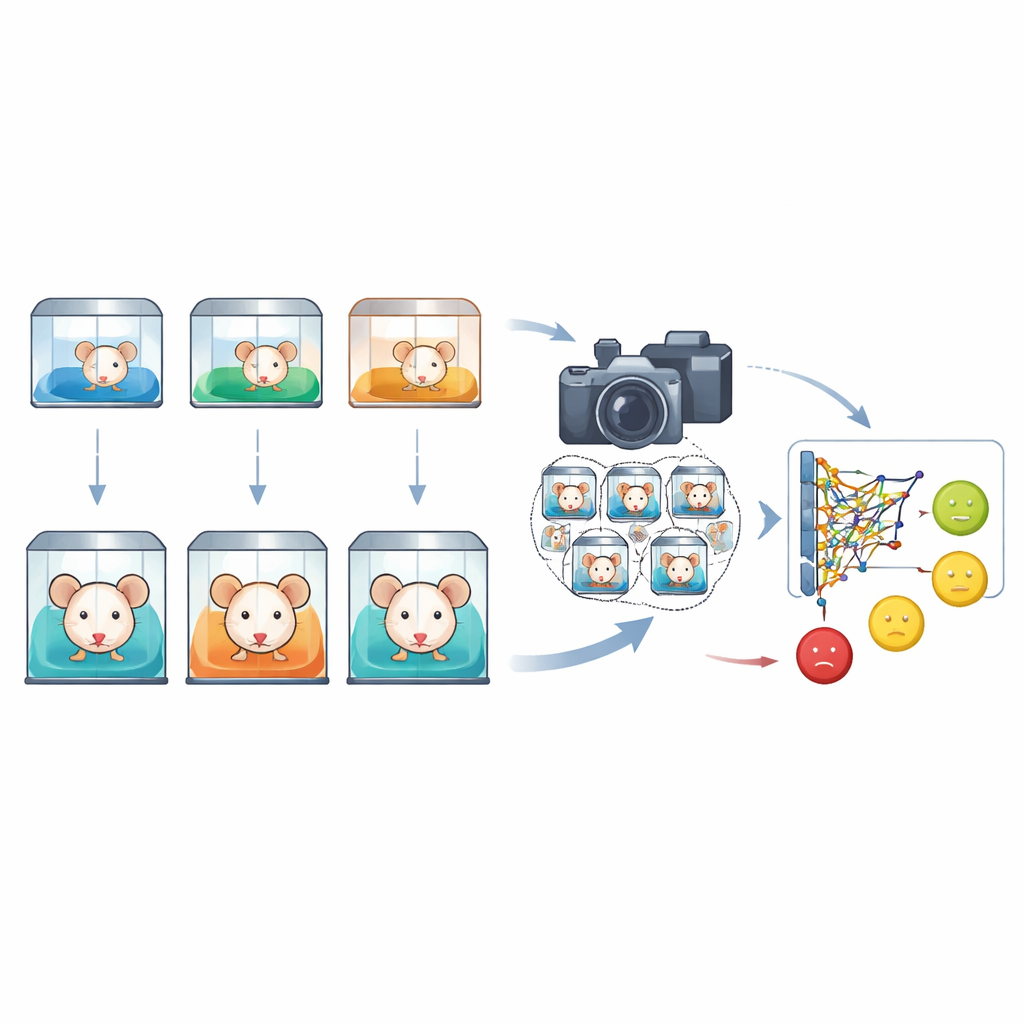

Turning Mouse Faces into a Data Library

Next, the researchers turned everyday mouse behavior into a labeled image library. Two cameras, one at eye level and one angled from above, recorded freely moving mice for thousands of minutes under controlled lighting and temperature. From 390 video clips, the team hand‑selected 2,830 clear images of mouse faces. Each image was labeled with one of five states defined by blood sugar and treatment: normal, early disturbance, full abnormality, and early or late stages of SLP treatment. Specialists then drew boxes around the eyes, ears, nose, mouth, and whiskers, capturing the subtle cues that reflect discomfort, strain, or relief. The result is a standardized dataset that links facial expressions directly to measured blood‑sugar levels across disease and recovery.
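Labeled boxes like these are the kind of data YOLO‑family detectors train on. The summary does not state the file format the authors used; as an assumption, this sketch follows the common YOLO text‑label convention, with one line per box holding the class index and then the box center and size, each divided by the image width or height. The state names and their numeric order are hypothetical.

```python
# Hypothetical class order for the five states; the paper's actual indices are not given.
STATES = ["normal", "early_disturbance", "full_abnormality", "slp_early", "slp_late"]


def to_yolo_line(state, box, img_w, img_h):
    """Turn a pixel box (x_min, y_min, x_max, y_max) into a YOLO-format label line.

    YOLO labels store the class index, then the box center and size,
    each divided by the image width or height so they fall in [0, 1].
    """
    x_min, y_min, x_max, y_max = box
    if not (0 <= x_min < x_max <= img_w and 0 <= y_min < y_max <= img_h):
        raise ValueError("box must lie inside the image and have positive size")
    cls = STATES.index(state)  # raises ValueError for an unknown state name
    x_c = (x_min + x_max) / 2 / img_w
    y_c = (y_min + y_max) / 2 / img_h
    w = (x_max - x_min) / img_w
    h = (y_max - y_min) / img_h
    return f"{cls} {x_c:.6f} {y_c:.6f} {w:.6f} {h:.6f}"


def from_yolo_line(line, img_w, img_h):
    """Invert to_yolo_line: recover the state name and an approximate pixel box."""
    cls, x_c, y_c, w, h = line.split()
    x_c, w = float(x_c) * img_w, float(w) * img_w
    y_c, h = float(y_c) * img_h, float(h) * img_h
    box = (x_c - w / 2, y_c - h / 2, x_c + w / 2, y_c + h / 2)
    return STATES[int(cls)], box


if __name__ == "__main__":
    # Made-up example: a 64 x 48 pixel face box in a 640 x 480 frame.
    line = to_yolo_line("full_abnormality", (300, 200, 364, 248), 640, 480)
    print(line)  # prints "2 0.518750 0.466667 0.100000 0.100000"
    print(from_yolo_line(line, 640, 480))
```

Normalizing by image size keeps the labels valid if frames are resized before training, which is one reason this convention is popular; the round trip is only approximate because coordinates are stored to six decimal places.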

Designing a Small but Sharp‑Eyed Detection Model

Recognizing these expressions is far from trivial: mouse faces occupy only a small part of each frame, expression differences are subtle, and cages are visually cluttered with bedding, litter, and cage‑mates. To tackle this, the team upgraded YOLOv8, a popular real‑time object detector, and named their variant LFPP‑YOLO. They added a “partial self-attention” block that scans the whole image but selectively emphasizes regions that look like faces, helping the model ignore background distractions. They also wove in a lightweight set of modules that blend information across image scales, so the system can both see the overall head and pick out fine lines and textures around the eyes and whiskers. A refined loss function further pushes the model to draw tighter, more accurate bounding boxes around irregular, blurred facial regions.
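The summary does not name the refined loss. Box regression in YOLO‑family detectors is usually trained with an IoU‑based loss (YOLOv8's default builds on CIoU), so, as an assumption, this sketch contrasts plain IoU loss with GIoU loss, one well‑known refinement. It illustrates why such refinements help with poorly placed boxes; it is not the authors' loss.

```python
def box_area(box):
    """Area of an axis-aligned box (x_min, y_min, x_max, y_max); inverted boxes count as 0."""
    x_min, y_min, x_max, y_max = box
    return max(0.0, x_max - x_min) * max(0.0, y_max - y_min)


def _overlap_union_enclosure(pred, target):
    """Return intersection area, union area and smallest-enclosing-box area."""
    inter = box_area((max(pred[0], target[0]), max(pred[1], target[1]),
                      min(pred[2], target[2]), min(pred[3], target[3])))
    union = box_area(pred) + box_area(target) - inter
    enclose = box_area((min(pred[0], target[0]), min(pred[1], target[1]),
                        max(pred[2], target[2]), max(pred[3], target[3])))
    if union <= 0:
        raise ValueError("boxes must have positive area")
    return inter, union, enclose


def iou_loss(pred, target):
    """Plain IoU loss, 1 - IoU: stuck at 1.0 whenever the boxes do not overlap."""
    inter, union, _ = _overlap_union_enclosure(pred, target)
    return 1.0 - inter / union


def giou_loss(pred, target):
    """GIoU loss, 1 - GIoU: also penalizes empty space in the smallest enclosing box,
    so it keeps growing as a non-overlapping prediction drifts farther away."""
    inter, union, enclose = _overlap_union_enclosure(pred, target)
    giou = inter / union - (enclose - union) / enclose
    return 1.0 - giou


if __name__ == "__main__":
    target = (0, 0, 1, 1)
    for pred in [(0, 0, 1, 1), (2, 0, 3, 1), (4, 0, 5, 1)]:
        print(pred, round(iou_loss(pred, target), 3), round(giou_loss(pred, target), 3))
```

Run as a script, both losses are 0 for a perfect match. For the two non‑overlapping predictions the IoU loss stays at 1.0, while the GIoU loss rises from about 1.333 to 1.6 as the box moves away, which gives training a signal to pull a misplaced box back toward a small face.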

Testing the System Against Rival Methods and Real Life

On the curated dataset, LFPP‑YOLO reached an average detection accuracy of about 95% across the five metabolic states, with an F1 score close to 0.89. Remarkably, it did this while remaining tiny (about 2.4 megabytes) and fast, needing only about 5 milliseconds per image on the test hardware. In head‑to‑head trials, it outperformed both a classic two‑stage detector and several newer YOLO variants, especially on small, partially hidden, or angled faces. Heat‑map visualizations showed that the improved model learned to concentrate on the ears, eyes, and mouth even when other mice or bedding cluttered the scene. In a separate validation at another facility, the model’s expression‑based classifications closely matched blood‑glucose‑based labels, with an agreement score in the range conventionally described as “almost perfect.”
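Two of the reported figures can be reproduced from raw counts. F1 is the harmonic mean of precision and recall. The phrase “almost perfect” is the top band (0.81 to 1.00) of the Landis and Koch scale usually applied to Cohen's kappa, so this sketch assumes kappa was the agreement statistic, which the summary does not confirm. All labels and numbers in the example are made up.

```python
from collections import Counter


def f1_score(precision, recall):
    """F1 is the harmonic mean of precision and recall (0 when both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two labelings, corrected for chance."""
    if not labels_a or len(labels_a) != len(labels_b):
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: share of items given the same label by both sources.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: probability that both pick the same label independently,
    # estimated from each source's own label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both used one identical label throughout; treated as perfect here
    return (p_o - p_e) / (1 - p_e)


def landis_koch(kappa):
    """Verbal label for a kappa value on the Landis and Koch (1977) scale."""
    if kappa < 0:
        return "poor"
    for upper, label in [(0.20, "slight"), (0.40, "fair"),
                         (0.60, "moderate"), (0.80, "substantial")]:
        if kappa <= upper:
            return label
    return "almost perfect"


if __name__ == "__main__":
    # Made-up labels for ten images: N = normal, E = early disturbance,
    # A = full abnormality, T1/T2 = early/late SLP treatment.
    by_glucose = ["N", "N", "E", "E", "A", "A", "T1", "T1", "T2", "T2"]
    by_face = ["N", "N", "E", "A", "A", "A", "T1", "T1", "T2", "T2"]
    k = cohen_kappa(by_glucose, by_face)
    print(round(k, 3), landis_koch(k))
    print(round(f1_score(0.8, 0.6), 3))
```

For the toy labels, one disagreement in ten images gives a kappa of about 0.875, inside the “almost perfect” band. Raw agreement (0.9) is higher because kappa discounts the matches two label sources would reach by chance alone.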

What This Could Mean for Future Care

The work suggests that facial expressions can serve as a practical, non‑invasive window into metabolic health in small animals. Instead of repeated blood draws, researchers could use cameras and a compact algorithm to track when a mouse drifts from normal metabolism toward disease, and when a dietary or drug intervention starts to reverse the damage. The current dataset is limited in scale and recording conditions, and more work is needed to extend the method to other strains, lighting setups, and species. Still, the study points to a future in which routine monitoring of chronic disease in animals, and perhaps one day in people, could rely increasingly on careful reading of the face by intelligent vision systems rather than on needles and test strips.

Citation: Guo, X., Shi, L., Ma, B. et al. Research on improved models for facial expression recognition in mice with abnormal glucose metabolism. Sci Rep 16, 8165 (2026). https://doi.org/10.1038/s41598-026-38863-3

Keywords: facial expression recognition, abnormal glucose metabolism, mouse diabetes model, deep learning detection, noninvasive monitoring