Clear Sky Science

Serial cascaded hybrid adaptive deep networks-based lyrics text classification using optimization approach

Why Smarter Song Filters Matter

Music streams into our lives almost nonstop, and much of what we hear is chosen by algorithms. Yet many of these systems still struggle with a simple question: what exactly are the words in a song saying, and who are they suitable for? This paper tackles that problem by building an advanced artificial intelligence (AI) model that automatically reads song lyrics and sorts them by mood, genre, sentiment, and even the type of performer. The goal is to help create safer playlists for children, more accurate mood-based recommendations, and better tools for music researchers.

The Challenge Hidden in Song Words

Lyrics are far more complicated than a list of good or bad words. The same phrase can feel tender in one song and threatening in another, and listeners bring their own experiences to what they hear. Traditional filters usually rely on static lists of offensive terms or simple statistical techniques. These approaches miss context, fail to keep up with evolving slang, and often mislabel songs. At the same time, the explosion of digital music means there are millions of tracks to analyze, in many languages and styles, which overwhelms manual labeling and older algorithms.

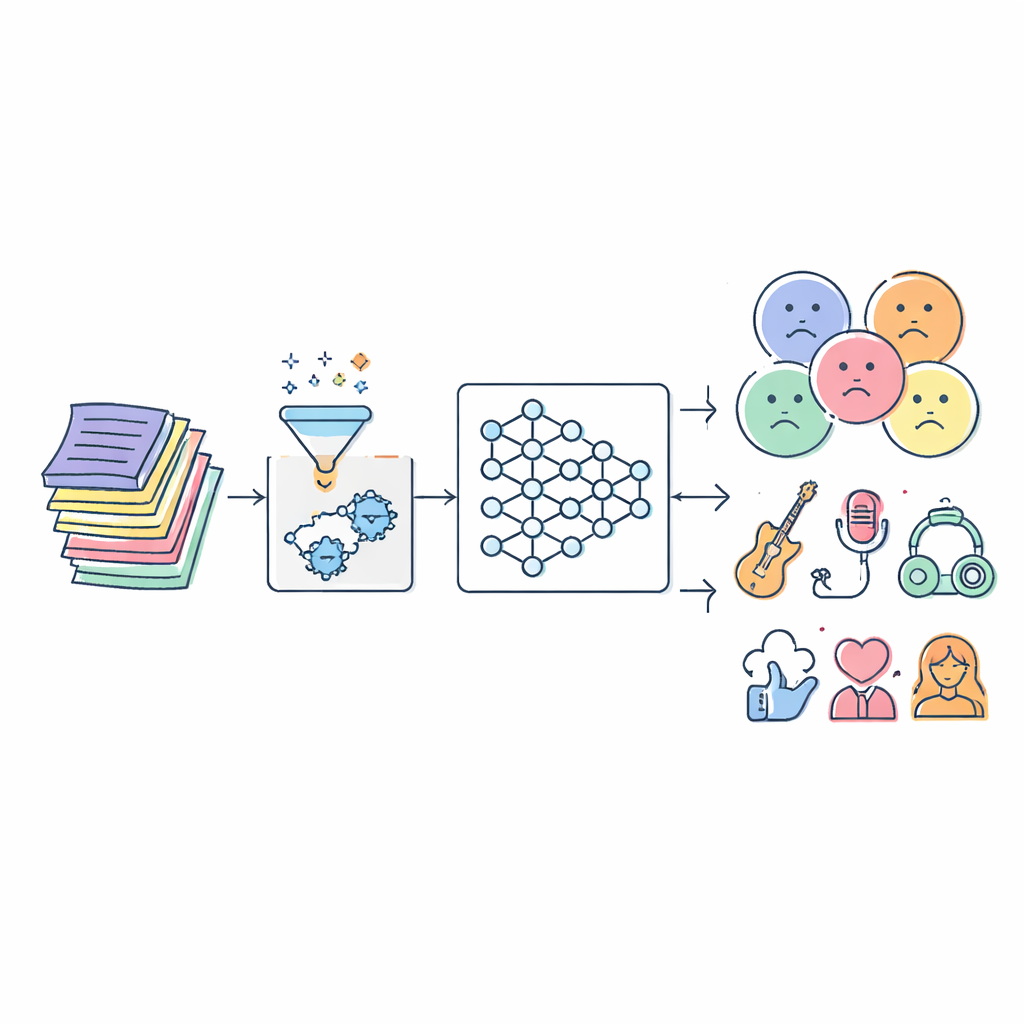

Cleaning Up the Raw Lyrics

The authors begin by assembling large lyric collections from three public datasets that together cover hundreds of thousands of songs across multiple genres and languages. Before any AI can learn from the text, the lyrics must be cleaned. The system removes punctuation, special symbols and repeated or irrelevant fragments, and then reduces related word forms to a common root (for example, “singing,” “sings,” and “sang” all become “sing”). This pre-processing step strips away noise while keeping meaning, so that later stages can focus on emotional tone and topic rather than formatting quirks or spelling variations.
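The cleaning steps described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' pipeline: the function names, the tiny `IRREGULAR` lookup table, and the suffix rules are all assumptions made for the example, and the character filter only handles unaccented English letters, whereas the paper's datasets span several languages.

```python
import re

# Hypothetical lookup for irregular forms; a real pipeline would use a full lemmatizer.
IRREGULAR = {"sang": "sing", "sung": "sing"}
# Toy suffix list, checked in order; real stemmers use far richer rules.
SUFFIXES = ("ing", "s")
MIN_STEM_LENGTH = 4  # avoids mangling short words such as "this" or "kiss"


def normalize_word(word):
    """Map a word to a crude root form using the lookup table and suffix list."""
    if word in IRREGULAR:
        return IRREGULAR[word]
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= MIN_STEM_LENGTH:
            return word[: -len(suffix)]
    return word


def clean_lyrics(text):
    """Lowercase, strip symbols and section tags, normalize words, drop repeated lines."""
    seen = set()
    cleaned_lines = []
    for line in text.lower().splitlines():
        line = re.sub(r"\[.*?\]", " ", line)   # drop section tags like [Chorus]
        line = line.replace("'", "")           # join contractions: don't -> dont
        line = re.sub(r"[^a-z\s]", " ", line)  # drop punctuation, digits, symbols
        words = [normalize_word(w) for w in line.split()]
        key = " ".join(words)
        if key and key not in seen:            # skip empty and repeated lines
            seen.add(key)
            cleaned_lines.append(key)
    return cleaned_lines


sample = "[Chorus]\nShe sings, she SANG!\nShe sings, she sang\nWe keep singing..."
cleaned = clean_lyrics(sample)
print(cleaned)  # ['she sing she sing', 'we keep sing']
```

The repeated chorus line collapses to one entry, and the three forms of "sing" end up identical, which is the effect the pre-processing stage is after.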

A Layered AI that Reads Like a Careful Listener

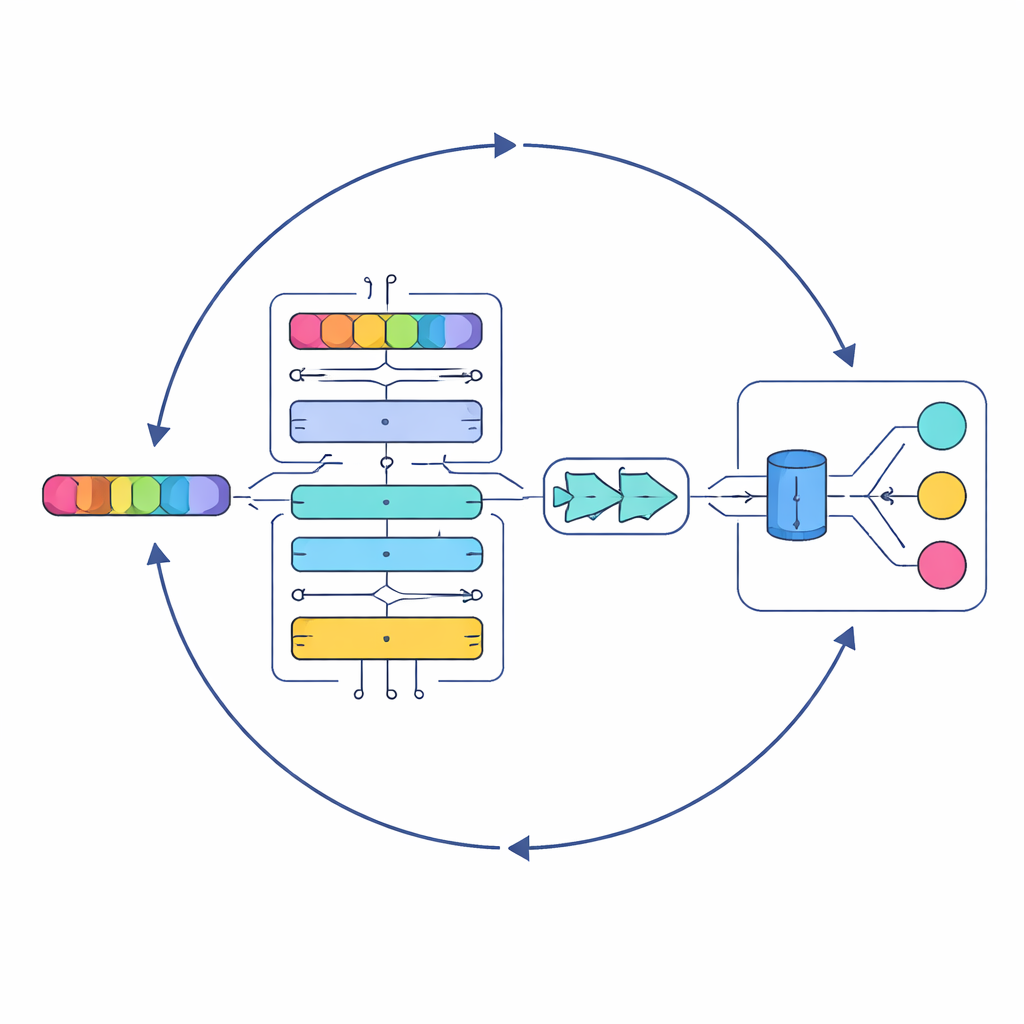

At the heart of the study is a new model called Serial Cascaded Hybrid Adaptive Deep Network, or SCHADNet. It combines three powerful ideas from modern language AI. First, a transformer-based encoder captures how words relate to each other across an entire lyric, not just next-door neighbors. Second, a bidirectional Long Short-Term Memory layer reads the lyric both forward and backward in time, helping the system understand how earlier lines color the meaning of later ones. Third, a Gated Recurrent Unit layer refines this information into a compact summary that is well suited for making final decisions. Together, these components act like a chorus of specialized readers, each focusing on different aspects of the song’s text.
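To make the "serial cascade" idea concrete, here is a toy sketch in plain Python of data flowing through the three stages in order. It is only an illustration under heavy assumptions: the weights are random and untrained, a single unprojected attention step stands in for the transformer encoder, a plain tanh recurrence stands in for each LSTM direction, and the sizes `EMB`, `HID`, and `OUT` are invented. None of this reflects the layer sizes or training procedure of the actual SCHADNet model.

```python
import math
import random

random.seed(0)


def rand_matrix(rows, cols):
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def sigmoid(value):
    return 1.0 / (1.0 + math.exp(-value))


def self_attention(seq):
    """Stage 1: each token becomes a softmax-weighted mix of all tokens."""
    dim = len(seq[0])
    out = []
    for query in seq:
        scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(dim) for key in seq]
        peak = max(scores)
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        out.append([sum(w * key[i] for w, key in zip(weights, seq)) / total
                    for i in range(dim)])
    return out


def simple_rnn(seq, w_in, w_rec):
    """Plain tanh recurrence, used here as a stand-in for one LSTM direction."""
    hidden = [0.0] * len(w_rec)
    states = []
    for x in seq:
        hidden = [math.tanh(a + b) for a, b in zip(matvec(w_in, x), matvec(w_rec, hidden))]
        states.append(hidden)
    return states


def bidirectional(seq, fwd, bwd):
    """Stage 2: read forward and backward, then concatenate the states per token."""
    forward = simple_rnn(seq, *fwd)
    backward = simple_rnn(seq[::-1], *bwd)[::-1]
    return [f + b for f, b in zip(forward, backward)]


def gru_summary(seq, params):
    """Stage 3: a GRU pass that returns only the final hidden state."""
    (wz, uz), (wr, ur), (wh, uh) = params
    hidden = [0.0] * len(uz)
    for x in seq:
        update = [sigmoid(a + b) for a, b in zip(matvec(wz, x), matvec(uz, hidden))]
        reset = [sigmoid(a + b) for a, b in zip(matvec(wr, x), matvec(ur, hidden))]
        gated = [r * h for r, h in zip(reset, hidden)]
        cand = [math.tanh(a + b) for a, b in zip(matvec(wh, x), matvec(uh, gated))]
        hidden = [(1 - z) * h + z * c for z, h, c in zip(update, hidden, cand)]
    return hidden


EMB, HID, OUT = 4, 3, 2  # invented sizes for the sketch
tokens = [[random.uniform(-1, 1) for _ in range(EMB)] for _ in range(5)]
fwd = (rand_matrix(HID, EMB), rand_matrix(HID, HID))
bwd = (rand_matrix(HID, EMB), rand_matrix(HID, HID))
gru = [(rand_matrix(OUT, 2 * HID), rand_matrix(OUT, OUT)) for _ in range(3)]

attended = self_attention(tokens)            # 5 tokens x EMB values
context = bidirectional(attended, fwd, bwd)  # 5 tokens x 2*HID values
summary = gru_summary(context, gru)          # one vector of OUT values
```

The point of the sketch is the shape of the pipeline: a five-token lyric stays five tokens long through the first two stages and is then squeezed into one short vector, which a final classifier could turn into a label.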

Borrowing a Strategy from the Sea

Simply stacking deep-learning layers is not enough; their internal settings—such as how many neurons they contain and how long they train—strongly affect performance. Instead of hand-tuning these choices, the authors turn to an optimization approach inspired by the hunting patterns of marine predators. Their Improved Marine Predators Algorithm (IMPA) explores many possible parameter combinations, steadily homing in on those that yield the best results. By trimming away parts of the original algorithm that did not help in this setting, they improve convergence, meaning the system reaches good solutions faster and more reliably.
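The general idea of letting a population of candidate settings hunt for a good combination can be sketched as follows. This is a generic population-based search, not the Marine Predators Algorithm or the authors' improved variant. The objective `toy_validation_error` is a made-up stand-in for training a model, and the parameter names and ranges in `BOUNDS` are assumptions chosen for illustration.

```python
import random


def toy_validation_error(neurons, epochs):
    # Hypothetical stand-in for "train the model and measure its error":
    # it simply pretends that 128 neurons and 40 epochs are ideal.
    return ((neurons - 128) / 128) ** 2 + ((epochs - 40) / 40) ** 2


BOUNDS = {"neurons": (16, 512), "epochs": (5, 100)}  # assumed search ranges


def clamp(value, low, high):
    return max(low, min(high, value))


def population_search(objective, bounds, pop_size=12, iterations=30, seed=0):
    """Move a population of candidates toward the best one found so far."""
    rng = random.Random(seed)
    names = list(bounds)
    population = [{n: rng.randint(*bounds[n]) for n in names} for _ in range(pop_size)]
    best = min(population, key=lambda c: objective(**c))
    history = [objective(**best)]
    for step in range(iterations):
        shrink = 1.0 - step / iterations  # large random moves early, small ones late
        new_population = []
        for cand in population:
            moved = {}
            for n in names:
                low, high = bounds[n]
                pull = rng.random() * (best[n] - cand[n])          # drift toward the best
                jitter = rng.gauss(0, 0.1 * (high - low) * shrink)  # random exploration
                moved[n] = clamp(round(cand[n] + pull + jitter), low, high)
            # Keep a move only if it improves on the candidate it replaces.
            improved = objective(**moved) < objective(**cand)
            new_population.append(moved if improved else cand)
        population = new_population
        best = min(population + [best], key=lambda c: objective(**c))
        history.append(objective(**best))
    return best, history


best, history = population_search(toy_validation_error, BOUNDS)
print(best, history[-1])
```

In a real setting each call to the objective would mean training and validating a network, which is why an optimizer that converges in fewer evaluations matters so much.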

How Well the System Performs

The researchers test SCHADNet with IMPA on three different lyric datasets and compare it with a range of established methods, including classic machine-learning classifiers and several popular deep-learning models such as plain LSTM, transformer-only systems, and hybrid networks. Across accuracy, recall (how many truly relevant songs are found), and other quality measures, the new approach consistently comes out on top. On one large multilingual dataset, it correctly classifies about 93% of songs and achieves an especially high negative predictive value, meaning it is very good at recognizing lyrics that do not belong in a flagged category. That matters for avoiding overzealous blocking or mislabeling.
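For readers who want to see how these measures are computed, the short sketch below derives accuracy, recall, and negative predictive value from the four counts of a yes/no classifier. The function name and the example counts are invented for illustration and are not results from the paper.

```python
def binary_metrics(tp, fp, tn, fn):
    """Compute three quality measures from confusion-matrix counts.

    tp: flagged songs that truly belong in the category
    fp: flagged songs that do not belong
    tn: unflagged songs that truly do not belong
    fn: unflagged songs that actually belong
    """
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,  # share of all songs labeled correctly
        "recall": tp / (tp + fn),       # share of relevant songs that were found
        "npv": tn / (tn + fn),          # share of "not flagged" calls that are right
    }


# Made-up counts for 100 songs, purely to show the arithmetic.
example = binary_metrics(tp=40, fp=10, tn=45, fn=5)
print(example)
```

With these invented counts, accuracy is 85/100 = 0.85 and negative predictive value is 45/50 = 0.9, so nine out of ten songs the classifier leaves unflagged really are outside the category.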

What This Means for Listeners and Creators

To a layperson, the message is straightforward: the authors have built a more nuanced, reliable reader for song lyrics. Instead of relying on crude word lists, their system looks at whole phrases, context, and patterns across large collections of music, then automatically assigns labels such as mood, style, or suitability for younger audiences. While the model is complex and computationally demanding, it opens the door to smarter parental controls, richer mood-based playlists, and new ways to study trends in popular music. Future work aims to reduce its hunger for data and speed up training, but even in its current form, SCHADNet points toward a future where music platforms understand lyrics almost as carefully as an attentive human listener.

Citation: Jasmine, R.L., Mukherjee, S., Robin, C.R.R. et al. Serial cascaded hybrid adaptive deep networks-based lyrics text classification using optimization approach. Sci Rep 16, 8527 (2026). https://doi.org/10.1038/s41598-026-38813-z

Keywords: music recommendation, lyrics analysis, text classification, deep learning, content moderation