Clear Sky Science · en

VolE: A point-cloud framework for food 3D reconstruction and volume estimation

Why Measuring Your Dinner Matters

Counting calories from a photo sounds like magic, but for doctors and dietitians it could be a powerful tool. Accurately knowing how much food people actually eat is vital for managing conditions like diabetes and obesity, yet weighing every meal on a kitchen scale is unrealistic in daily life. This paper presents VolE, a new method that lets an ordinary modern smartphone build a detailed three-dimensional model of a single food item and estimate its volume with surprisingly high accuracy—no special hardware, reference card, or depth sensor required.

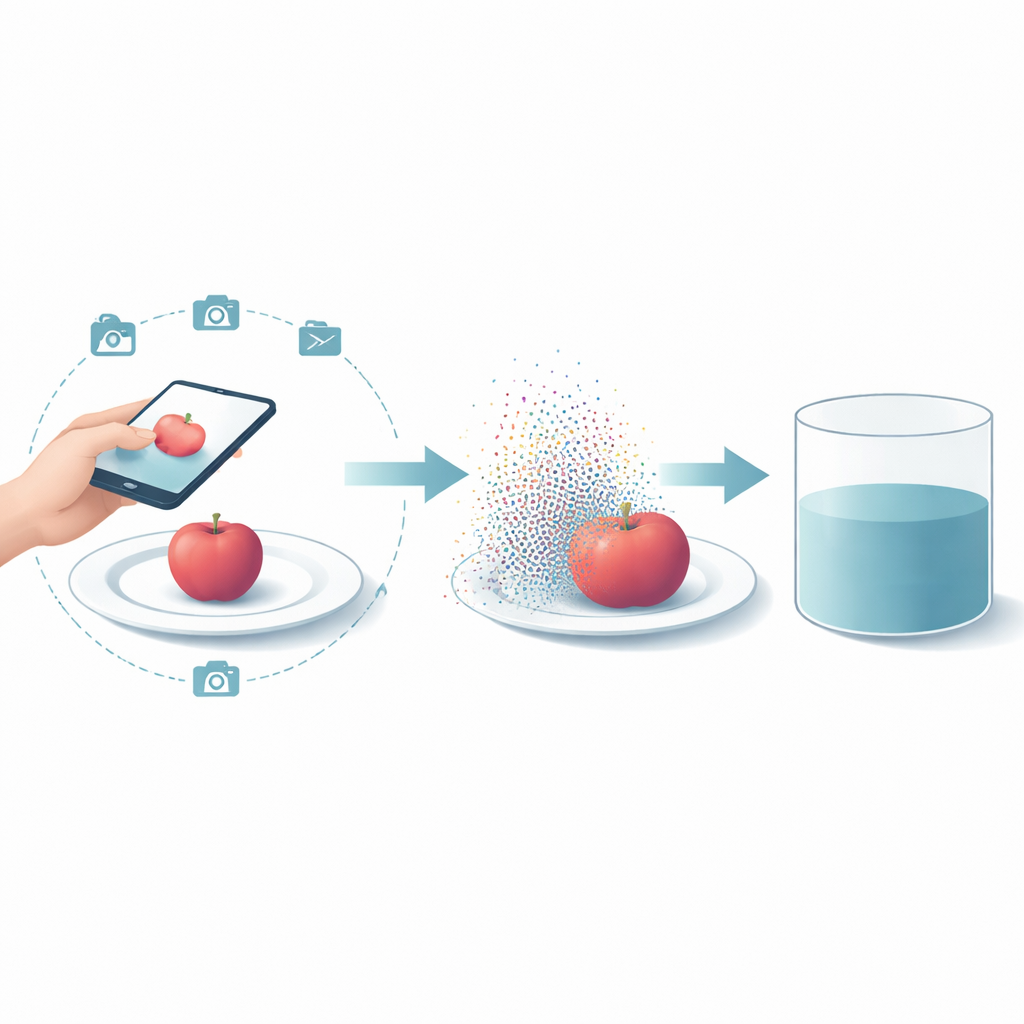

From Simple Photos to Solid Shapes

The core idea behind VolE is to turn a short, casual phone video of your meal into a precise 3D shape that can be measured. As a user slowly moves their phone around a dish, the device’s built-in augmented reality features (ARCore on Android or ARKit on iOS) record both the images and the exact position and orientation of the camera in real space. VolE combines these image streams and camera paths to reconstruct a dense “point cloud” of the food—thousands of tiny dots floating in space that trace the surface of the item. Because the phone’s AR system already knows real-world distances, this virtual object is created at the correct physical scale, solving a long-standing problem in vision research where 3D shape can be recovered but not its true size.
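The scale problem mentioned above can be shown in a few lines. The sketch below is not VolE's code. It assumes a simple pinhole camera, and the intrinsics `K`, the pose `R`, `t`, and the sample points are all made-up values. It shows that a scene three times larger, seen from three times farther away, lands on exactly the same pixels, which is why images alone cannot reveal true size and why camera positions in real-world units are so useful.

```python
import numpy as np

def project(points, K, R, t):
    """Project 3D world points to pixel coordinates with a pinhole camera."""
    cam = points @ R.T + t           # world -> camera coordinates
    pix = cam @ K.T                  # apply intrinsics
    return pix[:, :2] / pix[:, 2:3]  # perspective divide

# Hypothetical intrinsics and pose (illustration only).
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
R = np.eye(3)
t = np.array([0.0, 0.0, 0.5])        # camera 0.5 m from the food

points = np.array([[0.00, 0.00, 0.00],
                   [0.03, 0.00, 0.01],
                   [0.00, 0.04, 0.02]])

s = 3.0                              # arbitrary scale factor
pixels_true = project(points, K, R, t)
pixels_scaled = project(s * points, K, R, s * t)

# Both scenes produce identical images, so size is ambiguous
# unless the camera translation is known in metres.
same_image = np.allclose(pixels_true, pixels_scaled)
```

Once the translation `t` is known in metres, as an AR framework reports it, only one value of `s` remains consistent with the images, and the reconstruction comes out at physical scale.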

Finding the Food and Cleaning the Scene

Food photos are busy: plates, tables, and background clutter compete for attention. VolE tackles this with an automatic video segmentation step that acts like a smart pair of scissors. A model called FoodMem identifies which pixels belong to the food across all frames of the video, even as the phone moves and the food becomes partially hidden. Using the refined camera positions, VolE projects the 3D points into each segmented image and keeps only those that consistently fall on the food in every view. The result is a clean, isolated cloud of points belonging just to the target item, while most background points and segmentation mistakes are filtered away.
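The filtering step described above can be sketched with NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes a pinhole camera with intrinsics `K`, world-to-camera poses given as 4x4 matrices, and boolean masks where `True` marks food pixels. The function name and the `min_fraction` parameter are hypothetical.

```python
import numpy as np

def filter_points_by_masks(points, K, world_to_cam, masks, min_fraction=1.0):
    """Keep 3D points that project onto the food mask in enough views.

    points:       (N, 3) array in world coordinates
    K:            (3, 3) camera intrinsics (assumed pinhole model)
    world_to_cam: list of (4, 4) pose matrices, one per view
    masks:        list of (H, W) boolean arrays, True where food is
    min_fraction: share of views that must agree (1.0 = every view)
    """
    n = points.shape[0]
    homog = np.hstack([points, np.ones((n, 1))])
    votes = np.zeros(n, dtype=int)

    for T, mask in zip(world_to_cam, masks):
        cam = (T @ homog.T).T[:, :3]
        z = cam[:, 2]
        in_front = z > 1e-9
        safe_z = np.where(in_front, z, 1.0)   # avoid dividing by zero
        pix = (K @ cam.T).T
        u = np.round(pix[:, 0] / safe_z).astype(int)
        v = np.round(pix[:, 1] / safe_z).astype(int)

        h, w = mask.shape
        inside = in_front & (u >= 0) & (u < w) & (v >= 0) & (v < h)
        on_food = np.zeros(n, dtype=bool)
        on_food[inside] = mask[v[inside], u[inside]]
        votes += on_food

    return points[votes >= min_fraction * len(masks)]
```

Lowering `min_fraction` below 1.0 would tolerate a few bad masks at the cost of letting more background points through; the summary does not say which trade-off VolE makes beyond requiring consistency across views.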

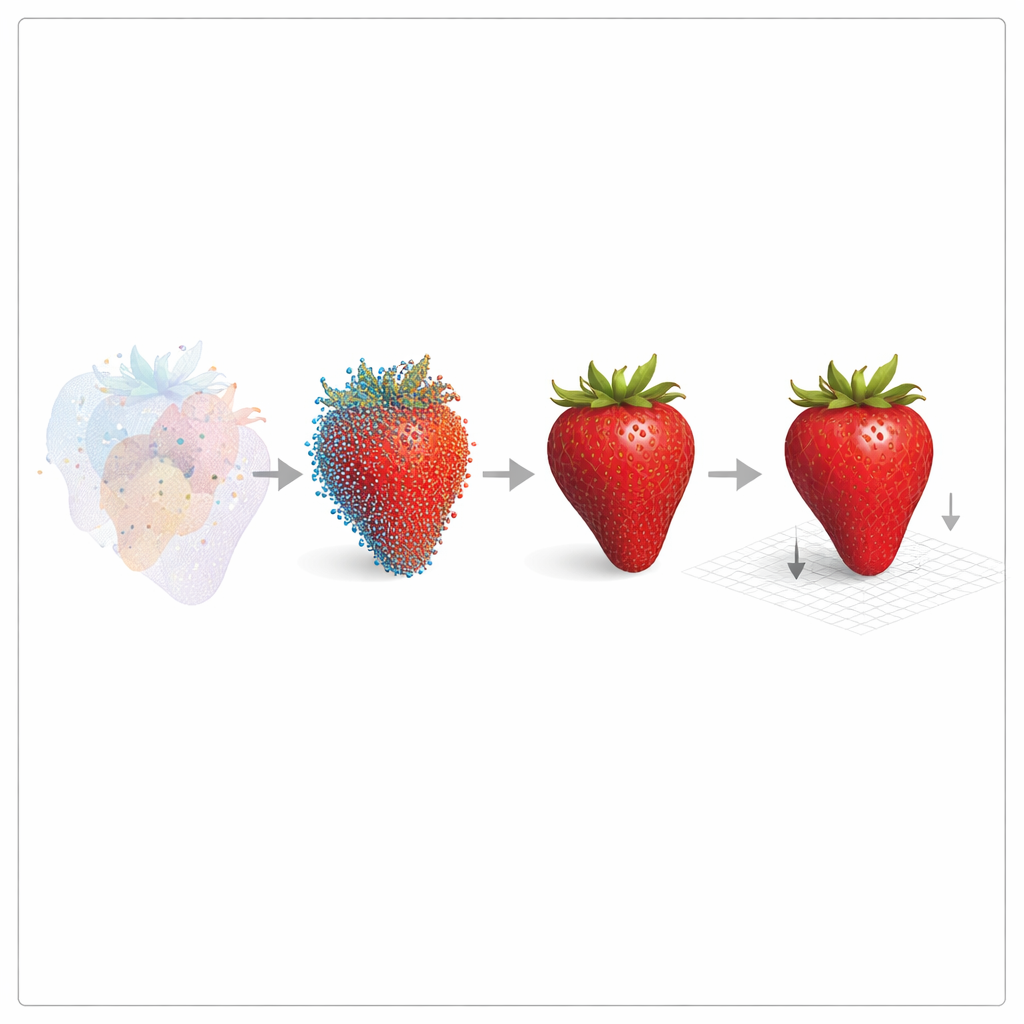

From Dots to a Measurable Object

Point clouds alone are hard to measure, so VolE converts them into a continuous digital surface called a mesh. Specialized 3D software stitches neighboring points together into tiny triangles that wrap around the food like a tight skin, filling small gaps and making the object “watertight.” The mesh is then refined through smoothing, denoising, and optimization steps that remove bumps and holes without changing the true size in any meaningful way. Finally, a mathematical result known as the divergence theorem is used: each triangle of the surface is joined to the origin to form a tiny pyramid (a tetrahedron) whose volume counts as positive or negative depending on which way the triangle faces. Summing these signed volumes over the whole closed surface yields the total volume of the food in cubic centimeters, ready to be translated into weight and calories via standard density tables.
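The volume calculation can be sketched as follows. This is a minimal NumPy illustration of the signed-pyramid sum, not the authors' code. It assumes a watertight triangle mesh with consistently oriented faces and vertex coordinates in centimeters. The density and energy figures at the end are hypothetical placeholders, not values from the paper or from any real density table.

```python
import numpy as np

def mesh_volume(vertices, faces):
    """Volume of a closed, consistently oriented triangle mesh.

    Each triangle and the origin form a tetrahedron with signed
    volume dot(v0, cross(v1, v2)) / 6; the signs cancel outside
    the object, so the sum is the enclosed volume.
    """
    v0 = vertices[faces[:, 0]]
    v1 = vertices[faces[:, 1]]
    v2 = vertices[faces[:, 2]]
    signed = np.einsum("ij,ij->i", v0, np.cross(v1, v2)) / 6.0
    return abs(signed.sum())

def volume_to_mass(volume_cm3, density_g_per_cm3):
    """Convert volume to mass using a density lookup value."""
    return volume_cm3 * density_g_per_cm3

def mass_to_kcal(mass_g, kcal_per_100g):
    """Convert mass to energy using a per-100 g energy value."""
    return mass_g * kcal_per_100g / 100.0

# A 2 cm cube as a stand-in for a food mesh (12 outward-facing triangles).
cube_vertices = 2.0 * np.array([
    [0, 0, 0], [1, 0, 0], [1, 1, 0], [0, 1, 0],
    [0, 0, 1], [1, 0, 1], [1, 1, 1], [0, 1, 1],
], dtype=float)
cube_faces = np.array([
    [0, 2, 1], [0, 3, 2],   # bottom
    [4, 5, 6], [4, 6, 7],   # top
    [0, 1, 5], [0, 5, 4],   # front
    [3, 6, 2], [3, 7, 6],   # back
    [0, 4, 7], [0, 7, 3],   # left
    [1, 2, 6], [1, 6, 5],   # right
])

volume = mesh_volume(cube_vertices, cube_faces)          # 8 cubic centimeters
mass = volume_to_mass(volume, density_g_per_cm3=0.5)     # hypothetical density
energy = mass_to_kcal(mass, kcal_per_100g=200.0)         # hypothetical value
```

The result does not depend on where the origin sits: moving the whole mesh changes the individual pyramid volumes, but their sum stays the same as long as the surface is closed.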

Testing on Real Foods and Tough Benchmarks

To see how well VolE works, the authors built a new “Foodkit” dataset of 21 real foods, from apples and bananas to wraps and pastries, captured with 700–1200 images each. They measured true volume using water displacement and mass with a lab scale, then compared these numbers to VolE’s estimates. Across all items, the average volume error was about 1–2%, corresponding to roughly 98–99% accuracy, and remained stable across repeated runs despite internal randomness in the reconstruction software. VolE was also evaluated on challenging public datasets used in international competitions, outperforming or matching the best existing methods for food volume estimation while needing no calibration boards, depth sensors, or fixed camera rigs.
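An average error figure like this is typically computed as a mean absolute percentage error. The sketch below assumes that definition, which the summary does not spell out, and uses invented estimate and reference pairs rather than numbers from the Foodkit dataset.

```python
def percent_error(estimated, reference):
    """Absolute error as a percentage of the reference value."""
    return abs(estimated - reference) / reference * 100.0

def mean_percent_error(pairs):
    """Average percentage error over (estimated, reference) pairs."""
    errors = [percent_error(est, ref) for est, ref in pairs]
    return sum(errors) / len(errors)

# Hypothetical (estimated, water-displacement) volumes in cubic centimeters.
measurements = [(198.0, 200.0), (151.5, 150.0), (80.0, 80.0)]

mape = mean_percent_error(measurements)
accuracy = 100.0 - mape
```

Under this definition, an average error between 1% and 2% corresponds to an accuracy between 98% and 99%.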

What This Means for Everyday Health

In plain terms, this work shows that a phone you already own can, with the right algorithms, measure your food almost as well as lab equipment. By turning casual videos into accurate 3D models, VolE removes the need for scales, specialized scanners, or carefully staged photos with reference objects. Although it currently works best for a single main item on a plate and still runs on a powerful computer rather than directly on the phone, the method points toward a near future where diet tracking apps can estimate portion sizes automatically and reliably. That could make long-term nutrition monitoring more objective, less burdensome, and far more accessible to people managing their health in everyday settings.

Citation: Haroon, U., AlMughrabi, A., Zoumpekas, T. et al. VolE: A point-cloud framework for food 3D reconstruction and volume estimation. Sci Rep 16, 8648 (2026). https://doi.org/10.1038/s41598-026-38756-5

Keywords: food volume estimation, 3D reconstruction, mobile health, augmented reality, dietary assessment