Clear Sky Science

Entropy guided multi level feature fusion network for high precision content based image retrieval

Finding the Right Picture, Fast

Every day, we create and store staggering numbers of photos—from medical scans and satellite images to security footage and personal snapshots. Manually tagging and searching these pictures is slow and unreliable. This paper presents a smarter way for computers to “look” at images directly and find the ones we want with high precision, even in very large and varied collections.

Why Looking at Pixels Isn’t Enough

Traditional image search often leans on filenames or simple tags such as “cat” or “building.” But people don’t always label images carefully, and computers see only raw pixels, not the rich meaning humans infer. Earlier content-based systems tried to bridge this gap using simple visual cues like color, texture, and shape. These cues helped, but they were usually combined with fixed weights, so the system treated some features as always more important than others, even when a particular search would benefit from a different mix. As a result, accuracy suffered when image types, lighting, or scenes changed.

Blending Many Ways of Seeing

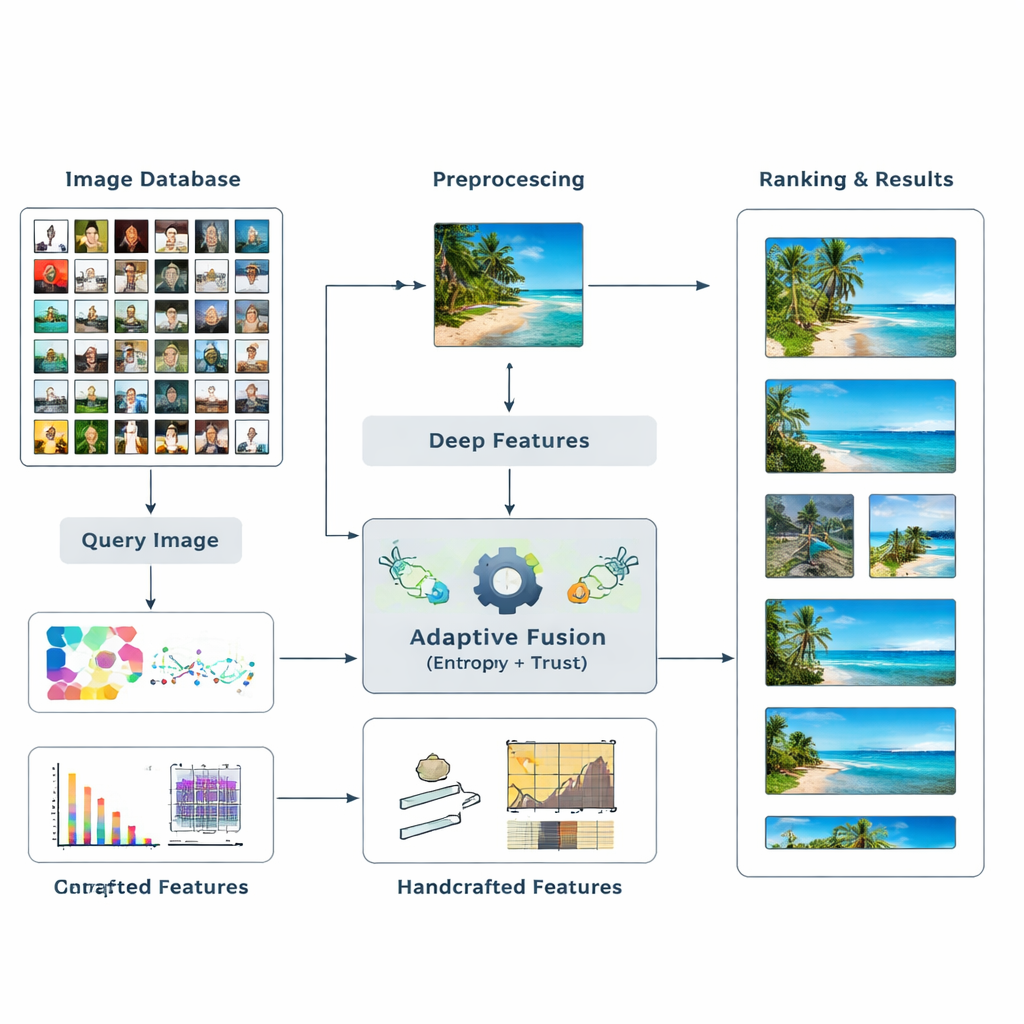

The authors propose a new retrieval framework that fuses two main kinds of visual evidence. First, it uses deep learning models—well-known networks such as ResNet50 and VGG16—that have learned to recognize complex patterns in images. Second, it adds classic “handcrafted” descriptors that capture color distributions, edges, and textures in a more controlled way. Instead of guessing in advance how much each type of feature should matter, the system lets the data decide. It measures how informative each feature is for a given search and adjusts their influence on the fly. This multi-level blend of high-level and low-level cues helps the computer form a richer, more flexible understanding of what’s in an image.
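To make the idea of keeping several kinds of features side by side more concrete, here is a minimal sketch in Python. It is not the authors' implementation. The function names (`color_histogram`, `cosine_similarity`, `per_feature_similarities`) are hypothetical, the "deep" vectors are stand-ins for embeddings that a network such as ResNet50 might produce, and cosine similarity is an assumed choice of comparison measure.

```python
import math

def color_histogram(pixels, bins_per_channel=4):
    """Quantise (r, g, b) pixels (0-255) into a normalised joint colour histogram."""
    hist = [0.0] * (bins_per_channel ** 3)
    for r, g, b in pixels:
        rb = r * bins_per_channel // 256
        gb = g * bins_per_channel // 256
        bb = b * bins_per_channel // 256
        hist[(rb * bins_per_channel + gb) * bins_per_channel + bb] += 1.0
    total = sum(hist) or 1.0
    return [h / total for h in hist]

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def per_feature_similarities(query, candidate):
    """Compare two images feature by feature; each argument maps feature name -> vector."""
    return {name: cosine_similarity(query[name], candidate[name]) for name in query}

# Toy images: a pure red patch and a mostly red patch with some blue.
red_image = [(250, 10, 10)] * 16
reddish_image = [(240, 20, 5)] * 12 + [(10, 10, 250)] * 4

# The "deep" vectors are made-up placeholders for learned embeddings.
query = {"deep": [0.9, 0.1, 0.3], "color": color_histogram(red_image)}
candidate = {"deep": [0.8, 0.2, 0.4], "color": color_histogram(reddish_image)}

sims = per_feature_similarities(query, candidate)
print(sims)
```

Each feature type yields its own similarity score. How those scores are weighted against one another is a separate decision, which is where the adaptive weighting described in the article comes in.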

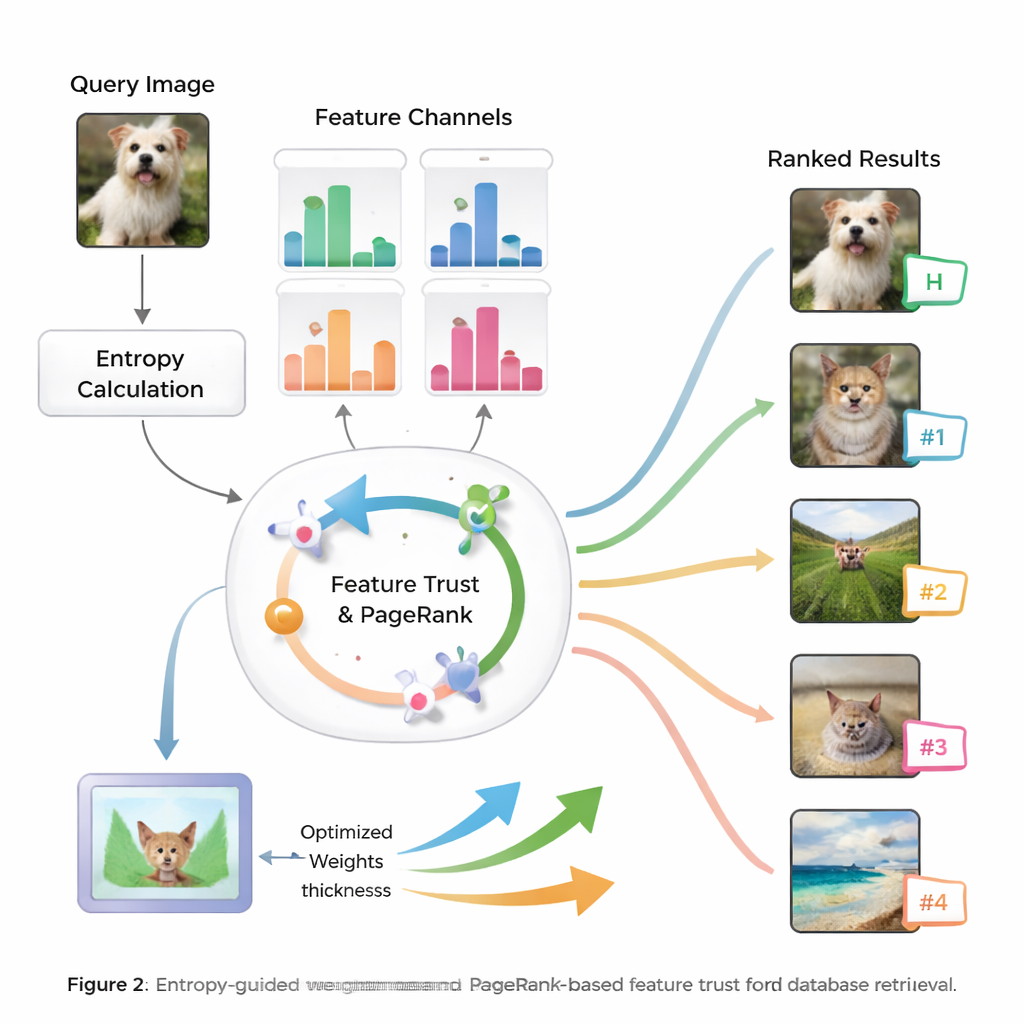

Letting Information and Trust Set the Weights

At the heart of the method is the idea of entropy, a measure of how uncertain or spread out information is. Features that consistently separate relevant from irrelevant images have lower entropy and are treated as more “discriminative.” For a new query, the system evaluates how each feature behaves across the database and assigns it an initial importance score. It then examines how reliable each feature’s search results are, that is, whether the top matches really look like the query, and builds a notion of “trust” for each type of cue. These trust scores are fed into a probability transfer network, a PageRank-like process similar to the way early web search engines decided which pages were most important, which refines the feature weights.
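The two steps in this section can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the paper's algorithm: the initial weight is assumed to be one minus the normalised Shannon entropy of a feature's similarity scores, "trust" between two features is assumed to be the overlap (Jaccard index) of their top-k results, and the refinement is a standard damped PageRank-style iteration. All function names, the damping value, and the toy scores are hypothetical.

```python
import math

def normalized_entropy(scores):
    """Shannon entropy of non-negative scores, scaled to [0, 1]."""
    total, n = sum(scores), len(scores)
    if total <= 0 or n < 2:
        return 1.0
    probs = [s / total for s in scores]
    return -sum(p * math.log(p) for p in probs if p > 0) / math.log(n)

def entropy_weights(scores_by_feature):
    """Assumed rule: lower entropy (more peaked scores) gives a larger initial weight."""
    raw = {name: 1.0 - normalized_entropy(s) for name, s in scores_by_feature.items()}
    total = sum(raw.values())
    if total <= 0:
        return {name: 1.0 / len(raw) for name in raw}
    return {name: r / total for name, r in raw.items()}

def top_k(scores, k):
    """Indices of the k highest-scoring database images."""
    return set(sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k])

def refine_weights(scores_by_feature, prior, k=3, damping=0.85, iterations=50):
    """PageRank-style refinement: features pass weight to features they agree with."""
    names = list(scores_by_feature)
    tops = {n: top_k(scores_by_feature[n], k) for n in names}
    # Assumed trust measure: Jaccard overlap between two features' top-k results.
    trust = {a: {b: len(tops[a] & tops[b]) / len(tops[a] | tops[b])
                 for b in names if b != a} for a in names}
    weights = dict(prior)
    for _ in range(iterations):
        new = {}
        for b in names:
            inflow = 0.0
            for a in names:
                out = sum(trust[a].values())
                if out > 0:
                    inflow += weights[a] * trust[a].get(b, 0.0) / out
                else:
                    # A feature that trusts nobody hands its weight back to the prior.
                    inflow += weights[a] * prior[b]
            new[b] = (1 - damping) * prior[b] + damping * inflow
        weights = new
    total = sum(weights.values())
    return {n: w / total for n, w in weights.items()}

# Toy similarity scores of one query against six database images.
scores = {
    "deep":    [0.95, 0.90, 0.20, 0.10, 0.05, 0.15],
    "color":   [0.80, 0.30, 0.75, 0.20, 0.25, 0.10],
    "texture": [0.50, 0.52, 0.48, 0.51, 0.49, 0.50],
}
initial = entropy_weights(scores)
refined = refine_weights(scores, initial)
print(initial)
print(refined)
```

In this toy setup the nearly flat "texture" scores receive almost no initial weight, because a flat score distribution says little about which images match. The refinement step then redistributes weight according to how much the features agree with one another, so the final values depend heavily on the assumed trust measure.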

From Smart Weights to Better Rankings

Once the system has learned how much to trust each feature for the current query, it combines their similarity scores into one overall measure for every image in the database. Images are then ranked by this comprehensive score, so those that match the query in the most meaningful ways rise to the top. The authors test their approach on widely used image benchmarks and compare it with several existing methods. They report gains of up to 8.6% in mean average precision, along with notable improvements in the quality of the top ten results, measured by both their accuracy and how well they are ordered. Statistical tests show that these improvements are unlikely to be due to chance, suggesting the system is both accurate and stable across many types of images.
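As a rough illustration of this final step, the sketch below combines per-feature similarity scores with a weighted sum, ranks the database by the fused score, and computes average precision for one query (mean average precision is this value averaged over many queries). The weighted sum is an assumed fusion rule, and the function names, weights, and scores are made up for the example; they are not taken from the paper.

```python
def fuse_and_rank(scores_by_feature, weights, top_n=10):
    """Weighted sum of per-feature similarities, then rank images best-first."""
    n_images = len(next(iter(scores_by_feature.values())))
    fused = [
        sum(weights[name] * scores[i] for name, scores in scores_by_feature.items())
        for i in range(n_images)
    ]
    ranking = sorted(range(n_images), key=lambda i: fused[i], reverse=True)
    return ranking[:top_n], fused

def average_precision(ranking, relevant):
    """Average of precision values at each rank where a relevant image appears."""
    hits, total = 0, 0.0
    for rank, idx in enumerate(ranking, start=1):
        if idx in relevant:
            hits += 1
            total += hits / rank
    return total / len(relevant) if relevant else 0.0

# Hypothetical per-query weights and similarity scores for four database images.
weights = {"deep": 0.6, "color": 0.4}
scores = {
    "deep":  [0.9, 0.2, 0.7, 0.1],
    "color": [0.8, 0.6, 0.1, 0.3],
}

ranking, fused = fuse_and_rank(scores, weights)
ap = average_precision(ranking, relevant={0, 1})
print(ranking)  # [0, 2, 1, 3]
print(ap)       # about 0.833: relevant images sit at ranks 1 and 3
```

Because the weights are set per query, the same database can be ranked differently depending on which features turn out to be informative for that particular search.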

What This Means for Everyday Image Search

In plain terms, this research shows how to make image search engines that adapt themselves to each question instead of relying on rigid rules. By letting information content and earned trust decide which visual cues matter most, the system can find the right images more often, whether it is spotting a fingerprint in a huge crime database, locating a specific building in satellite photographs, or pulling up the correct medical scan. The authors acknowledge that the method is computationally heavier than simpler systems, but argue that its higher reliability and accuracy make it well suited for large, critical image repositories where getting the right picture truly matters.

Citation: Lavanya, M., Vennira Selvi, G., Gopi, R. et al. Entropy guided multi level feature fusion network for high precision content based image retrieval. Sci Rep 16, 7449 (2026). https://doi.org/10.1038/s41598-026-38699-x

Keywords: content-based image retrieval, deep learning, feature fusion, image search, entropy weighting