Clear Sky Science

Adaptive multi-feature fusion architecture with optimized learning for high-fidelity brain tumor classification in MRI

Why spotting brain tumors early matters

Brain tumors are among the most dangerous cancers, and determining not just whether one is present but how aggressive it is can mean the difference between effective treatment and rapid decline. Doctors rely heavily on MRI scans, yet even experienced specialists struggle to distinguish slow‑growing tumors from fast, deadly ones when the images are noisy or low in contrast. This study presents an artificial intelligence system designed to read brain scans more clearly and consistently, aiming for near‑perfect separation between healthy brains and two major types of glioma, the most common primary brain tumors.

Cleaning up a blurry picture

Medical images are often far from perfect. Tumors may blur into surrounding tissue, and scanner noise can hide tiny but important details. The authors start by rebuilding the MRI pictures themselves. They first use a carefully tuned contrast adjustment method, making bright and dark areas in the scan more distinct so the edges of abnormal tissue stand out. Immediately afterward, they apply a deep neural network specialized for denoising, which has learned how to strip away speckled noise while keeping fine structures intact. Tests show that this two‑step clean‑up produces images that are sharper and structurally closer to the original anatomy than several standard enhancement techniques commonly used in hospitals.
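
To make the contrast step concrete, here is a minimal sketch of global histogram equalization, one simple way to spread gray levels apart. The summary does not name the exact contrast method the authors tuned, so this is a stand-in rather than their technique, and the learned denoising network is not reproduced. The function name `equalize_histogram` and the tiny test image are hypothetical.

```python
from collections import Counter


def equalize_histogram(image, levels=256):
    """Global histogram equalization for a 2-D list of integer gray values.

    Stand-in for the study's contrast step (assumption: the article does not
    name the method actually used).
    """
    pixels = [p for row in image for p in row]
    counts = Counter(pixels)

    # Cumulative count of pixels at or below each gray level that occurs.
    cdf = {}
    running = 0
    for level in sorted(counts):
        running += counts[level]
        cdf[level] = running

    cdf_min = min(cdf.values())
    total = len(pixels)
    if total == cdf_min:
        # Perfectly flat image: there is no contrast to stretch.
        return [row[:] for row in image]

    # Map the cumulative counts onto the full output range 0..levels-1.
    scale = (levels - 1) / (total - cdf_min)
    return [[round((cdf[p] - cdf_min) * scale) for p in row] for row in image]


# Hypothetical low-contrast patch: all values squeezed between 100 and 104.
low_contrast = [
    [100, 101, 102],
    [101, 102, 103],
    [102, 103, 104],
]
enhanced = equalize_histogram(low_contrast)
```

After equalization the darkest pixel maps to 0 and the brightest to 255, so values that were nearly indistinguishable now span the full gray range. Locally adaptive variants work on small tiles instead of the whole image, which tends to suit scans with uneven brightness.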

Teaching computers to see what doctors see

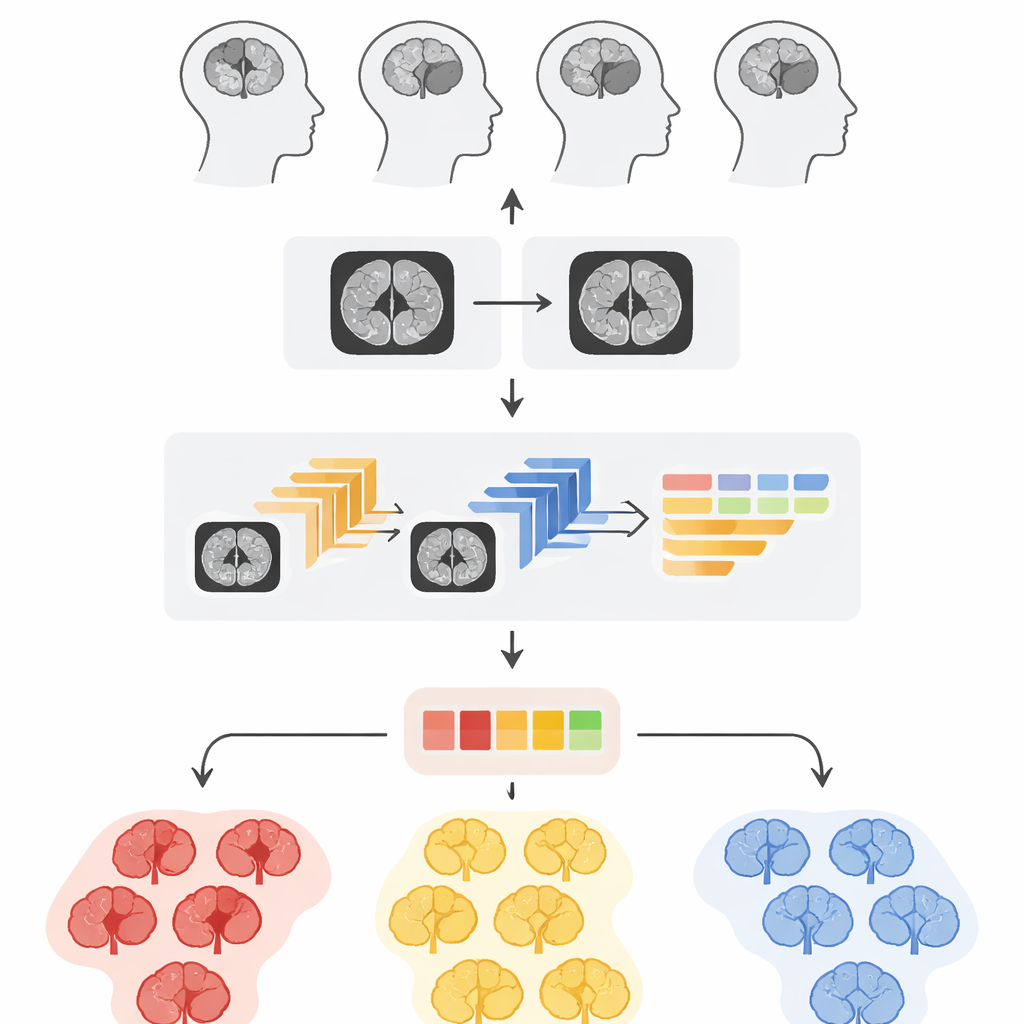

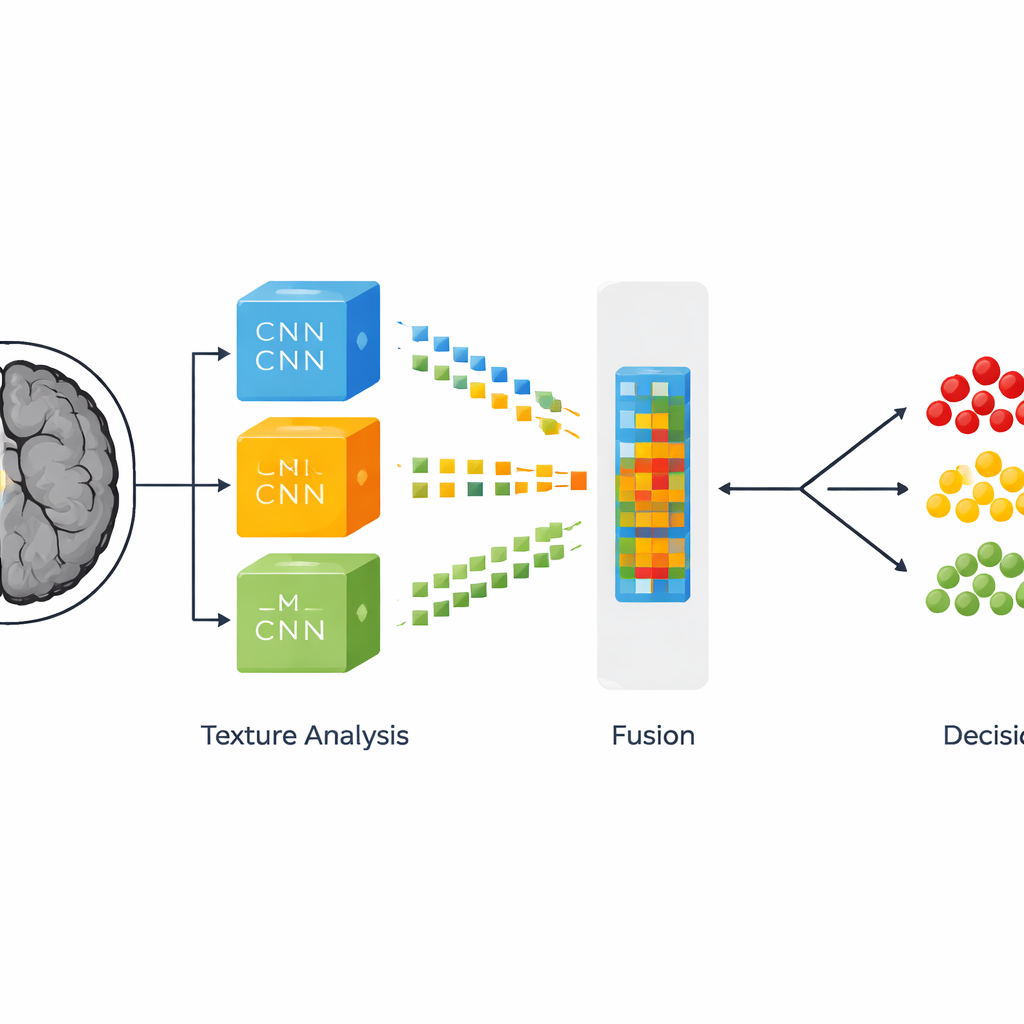

Once the MRI slices are cleaned and resized, the system tackles the more subtle question: is this brain healthy, home to a slow‑growing tumor, or threatened by an aggressive one? To do this, the researchers combine two ways of describing each image. The first comes from three powerful, pre‑trained neural networks originally built for general image recognition and then fine‑tuned for brain scans. These networks learn to spot large‑scale patterns such as shapes and regions that resemble tumors. The second description focuses on texture—tiny variations in brightness and granularity that often distinguish one tumor grade from another. This texture analysis uses a classic statistical tool that counts how often different shades of gray appear next to each other, turning subtle surface patterns into numbers that a computer can understand.
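
The texture description above matches what is usually called a gray-level co-occurrence matrix (GLCM); the summary does not name the tool, so treat that identification as an assumption. The sketch below counts horizontally adjacent gray-level pairs in a tiny hypothetical image and derives three common texture measures. The function names, the choice of offset, and the specific measures are illustrative, not taken from the study.

```python
def cooccurrence_matrix(image, levels, dx=1, dy=0):
    """Count how often gray level i has gray level j at offset (dx, dy)."""
    glcm = [[0] * levels for _ in range(levels)]
    rows, cols = len(image), len(image[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dy, c + dx
            if 0 <= r2 < rows and 0 <= c2 < cols:
                glcm[image[r][c]][image[r2][c2]] += 1
    return glcm


def texture_features(glcm):
    """Turn raw pair counts into a few summary numbers."""
    total = sum(sum(row) for row in glcm)
    probs = [[v / total for v in row] for row in glcm]
    n = len(probs)
    pairs = [(i, j) for i in range(n) for j in range(n)]
    return {
        # Large when neighboring pixels differ strongly in brightness.
        "contrast": sum(probs[i][j] * (i - j) ** 2 for i, j in pairs),
        # Large when a few pair types dominate (uniform texture).
        "energy": sum(probs[i][j] ** 2 for i, j in pairs),
        # One common definition; large when neighbors are similar.
        "homogeneity": sum(probs[i][j] / (1 + abs(i - j)) for i, j in pairs),
    }


# Hypothetical 4x4 image with four gray levels (0 to 3).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 2, 2, 2],
    [2, 2, 3, 3],
]
glcm = cooccurrence_matrix(image, levels=4)
features = texture_features(glcm)
```

In practice the matrix is usually computed for several offsets and directions, and real scans are first quantized to a small number of gray levels to keep the matrix compact.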

Blending many clues into one verdict

Rather than choosing between deep learning and texture analysis, the authors fuse them. From each of the three neural networks they select three especially informative internal layers and flatten their complex activation patterns into long feature lists. Each of these nine sets is then combined with the corresponding texture measurements, forming what the authors call fused feature representations. These hybrid fingerprints of the MRI slice are then passed to several different decision‑making algorithms, including random forests, boosted decision trees, and support vector machines, as well as a stacked ensemble that mixes their outputs. By exploring many combinations, the team identifies which blend of features and classifier makes the most reliable calls across thousands of images.
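
The fusion step can be sketched as flattening each layer's activations into a flat list and appending the texture measurements. Everything below is illustrative: the network and layer names, the activation sizes, and the texture values are hypothetical placeholders, and the classifiers themselves are not shown.

```python
def flatten(nested):
    """Flatten an arbitrarily nested list of numbers into one flat list."""
    flat = []
    for item in nested:
        if isinstance(item, list):
            flat.extend(flatten(item))
        else:
            flat.append(item)
    return flat


def fuse(activation, texture):
    """Concatenate flattened deep features with texture features."""
    return flatten(activation) + list(texture)


def fake_activation(seed):
    """Hypothetical stand-in for a layer output: 2 channels of 2x2 values."""
    return [[[seed + c + r + k for k in range(2)] for r in range(2)]
            for c in range(2)]


# Placeholder texture measurements for one MRI slice (made-up values).
texture = [0.58, 0.17, 0.81]

# Three networks times three selected layers gives nine activation sets.
activations = {
    (f"network_{n}", f"layer_{l}"): fake_activation(n * 3 + l)
    for n in range(3)
    for l in range(3)
}

# Each of the nine sets is fused with the same slice-level texture vector.
fused = {key: fuse(act, texture) for key, act in activations.items()}
```

Each fused vector would then be handed to a classifier. Because deep features and texture measures can live on very different numeric scales, scaling them before concatenation is a common precaution, though the summary does not say whether the authors did so.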

Measuring reliability, not just raw accuracy

To judge how well their system works, the researchers do more than quote a single accuracy number. They calculate how often the system correctly flags diseased scans (sensitivity), how often it correctly clears normal scans (specificity), and how rarely it raises a false alarm. Their best configuration, which combines features from one particular neural network layer with texture data and classifies them with a support vector machine, correctly labels about 99 out of every 100 images. It also shows very high confidence that a positive result truly means a tumor is present and that a negative result truly indicates no dangerous growth. Statistical tests indicate that this top‑performing setup is not just lucky but significantly better than the alternative classifiers they tried.
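
The reliability measures described above can be computed from simple counts of right and wrong calls. The sketch below treats one class as "positive" and the rest as "negative" (a one-vs-rest view, which is an assumption about how a three-class problem would be scored). The labels and predictions are made-up toy data, not results from the study, and the function name is hypothetical.

```python
def one_vs_rest_metrics(y_true, y_pred, positive):
    """Reliability measures for one class against all the others."""
    tp = fp = tn = fn = 0
    for truth, guess in zip(y_true, y_pred):
        if guess == positive:
            if truth == positive:
                tp += 1  # correctly flagged
            else:
                fp += 1  # false alarm
        else:
            if truth == positive:
                fn += 1  # missed case
            else:
                tn += 1  # correctly cleared

    def ratio(num, den):
        return num / den if den else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),          # flagged among true positives
        "specificity": ratio(tn, tn + fp),          # cleared among true negatives
        "precision": ratio(tp, tp + fp),            # trust in a positive call
        "npv": ratio(tn, tn + fn),                  # trust in a negative call
        "false_positive_rate": ratio(fp, fp + tn),  # how often it cries wolf
    }


# Toy labels for eight scans (invented for illustration only).
y_true = ["healthy", "healthy", "low", "low", "high", "high", "high", "healthy"]
y_pred = ["healthy", "low", "low", "low", "high", "high", "low", "healthy"]

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
high_grade = one_vs_rest_metrics(y_true, y_pred, positive="high")
```

In this toy example overall accuracy is 0.75, yet sensitivity for the aggressive class is only two in three because one aggressive case was labeled as slow-growing. That is the kind of gap a single accuracy figure can hide.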

What this means for patients and clinics

In practical terms, the study demonstrates that a carefully designed blend of smarter image clean‑up, multiple deep learning models, and traditional texture analysis can provide near‑flawless sorting of brain MRI scans into healthy, slow‑growing tumor, and fast‑growing tumor categories. The complete pipeline can analyze a single scan slice in well under a second, suggesting it could slot into real‑world hospital workflows without delaying care. While the system does not replace expert radiologists, it could act as a dependable second pair of eyes, especially in busy emergency departments or regions with few specialists, helping ensure that aggressive tumors are recognized quickly and milder cases are not overtreated.

Citation: Safy, M., Abd-Ellah, M.K., Bayoumi, E.S. et al. Adaptive multi-feature fusion architecture with optimized learning for high-fidelity brain tumor classification in MRI. Sci Rep 16, 8498 (2026). https://doi.org/10.1038/s41598-026-38672-8

Keywords: brain tumor MRI, glioma grading, medical image AI, feature fusion, tumor classification