Clear Sky Science

FedSCOPE: Federated cross-domain sequential recommendation with decoupled contrastive learning and privacy-preserving semantic enhancement

Why smarter, safer recommendations matter

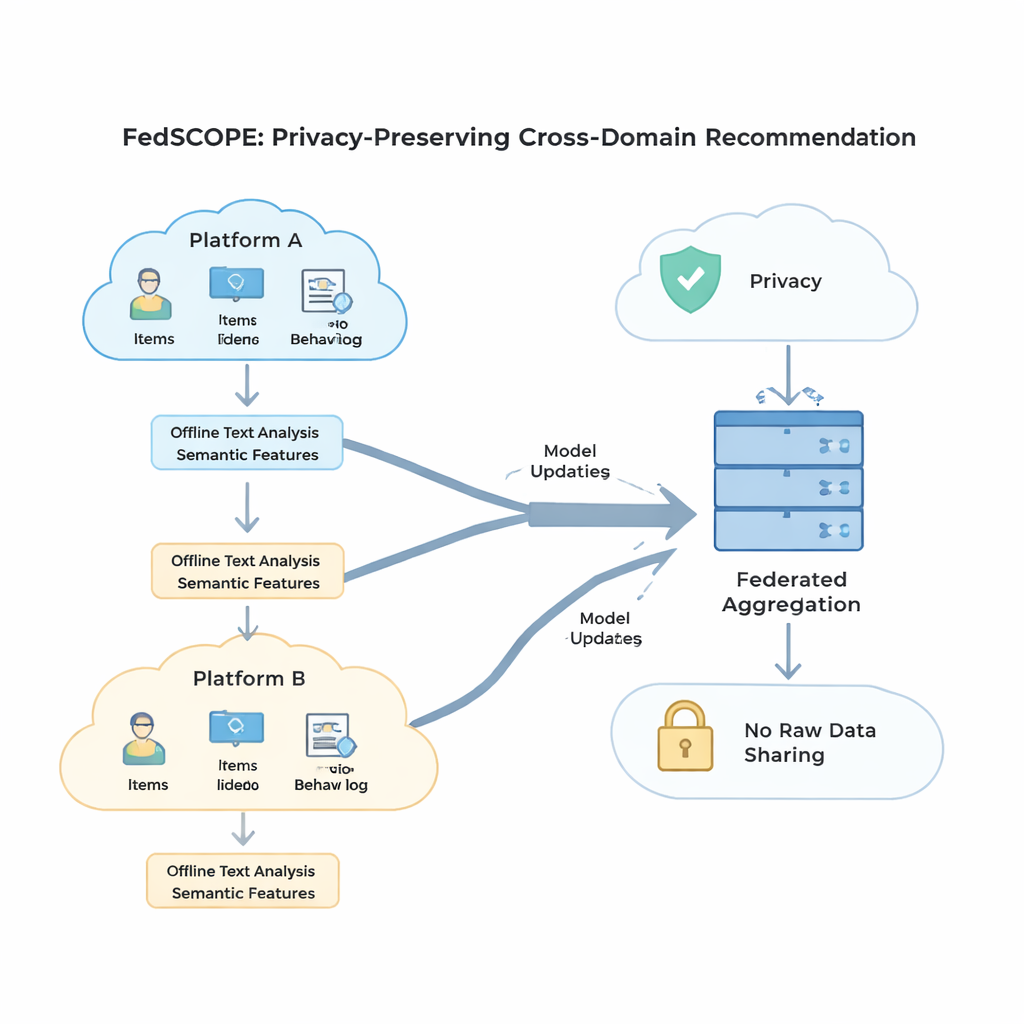

Every time you browse movies, shop online, or read reviews, recommendation systems quietly decide what to show you next. As our digital lives spread across many apps and websites, those systems could do a much better job if they could learn from all of your activity at once—without ever exposing your private data. This paper introduces FedSCOPE, a new way for different platforms to collaborate on recommendations that are both more accurate and more respectful of user privacy.

The trouble with today’s recommendation engines

Most current recommendation systems live inside a single app or website and only see a narrow slice of your behavior. That means they struggle with “cold-start” users who have little history, or with niche products that few people interact with. When companies try to combine data across domains—such as books and movies, or food and kitchen tools—they run into three big problems: data is often sparse, different platforms have very different types of users and activity, and strict privacy rules make it risky to pool raw data in one place. Simple fixes, like adding the same amount of privacy-preserving noise for everyone, tend to either weaken protection or severely hurt accuracy.

Letting language models fill in the blanks

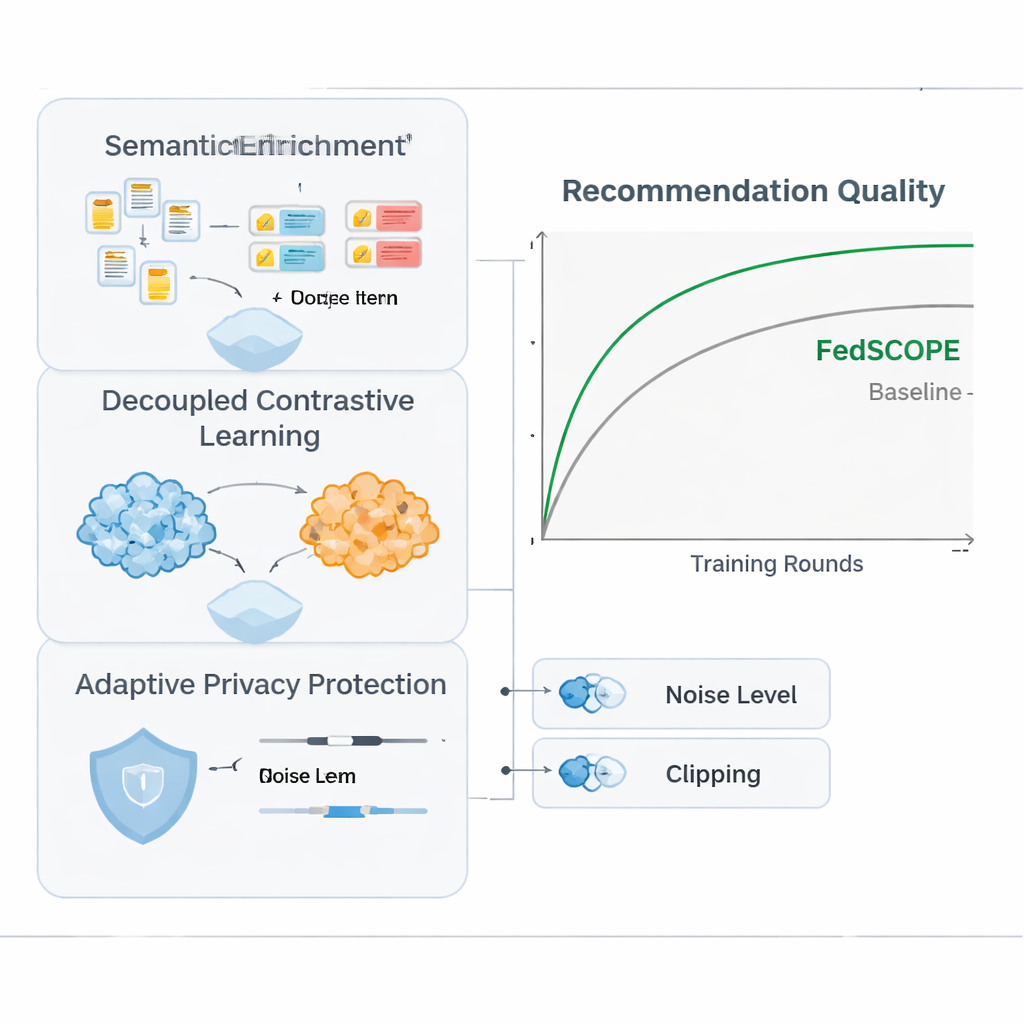

FedSCOPE tackles the sparsity problem by having each platform enrich its data using a large language model (LLM), but in an unusual, privacy-conscious way. Instead of sending user histories to a remote AI service during every recommendation, each client runs a one-time offline process: it feeds titles and basic item information (for example, a movie’s name and genre) to an LLM and asks for structured descriptions, such as likely themes, viewing habits, or related interests. These generated attributes stay on the local device or server and are fused with the usual click and viewing histories using a lightweight neural network. This gives the system a richer sense of both users and items, which is especially helpful when there are only a few recorded interactions. Because the process is offline and local, raw behavior never leaves the platform, and there is no ongoing dependence on external AI services.
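For readers who think in code, the one-time offline enrichment step can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: `stub_llm_describe` is a hypothetical stand-in for the LLM call, the hashed bag-of-words encoder is an assumed placeholder for a real text encoder, and the fixed `gate` in `fuse` is a simplification of the lightweight neural network described above.

```python
import hashlib
import math

def stub_llm_describe(title, genre):
    """Hypothetical stand-in for the one-time offline LLM call.
    A real client would prompt an LLM here and parse its structured reply."""
    return {"themes": [genre.lower(), "popular"],
            "related": ["more " + genre.lower()]}

def text_to_vector(text, dim=8):
    """Assumed placeholder encoder: hash each word into one of `dim`
    buckets, then scale the vector to unit length."""
    vec = [0.0] * dim
    for word in text.lower().split():
        bucket = hashlib.sha256(word.encode("utf-8")).digest()[0] % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

def build_semantic_cache(items):
    """Run once, offline, and keep the result on the client.
    `items` maps item id -> (title, genre); no user history is involved."""
    cache = {}
    for item_id, (title, genre) in items.items():
        attrs = stub_llm_describe(title, genre)
        text = " ".join(attrs["themes"] + attrs["related"])
        cache[item_id] = text_to_vector(text)
    return cache

def fuse(behavior_vec, semantic_vec, gate=0.5):
    """Simplified fusion: a fixed weighted sum of the two vectors.
    The article describes a learned lightweight network instead."""
    return [gate * b + (1.0 - gate) * s
            for b, s in zip(behavior_vec, semantic_vec)]

# Example: enrich one item's behavior embedding with cached semantics.
items = {"m1": ("Inception", "Sci-Fi"), "m2": ("Up", "Animation")}
cache = build_semantic_cache(items)
behavior = [0.5] * 8  # assumed embedding learned from click history
enriched = fuse(behavior, cache["m1"])
```

Only item titles and genres reach the language model in this sketch, and the cache is reused for every later recommendation, which mirrors the offline, local design the article describes.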

Separating what’s personal from what’s shared

To make use of behavior coming from multiple domains without mixing signals in a harmful way, FedSCOPE introduces a training strategy called decoupled contrastive learning. In simple terms, the system learns two things at once. First, inside each domain—say, just the movie side—it pulls together users who behave similarly and pushes apart those who do not, sharpening the sense of personal taste within that environment. Second, across domains, it aligns representations of the same user while keeping different users distinct, so that what you watch can help predict what you might read or buy, without blurring you with others. By handling these “within-domain” and “across-domain” goals separately, the method avoids a common pitfall where forcing everything into a single shared mold destroys fine-grained preferences.
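The two goals can be illustrated with a small sketch. It assumes an InfoNCE-style contrastive loss with cosine similarity, a common choice that the article does not confirm, and the names `info_nce`, `decoupled_loss`, `temperature`, and `inter_weight` are hypothetical. The point is only that the within-domain and across-domain terms are computed separately and then combined.

```python
import math

def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def info_nce(anchor, positive, negatives, temperature=0.2):
    """InfoNCE-style loss: small when the anchor is close to its positive
    and far from every negative."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))

def decoupled_loss(intra_terms, inter_terms, inter_weight=0.5):
    """Average the within-domain and across-domain losses separately,
    then combine them. Each term is (anchor, positive, negatives)."""
    intra = sum(info_nce(*t) for t in intra_terms) / len(intra_terms)
    inter = sum(info_nce(*t) for t in inter_terms) / len(inter_terms)
    return intra + inter_weight * inter, intra, inter

# Toy embeddings (assumed values, two dimensions for readability).
alice_movies = [1.0, 0.1]
similar_movie_user = [0.9, 0.2]
different_movie_user = [-0.8, 0.5]
alice_books = [0.95, 0.15]
bob_books = [-0.7, 0.6]

# Within the movie domain: pull similar users together.
intra_terms = [(alice_movies, similar_movie_user, [different_movie_user])]
# Across domains: align the same user, keep other users apart.
inter_terms = [(alice_movies, alice_books, [bob_books])]

total, intra, inter = decoupled_loss(intra_terms, inter_terms)
```

Keeping `intra` and `inter` as separate terms, rather than forcing every embedding into one shared objective, is the design choice the article refers to as decoupling.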

Protecting privacy without throwing away usefulness

Differential privacy is a formal mathematical guarantee that is usually achieved by adding random noise to model updates before they are shared with a central server. Many earlier systems used the same privacy settings for every participant, which is a poor fit when some clients have millions of users and others only a few thousand. FedSCOPE instead gives each client a personalized privacy budget and adapts how much it clips (caps the size of) and perturbs its updates based on its data size and past behavior. Large, data-rich platforms can contribute more precise information without being over-noised, while smaller ones are shielded more aggressively. All updates are then combined using secure aggregation, so the server never sees any individual contribution in the clear.
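A rough sketch of these ideas follows. It makes several assumptions that are not taken from the paper: the rule in `personalized_epsilon` (a larger budget for larger clients, scaled by the logarithm of data size) is hypothetical, the noise scale uses the classic Gaussian mechanism with the clipping norm treated as the sensitivity, and `mask_updates` is a toy version of secure aggregation in which pairs of clients share random masks that cancel in the sum.

```python
import math
import random

def personalized_epsilon(num_users, min_users, max_users,
                         eps_low=0.2, eps_high=0.9):
    """Hypothetical rule: map log data size linearly onto
    [eps_low, eps_high]. Assumes min_users < max_users."""
    span = math.log(max_users) - math.log(min_users)
    frac = (math.log(num_users) - math.log(min_users)) / span
    return eps_low + frac * (eps_high - eps_low)

def clip(update, clip_norm):
    """Scale the update down so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]

def gaussian_sigma(clip_norm, epsilon, delta=1e-5):
    """Classic Gaussian-mechanism noise scale (stated for epsilon < 1),
    treating clip_norm as the L2 sensitivity."""
    return clip_norm * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon

def privatize(update, clip_norm, epsilon, rng, delta=1e-5):
    """Clip the update, then add Gaussian noise to each coordinate."""
    sigma = gaussian_sigma(clip_norm, epsilon, delta)
    return [x + rng.gauss(0.0, sigma) for x in clip(update, clip_norm)]

def mask_updates(updates, rng):
    """Toy secure aggregation: for each pair (i, j), client i adds a
    shared random mask and client j subtracts it, so masks cancel
    when the server sums all masked updates."""
    masked = [list(u) for u in updates]
    for i in range(len(updates)):
        for j in range(i + 1, len(updates)):
            for k in range(len(updates[0])):
                m = rng.uniform(-100.0, 100.0)
                masked[i][k] += m
                masked[j][k] -= m
    return masked

# Example: a small and a large client get different budgets and noise.
rng = random.Random(0)
eps_small = personalized_epsilon(5_000, 1_000, 1_000_000)
eps_large = personalized_epsilon(800_000, 1_000, 1_000_000)
noisy_small = privatize([3.0, 4.0], clip_norm=1.0, epsilon=eps_small, rng=rng)
noisy_large = privatize([3.0, 4.0], clip_norm=1.0, epsilon=eps_large, rng=rng)
masked = mask_updates([noisy_small, noisy_large], rng)
server_sum = [sum(col) for col in zip(*masked)]
```

In this sketch the smaller client receives the smaller epsilon and therefore more noise, matching the article's description, while the server only ever works with `masked` updates whose sum equals the sum of the noisy originals.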

What the experiments show in practice

The authors tested FedSCOPE on real-world shopping data from Amazon, pairing domains like Movies with Books and Food with Kitchen. They compared it against a range of modern recommendation methods, including other privacy-preserving and cross-domain approaches. Across multiple accuracy measures, FedSCOPE consistently ranked at or near the top. It converged faster during training, worked better for users with very few past interactions, and held up well when the number of participating clients or the fraction sampled each round changed. Importantly, when the team tightened privacy constraints, FedSCOPE’s adaptive strategy kept performance much higher than systems using one-size-fits-all differential privacy.

What this means for everyday users

From a layperson’s perspective, FedSCOPE points toward a future where your favorite apps can collaborate to understand your tastes more deeply without ever pooling your raw data. By enriching sparse histories with language-model insight, carefully separating what is domain-specific from what is shared, and tuning privacy controls to each participant, the framework delivers recommendations that are both more relevant and more respectful of personal information. In practical terms, that could mean better suggestions for what to watch, read, or buy next—without having to trade away your digital privacy.

Citation: Zhao, L., Lin, Y., Qin, S. et al. FedSCOPE: Federated cross-domain sequential recommendation with decoupled contrastive learning and privacy-preserving semantic enhancement. Sci Rep 16, 7420 (2026). https://doi.org/10.1038/s41598-026-38628-y

Keywords: federated recommendation, privacy-preserving AI, cross-domain personalization, large language models, differential privacy