Clear Sky Science

Exploring teacher-student interaction through multimodal large language models: an empirical investigation

Why watching classrooms with AI matters

Anyone who has sat in a classroom knows that how teachers and students interact can make the difference between boredom and real learning. Yet it is surprisingly hard to study these moment‑to‑moment exchanges: observers get tired, human judgments differ, and video data quickly becomes overwhelming. This article explores how a new kind of artificial intelligence—multimodal large language models that can “look” at images and “read” text—can help researchers and schools make sense of complex classroom life in a faster and more objective way.

Turning real lessons into research data

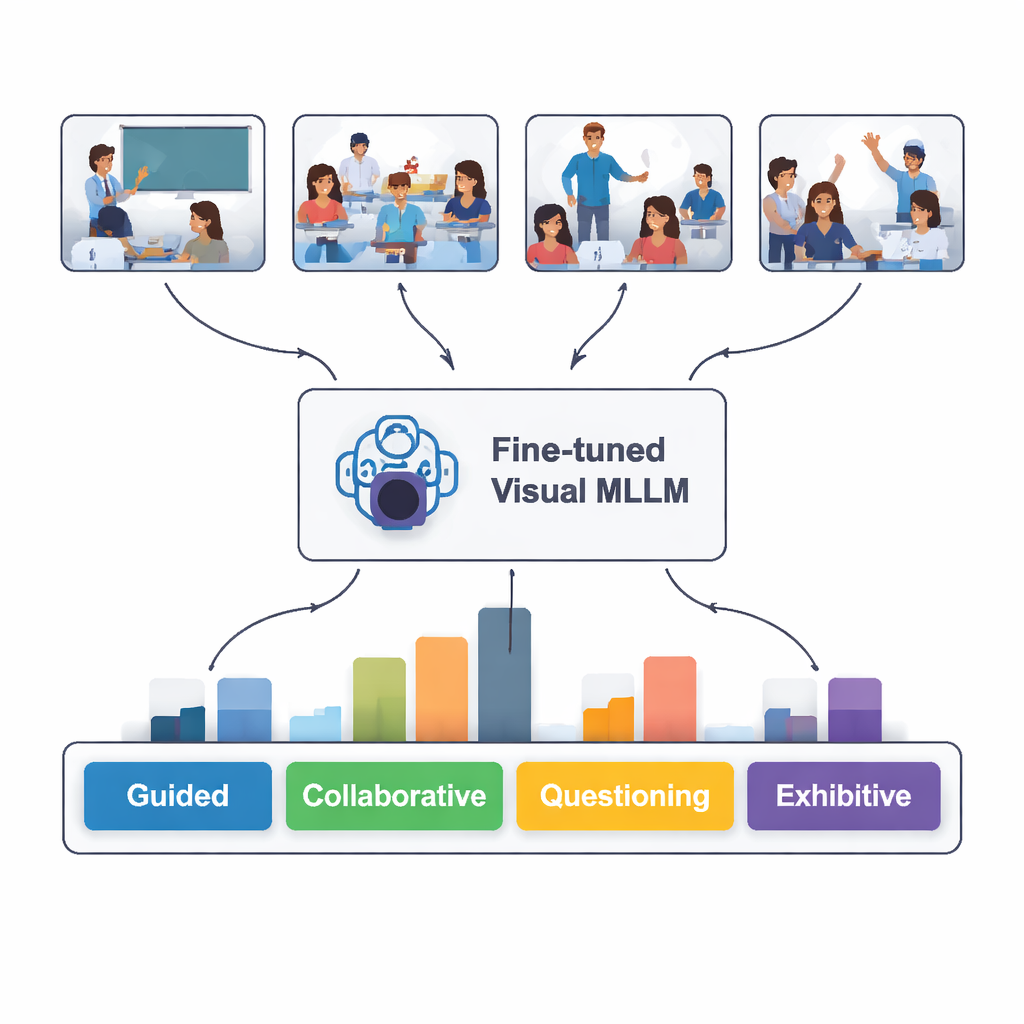

The researchers began with ordinary classroom videos from Chinese primary and secondary schools, publicly available on a national education platform. From 30 lessons they extracted nearly 2,400 still images that captured key moments of teaching and learning. Each image was labeled according to five easy‑to‑grasp patterns of interaction: guided (teacher explaining), collaborative (students working together), questioning (asking and answering), independent (students working alone), and exhibitive (students presenting to the class). Experts in educational technology helped refine these categories so they matched what experienced observers look for in real classrooms.
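For readers curious how such labels might be stored, here is a minimal Python sketch. The five category names come from the study; the class names, field names, and example file name are illustrative assumptions, not the authors' actual data format.

```python
from dataclasses import dataclass
from enum import Enum


class InteractionType(Enum):
    """The five interaction patterns named in the study."""
    GUIDED = "guided"                # teacher explaining
    COLLABORATIVE = "collaborative"  # students working together
    QUESTIONING = "questioning"      # asking and answering
    INDEPENDENT = "independent"      # students working alone
    EXHIBITIVE = "exhibitive"        # students presenting to the class


@dataclass(frozen=True)
class LabeledFrame:
    """One still image with its label (hypothetical record layout)."""
    lesson_id: str
    frame_file: str
    label: InteractionType


# Hypothetical example record; the identifiers are made up.
example = LabeledFrame("lesson_01", "frame_0001.png", InteractionType.GUIDED)
```

Using an enumeration rather than free text means a misspelled category fails immediately instead of silently creating a sixth label.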

Teaching an AI to see classroom dynamics

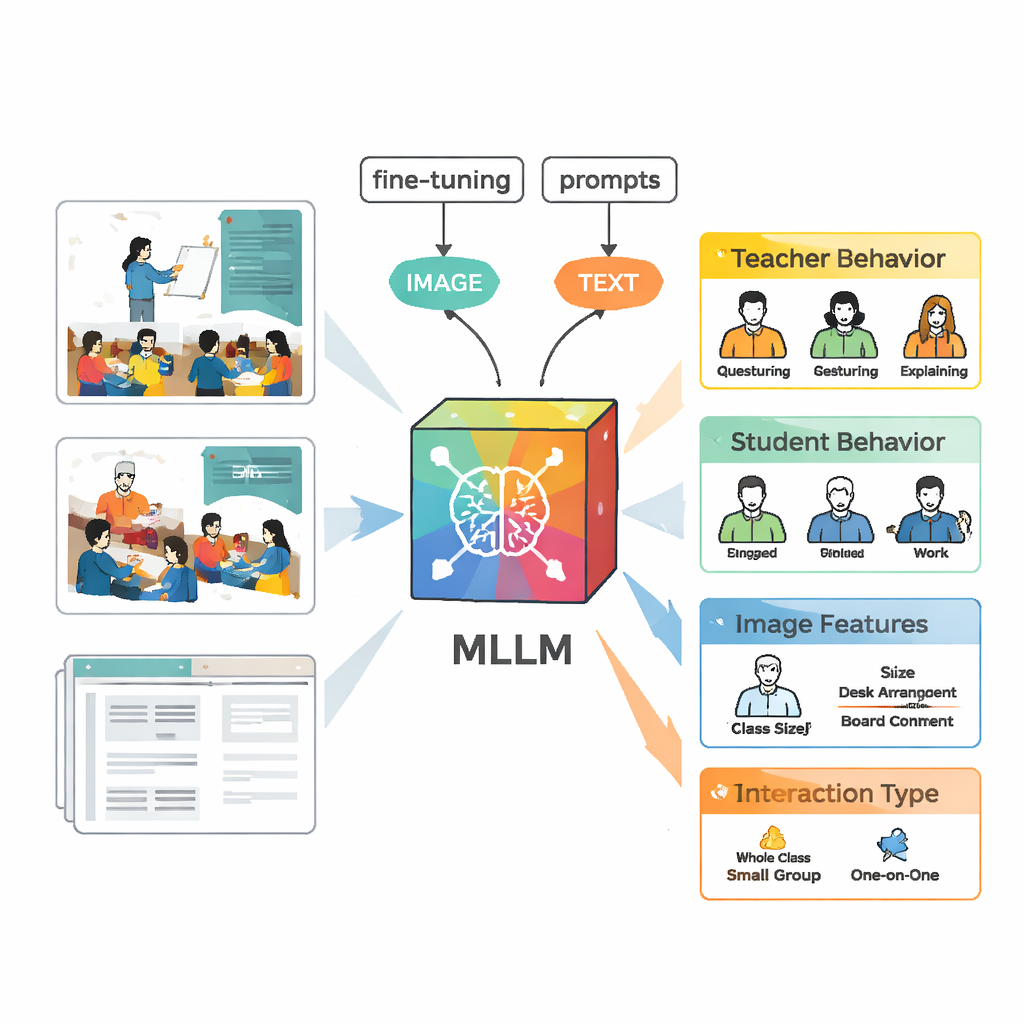

To analyze these scenes, the team used a multimodal large language model called VisualGLM‑6B, which can take both images and text as input. Because the original model was trained broadly and not specifically on classrooms, the researchers “fine‑tuned” it using their labeled images. They adopted a technique called LoRA (low‑rank adaptation), which leaves the model’s original weights frozen and trains only a small set of added parameters, so training is much cheaper than updating the whole model. They also designed careful prompts: structured instructions that tell the model to describe teacher behavior, student behavior, visual features, and the type of interaction in a consistent format, so that the output is easier to compare with human expert judgments.
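The idea behind LoRA can be shown with plain arithmetic. Rather than changing a large weight matrix W directly, LoRA keeps W frozen and learns two thin matrices, B and A, whose scaled product is added to W. The sketch below is a toy illustration in pure Python, not the authors' training code; the layer size of 4096 and rank of 8 are assumed values chosen only to show the scale of the savings.

```python
def lora_param_counts(d_out, d_in, rank):
    """Return (full, lora) trainable-parameter counts for one weight matrix."""
    full = d_out * d_in            # updating W itself
    lora = rank * (d_out + d_in)   # B is d_out x rank, A is rank x d_in
    return full, lora


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, rank):
    """Compute W x + (alpha / rank) * B (A x); W stays frozen, A and B are trained."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


# Assumed sizes, for illustration only.
full, lora = lora_param_counts(4096, 4096, 8)

# B starts at zero in LoRA, so the adapted layer initially matches the frozen one.
W = [[1, 0], [0, 1]]
A = [[1, 1]]        # rank 1: shape 1 x 2
B_zero = [[0], [0]]  # shape 2 x 1
y_start = lora_forward(W, A, B_zero, [2, 3], alpha=2, rank=1)
```

With these assumed sizes, the low-rank pair has 65,536 trainable values against 16,777,216 for the full matrix, which is 256 times fewer.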

Building better labels with humans and machines

Creating a high‑quality training set required more than just pointing the model at videos. First, VisualGLM‑6B produced basic descriptions of each image. Human annotators then corrected mistakes and filled in missing context, such as who was speaking or whether students were listening or discussing. Next, the team fed these polished descriptions into ChatGPT, which, guided by custom prompts, generated structured analyses following the five interaction categories. Experts then reviewed and edited these AI‑generated analyses. The final result was a rich dataset in which each image carried a detailed, reliable account of what teachers and students were doing.
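The article does not reproduce the prompts or the exact output format, so the following is only a sketch of how a structured analysis could be requested and checked before it reaches expert reviewers. The JSON layout, the field names, and the prompt wording are all assumptions made for illustration; only the five category names come from the study.

```python
import json

# Category names from the study.
INTERACTION_TYPES = {
    "guided", "collaborative", "questioning", "independent", "exhibitive",
}

# Hypothetical field names for one structured analysis.
REQUIRED_FIELDS = (
    "teacher_behavior", "student_behavior", "visual_features", "interaction_type",
)


def build_prompt(description):
    """Assemble a hypothetical instruction asking for a JSON analysis."""
    fields = ", ".join(REQUIRED_FIELDS)
    labels = ", ".join(sorted(INTERACTION_TYPES))
    return (
        "Analyze this classroom scene and reply with a JSON object "
        f"containing the keys: {fields}. "
        f"The interaction_type must be one of: {labels}.\n\n"
        f"Scene description: {description}"
    )


def parse_analysis(reply):
    """Parse a JSON reply and reject missing fields or unknown categories."""
    data = json.loads(reply)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    if data["interaction_type"] not in INTERACTION_TYPES:
        raise ValueError(f"unknown interaction type: {data['interaction_type']!r}")
    return data
```

A check like this catches malformed replies automatically, leaving human experts free to judge whether the description is actually right.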

How well did the AI “read” the classroom?

When tested on 100 new classroom images it had never seen, the fine‑tuned model correctly identified the interaction type 82 percent of the time, that is, in 82 of the 100 images. It performed best at recognizing guided, independent, and exhibitive situations, in which the teacher is clearly explaining, students are working quietly on their own, or a student is presenting up front. It struggled more with collaborative work and questioning, where body language and seating arrangements can be ambiguous even to people. A deeper text‑based comparison showed that the model’s written descriptions often matched expert analyses quite closely, though it occasionally “hallucinated” details that were not present in the images or misread a subtle gesture.
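The headline figure is simple arithmetic: the number of correct predictions divided by the number of test images. The sketch below shows how overall and per‑category accuracy could be computed from expert labels and model predictions. The short example lists are invented for illustration and are not the study's data.

```python
from collections import Counter


def accuracy(gold, pred):
    """Fraction of items where the predicted label equals the expert label."""
    if len(gold) != len(pred):
        raise ValueError("gold and pred must have the same length")
    if not gold:
        raise ValueError("no items to score")
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)


def per_class_accuracy(gold, pred):
    """Accuracy computed separately for each expert-assigned category."""
    if len(gold) != len(pred):
        raise ValueError("gold and pred must have the same length")
    total = Counter(gold)
    correct = Counter(g for g, p in zip(gold, pred) if g == p)
    return {label: correct[label] / count for label, count in total.items()}


# Invented toy labels, not results from the study.
gold = ["guided", "guided", "collaborative", "questioning", "independent"]
pred = ["guided", "guided", "questioning", "questioning", "independent"]

overall = accuracy(gold, pred)            # 4 of 5 correct
by_class = per_class_accuracy(gold, pred)
```

Breaking the score down by category is what reveals patterns like the one reported here, where some interaction types are recognized far more reliably than others.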

What this means for future classrooms

To a lay reader, the core message is that AI systems are becoming capable of watching classrooms and summarizing how teaching and learning unfold, with a level of structure and consistency that would be hard for humans to maintain over thousands of scenes. While not perfect—especially for subtle forms of discussion and questioning—the approach shows that multimodal large language models can already support educational research and, eventually, classroom feedback tools. As these models begin to include sound, gestures, and larger, more varied datasets, they may help teachers see patterns in their practice that were previously hidden, offering a new lens on how everyday interactions shape students’ learning.

Citation: Chen, G., Han, G., Niu, J. et al. Exploring teacher-student interaction through multimodal large language models: an empirical investigation. Sci Rep 16, 7602 (2026). https://doi.org/10.1038/s41598-026-38626-0

Keywords: teacher-student interaction, classroom analytics, multimodal AI, education technology, large language models