Clear Sky Science

Attention-based workload prediction and dynamic resource allocation for heterogeneous computing environments

Why Smarter Computers Matter for Everyone

Behind every movie you stream, map you open, or AI assistant you talk to, huge warehouses of computers quietly work around the clock. As artificial intelligence grows more powerful, these data centers are being pushed to their limits: they must juggle many kinds of jobs on many kinds of machines while keeping costs, speed, and energy use under control. This paper introduces a new way to predict what those computers will need in the near future and to shuffle work across different types of hardware so that services stay fast and reliable while wasting less electricity.

Many Jobs, Many Machines

Modern data centers no longer rely on a single kind of server. Instead, they combine traditional processors with powerful graphics chips, custom AI boards, and reprogrammable circuits. Different AI tasks—such as training a large language model, serving real-time recommendations, or analyzing images—fit these machines in very different ways. Today, operators often allocate resources using fixed rules or simple forecasts based on yesterday’s usage. When demand suddenly spikes, this can cause slow responses or broken service agreements; when demand drops, expensive hardware may sit idle, burning power but doing little work.

Learning to Look Where It Matters

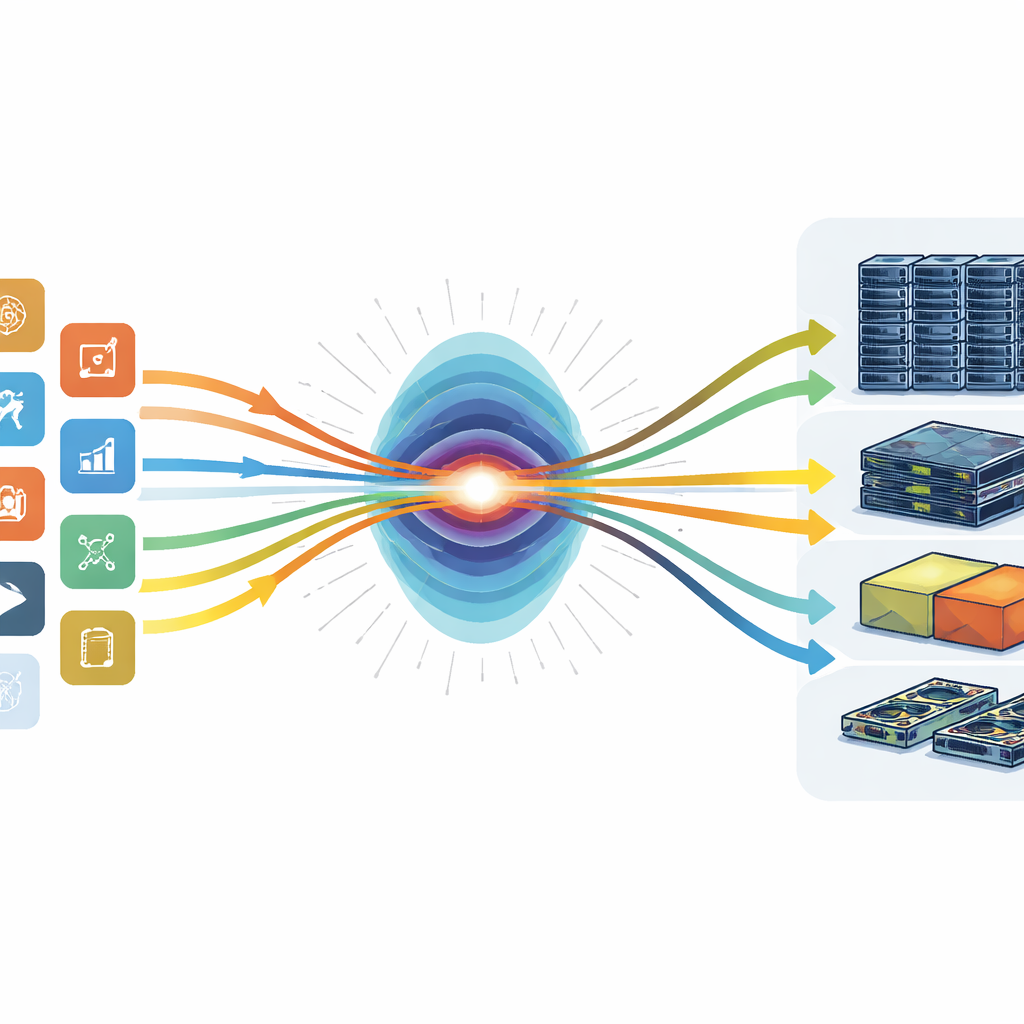

The authors borrow a concept from cutting-edge AI models called “attention” and apply it to data center management. Instead of treating all past usage data the same, their system learns which moments in time and which types of jobs are most useful for guessing what will happen next. One part of the model focuses on how each workload—like a training job or an online service—changes over time. Another part looks sideways across different workloads running at the same moment to uncover hidden connections, such as a pattern where finishing a batch of training jobs often leads to a surge in related online queries. By layering these two views, the system can forecast future demands for processors, memory, and accelerators more accurately than earlier methods.
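To make the idea of "attention" over past usage concrete, here is a minimal sketch in Python. It is not the authors' model: it swaps the learned attention layers for a fixed similarity score (closer to kernel regression), and it covers only the view across time, not the view across workloads. The function names `softmax` and `forecast_next` and the `sharpness` parameter are hypothetical, chosen for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def forecast_next(usage, sharpness=500.0):
    """Forecast the next usage value from a list of past values.

    Assumption (illustrative only): a past moment is "relevant" when
    its usage level is close to the current one. Each past moment is
    scored by that closeness, and the forecast is the weighted average
    of what happened right after each past moment.
    """
    if len(usage) < 2:
        raise ValueError("need at least two observations")
    query = usage[-1]    # the current usage level
    keys = usage[:-1]    # past usage levels
    values = usage[1:]   # what followed each past level
    scores = [-sharpness * (k - query) ** 2 for k in keys]
    weights = softmax(scores)
    forecast = sum(w * v for w, v in zip(weights, values))
    return forecast, weights

# A repeating pattern: low, medium, burst, low, medium, burst, low
usage = [0.2, 0.3, 0.9, 0.2, 0.3, 0.9, 0.2]
forecast, weights = forecast_next(usage)
```

In this toy series the current level (0.2) matches two earlier moments, each of which was followed by 0.3, so nearly all of the weight lands on those moments and the forecast comes out close to 0.3. A learned attention model would discover what counts as "relevant" from data instead of using a hand-picked similarity rule.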

Turning Predictions into Better Decisions

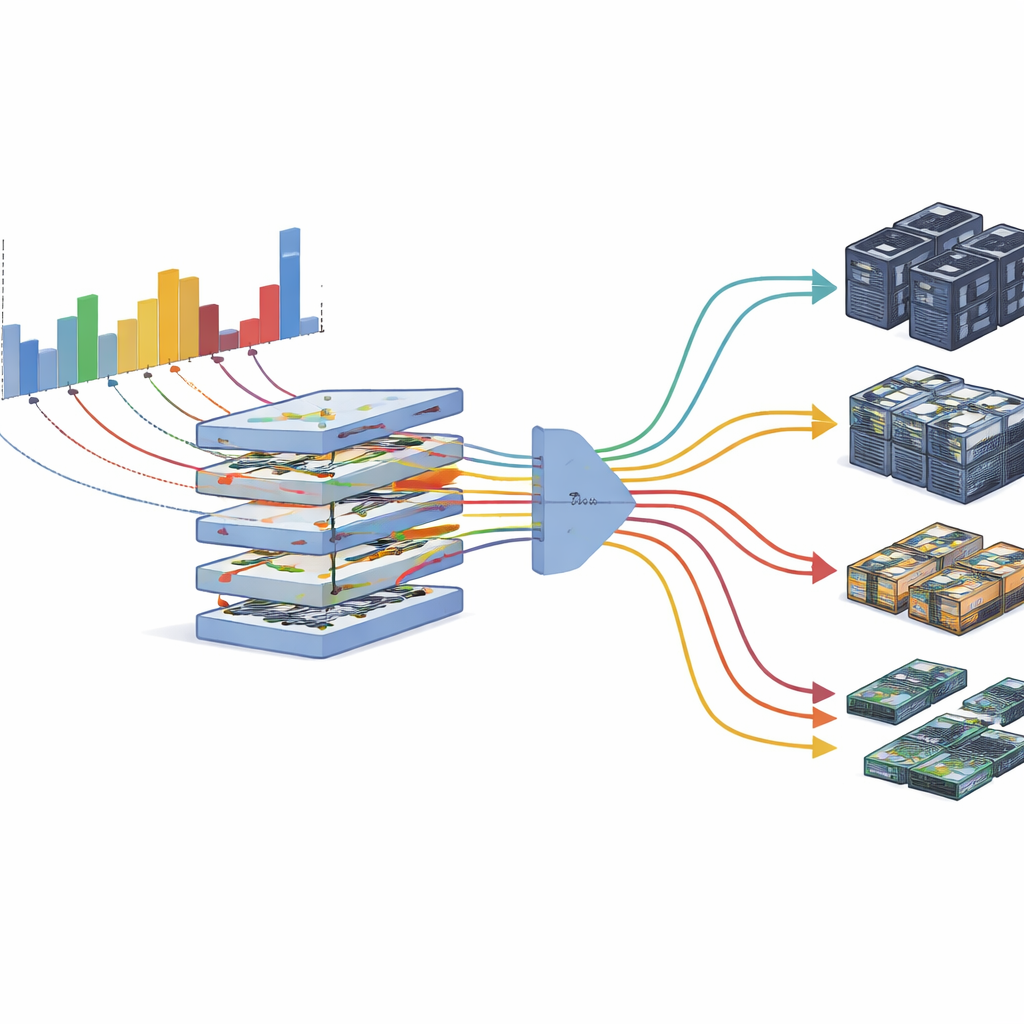

Prediction alone is not enough; the data center must act on it. The second half of the framework turns these forecasts into concrete decisions about where to run each job. The authors treat this as a balancing act among three goals: finishing jobs quickly, using as little energy as possible, and keeping machines busy rather than idle. Their scheduler represents the data center as a network of different devices and uses an optimization procedure to choose placements that trade off these goals according to operator preferences. Because forecasts are never perfect, the system also estimates its own uncertainty and leaves safety margins when needed. It then monitors actual usage in real time and, when usage drifts from expectations, adjusts by pausing low-priority work or moving jobs.
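The balancing act described here can be illustrated with a small Python sketch. It is a simplification under stated assumptions, not the paper's optimization procedure: it scores each device with a plain weighted sum of the three goals and picks the cheapest one that fits. The `Device` fields, the `place_job` function, the `margin_k` safety factor, and all numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Device:
    name: str
    free_capacity: float  # unused capacity, arbitrary units
    speedup: float        # how much faster this device runs the job
    watts: float          # power draw while running the job

def place_job(devices, demand_mean, demand_std, base_runtime,
              weights=(1.0, 0.01, 1.0), margin_k=2.0):
    """Pick a device for one job, or return None if nothing fits.

    Assumptions (illustrative only):
    - the forecast gives a mean demand and a standard deviation;
    - the safety margin reserves mean + margin_k * std;
    - the operator's preferences are the three weights, which also
      absorb the different units of time, energy, and capacity.
    """
    w_time, w_energy, w_idle = weights
    reserved = demand_mean + margin_k * demand_std
    best, best_cost = None, float("inf")
    for d in devices:
        if reserved > d.free_capacity:
            continue  # not enough room once the margin is included
        runtime = base_runtime / d.speedup
        energy = runtime * d.watts
        idle_left = d.free_capacity - reserved
        cost = w_time * runtime + w_energy * energy + w_idle * idle_left
        if cost < best_cost:
            best, best_cost = d, cost
    return best, reserved

devices = [
    Device("cpu", free_capacity=10.0, speedup=1.0, watts=100.0),
    Device("gpu", free_capacity=4.0, speedup=4.0, watts=300.0),
]
# Confident forecast: the job fits on the faster device.
confident, _ = place_job(devices, 3.0, 0.25, base_runtime=8.0)
# Uncertain forecast: the larger margin no longer fits there.
uncertain, _ = place_job(devices, 3.0, 1.0, base_runtime=8.0)
```

With these made-up numbers, the confident forecast sends the job to the faster device, while the less certain forecast reserves more room and falls back to the device with spare capacity. Changing the weights shifts the trade-off among speed, energy, and idle capacity.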

Putting the System to the Test

To see how this approach works in practice, the researchers built a test cluster with a realistic mix of processors, GPUs, and specialized AI hardware, and fed it detailed activity records from real-world data centers at Google, Alibaba, and an academic lab. They compared their method against popular forecasting tools and scheduling strategies, including techniques used in production systems and reinforcement learning-based controllers. The attention-based predictor consistently made more accurate guesses, especially for the sharp bursts that often occur in AI workloads. When coupled with their dynamic allocator, the system raised overall hardware use to about four-fifths of capacity, cut average job completion time by roughly a quarter, and lowered energy consumption by about 15 percent, all while keeping service violations to a very low level.

What This Means for Everyday Users

For non-specialists, the main takeaway is that smarter coordination inside data centers can make AI services faster, cheaper, and greener without requiring new chips or buildings. By learning where to “pay attention” in the flood of usage data, this framework helps existing hardware do more useful work and sit idle less often. That means companies can deliver snappier apps and more powerful AI tools while holding down electricity bills and carbon footprints. As similar prediction-and-allocation systems spread and mature, the invisible machinery of the internet may become not just more capable but also more sustainable.

Citation: Shao, S., Ding, X., Zhao, B. et al. Attention-based workload prediction and dynamic resource allocation for heterogeneous computing environments. Sci Rep 16, 8571 (2026). https://doi.org/10.1038/s41598-026-38622-4

Keywords: data center scheduling, AI workload prediction, heterogeneous computing, energy-efficient computing, resource allocation