Clear Sky Science

Error characterization and error correction approaches in combinatorial DNA-based storage

Storing the world’s data in DNA

Our phones, servers, and cloud centers are drowning in information, and traditional storage technologies are struggling to keep up. DNA—the same molecule that carries genetic information in living things—offers an enticing alternative: it is incredibly dense, long‑lasting, and needs almost no power to preserve. This paper explores a particularly powerful flavor of DNA data storage, called combinatorial DNA encoding, and shows how a new kind of error correction can make it far more reliable in practice.

How to pack more bits into DNA

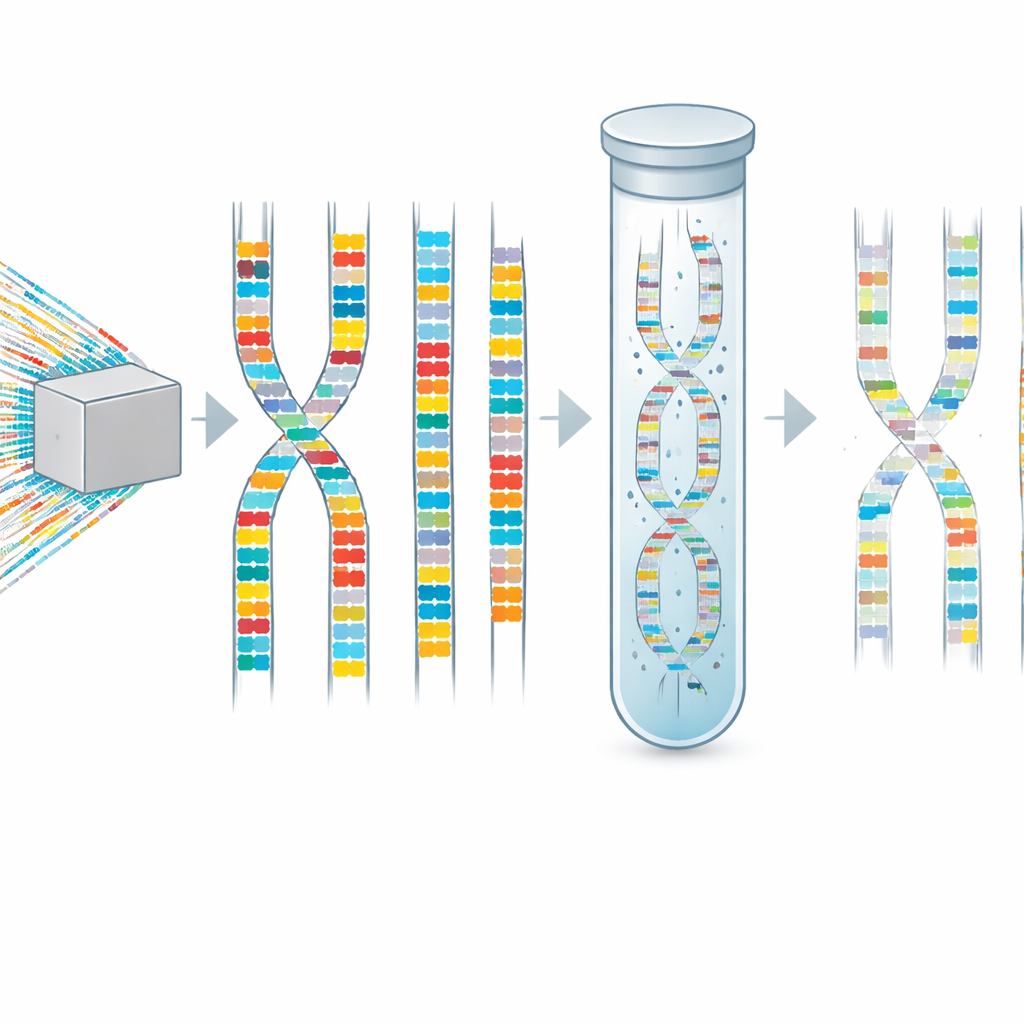

Conventional DNA storage writes data by choosing one of four bases (A, C, G, T) at each position along a synthetic DNA strand, so each position carries at most two bits. Combinatorial DNA encoding takes a different approach: each position in a digital message is represented not by a single short sequence but by a carefully chosen set of short fragments drawn from a predefined library. Because the number of possible sets can be far larger than four, this greatly increases how much information each synthesis step carries, reducing cost and time. However, it also means that to correctly read a single “letter” of the stored message, the system must detect every fragment that should be present at that position.
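To make the density gain concrete, the sketch below counts how many bits one position can carry when a letter is a fixed-size subset of a fragment library. The library size of 16 and the subset sizes are illustrative assumptions, not values taken from the paper, and `bits_per_position` is a hypothetical helper name.

```python
from math import comb, log2

# One of four bases per position carries log2(4) = 2 bits.
BITS_PER_BASE = log2(4)


def bits_per_position(library_size: int, subset_size: int) -> float:
    """Bits carried by one position when each letter is a subset of
    `subset_size` fragments chosen from `library_size` candidates."""
    if not 0 < subset_size <= library_size:
        raise ValueError("subset_size must be between 1 and library_size")
    return log2(comb(library_size, subset_size))


if __name__ == "__main__":
    # Assumed library of 16 fragments; subset sizes chosen for illustration.
    for k in (1, 4, 8):
        print(f"16 choose {k}: {bits_per_position(16, k):.2f} bits per position")
```

Under these assumed numbers, choosing 4 of 16 fragments gives roughly 10.8 bits per position, compared with 2 bits for a single base.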

When some pieces quietly disappear

Because DNA molecules are produced and read in large numbers, the same combinatorial sequence appears many times, each copy made and read with small imperfections. The authors examined several experimental datasets and discovered that a specific kind of mistake dominates in combinatorial DNA storage: erasure of a single fragment from an otherwise correct combination. In other words, one member of the set is simply never observed in the sequencing reads, even though the others are present. These “asymmetric combinatorial erasures” become especially common when the number of reads per stored sequence is low—a realistic situation in large-scale systems, where sequencing more deeply is expensive. Below about 50 reads per sequence, the frequency of such missing pieces rises sharply, making it difficult or impossible to reconstruct the intended data using standard methods.
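A toy model helps show why low coverage produces missing fragments. The sketch below assumes that each read at a position reveals one of the `k` fragments in the combination uniformly and independently, which is a simplification and not the paper's measured error model. Under that assumption, the chance that every fragment is seen at least once follows from inclusion-exclusion (the coupon-collector problem). The function names are hypothetical.

```python
from math import comb


def prob_all_observed(k: int, reads: int) -> float:
    """Probability that all k fragments appear at least once in `reads`
    independent, uniformly distributed reads (inclusion-exclusion)."""
    return sum(
        (-1) ** j * comb(k, j) * ((k - j) / k) ** reads
        for j in range(k + 1)
    )


def prob_some_missing(k: int, reads: int) -> float:
    """Probability that at least one of the k fragments is never observed."""
    return 1.0 - prob_all_observed(k, reads)


if __name__ == "__main__":
    # k = 5 fragments per letter is an assumed value for illustration.
    for reads in (5, 10, 20, 50):
        print(reads, round(prob_some_missing(5, reads), 4))
```

In this idealised setting with five fragments per letter, the chance of losing at least one fragment is close to one half at 10 reads and falls below one in a thousand at 50 reads. Real data will differ, since fragments are not observed uniformly, but the steep dependence on coverage is the same qualitative effect described above.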

Probing errors at larger scale

To move beyond small demonstrations, the team collaborated with an industrial partner to build a large proof‑of‑concept storage system using combinatorial DNA. They encoded thousands of bits of text into 640 distinct combinatorial sequences, each spanning eight positions that carry information. Specialized laboratory protocols assembled pools of DNA molecules where each molecule represented one combination of short fragments. The researchers then sequenced millions of reads and used a customized analysis pipeline based on BLAST, a well‑known sequence alignment tool, to find which fragments appeared at each position. This large dataset confirmed the earlier observation: when read coverage was high, most combinations could be reconstructed, but when the average number of reads per sequence dropped, missing fragments—and thus erasure errors—became the main obstacle to accurate decoding.
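The final step of such a pipeline, deciding which fragments are present at a position, can be sketched as a simple counting rule. This is a minimal illustration and not the authors' pipeline: it assumes the alignment step has already produced a list of fragment identifiers for one position, and `call_letter`, `subset_size`, and `min_reads` are hypothetical names.

```python
from collections import Counter
from typing import FrozenSet, Iterable, Tuple


def call_letter(
    fragment_hits: Iterable[str],
    subset_size: int,
    min_reads: int = 1,
) -> Tuple[FrozenSet[str], int]:
    """Call the fragments present at one position from alignment hits.

    Keeps the `subset_size` most frequently observed fragments that have at
    least `min_reads` supporting reads, and reports how many fragments are
    still missing (candidate erasures).
    """
    counts = Counter(fragment_hits)
    supported = [frag for frag, n in counts.most_common() if n >= min_reads]
    called = frozenset(supported[:subset_size])
    missing = subset_size - len(called)
    return called, missing


if __name__ == "__main__":
    # Hypothetical hits for one position whose letter should hold 3 fragments.
    hits = ["f1", "f3", "f1", "f3", "f1"]
    called, missing = call_letter(hits, subset_size=3)
    print(sorted(called), "missing:", missing)
```

When fewer fragments than expected pass the threshold, the position is left with a known number of missing members, which is exactly the erasure pattern that dominates at low coverage.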

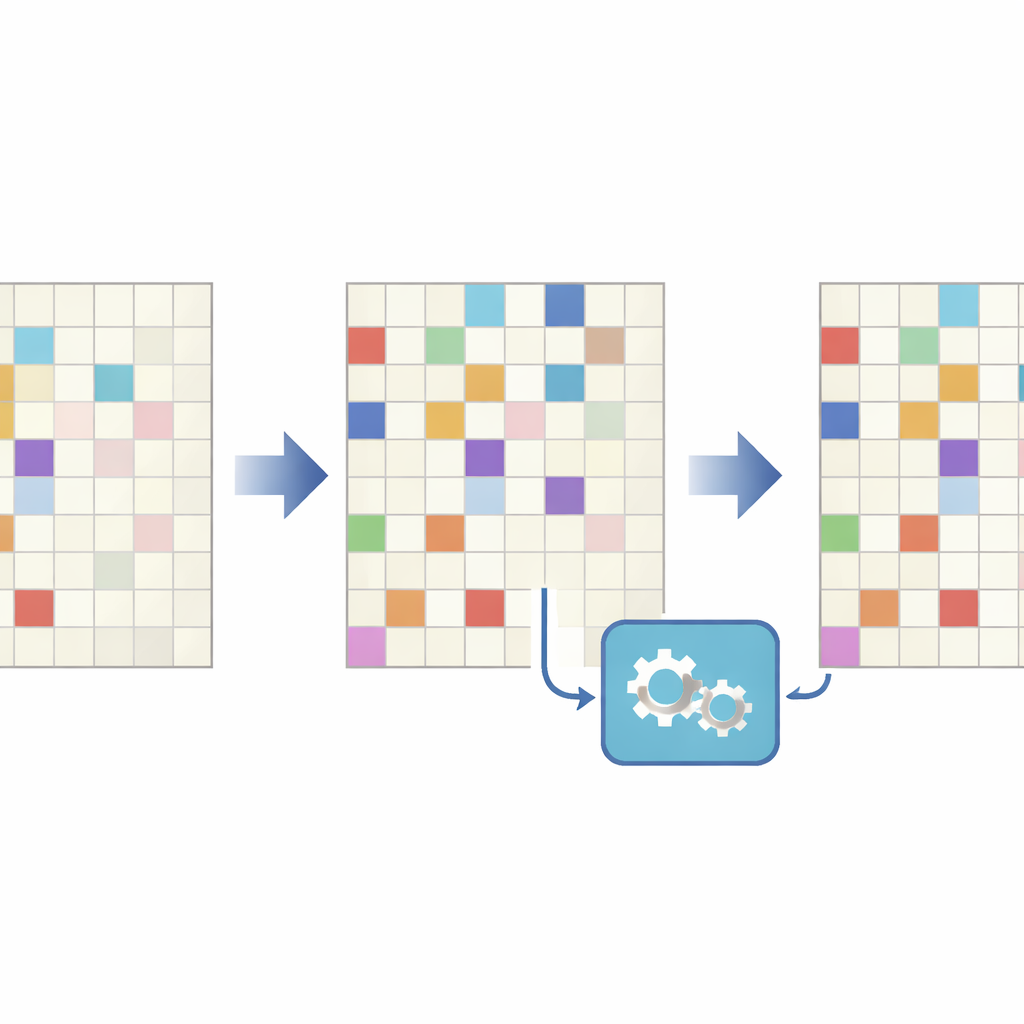

A code that expects one‑way mistakes

Traditional error‑correcting schemes used in DNA storage often assume that mistakes are roughly symmetric—symbols may be confused, added, or lost with similar likelihood. That assumption does not fit combinatorial DNA, where the typical failure is that a fragment present in the original combination fails to show up at all, while spurious extra fragments are comparatively rare. To tackle this, the authors designed a new error‑correcting code, called a combinatorial VT code, that is tuned to this one‑way behavior. They represent each combinatorial letter as a row in a binary matrix and treat missing fragments as bits that flip only from one to zero. The code uses a mathematical fingerprint, or “syndrome,” for each letter that can reveal which fragment went missing, even when only part of the combination is observed. These syndromes are themselves protected by a Reed–Solomon code, enabling recovery of several such errors across a sequence.
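The idea of a syndrome that pinpoints a missing fragment can be illustrated with the classical Varshamov-Tenengolts (VT) checksum for a single one-to-zero flip. The sketch below is that textbook construction applied to one binary row, not the authors' combinatorial VT code, and it does not include the Reed–Solomon protection of the syndromes. The function names and the example row are assumptions.

```python
from typing import List, Sequence


def vt_syndrome(bits: Sequence[int]) -> int:
    """Classical VT checksum: sum of i * bits[i] over 1-based positions,
    taken modulo (n + 1)."""
    n = len(bits)
    return sum(i * b for i, b in enumerate(bits, start=1)) % (n + 1)


def repair_single_erasure(received: Sequence[int], stored_syndrome: int) -> List[int]:
    """Restore at most one bit that flipped from 1 to 0.

    If position j (1-based) was lost, the weighted sum dropped by exactly j,
    so the syndrome difference modulo (n + 1) equals j.
    """
    n = len(received)
    diff = (stored_syndrome - vt_syndrome(received)) % (n + 1)
    repaired = list(received)
    if diff == 0:
        return repaired  # no missing fragment detected
    if repaired[diff - 1] != 0:
        raise ValueError("syndrome inconsistent with a single 1-to-0 flip")
    repaired[diff - 1] = 1
    return repaired


if __name__ == "__main__":
    # Assumed letter: fragments 2, 4, 5 and 8 of an 8-fragment library.
    original = [0, 1, 0, 1, 1, 0, 0, 1]
    syndrome = vt_syndrome(original)
    received = list(original)
    received[4] = 0  # fragment 5 is never observed
    print(repair_single_erasure(received, syndrome) == original)
```

Because the error can only turn a one into a zero, a single small checksum per row is enough to locate it; a symmetric code would have to spend more redundancy to cover errors in both directions.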

Putting the new method to the test

The researchers put their tailored code head‑to‑head against a more conventional two‑dimensional Reed–Solomon scheme that had been used previously in DNA storage. They tested both in software simulations and in a second large‑scale experiment, where half of the sequences were protected by the traditional method and half by the new combinatorial code, under identical redundancy. Across a range of conditions dominated by erasure errors, the new approach more often reconstructed the original data correctly, and it did so especially well when read coverage was low. Under these harsher conditions, the traditional approach frequently failed to decode entire sequences, while the combinatorial VT scheme still recovered them.

Why this matters for future DNA archives

The work shows that making DNA data storage practical is not just about squeezing more bits into molecules—it also requires error correction that matches the real error patterns of the laboratory processes used. By carefully studying how combinatorial DNA storage fails, and by designing codes that specifically expect fragments to go missing, the authors demonstrate a clear path to more reliable and scalable DNA archives. As DNA‑based systems grow to handle ever larger collections of data, such tailored, asymmetric error‑correction strategies will be essential for turning fragile molecular mixtures into trustworthy long‑term memories.

Citation: Preuss, I., Sabary, O., Gabrys, R. et al. Error characterization and error correction approaches in combinatorial DNA-based storage. Sci Rep 16, 8093 (2026). https://doi.org/10.1038/s41598-026-38599-0

Keywords: DNA data storage, error correction, combinatorial encoding, erasure errors, information density