Clear Sky Science

Leveraging natural language processing and machine learning to identify chronic conditions from primary care electronic medical records

Why your doctor’s notes matter more than you think

When you visit your family doctor, every cough, complaint, and concern is written into your electronic medical record. Much of this detail lives in free‑form notes rather than tidy check boxes. This study shows that those narrative notes, when combined with modern computer techniques, can help doctors spot chronic illnesses like arthritis, kidney disease, diabetes, high blood pressure, and breathing problems more accurately than structured data alone, especially when these problems are not clearly coded elsewhere in the chart.

Hidden clues inside everyday clinic records

Electronic medical records in primary care contain two very different kinds of information. There are structured items, such as billing codes, medication lists, and lab results, and there are unstructured notes, where clinicians describe symptoms, history, and their reasoning in regular language. In Canada, billing codes are often incomplete and used mainly for payment rather than precise diagnosis, so many health issues show up more clearly in the notes than in the check boxes. The researchers set out to see whether mining both types of information together could better identify five common long‑term conditions in patients aged 60 and older who attended a single Alberta family medicine clinic.

Teaching computers to read doctors’ language

To tap into the rich but messy text of clinical notes, the team used natural language processing, a set of tools that helps computers work with human language. They cleaned the notes by removing stray symbols, standardizing words, expanding abbreviations, and reducing related words to common roots. They also built simple rules to recognize when a note said a patient did not have a condition—for example, phrases like “no evidence of” or “was ruled out”—so the computer would not mistakenly treat these as positive cases. Clinicians on the team created lists of meaningful terms and phrases for each condition, helping the algorithms focus on relevant medical ideas rather than every stray word.
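To make these cleaning and negation steps concrete, here is a minimal Python sketch of one way they could work: lowercasing, stripping stray symbols, expanding abbreviations, crude suffix stripping, and a sentence-level negation rule. The abbreviation map, the negation cues beyond the two phrases quoted above, and all function names are hypothetical; the study's actual rules and term lists are not given in this summary. A real pipeline would more likely use an established stemmer (such as NLTK's PorterStemmer) and a tested negation algorithm (such as NegEx).

```python
import re

# Hypothetical abbreviation map; the study's real list is not given in the summary.
ABBREVIATIONS = {"htn": "hypertension", "dm": "diabetes",
                 "oa": "osteoarthritis", "sob": "shortness of breath"}

# Cues that negate a FOLLOWING term. "no evidence of" comes from the summary;
# the others are illustrative assumptions (bare "no" is deliberately blunt).
PRE_NEGATION_CUES = ["no evidence of", "negative for", "denies", "no"]
# Cues that negate a PRECEDING term ("was ruled out" comes from the summary).
POST_NEGATION_CUES = ["was ruled out", "ruled out"]


def clean(text: str) -> str:
    """Lowercase, replace stray symbols with spaces, and expand abbreviations."""
    text = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return " ".join(ABBREVIATIONS.get(word, word) for word in text.split())


def crude_stem(word: str) -> str:
    """Toy suffix stripper standing in for a real stemmer such as Porter's."""
    for suffix in ("ing", "ed", "es", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 4:
            return word[: -len(suffix)]
    return word


def condition_status(note: str, terms: list[str]) -> str:
    """Return 'positive', 'negated', or 'absent' for one condition's term list.

    Negation scope is limited to the sentence that contains the term.
    """
    negated_seen = False
    for sentence in re.split(r"[.;\n]+", note):
        padded = f" {clean(sentence)} "
        for term in terms:
            start = padded.find(f" {term} ")
            if start == -1:
                continue
            before = padded[: start + 1]
            after = padded[start + 1 + len(term):]
            negated = any(f" {cue} " in before for cue in PRE_NEGATION_CUES) or any(
                f" {cue} " in after for cue in POST_NEGATION_CUES)
            if not negated:
                return "positive"  # any affirmed mention counts as positive
            negated_seen = True
    return "negated" if negated_seen else "absent"


if __name__ == "__main__":
    print(condition_status("Pt has HTN, on ramipril.", ["hypertension"]))   # positive
    print(condition_status("No evidence of DM. BP stable.", ["diabetes"]))  # negated
    print(condition_status("Diabetes was ruled out", ["diabetes"]))         # negated
    print(crude_stem("wheezing"))                                           # wheez
```

Checking an affirmed mention before giving up on a negated one means a note such as "no evidence of DM" in one visit and "DM well controlled" in another still counts as positive.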

Finding themes and learning from patterns

Next, the researchers turned the text into numbers that machine learning models can use. They counted how often each word or word pair appeared in each patient’s notes, then down‑weighted words that are common across nearly all notes so that more distinctive terms, such as those tied to a particular condition, stood out. Using a method called topic modeling, which finds groups of words that tend to appear together, they checked that the main word groups in the notes lined up with the conditions of interest, for example terms linked to diabetes or high blood pressure. This step served as a reality check, confirming that the computer‑identified themes matched clinical knowledge before the prediction models were built.
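Counting words and word pairs while down‑weighting common ones is what the widely used TF-IDF scheme (term frequency times inverse document frequency) does. The summary does not name the exact weighting the authors used, so treat TF-IDF here as an assumption. The plain-Python sketch below builds unigram and bigram features for each patient using the smoothed IDF formula that scikit-learn's TfidfVectorizer applies by default, but without that class's final length normalization. The topic-modeling check (for example, latent Dirichlet allocation) is not shown.

```python
import math
from collections import Counter


def ngrams(tokens: list[str], max_n: int = 2) -> list[str]:
    """Return all single words plus word pairs (bigrams) from a token list."""
    grams = list(tokens)
    for n in range(2, max_n + 1):
        grams += [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    return grams


def tfidf(patients: list[list[str]]) -> list[dict[str, float]]:
    """Assumed TF-IDF variant: count in a patient's notes times a smoothed IDF.

    IDF = ln((1 + N) / (1 + df)) + 1, where N is the number of patients and df
    is how many patients' notes contain the n-gram, so terms that appear in
    everyone's notes receive the smallest weight.
    """
    counts = [Counter(ngrams(tokens)) for tokens in patients]
    n_patients = len(patients)
    doc_freq = Counter()
    for c in counts:
        doc_freq.update(c.keys())
    idf = {g: math.log((1 + n_patients) / (1 + df)) + 1 for g, df in doc_freq.items()}
    return [{g: tf * idf[g] for g, tf in c.items()} for c in counts]


if __name__ == "__main__":
    notes = [
        "patient reports joint pain and stiffness".split(),
        "patient reports wheeze and shortness of breath".split(),
        "patient reports fatigue".split(),
    ]
    vectors = tfidf(notes)
    # Highest-weighted n-grams for the first patient; "patient reports" ranks low.
    print(sorted(vectors[0].items(), key=lambda kv: kv[1], reverse=True)[:3])
```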

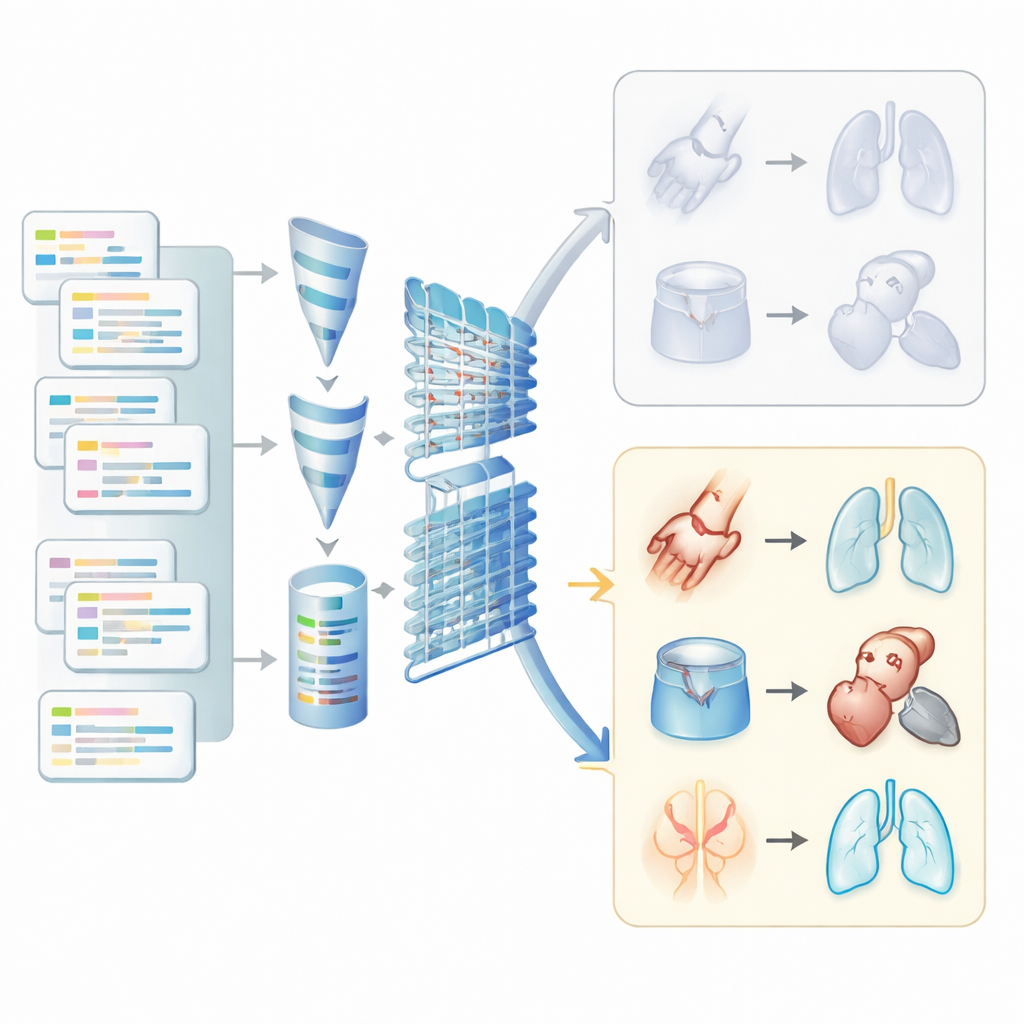

Letting algorithms flag who is likely sick

The heart of the study was training three types of machine learning models to decide whether each patient likely had each of the five chronic conditions. One model worked like a refined risk calculator, another drew a boundary between patients with and without a condition, and a third resembled a simple brain‑inspired network. The researchers first trained these models using only the structured parts of the record, and then retrained them using both structured data and the processed text features from the notes. Because some conditions were less common in the sample, they also rebalanced the training data so that the algorithms would not overlook these rarer conditions.
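The summary describes the three models only by analogy. Read loosely, they match logistic regression, a support vector machine, and a small feed-forward neural network, but that mapping is our assumption, as is the use of random oversampling for the rebalancing step. The plain-Python sketch below covers only the first model: it duplicates minority-class patients until both classes are equally common, then fits a logistic regression by gradient descent on structured features joined with a text feature. The feature names and toy values are invented for illustration.

```python
import math
import random


def oversample(X: list[list[float]], y: list[int], seed: int = 0):
    """Assumed rebalancing: duplicate random minority-class rows until classes match."""
    rng = random.Random(seed)
    pos = [i for i, label in enumerate(y) if label == 1]
    neg = [i for i, label in enumerate(y) if label == 0]
    minority, majority = (pos, neg) if len(pos) < len(neg) else (neg, pos)
    extra = [rng.choice(minority) for _ in range(len(majority) - len(minority))]
    order = pos + neg + extra
    return [X[i] for i in order], [y[i] for i in order]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logistic(X, y, lr=0.5, epochs=2000):
    """Logistic regression (our assumed stand-in for the 'risk calculator' model),
    fit by full-batch gradient descent on the average log loss."""
    w, b, n = [0.0] * len(X[0]), 0.0, len(X)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j, xj in enumerate(xi):
                grad_w[j] += err * xj
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict_proba(w, b, x):
    """Predicted probability that the patient has the condition."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


if __name__ == "__main__":
    # Hypothetical features per patient: [has_billing_code] + [note_term_weight].
    structured = [[1.0], [0.0], [0.0], [0.0], [0.0], [0.0]]
    text = [[1.0], [1.0], [0.0], [0.0], [0.0], [0.0]]
    labels = [1, 1, 0, 0, 0, 0]  # the second patient has the condition but no code
    combined = [s + t for s, t in zip(structured, text)]
    Xb, yb = oversample(combined, labels)
    w, b = train_logistic(Xb, yb)
    print(round(predict_proba(w, b, [0.0, 1.0]), 3))  # uncoded, but notes mention it
    print(round(predict_proba(w, b, [0.0, 0.0]), 3))  # neither signal present
```

Random oversampling is only one way to rebalance; class weights and synthetic-sample methods such as SMOTE are common alternatives, and the summary does not say which the authors chose.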

Clear gains from using the full story

When unstructured notes were added, the models became noticeably better at telling who did and did not have a condition, especially for problems that are often under‑coded in billing data. For arthritis and respiratory diseases, both how well the models separated sick from well patients and how reliably they flagged true cases improved markedly, moving performance from fair to strong. Gains for diabetes and high blood pressure were smaller because these conditions were already well captured in structured fields. Interestingly, the simpler models often performed as well as, or better than, the neural network, the most complex of the three, suggesting that more sophisticated methods such as deep learning are not always necessary for this kind of clinic‑level work.
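The summary does not name its metrics. How well a model separates sick from well patients is commonly measured by the area under the ROC curve (AUC), and how reliably it flags true cases by sensitivity (recall). Assuming those two, the sketch below computes both from scratch so the before-and-after comparison is concrete. The scores are invented toy numbers, not results from the study.

```python
def roc_auc(labels: list[int], scores: list[float]) -> float:
    """Assumed separation metric: chance a random positive outscores a random negative.

    Ties count as half a win (the Mann-Whitney formulation of AUC).
    """
    pos = [s for s, label in zip(scores, labels) if label == 1]
    neg = [s for s, label in zip(scores, labels) if label == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def sensitivity(labels: list[int], scores: list[float], threshold: float = 0.5) -> float:
    """Assumed case-finding metric: share of true cases scored at or above threshold."""
    flagged = [s >= threshold for s, label in zip(scores, labels) if label == 1]
    return sum(flagged) / len(flagged)


if __name__ == "__main__":
    labels = [1, 1, 1, 0, 0, 0]
    # Toy scores: the structured-only model misses two under-coded cases.
    structured_only = [0.9, 0.3, 0.2, 0.4, 0.1, 0.2]
    with_notes = [0.9, 0.7, 0.6, 0.4, 0.1, 0.2]
    for name, scores in [("structured only", structured_only), ("with notes", with_notes)]:
        print(name, round(roc_auc(labels, scores), 3), round(sensitivity(labels, scores), 3))
```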

What this means for your future care

Overall, the study shows that paying attention to the narrative parts of medical records, not just codes and lab numbers, can substantially sharpen our ability to find patients with chronic diseases. By turning free‑text notes into machine‑readable signals and combining them with existing structured data, health systems may be able to identify at‑risk patients sooner, focus follow‑up care where it is most needed, and extend this approach to other conditions that mostly live in the written story of the visit rather than the drop‑down menus.

Citation: Zhang, N., Abbasi, M., Khera, S. et al. Leveraging natural language processing and machine learning to identify chronic conditions from primary care electronic medical records. Sci Rep 16, 8441 (2026). https://doi.org/10.1038/s41598-026-38594-5

Keywords: electronic medical records, chronic disease detection, natural language processing, machine learning in healthcare, primary care data