Clear Sky Science · en

Intelligent incremental classification using a dynamic grasshopper-enhanced neural network for data streams

Why Constantly Changing Data Matters

From power grids and factories to online payments, modern systems spew out data every second. Hidden in these continuous data streams are early warnings of equipment failures, cyberattacks, or looming price spikes. The challenge is that this river of information never stops and its behavior keeps changing over time. The paper summarized here introduces a new way to train neural networks so they can keep learning from such live data without slowing down or losing accuracy, making them more useful for real-world monitoring and decision-making.

The Limits of One-Off Training

Most traditional machine learning models are trained in "batches": engineers collect a large historical dataset, tune the model, and then deploy it. This works if the world stays roughly the same. But in industrial settings, conditions drift—demand patterns change, sensors age, markets fluctuate. A model frozen in time gradually becomes blind to new patterns, and retraining it from scratch on ever-growing datasets is costly and slow. Standard automatic tuning methods like grid search or evolutionary algorithms also assume fixed data, meaning they must be restarted whenever the data distribution shifts, which is impractical for always-on systems.

A Neural Network That Learns on the Fly

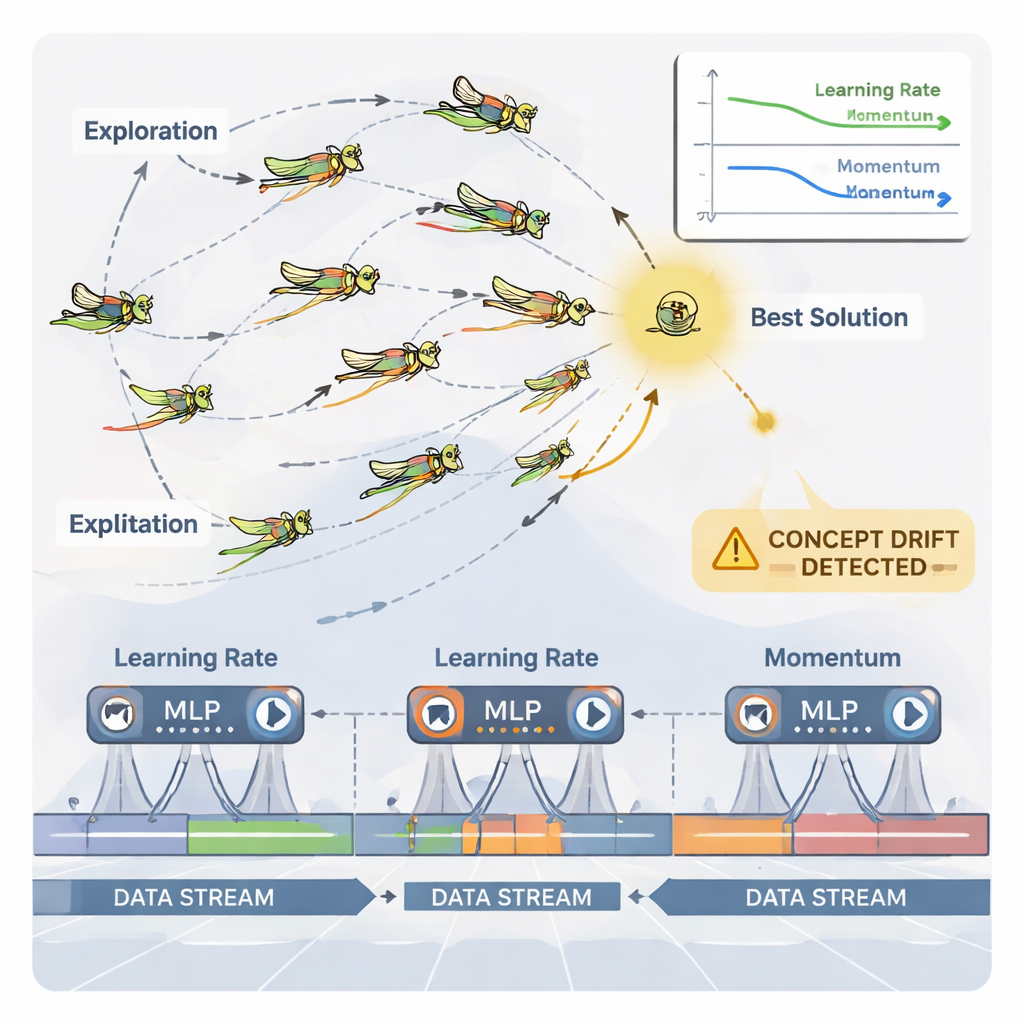

The authors propose an incremental learning framework built around a multilayer perceptron (MLP), a common type of neural network. Instead of feeding the network all past data at once, the framework breaks the incoming data stream into manageable windows. Each new window serves as a small training step that updates the network’s internal weights and is then discarded, a "train-and-forget" strategy that keeps memory use low. Crucially, the system does not rely on fixed training settings. Two key knobs that control learning behavior, the learning rate (how big each update is) and momentum (how much of the previous update carries over into the next one, which smooths the path the weights take), are continuously adjusted as the stream evolves, so the model can stay responsive without becoming unstable.
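The window-by-window, train-and-forget loop can be sketched in plain Python. This is a minimal illustration, not the authors' code. It assumes a one-hidden-layer network, fixed windows of 100 samples, and a synthetic two-feature stream. The names `StreamingMLP`, `train_window`, `windows`, and `synthetic_stream` are hypothetical. The learning rate and momentum are held fixed here only to keep the example short; in the paper they are retuned as the stream evolves. Momentum follows the classical rule: velocity = momentum × velocity − learning rate × gradient, and each weight moves by its velocity.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class StreamingMLP:
    """Hypothetical one-hidden-layer MLP (tanh hidden units, sigmoid output)
    trained by SGD with classical momentum, one window at a time."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)
        self.w1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b2 = 0.0
        # Velocity buffers: momentum keeps a running blend of past updates.
        self.v1 = [[0.0] * n_in for _ in range(n_hidden)]
        self.vb1 = [0.0] * n_hidden
        self.v2 = [0.0] * n_hidden
        self.vb2 = 0.0

    def forward(self, x):
        h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
             for row, b in zip(self.w1, self.b1)]
        return h, sigmoid(sum(w * hj for w, hj in zip(self.w2, h)) + self.b2)

    def predict(self, x):
        return 1 if self.forward(x)[1] >= 0.5 else 0

    def train_window(self, window, lr, momentum):
        """One SGD pass over a window of (x, y) pairs: v = momentum*v - lr*grad,
        then w = w + v. The window itself is not stored by the model."""
        for x, y in window:
            h, p = self.forward(x)
            dz = p - y  # cross-entropy gradient w.r.t. the output logit
            for j, hj in enumerate(h):
                dh = dz * self.w2[j] * (1.0 - hj * hj)  # back through tanh (pre-update w2)
                self.v2[j] = momentum * self.v2[j] - lr * dz * hj
                self.w2[j] += self.v2[j]
                for k, xk in enumerate(x):
                    self.v1[j][k] = momentum * self.v1[j][k] - lr * dh * xk
                    self.w1[j][k] += self.v1[j][k]
                self.vb1[j] = momentum * self.vb1[j] - lr * dh
                self.b1[j] += self.vb1[j]
            self.vb2 = momentum * self.vb2 - lr * dz
            self.b2 += self.vb2


def windows(stream, size):
    """Group an iterator into consecutive windows of `size` items."""
    buf = []
    for item in stream:
        buf.append(item)
        if len(buf) == size:
            yield buf
            buf = []  # fresh buffer; the old window can be garbage-collected
    if buf:
        yield buf


def synthetic_stream(n, seed=1):
    # Hypothetical stand-in for a real stream: label is 1 when x0 + x1 > 0.
    rng = random.Random(seed)
    for _ in range(n):
        x = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
        yield x, 1 if x[0] + x[1] > 0 else 0


model = StreamingMLP(n_in=2, n_hidden=4)
lr, momentum = 0.05, 0.9  # fixed here; the paper adjusts these as the stream evolves
correct = total = 0
for win in windows(synthetic_stream(5000), size=100):
    # Prequential ("test-then-train") evaluation on each window.
    correct += sum(model.predict(x) == y for x, y in win)
    total += len(win)
    model.train_window(win, lr, momentum)
print(f"prequential accuracy: {correct / total:.3f}")
```

Scoring each window before training on it (prequential evaluation) is a common way to measure accuracy on streams, because every sample is judged while it is still unseen by the model.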

Grasshoppers as Smart Parameter Tuners

To handle this continuous adjustment, the paper uses a nature-inspired optimizer called the Dynamic Grasshopper Optimization Algorithm (DGOA). Imagine a swarm of virtual grasshoppers exploring possible combinations of learning rate and momentum. Early on, they roam widely to search for good regions; later, they tighten their movements to refine promising choices. In this dynamic variant, their step size and attraction toward the best solution change over time based on how well the neural network is doing. The system also monitors for "concept drift"—sudden changes in prediction errors or in the data itself. When a drift is detected, some grasshoppers are reset and their steps temporarily become larger, allowing the optimizer to quickly search new regions and escape outdated settings.
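The swarm search can be sketched in Python as well. The position update below follows the standard Grasshopper Optimization Algorithm: each grasshopper moves to the best point found so far plus a sum of pairwise "social forces" s(r) = f·e^(-r/l) - e^(-r), all scaled by a coefficient c that shrinks over time. Mapping distances into [2, 4) is a normalization trick used in common GOA implementations and should be treated as an assumption. The drift rule, the reset-the-worst-half response, and the quadratic stand-in for the network's validation error are hypothetical choices made for illustration. They are not the paper's DGOA equations, and all class and function names are illustrative.

```python
import math
import random

F, L = 0.5, 1.5           # social-force constants used in standard GOA
C_MAX, C_MIN = 1.0, 4e-5  # range of the shrinking coefficient c


def social_force(r):
    # Positive values attract, negative values repel.
    return F * math.exp(-r / L) - math.exp(-r)


def drift_detected(errors, window=5, threshold=0.15):
    """Hypothetical drift rule: the newest window error exceeds the mean of
    the previous `window` errors by more than `threshold`."""
    if len(errors) <= window:
        return False
    recent = errors[-window - 1:-1]
    return errors[-1] - sum(recent) / window > threshold


class DynamicGOA:
    """Hypothetical swarm search over hyperparameters, e.g. (lr, momentum)."""

    def __init__(self, lb, ub, n=20, max_iter=100, seed=0):
        self.lb, self.ub, self.max_iter = lb, ub, max_iter
        self.rng = random.Random(seed)
        self.pos = [self._random_point() for _ in range(n)]
        self.t = 0  # iteration counter that drives c
        self.best, self.best_fit = None, math.inf

    def _random_point(self):
        return [self.rng.uniform(lo, hi) for lo, hi in zip(self.lb, self.ub)]

    def c(self):
        # Shrinks linearly: wide exploration early, fine refinement later.
        t = min(self.t, self.max_iter)
        return C_MAX - t * (C_MAX - C_MIN) / self.max_iter

    def step(self, fitness):
        # Score the swarm and keep the best point seen so far (the target).
        for p in self.pos:
            f = fitness(p)
            if f < self.best_fit:
                self.best, self.best_fit = list(p), f
        c, dims = self.c(), len(self.lb)
        new_pos = []
        for i, xi in enumerate(self.pos):
            social = [0.0] * dims
            for j, xj in enumerate(self.pos):
                if i == j:
                    continue
                d = math.dist(xi, xj) + 1e-12
                r = 2.0 + math.fmod(d, 2.0)  # map distance into [2, 4)
                for k in range(dims):
                    half_range = (self.ub[k] - self.lb[k]) / 2
                    social[k] += c * half_range * social_force(r) * (xj[k] - xi[k]) / d
            # New position = target + c * (sum of social forces), clipped to bounds.
            new_pos.append([min(max(self.best[k] + c * social[k], self.lb[k]), self.ub[k])
                            for k in range(dims)])
        self.pos = new_pos
        self.t += 1

    def on_drift(self, fitness):
        """Hypothetical response: re-score the target on the new data, restart
        the worst half of the swarm, and reset t so c (the step size) jumps up."""
        self.best_fit = fitness(self.best)
        fits = [fitness(p) for p in self.pos]
        worst_first = sorted(range(len(self.pos)), key=lambda i: fits[i], reverse=True)
        for i in worst_first[:len(self.pos) // 2]:
            self.pos[i] = self._random_point()
        self.t = 0


def make_surrogate(lr_opt, mom_opt):
    # Placeholder for the network's validation error at p = (lr, momentum).
    return lambda p: (p[0] - lr_opt) ** 2 + (p[1] - mom_opt) ** 2


opt = DynamicGOA(lb=[0.001, 0.0], ub=[0.5, 0.99])
before = make_surrogate(0.05, 0.9)
for _ in range(50):
    opt.step(before)
fit_before_drift = opt.best_fit

errors = [0.12, 0.11, 0.12, 0.10, 0.11, 0.40]  # made-up per-window error rates
after = make_surrogate(0.3, 0.5)                # the stream's behavior has shifted
if drift_detected(errors):
    opt.on_drift(after)
    rescored = opt.best_fit
for _ in range(50):
    opt.step(after)
print(opt.best, opt.best_fit)
```

Resetting the iteration counter after a drift is one simple way to make the steps "temporarily larger": c returns to its maximum and then shrinks again as the swarm re-converges on the new best settings.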

Putting the Method to the Test

The researchers evaluated their approach on a real electricity market dataset from Australia, where the goal was to predict whether prices would move up or down. Compared with common tuning methods such as grid search, random search, particle swarm optimization, genetic algorithms, ant colony optimization, and the standard grasshopper algorithm, the dynamic version paired with incremental learning achieved the highest accuracy (about 89.5%) while using less computation time and fewer iterations. Additional experiments showed that the method adapts better to both stable and changing data streams, scales from thousands to billions of samples while keeping memory in check, and performs competitively on tasks like predictive maintenance, anomaly detection, and fraud detection, as well as on standard mathematical optimization benchmarks.

What This Means in Practice

For non-experts, the takeaway is that this work offers a way to keep neural networks "alive" and well-tuned in environments where data never stops and conditions constantly shift. Instead of repeatedly stopping the system to rebuild models from scratch, the proposed framework lets a lightweight network update itself window by window, while a swarm-based optimizer continuously tweaks how fast and how smoothly it learns. The result is faster adaptation to new patterns, better long-term accuracy, and more efficient use of computing resources—key ingredients for reliable, real-time decision-making in sectors like energy, manufacturing, and finance.

Citation: Darwish, S.M., El-Shoafy, N.A. Intelligent incremental classification using a dynamic grasshopper-enhanced neural network for data streams. Sci Rep 16, 7730 (2026). https://doi.org/10.1038/s41598-026-38571-y

Keywords: data streams, incremental learning, neural networks, hyperparameter optimization, swarm intelligence