Clear Sky Science

A global twitter sentiment analysis model for COVID-vaccination

Why feelings about vaccines on Twitter matter

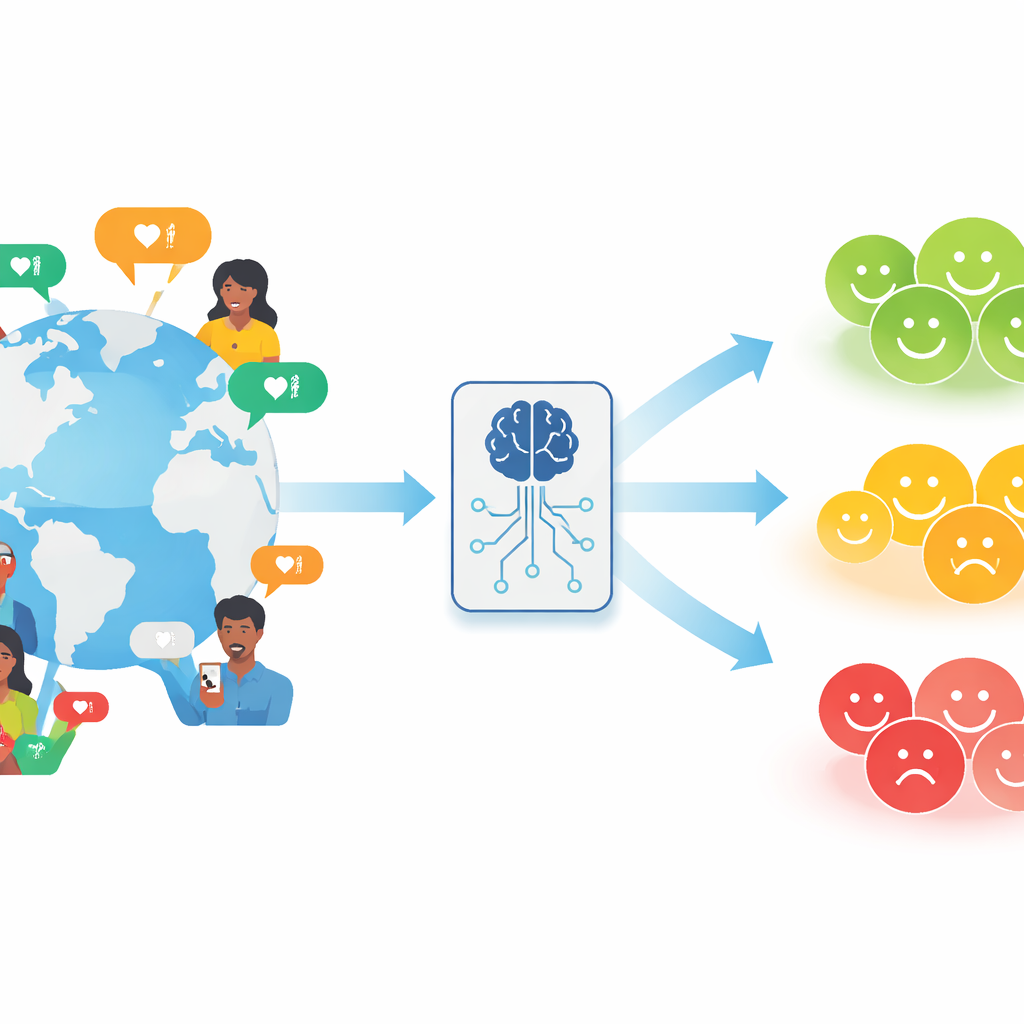

During the COVID-19 pandemic, governments relied on vaccines and public cooperation to save lives. Yet people around the world reacted very differently to vaccination campaigns, often venting their hopes and fears on social media. This study looks beyond simple “positive” or “negative” labels on tweets and asks a deeper question: what do people’s comments about COVID-19 vaccination signal once we factor in how hard their own country was hit by the virus? By blending tweet text with real-world pandemic data, the authors aim to capture what a message truly means in its broader global setting.

From raw tweets to first-pass feelings

The researchers began by collecting more than forty thousand English-language tweets about COVID-19 vaccination posted in spring 2021, a crucial period when many countries were reaching major vaccination milestones. They cleaned the data by stripping away user tags and web links, which do not help in judging tone. To assign an initial sentiment to each tweet, they used a language model trained specifically on Twitter content, known as Twitter-roBERTa. This model sorts each tweet into one of three basic categories (positive, negative, or neutral) based purely on its text. The authors call this first layer of labeling the tweet’s “local sentiment,” because it ignores what is happening in the rest of the world.
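The cleaning step and the three-way labeling can be sketched in a few lines of Python. This is a minimal sketch, assuming that removing @-mentions and http(s) links is all the cleaning involved and that the model’s output arrives as three probabilities in the order negative, neutral, positive. The names `clean_tweet` and `local_sentiment` are hypothetical, and running Twitter-roBERTa itself is not shown; made-up probabilities stand in for its output.

```python
import re

# Assumed cleaning rules: drop @user tags and http(s) links.
MENTION_RE = re.compile(r"@\w+")
URL_RE = re.compile(r"https?://\S+")

# Assumed output order of the three-class sentiment model.
LABELS = ("negative", "neutral", "positive")

def clean_tweet(text: str) -> str:
    """Remove user tags and web links, then collapse leftover whitespace."""
    text = MENTION_RE.sub("", text)
    text = URL_RE.sub("", text)
    return " ".join(text.split())

def local_sentiment(probs: tuple[float, float, float]) -> str:
    """Return the most probable of the three classes (the 'local sentiment')."""
    best = max(range(len(LABELS)), key=lambda i: probs[i])
    return LABELS[best]

if __name__ == "__main__":
    raw = "@healthdept Got my second dose today! https://t.co/abc123"
    print(clean_tweet(raw))                     # Got my second dose today!
    # Hypothetical probabilities standing in for the model's softmax output
    print(local_sentiment((0.05, 0.15, 0.80)))  # positive
```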

Adding the real-world state of the pandemic

Next, the team gathered country-level COVID-19 statistics—case counts, deaths, and population—for ten countries spread across North America, Europe, Asia, and Oceania. They converted these numbers into a single “severity value” for each country, showing how hard it was hit relative to the others during the study period. A tweet from a country with high rates of cases and deaths is thus read in a very different light from an identical tweet in a country with milder conditions. The researchers then merged each tweet with the severity value of the country it likely came from, using users’ self-reported locations and carefully curated lists of cities and regions to map locations to countries.
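The article does not spell out how cases, deaths, and population are combined, so the sketch below uses an assumed formula: per-capita case and death rates are each min-max scaled across countries and then averaged, giving 0 for the least affected country and 1 for the most affected. The keyword list, the function names (`severity_values`, `map_location`, `attach_severity`), and the figures for the placeholder countries are all hypothetical, not the authors’ curated lists or data.

```python
import re
from typing import Optional

def severity_values(stats: dict[str, tuple[int, int, int]]) -> dict[str, float]:
    """Combine (cases, deaths, population) into one 0-1 severity score per country.

    Assumed formula, not taken from the paper: min-max scale each per-capita
    rate across countries, then average the two scaled rates.
    """
    case_rate = {c: cases / pop for c, (cases, _deaths, pop) in stats.items()}
    death_rate = {c: deaths / pop for c, (_cases, deaths, pop) in stats.items()}

    def min_max(values: dict[str, float]) -> dict[str, float]:
        lo, hi = min(values.values()), max(values.values())
        span = hi - lo
        return {c: (v - lo) / span if span else 0.0 for c, v in values.items()}

    scaled_cases, scaled_deaths = min_max(case_rate), min_max(death_rate)
    return {c: (scaled_cases[c] + scaled_deaths[c]) / 2 for c in stats}

# Hypothetical keyword list mapping self-reported locations to countries.
LOCATION_TO_COUNTRY = {
    "toronto": "Canada", "canada": "Canada",
    "london": "United Kingdom", "uk": "United Kingdom",
    "mumbai": "India", "india": "India",
}

def map_location(self_reported: str) -> Optional[str]:
    """Return the country of the first known keyword in a free-text location."""
    for token in re.split(r"[^a-z]+", self_reported.lower()):
        if token in LOCATION_TO_COUNTRY:
            return LOCATION_TO_COUNTRY[token]
    return None

def attach_severity(tweets, severity):
    """Pair each (text, location) tweet with its country's severity; drop unmapped ones."""
    merged = []
    for text, location in tweets:
        country = map_location(location)
        if country in severity:
            merged.append((text, country, severity[country]))
    return merged

if __name__ == "__main__":
    # Made-up figures for placeholder countries: (cases, deaths, population)
    stats = {"Country A": (500, 10, 10_000), "Country B": (100, 1, 10_000),
             "Country C": (300, 5, 10_000)}
    print(severity_values(stats))
    print(map_location("Toronto, ON"))  # Canada
```

Tweets whose location matches no keyword are simply dropped here; a real pipeline would need rules for ambiguous place names.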

Turning local feelings into global shades of opinion

With both tweet text and country context in hand, the authors designed three methods to refine each tweet’s label from a simple positive/negative/neutral tag into a richer “global sentiment.” The first two methods use probability rules (Bayes’ theorem) to measure how common each type of sentiment is within a country or within two broad groups of countries: those in relatively “good” versus “bad” pandemic condition. A tweet that goes against the prevailing mood in its setting, such as a rare positive comment in a hard-hit country, is treated as a “high intensity” expression, while one that echoes a common view is treated as “low intensity.” The second method also distinguishes “weakly” and “strongly” positive or negative labels, depending on whether the tweet’s tone fits or contradicts the country’s situation.
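One way to turn Bayes’ theorem into an intensity rule is sketched below, assuming a tweet counts as high intensity when its sentiment is under-represented in its setting, that is, when P(setting | sentiment) falls below P(setting). By Bayes’ theorem, P(setting | sentiment) = P(sentiment | setting) · P(setting) / P(sentiment), so this is the same as the sentiment being rarer in that setting than across the whole data set. The setting can be a single country or one of the “good”/“bad” groups. The exact rule used by the authors may differ, and `bayes_intensity` is a hypothetical name.

```python
from collections import Counter

def bayes_intensity(records: list[tuple[str, str]]) -> list[str]:
    """Label each (setting, sentiment) record 'high' or 'low' intensity.

    Assumed rule: compute P(setting | sentiment) with Bayes' theorem and
    compare it with the prior P(setting). A value below the prior means the
    sentiment is under-represented in that setting, so the tweet runs
    against the local mood and is marked 'high'.
    """
    n = len(records)
    setting_counts = Counter(s for s, _ in records)
    sentiment_counts = Counter(t for _, t in records)
    joint_counts = Counter(records)

    labels = []
    for setting, sentiment in records:
        p_setting = setting_counts[setting] / n
        p_sentiment = sentiment_counts[sentiment] / n
        p_sent_given_setting = joint_counts[(setting, sentiment)] / setting_counts[setting]
        # Bayes' theorem: P(setting | sentiment)
        p_setting_given_sent = p_sent_given_setting * p_setting / p_sentiment
        labels.append("high" if p_setting_given_sent < p_setting else "low")
    return labels

if __name__ == "__main__":
    # Toy data: "bad" = hard-hit group, "good" = better-off group
    records = [("bad", "negative"), ("bad", "negative"), ("bad", "negative"),
               ("bad", "positive"), ("good", "positive"), ("good", "positive"),
               ("good", "positive"), ("good", "negative")]
    print(bayes_intensity(records))
```

In this toy data, the lone positive tweet from the hard-hit group and the lone negative tweet from the better-off group are the two flagged as high intensity.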

A smarter model to learn intensity automatically

The third method uses a more advanced statistical approach called Bayesian multilevel ordinal regression. Instead of relying on fixed cutoffs, this model learns, from the data themselves, how tweet-level sentiment scores (derived from the Twitter-roBERTa probabilities) interact with the severity of the pandemic in each country. It accounts for differences between countries while still pooling information across them. The model then estimates, for every tweet, not only whether it is negative, neutral, or positive, but also how confidently it belongs in that category. Tweets whose model-based probabilities are higher than typical for their category are labeled as “high intensity”; others are marked as “low intensity.” This creates nuanced global sentiment labels that reflect both language and public health context.
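The predictive side of a cumulative-logit (ordinal) model can be sketched as follows: a linear score built from the tweet score, the country’s severity, their interaction, and a per-country intercept is compared against two ordered thresholds to give probabilities for negative, neutral, and positive. Fitting the Bayesian multilevel model itself (for example with Stan or a similar tool) is not shown. The parameter values, the country intercepts, the score definition P(positive) minus P(negative), and the use of the mean winning probability as the “typical” level for each category are all assumptions made for illustration.

```python
import math
from statistics import mean

CATEGORIES = ("negative", "neutral", "positive")

def score_from_probs(probs: tuple[float, float, float]) -> float:
    """Assumed tweet score: P(positive) - P(negative), ranging from -1 to 1."""
    return probs[2] - probs[0]

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def category_probs(score: float, severity: float, params: dict, country_effect: float):
    """Cumulative-logit probabilities for the ordered categories negative < neutral < positive."""
    eta = (params["b_score"] * score
           + params["b_sev"] * severity
           + params["b_inter"] * score * severity  # score-by-severity interaction
           + country_effect)                        # varying intercept for the country
    cum_neg = sigmoid(params["theta"][0] - eta)     # P(y <= negative)
    cum_neu = sigmoid(params["theta"][1] - eta)     # P(y <= neutral)
    return (cum_neg, cum_neu - cum_neg, 1.0 - cum_neu)

def global_labels(tweets, params, country_effects):
    """tweets: list of (score, severity, country) triples."""
    preds = []
    for score, severity, country in tweets:
        probs = category_probs(score, severity, params, country_effects[country])
        k = max(range(len(CATEGORIES)), key=lambda i: probs[i])
        preds.append((k, probs[k]))
    # Assumed "typical" level: mean winning probability within each predicted category.
    typical = {k: mean(p for kk, p in preds if kk == k) for k, _ in preds}
    return [f"{'high' if p > typical[k] else 'low'}-intensity {CATEGORIES[k]}"
            for k, p in preds]

if __name__ == "__main__":
    # Made-up values standing in for posterior means from a fitted model.
    params = {"theta": (-1.0, 1.0), "b_score": 3.0, "b_sev": -0.5, "b_inter": 0.0}
    country_effects = {"A": 0.0, "B": 0.0}
    tweets = [(0.9, 0.2, "A"), (0.5, 0.2, "A"), (-0.9, 0.8, "B"), (-0.4, 0.8, "B")]
    for label in global_labels(tweets, params, country_effects):
        print(label)
```

With these made-up values, the more confident of the two positive tweets and the more confident of the two negative tweets come out as high intensity. In a fitted multilevel model the country intercepts would be partially pooled, so countries with few tweets borrow strength from the rest.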

What the findings mean for understanding public mood

When the authors used these new global sentiment labels to train common machine-learning classifiers, they found that the nuanced labels, especially those produced by the advanced model, helped the classifiers learn more accurate patterns than labels from the simpler methods did. In practical terms, this means that public-health agencies, researchers, and social-media analysts can gain a sharper picture of how people really feel about vaccines by looking at tweets through a global lens, not just reading the words in isolation. Two people may sound equally frustrated about vaccination, but if one lives in a country coping with a severe outbreak and the other in a place where the situation is under control, their messages carry different weight. By capturing these differences in intensity, the study offers a more grounded way to monitor public sentiment and to design responses that better match the realities people are facing.

Citation: Chakrabarty, D., Chatterjee, S. & Mukhopadhyay, A. A global twitter sentiment analysis model for COVID-vaccination. Sci Rep 16, 9005 (2026). https://doi.org/10.1038/s41598-026-38553-0

Keywords: COVID-19 vaccination, Twitter sentiment, social media analysis, public health communication, machine learning