Clear Sky Science

Tighter privacy auditing of differentially private stochastic gradient descent in the hidden state threat model

Why this matters for everyday technology

Modern apps constantly learn from our data, from photos and messages to medical records. A leading way to keep this training safe is called differential privacy, which adds carefully tuned noise so no single person’s data stands out. But how do we know these protections actually work in practice, especially for the deep neural networks used today? This paper probes that question and shows when hiding the “training movie” of a model really helps privacy—and when it does not.

How private learning is supposed to work

Differentially private stochastic gradient descent (DP-SGD) is the workhorse algorithm for privacy-preserving machine learning. It trains models step by step on small batches of data, clipping each example’s gradient (its pull on the direction of improvement) to a maximum size and adding random noise before updating the model. Theory provides upper bounds on how much any person’s data can influence the final model, summarized by a privacy number often called epsilon; smaller values mean stronger protection. In parallel, “privacy auditing” tries to attack the trained model and see how much information can actually be extracted in practice. If theory and auditing match, we can trust our privacy accounting; if they do not, something important is being missed.
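To make the clip-then-add-noise recipe concrete, here is a minimal sketch of a single DP-SGD update in plain Python. It assumes the standard formulation in which each example's gradient is clipped separately before noise is added. The function and parameter names (`clip`, `dp_sgd_step`, `clip_norm`, `noise_multiplier`) are illustrative and are not taken from the paper.

```python
import math
import random

def clip(grad, clip_norm):
    """Scale a gradient down so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def dp_sgd_step(params, per_example_grads, lr, clip_norm, noise_multiplier, rng):
    """One noisy update: clip each example's gradient, sum, add noise, average."""
    total = [0.0] * len(params)
    for grad in per_example_grads:
        for i, g in enumerate(clip(grad, clip_norm)):
            total[i] += g
    # Gaussian noise is scaled to the clipping threshold (assumed convention).
    sigma = noise_multiplier * clip_norm
    noisy = [t + rng.gauss(0.0, sigma) for t in total]
    batch_size = len(per_example_grads)
    return [p - lr * n / batch_size for p, n in zip(params, noisy)]

# Usage: two examples, two parameters, a fixed seed for repeatability.
rng = random.Random(0)
new_params = dp_sgd_step(
    params=[0.0, 0.0],
    per_example_grads=[[3.0, 4.0], [0.3, 0.4]],
    lr=0.1,
    clip_norm=1.0,
    noise_multiplier=1.0,
    rng=rng,
)
```

Because every example's contribution is capped at `clip_norm`, the noise scale can be set relative to that cap, which is what lets theory bound any one person's influence.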

What changes when only the final model is revealed

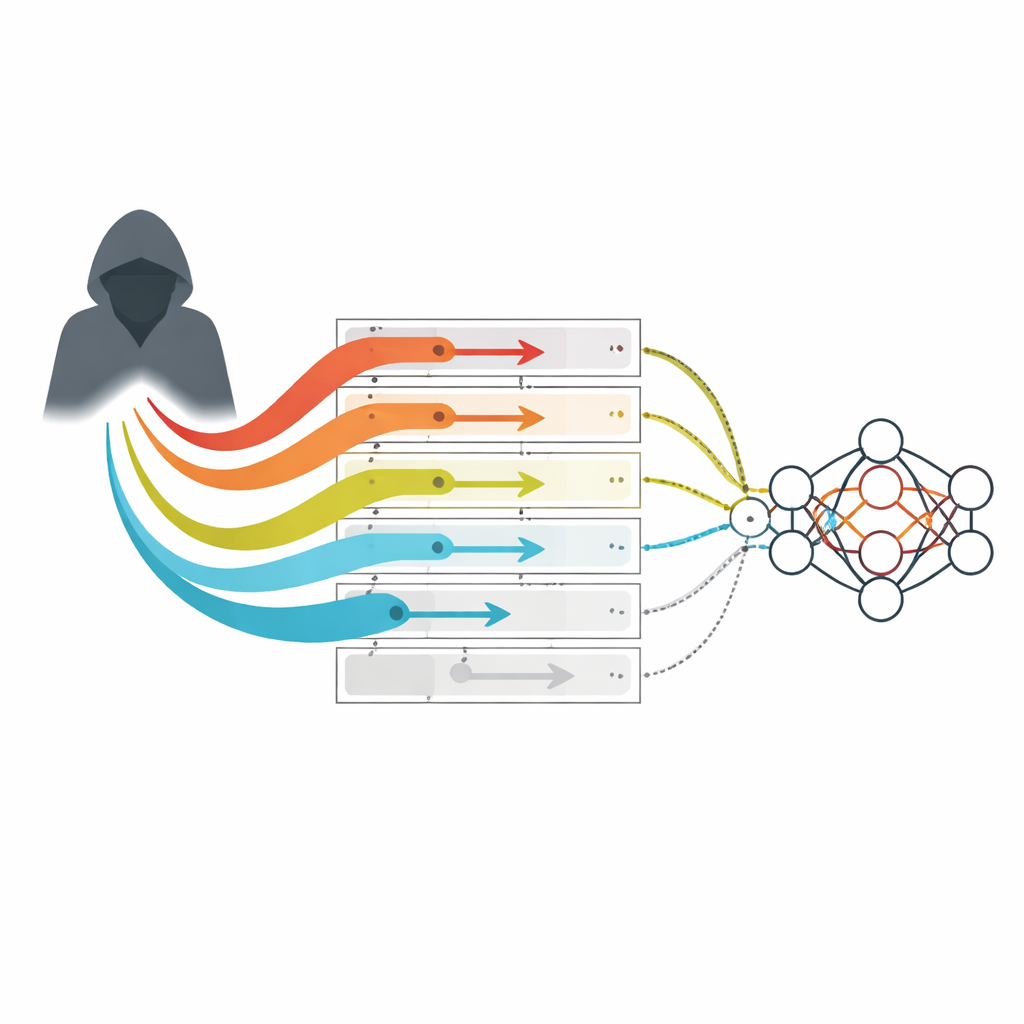

Most earlier audits assumed a powerful adversary who can see every intermediate model checkpoint during training. In reality, many organizations only release the final model, not the full training history. This more realistic setting is called the hidden state threat model. Recent theoretical work suggested that, at least for simple convex problems, hiding intermediate models might amplify privacy over time: data used early in training gets “washed out” by later noisy updates. However, modern deep learning relies on highly non-convex models, where the loss surface is rugged and complex. It was unclear whether the same amplification really happens there, or whether existing attacks were simply too weak to expose the full privacy loss.

A new way for adversaries to push the model

The author introduces a new family of “gradient-crafting” adversaries tailored to the hidden state model. Instead of trying to design a special data point and then watching how its loss changes during training (as in traditional loss-based attacks), these adversaries directly prescribe the sequence of gradients that would be applied if a worst-case data point were present. They choose gradients that always hit the clipping threshold and align with rarely used parameter directions, making their influence easier to detect even without seeing intermediate models. Two simple variants are studied: one that randomly picks a parameter direction, and another that simulates the training process to find the least-updated direction before injecting strong, repeated gradients along it.
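The two variants described above can be sketched as follows. This is a simplified illustration, assuming the crafted gradient points along a single parameter coordinate and that "least-updated" means the coordinate with the smallest total absolute movement in a simulated run. All names here are hypothetical, and the paper's actual construction may differ.

```python
import random

def crafted_gradient(dim, index, clip_norm):
    """Gradient along one coordinate whose norm equals the clipping threshold."""
    grad = [0.0] * dim
    grad[index] = clip_norm
    return grad

def random_direction(dim, rng):
    """Variant 1: pick a parameter coordinate uniformly at random."""
    return rng.randrange(dim)

def least_updated_direction(simulated_updates):
    """Variant 2: pick the coordinate that moved least across simulated steps."""
    dim = len(simulated_updates[0])
    movement = [sum(abs(step[i]) for step in simulated_updates) for i in range(dim)]
    return min(range(dim), key=movement.__getitem__)

def attack_score(initial_params, final_params, index):
    """How far the final model moved along the crafted direction.

    Gradient descent subtracts the gradient, so a positive crafted gradient
    pushes the parameter down; a larger score suggests the gradient was used.
    """
    return initial_params[index] - final_params[index]

# Usage: choose a direction from a simulated run, then build the gradient.
simulated = [[0.5, -0.1, 0.3], [-0.4, 0.05, 0.2]]
index = least_updated_direction(simulated)
gradient = crafted_gradient(dim=3, index=index, clip_norm=1.0)
```

The score uses only the initial and final parameters, which reflects the hidden state setting: no intermediate checkpoint is consulted.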

What the experiments reveal about real privacy risk

Using this framework, the paper audits DP-SGD on image and tabular datasets with common architectures such as convolutional and residual networks, as well as a small fully connected model. When the crafted gradient is used at every training step, the privacy loss measured by the new adversaries matches the theoretical upper bound, even though they see only the final model. This means that, in this extreme case, hiding intermediate checkpoints does not provide any extra privacy at all. When the crafted gradient is inserted less often, the picture changes: for large batches relative to the noise level, the audits remain close to theory (again suggesting little real amplification), but for smaller batches and higher noise, a gap appears that points to genuine, though modest, privacy amplification in non-convex settings.
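One common way to turn an attack's success into a number comparable with theory is to convert its error rates into a lower bound on epsilon. The sketch below uses the standard hypothesis-testing inequalities for (epsilon, delta)-differential privacy with raw point estimates of the error rates. Whether the paper uses this exact conversion is an assumption; real audits usually replace the point estimates with statistical confidence bounds.

```python
import math

def empirical_epsilon(fpr, fnr, delta):
    """Lower bound on epsilon implied by an attack's error rates.

    Any (epsilon, delta)-DP mechanism forces every attack to satisfy
    fpr + exp(epsilon) * fnr >= 1 - delta, and the same with fpr and fnr
    swapped. Solving each inequality for epsilon gives a lower bound.
    """
    candidates = []
    for a, b in ((fpr, fnr), (fnr, fpr)):
        numerator = 1.0 - delta - a
        if numerator <= 0:
            continue  # this inequality gives no information
        if b == 0:
            return math.inf  # a perfect attack on point estimates
        candidates.append(math.log(numerator / b))
    return max(0.0, max(candidates)) if candidates else 0.0

# Usage: an attack that is wrong 10% of the time in each direction.
eps_lower = empirical_epsilon(fpr=0.1, fnr=0.1, delta=0.0)
```

If this measured value sits close to the theoretical epsilon, the accounting is essentially tight; a persistent gap is what would indicate privacy amplification or a weak attack.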

Peeking inside the worst-case limit

To understand the absolute limits of privacy in the hidden state model, the paper also studies a more extreme theoretical adversary that not only crafts gradients but also designs an entire loss landscape to keep the influence of a special data point alive across iterations. In this controlled setting, the results cleanly separate two regimes: with large batch sizes, privacy accounting based on standard theory is essentially tight, but with small batches and substantial noise, early information about a data point is partly forgotten over time. Crucially, this amplification is weaker than what is known for simple convex problems and never fully erases the privacy risk.

What this means for users and practitioners

For non-experts, the takeaway is that simply hiding the training history of a deep learning model does not magically guarantee much stronger privacy. When a person’s data is used very frequently during training, their risk is close to what today’s conservative theory already predicts. Some extra protection does emerge in more favorable regimes—small batches with significant noise—but it is modest and does not drive the risk to zero. These findings both validate parts of existing privacy accounting and highlight its limits, offering a clearer, more realistic picture of how much protection DP-SGD can provide when only the final model is shared.

Citation: Bhuekar, A. Tighter privacy auditing of differentially private stochastic gradient descent in the hidden state threat model. Sci Rep 16, 8365 (2026). https://doi.org/10.1038/s41598-026-38537-0

Keywords: differential privacy, DP-SGD, privacy auditing, machine learning security, hidden state model