Clear Sky Science

An intracortical brain-machine interface based on macaque ventral premotor activity

Teaching the Brain to Move a Cursor

Imagine guiding a computer cursor or robotic arm using only your thoughts, even if your muscles can no longer move. Brain–machine interfaces (BMIs) aim to make that possible by translating brain activity into commands for external devices. Most systems so far have tapped into one main movement area of the brain, but what happens if that region is damaged, as in stroke or ALS? This study asks whether another nearby area, usually linked to planning hand actions and watching others move, can also reliably drive a BMI.

A New Brain Area Joins the Team

Classic BMIs mainly read signals from the primary motor cortex, the strip of brain tissue that directly controls voluntary movements, and from a neighboring planning region called dorsal premotor cortex. The researchers turned their attention to a different neighbor: the ventral premotor cortex, specifically a zone called F5c. In monkeys, F5c is rich in cells that fire when the animal reaches and grasps objects and even when it simply watches actions on a screen. That mix of movement and observation responses suggested F5c might be well suited to control a cursor or a robotic “avatar” without requiring the body to move.

Monkeys, Microelectrodes, and Moving Targets

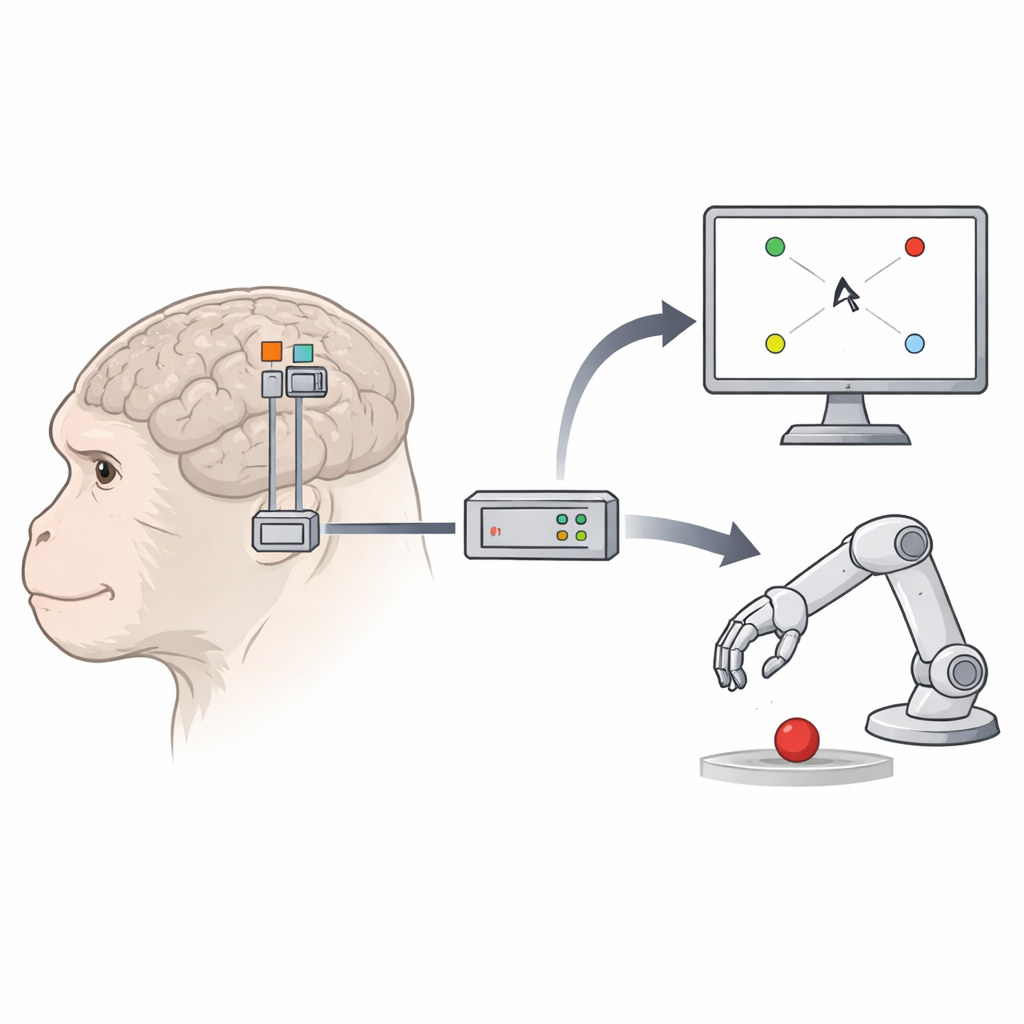

Two macaque monkeys were implanted with tiny 96-electrode grids in three spots: primary motor cortex, dorsal premotor cortex, and F5c. In daily sessions, the animals performed several visually simple but behaviorally demanding tasks. In one task, they touched the center of a screen and then reached to one of eight outer targets, while a small on-screen square cursor moved from the center to the same target. In a second task, they kept their hand still and simply watched the cursor travel to the targets. In a third, more life-like setup, the cursor was replaced by a 3D robot arm avatar reaching toward targets in a virtual scene. Across these tasks, the team could compare how well each brain area drove cursor or avatar movements.

How Brain Signals Became Smooth Motion

During a training phase, the cursor or avatar followed computer-generated, gently curved paths while the monkeys either moved or watched. At the same time, the electrodes recorded rapid bursts of brain activity. The researchers then trained a decoder, a mathematical tool that learns to map patterns of brain firing to the velocities of the cursor or avatar on the screen. To keep only the most informative channels, they chose electrodes whose activity tracked movement direction and speed. The decoder was built on a method that isolates the brain patterns most closely tied to behavior, extended with a non-linear step so that it could capture more complex relationships between neural activity and motion. In the decoding phase, the computer stopped driving the cursor or avatar; instead, the decoder used live brain signals, updated every 50 milliseconds, to steer the on-screen movement. The decoder was periodically retrained in the background so it could adapt as neural responses shifted over time.
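To make the idea of a velocity decoder concrete, here is a minimal sketch in Python. It is not the study's decoder: it swaps in plain ridge regression (a linear fit) and runs on simulated, cosine-tuned channels, so the channel count, noise level, and every function name below are assumptions made for illustration. The only detail taken from the text is the 50 millisecond update interval.

```python
import numpy as np

BIN_S = 0.05  # 50 ms update interval, the one value taken from the article


def simulate_rates(velocity, preferred_dirs, rng, noise=0.5):
    """Hypothetical channels whose firing rate varies linearly with velocity.

    velocity: (T, 2) array; preferred_dirs: (C, 2) array of unit vectors.
    """
    baseline = 5.0  # assumed resting rate, arbitrary units
    rates = baseline + velocity @ preferred_dirs.T
    return rates + noise * rng.standard_normal(rates.shape)


def fit_ridge_decoder(rates, velocity, lam=1.0):
    """Fit weights mapping binned rates (plus a bias column) to 2D velocity."""
    X = np.hstack([rates, np.ones((rates.shape[0], 1))])
    reg = lam * np.eye(X.shape[1])
    reg[-1, -1] = 0.0  # leave the bias term unpenalized
    return np.linalg.solve(X.T @ X + reg, X.T @ velocity)


def decode_velocity(rates, W):
    """Apply the fitted weights to new rates, one velocity per 50 ms bin."""
    X = np.hstack([rates, np.ones((rates.shape[0], 1))])
    return X @ W


def integrate_cursor(velocity, start=(0.0, 0.0)):
    """Turn decoded velocities into cursor positions, bin by bin."""
    return np.asarray(start) + np.cumsum(velocity * BIN_S, axis=0)


def run_demo(seed=0):
    rng = np.random.default_rng(seed)
    n_channels, n_train, n_test = 40, 2000, 400  # assumed sizes
    angles = rng.uniform(0.0, 2.0 * np.pi, n_channels)
    pds = np.column_stack([np.cos(angles), np.sin(angles)])

    # "Training phase": velocities are known while rates are recorded.
    v_train = rng.standard_normal((n_train, 2))
    W = fit_ridge_decoder(simulate_rates(v_train, pds, rng), v_train)

    # "Decoding phase": velocities are estimated from rates alone.
    v_test = rng.standard_normal((n_test, 2))
    v_hat = decode_velocity(simulate_rates(v_test, pds, rng), W)
    corr = [np.corrcoef(v_hat[:, k], v_test[:, k])[0, 1] for k in range(2)]
    return v_hat, integrate_cursor(v_hat), corr


if __name__ == "__main__":
    _, path, corr = run_demo()
    print("final cursor position:", path[-1])
    print("velocity correlation (x, y):", corr)
```

A real system would add the steps the paragraph mentions and this sketch omits: choosing well-tuned electrodes, a non-linear stage, and periodic retraining as neural responses drift.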

How Well Did the “New” Area Perform?

The key question was whether F5c could match or come close to the performance of the traditional control areas. In both monkeys, F5c-based decoding initially lagged behind when moving the cursor, especially when the animals were only watching and not moving their own hand. But as sessions progressed—and as more electrodes provided reliable movement-related signals—F5c caught up. In several conditions, its performance equaled that of primary motor cortex and even surpassed it in later sessions for passive cursor control. When controlling the robot avatar, overall success was lower across all areas, but F5c still supported meaningful control, especially when combined with a gentle assistive algorithm that subtly guided the avatar toward the target. Importantly, neurons in all three regions showed similar patterns of directional tuning and population activity during the computer-driven training phase and the active control phase, with only a subset changing their preferred directions as the monkeys learned to drive the BMI.
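The article does not spell out how the assistive algorithm works. One common way to provide such gentle guidance, shown here purely as an assumption, is to blend the decoded velocity with a velocity of the same speed that points straight at the target. The function name `assisted_velocity` and the `assist` weight are hypothetical and not taken from the study.

```python
import numpy as np


def assisted_velocity(decoded_v, cursor_pos, target_pos, assist=0.2):
    """Blend decoded velocity with a target-directed velocity (assumed scheme).

    assist=0.0 leaves the decoded velocity untouched; assist=1.0 keeps the
    decoded speed but points it straight at the target.
    """
    decoded_v = np.asarray(decoded_v, dtype=float)
    to_target = np.asarray(target_pos, dtype=float) - np.asarray(cursor_pos, dtype=float)
    dist = np.linalg.norm(to_target)
    if dist == 0.0:
        # Already on the target: there is no direction to assist toward.
        return (1.0 - assist) * decoded_v
    speed = np.linalg.norm(decoded_v)
    ideal_v = speed * to_target / dist
    return (1.0 - assist) * decoded_v + assist * ideal_v


if __name__ == "__main__":
    # Decoded motion points up, the target lies to the right.
    print(assisted_velocity([0.0, 1.0], [0.0, 0.0], [2.0, 0.0], assist=0.5))
```

A small `assist` value keeps most of the control with the brain signal while nudging the movement in the right direction, which is the trade-off the word "gentle" suggests.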

What This Means for Future Neurotechnology

For a non-specialist, the crucial takeaway is that ventral premotor area F5c, once thought of mainly as a planner and observer of actions, can also serve as a practical control hub for brain–machine interfaces. When enough movement-related signals are available, decoders trained on F5c activity can guide a screen cursor or assistive robot nearly as well as those based on primary motor cortex, the classic movement area. This suggests that future clinical BMIs might not have to rely on a single cortical region. For people whose primary motor cortex is damaged, nearby planning regions like ventral premotor cortex could provide an alternative pathway to regain control over digital tools, prosthetic devices, or mobility aids.

Citation: De Schrijver, S., Garcia Ramirez, J., Iregui, S. et al. An intracortical brain-machine interface based on macaque ventral premotor activity. Sci Rep 16, 8407 (2026). https://doi.org/10.1038/s41598-026-38536-1

Keywords: brain-machine interface, motor cortex, premotor cortex, neural decoding, prosthetic control