Clear Sky Science

A wavelet-based frequency-domain approach for accurate multi-crop disease detection

Smarter Eyes for Crop Health

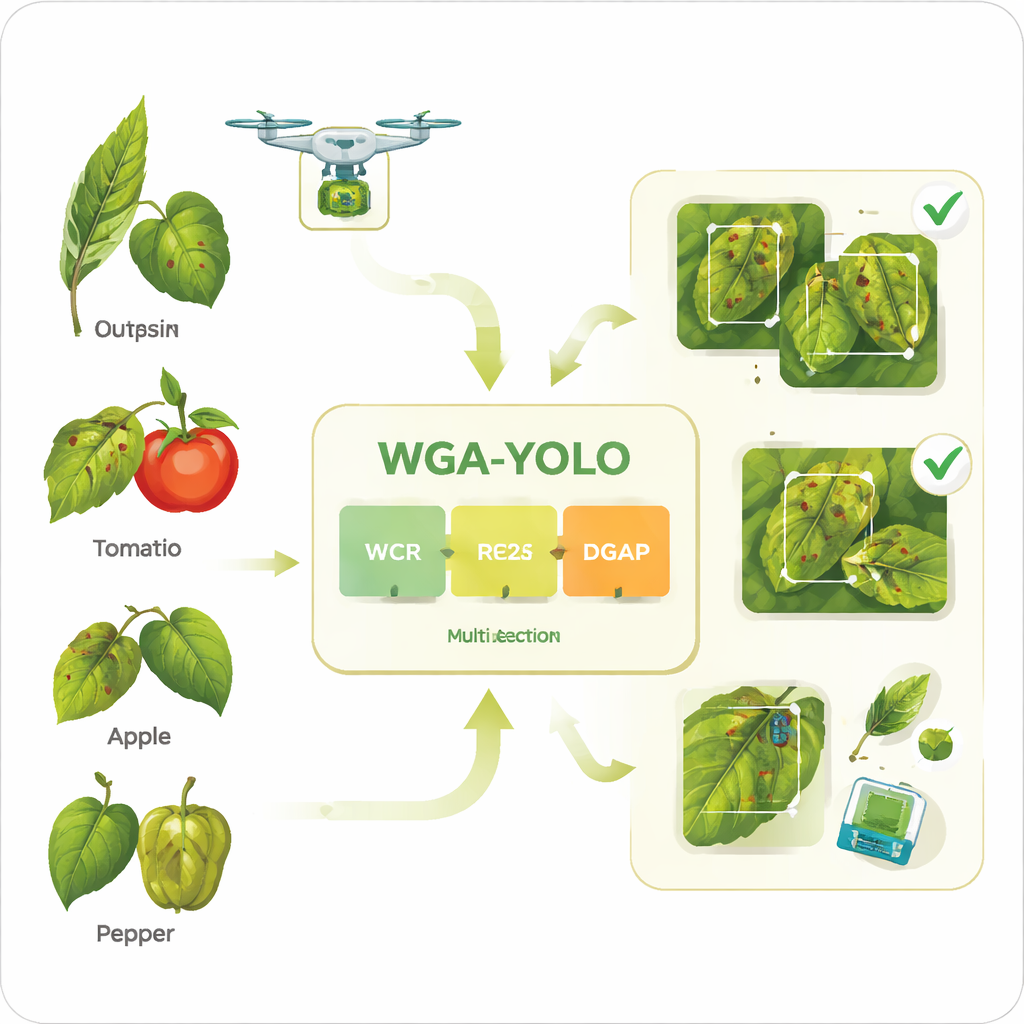

Farmers and researchers increasingly rely on cameras and drones to spot plant diseases early, before they spread and devastate harvests. But real fields are messy: leaves overlap, sunlight changes by the second, and many disease spots are tiny and easily confused with normal leaf texture. This paper presents WGA-YOLO, a compact artificial-intelligence system designed to find diseased areas on many kinds of crops quickly and accurately, even under such challenging conditions.

Why Finding Leaf Spots Is So Hard

At first glance, recognizing a sick leaf in a photo seems straightforward. In practice, it is anything but. In real fields, disease lesions can be very small, irregularly shaped, and scattered across leaves. Their color and texture often resemble natural patterns like veins or speckles. Lighting may be harsh, dim, or patchy due to shadows. Traditional machine‑learning systems depend on hand‑crafted visual cues and tend to break down when the background gets cluttered or the lighting shifts. Newer deep‑learning systems, such as standard YOLO models, are more powerful, but they can still miss tiny lesions or require heavy computing power that is impractical for low‑cost devices used on farms.

Cleaning Up the View of Plant Diseases

To train and test any detection system, a reliable dataset is essential. The authors began by revisiting a popular public collection of plant images called PlantDoc. They found many issues that could mislead an AI model: missing or inconsistent labels, drawings instead of real photos, and images with watermarks or handwritten notes. They carefully re‑checked, corrected, and removed problematic samples, then expanded the dataset with new, clearly documented images from public sources. The result, PlantDoc_boost, includes 13 common crops and 17 disease types, with realistic outdoor scenes and many small diseased areas. This cleaner, richer dataset better reflects what a camera actually “sees” in the field and makes it possible to test whether a model will generalize beyond the lab.
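The automated part of such an audit can be pictured as a rule-based pass over annotation records. The sketch below is a minimal illustration under stated assumptions: the `Sample` and `Box` record layout, the `VALID_LABELS` set and the rejection reasons are hypothetical stand-ins for the problems the authors describe (missing or inconsistent labels, drawings instead of photos, watermarks or handwritten notes), not their actual tooling. It only flags samples. The authors also corrected labels by hand, which no script replaces.

```python
from dataclasses import dataclass, field

# Hypothetical label set and record layout, for illustration only;
# PlantDoc_boost's real class names and file format are not shown here.
VALID_LABELS = {"apple_scab", "corn_blight", "tomato_leaf_mold"}


@dataclass
class Box:
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float


@dataclass
class Sample:
    image_id: str
    width: int
    height: int
    boxes: list = field(default_factory=list)
    is_real_photo: bool = True   # False for drawings or illustrations
    has_overlay: bool = False    # watermark or handwritten notes present


def box_is_valid(box, width, height):
    """Known label, positive area, and fully inside the image."""
    return (box.label in VALID_LABELS
            and 0 <= box.x_min < box.x_max <= width
            and 0 <= box.y_min < box.y_max <= height)


def audit(samples):
    """Return (kept samples, [(image_id, reason), ...] for flagged ones)."""
    kept, flagged = [], []
    for s in samples:
        if not s.is_real_photo:
            flagged.append((s.image_id, "not a real photo"))
        elif s.has_overlay:
            flagged.append((s.image_id, "watermark or handwritten notes"))
        elif not s.boxes:
            flagged.append((s.image_id, "missing labels"))
        elif not all(box_is_valid(b, s.width, s.height) for b in s.boxes):
            flagged.append((s.image_id, "inconsistent label or box"))
        else:
            kept.append(s)
    return kept, flagged


samples = [
    Sample("img1", 640, 480, [Box("apple_scab", 10, 10, 100, 100)]),
    Sample("img2", 640, 480, []),
    Sample("img3", 640, 480, [Box("apple_scab", 10, 10, 100, 100)], is_real_photo=False),
    Sample("img4", 640, 480, [Box("unknown_label", 10, 10, 100, 100)]),
]
kept, flagged = audit(samples)
print([s.image_id for s in kept])  # ['img1']
print(flagged)  # [('img2', 'missing labels'), ('img3', 'not a real photo'), ('img4', 'inconsistent label or box')]
```

Flagged samples would then go to a person for correction or removal, which matches the re-check, correct, or remove workflow described above.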

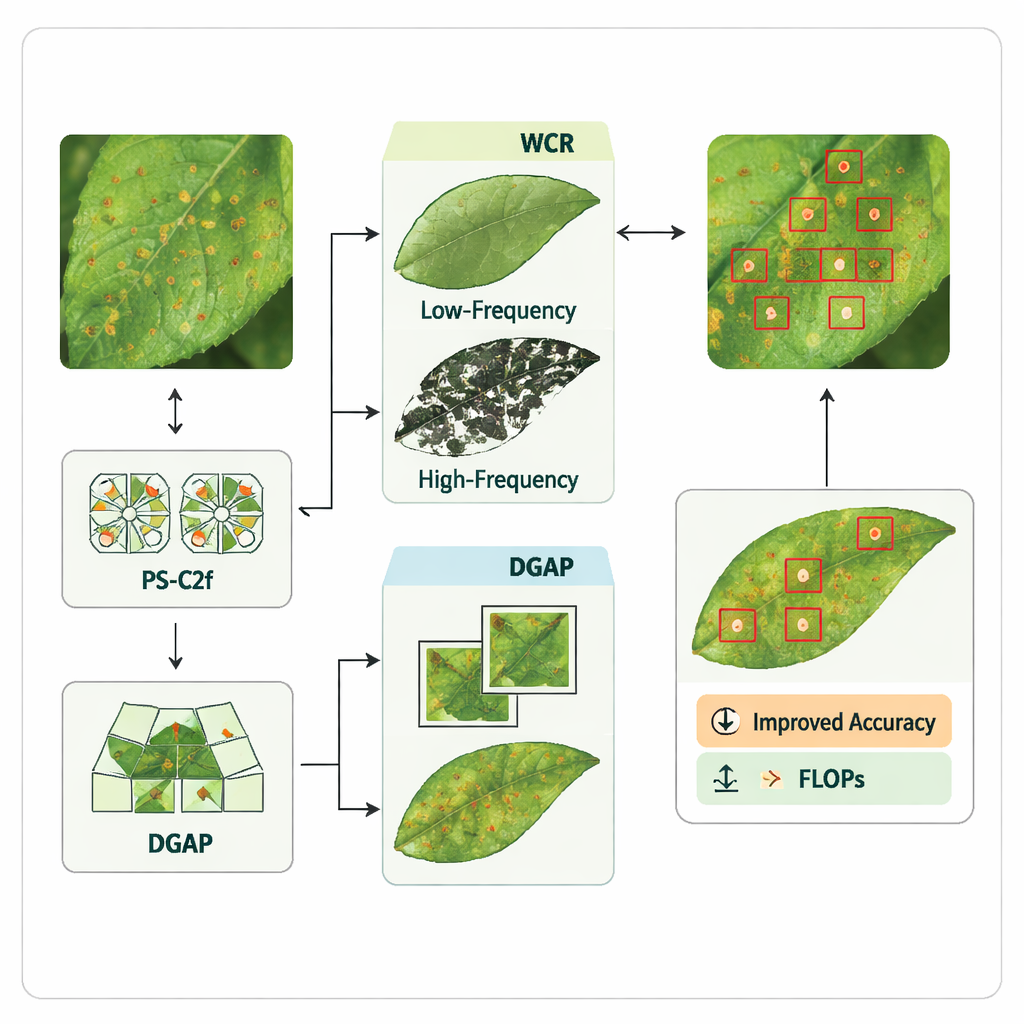

How the New Model Looks Inside

WGA‑YOLO builds on YOLOv8n, a popular one‑stage object detector known for its speed. The authors redesigned key parts of the network to preserve fine details while keeping it lightweight. First, they replace some standard downsampling steps with a module called Wavelet Channel Recalibration (WCR). Instead of simply shrinking the internal feature maps and discarding detail, WCR performs a wavelet transform that splits features into smooth, low‑frequency content and sharp, high‑frequency edges and textures. By recombining these components rather than throwing the high‑frequency part away, the network keeps both the overall shape of leaves and the tiny spots that signal disease, all with very little extra computation.
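To see why a wavelet split loses less than ordinary downsampling, consider a single-level 2D Haar transform, the simplest wavelet. This pure-Python sketch assumes the Haar wavelet and a plain nested-list grid; the paper's WCR may use a different wavelet, works on multi-channel tensors, and adds a learned channel recalibration that is not shown here. The function names are hypothetical.

```python
def haar_dwt2(x):
    """Single-level 2D Haar transform of an H x W grid (H and W even).

    Returns four H/2 x W/2 sub-bands: one smooth band and three detail
    bands that respond to horizontal, vertical and diagonal changes.
    """
    h, w = len(x), len(x[0])
    if h % 2 or w % 2:
        raise ValueError("height and width must be even")
    bands = {"low": [], "detail_h": [], "detail_v": [], "detail_d": []}
    for i in range(0, h, 2):
        rows = {k: [] for k in bands}
        for j in range(0, w, 2):
            a, b = x[i][j], x[i][j + 1]          # top-left, top-right
            c, d = x[i + 1][j], x[i + 1][j + 1]  # bottom-left, bottom-right
            rows["low"].append((a + b + c + d) / 2)       # local average
            rows["detail_h"].append((a + b - c - d) / 2)  # top vs bottom
            rows["detail_v"].append((a - b + c - d) / 2)  # left vs right
            rows["detail_d"].append((a - b - c + d) / 2)  # diagonal
        for k in bands:
            bands[k].append(rows[k])
    return bands


def haar_idwt2(bands):
    """Invert haar_dwt2 exactly, rebuilding each 2x2 block."""
    low, dh, dv, dd = (bands[k] for k in ("low", "detail_h", "detail_v", "detail_d"))
    out = []
    for i in range(len(low)):
        top, bottom = [], []
        for j in range(len(low[0])):
            s, hz, vt, dg = low[i][j], dh[i][j], dv[i][j], dd[i][j]
            top += [(s + hz + vt + dg) / 2, (s + hz - vt - dg) / 2]
            bottom += [(s - hz + vt - dg) / 2, (s - hz - vt + dg) / 2]
        out += [top, bottom]
    return out


def stride2(x):
    """Plain stride-2 subsampling: keep the top-left pixel of each 2x2 block."""
    return [row[::2] for row in x[::2]]


# A 4x4 map with a one-pixel "lesion" at an odd position.
spot = [[0, 0, 0, 0],
        [0, 1, 0, 0],
        [0, 0, 0, 0],
        [0, 0, 0, 0]]
print(stride2(spot))             # [[0, 0], [0, 0]]  (the spot is lost)
bands = haar_dwt2(spot)
print(bands["low"])              # [[0.5, 0.0], [0.0, 0.0]]
print(haar_idwt2(bands) == spot)  # True
```

Stride-2 subsampling keeps one pixel out of every 2×2 block, so the one-pixel spot disappears, whereas the four Haar sub-bands together hold as many values as the input and reconstruct it exactly. In a network, the sub-bands would typically be stacked as extra channels, halving spatial resolution without discarding information.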

Zooming In on Tiny Lesions at Many Scales

Small lesions are particularly easy to overlook, so the authors introduce a customized building block called PS‑C2f. It uses “pinwheel‑shaped” filters that look in several directions around each point, making the model more sensitive to subtle changes in shape and texture that outline lesion boundaries. Another new piece, DGAP (Dynamic Group Attention Pooling), helps the network combine information from different scales—from small spots to nearly leaf‑sized regions. By learning how much weight to give local, mid‑range, and global views, DGAP encourages the model to highlight truly important lesion areas while downplaying confusing background patterns, such as veins or soil textures.
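Both ideas in this paragraph can be illustrated in a few lines. The sketch below is a simplified illustration built on assumptions, not the authors' PS‑C2f or DGAP: `pinwheel_responses` sums pixels along four arms (right, down, left, up) fanning out from each point, echoing the directional “pinwheel” layout, and `scale_weighted_pool` blends local, mid-range and global averages using softmax weights. In a real network the arm kernels and scale weights would be learned (and DGAP's weights are presumably input-dependent and computed per channel group); here they are fixed arguments, and all names, radii and logits are hypothetical.

```python
import math

# Four arm directions as (row step, column step); a simplified stand-in
# for the pinwheel layout, not the published PS-C2f module.
DIRECTIONS = {"right": (0, 1), "down": (1, 0), "left": (0, -1), "up": (-1, 0)}


def pinwheel_responses(x, k=3, weights=None):
    """For every pixel, weighted sum of k pixels along each arm (zero padding)."""
    h, w = len(x), len(x[0])
    weights = weights or [1.0] * k  # fixed here; learned in a real network
    out = {}
    for name, (di, dj) in DIRECTIONS.items():
        resp = [[0.0] * w for _ in range(h)]
        for i in range(h):
            for j in range(w):
                for step in range(k):
                    r, c = i + di * step, j + dj * step
                    if 0 <= r < h and 0 <= c < w:
                        resp[i][j] += weights[step] * x[r][c]
        out[name] = resp
    return out


def box_mean(x, i, j, radius):
    """Mean over the (2*radius+1)^2 window centred on (i, j), clipped at borders."""
    rows = range(max(0, i - radius), min(len(x), i + radius + 1))
    cols = range(max(0, j - radius), min(len(x[0]), j + radius + 1))
    vals = [x[r][c] for r in rows for c in cols]
    return sum(vals) / len(vals)


def scale_weighted_pool(x, logits, local_r=1, mid_r=3):
    """Blend local, mid-range and global means with softmax(logits) weights."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]  # numerically stable softmax
    total = sum(exps)
    w_local, w_mid, w_global = (e / total for e in exps)
    global_mean = sum(map(sum, x)) / (len(x) * len(x[0]))
    return [[w_local * box_mean(x, i, j, local_r)
             + w_mid * box_mean(x, i, j, mid_r)
             + w_global * global_mean
             for j in range(len(x[0]))]
            for i in range(len(x))]


# A 5x5 map with one bright "lesion" pixel in the centre.
spot5 = [[0] * 5 for _ in range(5)]
spot5[2][2] = 1
arms = pinwheel_responses(spot5, k=3)
print(arms["right"][2])  # [1.0, 1.0, 1.0, 0.0, 0.0]
pooled = scale_weighted_pool(spot5, logits=[2.0, 0.0, -2.0])
```

Each arm only “sees” the spot when it points toward it, so comparing arms indicates the direction of a boundary. For the pooling, local-heavy logits keep the map sharp around the spot, while shifting weight to the global term flattens it toward the image-wide average; a learned weighting is meant to balance these two effects.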

How Well It Works in Practice

Tested on the PlantDoc_boost dataset, WGA‑YOLO detects diseased regions more accurately than several well‑known alternatives, including Faster R‑CNN and multiple versions of YOLO, while using fewer parameters and slightly less computation than its YOLOv8n starting point. It also performs strongly on several external datasets of corn, tomato, and apple diseases, which have simpler scenes but cover many images and disease types. Across these tests, WGA‑YOLO is better at focusing on true lesion areas and less easily fooled by distracting textures or lighting. This combination of accuracy and efficiency suggests that the model could run on modest hardware, such as edge devices mounted on drones or farm robots, and provide near‑real‑time guidance.

What This Means for Farmers

In plain terms, this work delivers a sharper and more efficient digital “eye” for crops. By cleaning up the training data and re‑engineering the way the AI model handles fine detail and scale, the authors created a detector that finds diseased areas more accurately without demanding bulky computers. That could help farmers catch problems earlier, target pesticide use more precisely, and reduce both costs and environmental impact. While further tuning is needed for very early, subtle infections and for deployment on the smallest devices, WGA‑YOLO marks a significant step toward practical, field‑ready disease monitoring across many different crops.

Citation: Zhao, J., Liang, Y., Wei, G. et al. A wavelet-based frequency-domain approach for accurate multi-crop disease detection. Sci Rep 16, 7099 (2026). https://doi.org/10.1038/s41598-026-38476-w

Keywords: crop disease detection, precision agriculture, computer vision, YOLO, plant health monitoring