Clear Sky Science

Query-efficient decision-based adversarial attack with low query budget

Why tiny glitches in images can fool smart machines

Modern artificial intelligence can spot faces, animals, and everyday objects with impressive accuracy. Yet these same systems can be tricked by changes to an image so small that people can barely see them. This paper explores a new way to create such “fooling” images while asking the AI as few questions as possible, revealing both how fragile today’s models can be and how attackers might exploit them in the real world.

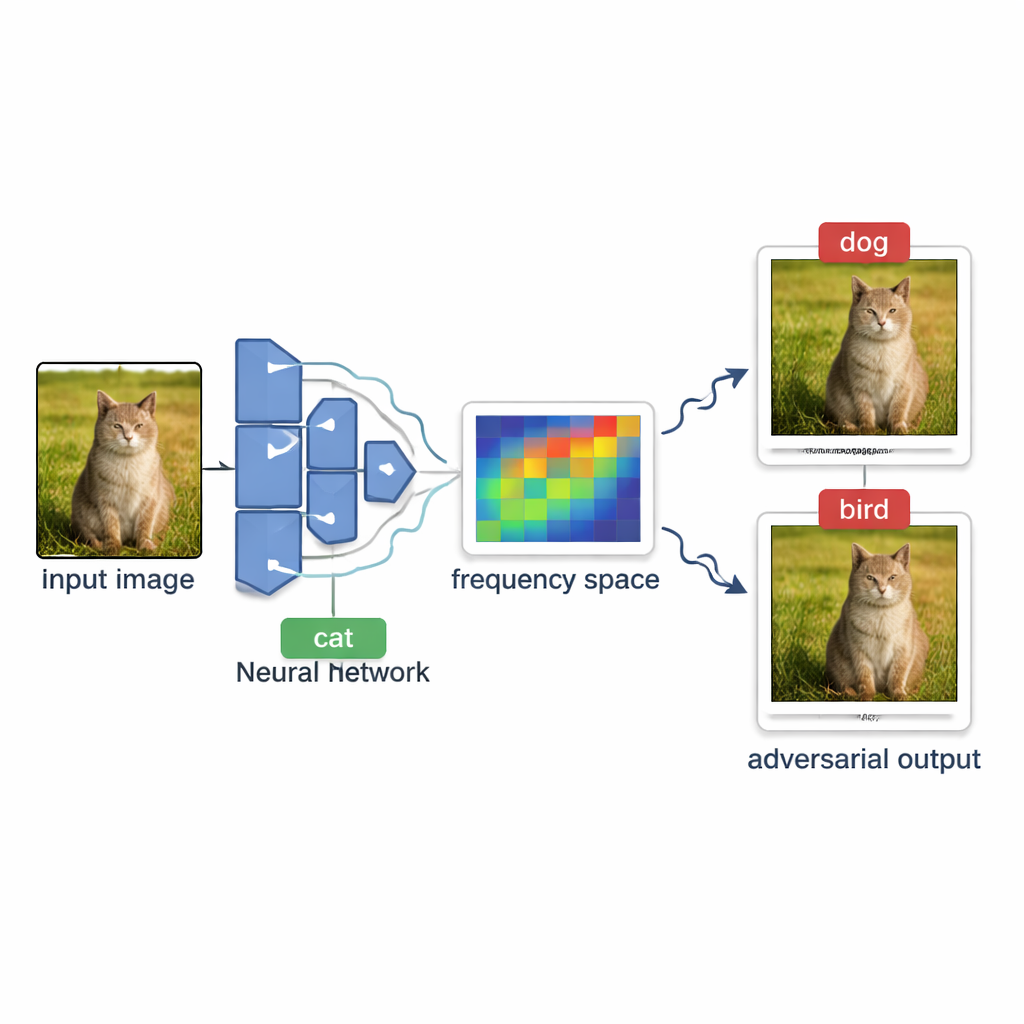

How attackers probe AI systems from the outside

In many real services, such as online photo tagging or content filters, the model behaves like a black box. Outsiders can upload an image and see only the final label, such as “dog” or “stop sign,” but never the internal confidence scores or the model’s structure. Creating a misleading image under these conditions is called a decision-based black-box attack. The challenge is to gently nudge a normal picture until the model mislabels it, without being able to see how “close” the model is to changing its mind, and without sending so many test images that the service notices or the attack becomes too costly.
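The label-only setting can be made concrete with a small sketch. This is an illustration rather than code from the paper: `LabelOnlyOracle` and `toy_classifier` are hypothetical names, and the toy classifier is a stand-in for a real model. The point is that the attacker sees only a label and must count every query against a fixed budget.

```python
from typing import Callable, Sequence

Image = Sequence[float]  # assumption: an image flattened to a list of pixel values


class LabelOnlyOracle:
    """Hypothetical wrapper: exposes only the predicted label and counts queries."""

    def __init__(self, classify: Callable[[Image], str], budget: int) -> None:
        self._classify = classify
        self.budget = budget
        self.queries = 0

    def label(self, image: Image) -> str:
        # Refuse to answer once the query budget is spent.
        if self.queries >= self.budget:
            raise RuntimeError("query budget exhausted")
        self.queries += 1
        return self._classify(image)

    def is_adversarial(self, image: Image, true_label: str) -> bool:
        # The only feedback available: did the label change or not?
        return self.label(image) != true_label


def toy_classifier(image: Image) -> str:
    # Stand-in model (assumption): dark images are "dog", bright ones "cat".
    return "dog" if sum(image) / len(image) < 0.5 else "cat"


oracle = LabelOnlyOracle(toy_classifier, budget=1000)
print(oracle.is_adversarial([0.9, 0.9], true_label="dog"), oracle.queries)
```

Every call to `is_adversarial` costs one query, which is why an attack under a 1,000-query limit has to choose its test images carefully.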

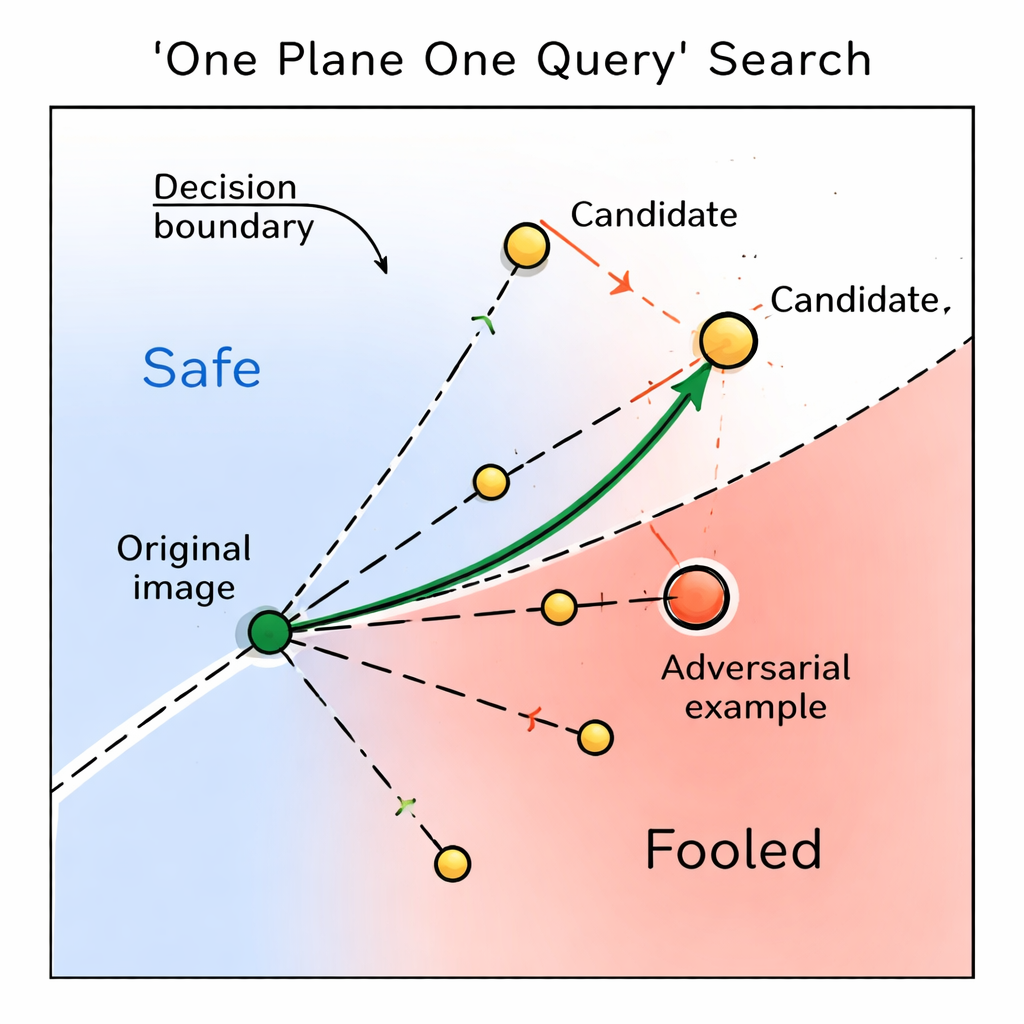

A new way to search with very few questions

The authors introduce OPOQA (One Plane One Query Attack), a method designed to be stingy with queries while still crafting high-quality adversarial images. Instead of repeatedly probing along a single guessed direction, OPOQA works in rounds. In each round, it starts from an already misleading image and the original clean image, then proposes several new candidate images that lie in carefully chosen directions. Crucially, each direction is probed at most once, which frees up the limited query budget to explore many more possibilities rather than over-refining a single guess.

Riding the smooth waves in an image

To choose promising directions, OPOQA leans on the idea that the most effective, hard-to-see changes are often smooth, broad shifts rather than sharp pixel-level noise. The method uses a mathematical tool called the discrete cosine transform to move the image into a “frequency” view, where slow, gentle variations sit in a compact region. It randomly samples a few of these low-frequency components, converts them back into normal pixel changes, and uses them as basic directions for exploration. Each sampled direction helps define a flat two-dimensional surface that connects the original image, the current adversarial image, and a new candidate. On each of these surfaces, OPOQA picks a single point to test, balancing two goals: getting closer to the original picture while still likely pushing the model into a wrong decision.
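The idea of sampling a smooth direction from low-frequency components can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `low_frequency_direction`, the Gaussian sampling of coefficients, and the `keep` parameter are all hypothetical, and the inverse cosine transform is written out by hand without the usual orthonormal scaling because the result is normalized afterwards. How OPOQA picks the single test point on each plane is not shown here.

```python
import math
import random


def low_frequency_direction(size: int, keep: int, rng: random.Random):
    """Return a size x size unit-norm pattern built only from low-frequency cosine terms.

    Assumption: only the keep x keep lowest-frequency coefficients are nonzero.
    """
    # Random coefficients in the low-frequency corner of the frequency grid.
    coeffs = [[rng.gauss(0.0, 1.0) for _ in range(keep)] for _ in range(keep)]
    # 1D cosine basis: basis[u][x] = cos(pi * (2x + 1) * u / (2 * size)).
    basis = [
        [math.cos(math.pi * (2 * x + 1) * u / (2 * size)) for x in range(size)]
        for u in range(keep)
    ]
    # Inverse transform (unscaled): sum the weighted 2D cosine patterns.
    pattern = [
        [
            sum(
                coeffs[u][v] * basis[u][y] * basis[v][x]
                for u in range(keep)
                for v in range(keep)
            )
            for x in range(size)
        ]
        for y in range(size)
    ]
    # Normalize so the direction has unit Euclidean length.
    norm = math.sqrt(sum(p * p for row in pattern for p in row))
    return [[p / norm for p in row] for row in pattern]


direction = low_frequency_direction(size=8, keep=2, rng=random.Random(0))
print(len(direction), len(direction[0]))
```

With a small `keep`, neighboring pixels in the returned pattern change slowly, which matches the "smooth, broad shifts" described above. Setting `keep=1` gives a perfectly flat pattern, the smoothest possible case.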

Picking the best candidate and adapting on the fly

Once OPOQA has generated a small set of candidate images, it measures how far each one is from the original image and sorts them from least to most changed. It then queries the model in that order. The moment it finds a candidate that the model misclassifies, it stops and treats that image as the new starting point for the next round. If none of the candidates fools the model, OPOQA keeps the previous best adversarial image but adjusts an internal knob that controls how conservative or aggressive the next set of steps will be. This “greedy” strategy, which always accepts the least-changed candidate that still fools the model and dynamically tunes the step size, lets the attack zero in on subtle, effective perturbations without wasting queries on unpromising directions.
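One round of this selection step might look like the sketch below. The names `greedy_round`, `l2_distance`, and `shrink` are hypothetical, and the update rule is an assumption: the summary says only that an internal knob is adjusted after a failed round, so multiplying the step by a fixed factor is a placeholder, not the paper's rule.

```python
import math
from typing import Callable, List, Sequence, Tuple

Image = Sequence[float]  # assumption: an image flattened to a list of pixel values


def l2_distance(a: Image, b: Image) -> float:
    """Euclidean distance between two flattened images."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def greedy_round(
    original: Image,
    current_adv: Image,
    candidates: List[Image],
    is_misclassified: Callable[[Image], bool],
    step: float,
    shrink: float = 0.5,  # assumed adjustment factor, not taken from the paper
) -> Tuple[Image, float, int]:
    """Query candidates from least to most changed; stop at the first that fools the model.

    Returns (adversarial image for the next round, step size, queries used).
    """
    ordered = sorted(candidates, key=lambda c: l2_distance(c, original))
    queries = 0
    for candidate in ordered:
        queries += 1  # each test costs exactly one model query
        if is_misclassified(candidate):
            return candidate, step, queries
    # No candidate fooled the model: keep the old image and adjust the step (assumed rule).
    return current_adv, step * shrink, queries


original = [0.0, 0.0]
current = [1.0, 1.0]
candidates = [[0.9, 0.9], [0.2, 0.2], [0.6, 0.6]]
fools_model = lambda img: sum(img) > 1.0  # toy decision rule standing in for the model
print(greedy_round(original, current, candidates, fools_model, step=1.0))
```

In the toy run, the closest candidate fails, the second closest succeeds, and the third is never queried, so the round spends two queries instead of three.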

What the experiments reveal about AI’s weak spots

The researchers tested OPOQA on 200 images from the large-scale ImageNet benchmark and six widely used neural network models, including Inception-v3, ResNet, VGG, DenseNet, and vision transformers. Under a strict limit of 1,000 model queries per image, OPOQA matched or outperformed several leading attack methods. For example, on Inception-v3 it successfully fooled the model on 94 percent of images while keeping changes so small that they were almost invisible to the human eye, improving on the previous best method by several percentage points. Across models, OPOQA tended to reach high success rates earlier—using fewer queries—though some competing methods caught up or surpassed it when given very large query budgets and time for fine-tuning.

What this means for everyday AI safety

The study shows that today’s vision systems can be tricked even when attackers see only final decisions and have limited opportunities to probe the model. By smartly exploring gentle, low-frequency changes and carefully rationing each query, OPOQA can create images that look the same to people but lead machines badly astray. For non-experts, the takeaway is that AI “seeing” is still quite brittle: it can be pushed off course in subtle ways that are hard to notice. Recognizing and studying such efficient attacks is a key step toward hardening real-world systems—such as security cameras, medical image tools, and autonomous vehicles—against manipulation that might otherwise go undetected.

Citation: Tuo, Y., Yin, M. & Che, S. Query-efficient decision-based adversarial attack with low query budget. Sci Rep 16, 6886 (2026). https://doi.org/10.1038/s41598-026-38428-4

Keywords: adversarial examples, black-box attacks, deep learning security, image classification, query-efficient attack