Clear Sky Science · en

HQA2LFS-handwriting quality assessment using an active learning framework in smartphones

Why your handwriting still matters

Even in an age of laptops and tablets, the way we write by hand still shapes how teachers judge schoolwork and how clinicians spot learning or movement problems. But checking handwriting page by page is slow and subjective. This study presents a smartphone-based system that can photograph handwritten pages and automatically estimate how clear, neat, and well‑spaced the writing is. By blending human expertise with machine learning, it aims to turn messy piles of notebooks into quick, reliable feedback for students, teachers, and health professionals.

Turning pages into measurable patterns

The researchers start with what a teacher already has: scanned or phone‑captured pages of student work, on both ruled and plain paper. Their software first cleans each page, removing noise and converting it to a sharp black‑and‑white image so that ink stands out clearly from the background. An optical character recognition engine then locates every handwritten word and cuts the page into many small "word patches." For each patch, the system measures how the strokes are distributed from top to bottom, whether lines lean or stay straight, how evenly words are spaced, and whether text hugs or drifts away from the notional baseline. These measurements translate the visual feel of a page into a structured table of numbers that a computer can learn from.
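As a rough illustration of the cleaning and measuring step, the sketch below binarizes a tiny grayscale patch and counts ink pixels row by row. It is not the authors' pipeline: the mean-intensity threshold, the half-of-peak baseline rule, and the names `binarize`, `row_profile`, and `baseline_row` are assumptions chosen for brevity, and the word-detection (OCR) stage is skipped entirely.

```python
from typing import List

Patch = List[List[int]]  # rows of pixels, top to bottom


def binarize(gray: Patch) -> Patch:
    """Mark a pixel as ink (1) when it is darker than the patch's mean intensity.

    Assumption: a simple global mean threshold stands in for whatever
    binarization the real system uses.
    """
    pixels = [p for row in gray for p in row]
    threshold = sum(pixels) / len(pixels)
    return [[1 if p < threshold else 0 for p in row] for row in gray]


def row_profile(ink: Patch) -> List[int]:
    """Count ink pixels in each row: the top-to-bottom stroke distribution."""
    return [sum(row) for row in ink]


def baseline_row(profile: List[int]) -> int:
    """Hypothetical baseline estimate: the lowest row holding at least half the peak ink count."""
    peak = max(profile)
    candidates = [i for i, count in enumerate(profile) if peak and count * 2 >= peak]
    return candidates[-1] if candidates else len(profile) - 1


# A 4x4 grayscale word patch (0 = black ink, 255 = white paper).
patch = [
    [250, 250, 250, 250],
    [250,  20,  30, 250],
    [ 10,  20,  30,  40],
    [250, 250,  25, 250],
]
profile = row_profile(binarize(patch))
```

Comparing the estimated baseline row across neighboring word patches would be one simple way to notice text that drifts up or down along a line.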

Seeing neatness the way people do

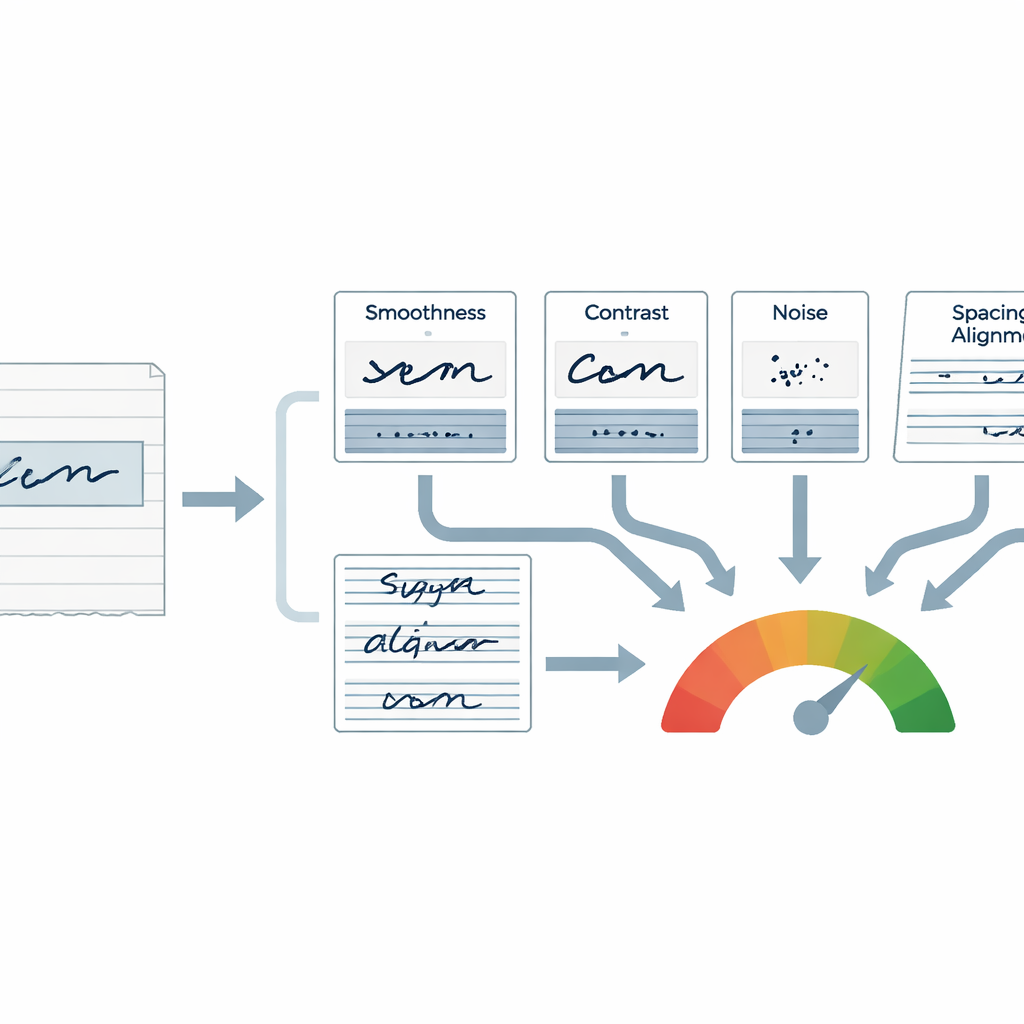

To make the scores meaningful, the team designed a "perceptual" score that mimics how humans judge a word at a glance. Four ingredients drive this score: how smooth the strokes look, how strongly the ink stands out from the page, how much stray ink or scribble‑like noise is present, and how continuous and well‑formed the strokes appear. Each word’s patch is also sliced into six horizontal zones, from top to bottom, to capture whether letters sit properly on an invisible baseline, whether tall parts like ascenders are consistent, and whether writing is cramped or stretched. Extra checks look at fringe behavior along horizontal lines, spotting text that floats above or sinks below where it should be, as well as irregular gaps between words and lines.
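The zone idea and the four-ingredient score can be sketched in a few lines. This is a minimal example under stated assumptions: the article does not give the actual formula, so the equal weights, the 0-to-1 scaling of each ingredient, and the function names `zone_shares` and `perceptual_score` are hypothetical.

```python
from typing import List, Sequence


def zone_shares(ink: List[List[int]], zones: int = 6) -> List[float]:
    """Split a binary word patch into horizontal bands and return each band's share of the ink."""
    height = len(ink)
    total = sum(sum(row) for row in ink)
    shares = []
    for z in range(zones):
        top = z * height // zones
        bottom = (z + 1) * height // zones
        band_ink = sum(sum(row) for row in ink[top:bottom])
        shares.append(band_ink / total if total else 0.0)
    return shares


def perceptual_score(
    smoothness: float,
    contrast: float,
    noise: float,
    continuity: float,
    weights: Sequence[float] = (0.25, 0.25, 0.25, 0.25),  # assumed, not from the paper
) -> float:
    """Combine four cues, each scaled to [0, 1]; noise counts against the score."""
    w_smooth, w_contrast, w_noise, w_cont = weights
    return (
        w_smooth * smoothness
        + w_contrast * contrast
        + w_noise * (1.0 - noise)
        + w_cont * continuity
    )


# A 6-row binary patch (1 = ink); most ink sits in the middle zones.
word = [[0, 0], [1, 0], [1, 1], [1, 1], [0, 1], [0, 0]]
shares = zone_shares(word)
```

A word whose ink concentrates in the lowest zones, for example, would hint at letters sinking below the baseline.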

Teaching the system with fewer marked papers

A key challenge is that expert scores are expensive: teachers must label many pages before a model can learn. To tackle this, the authors use an "active learning" strategy. Initially, 10–12 experienced teachers rate a modest set of pages on a simple four‑level scale from poor to excellent. Regression models, chiefly tree‑based methods such as Random Forest and XGBoost, are then trained to predict a numerical handwriting quality score from the measured features. Instead of asking for more labels at random, the system looks for the samples it is most uncertain about or predicts poorly. Those pages are then shown in an interactive dashboard where experts can quickly confirm or adjust the suggested scores. This loop concentrates human effort where it teaches the model the most, boosting accuracy without requiring every page in a large collection to be graded by hand.
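The selection step of such a loop can be sketched as follows. To keep the example dependency-free, a small committee of nearest-neighbor regressors trained on bootstrap resamples stands in for the Random Forest and XGBoost models named in the study; the disagreement measure, the 1-to-4 score encoding, and all function names are assumptions for illustration only.

```python
import random
from statistics import mean, pstdev
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[float], float]  # (feature vector, teacher score)


def knn_predict(train: List[Sample], x: Sequence[float], k: int = 3) -> float:
    """Average the scores of the k labeled samples closest to x (squared Euclidean distance)."""
    nearest = sorted(train, key=lambda s: sum((a - b) ** 2 for a, b in zip(s[0], x)))[:k]
    return mean([score for _, score in nearest])


def committee_uncertainty(
    labeled: List[Sample], pool: List[Sequence[float]], members: int = 10, seed: int = 0
) -> List[float]:
    """Spread of predictions across models trained on bootstrap resamples of the labeled set."""
    rng = random.Random(seed)
    resamples = [[rng.choice(labeled) for _ in labeled] for _ in range(members)]
    return [pstdev([knn_predict(r, x) for r in resamples]) for x in pool]


def select_for_review(
    labeled: List[Sample], pool: List[Sequence[float]], n: int = 2, members: int = 10, seed: int = 0
) -> List[int]:
    """Indices of the n unlabeled samples the committee disagrees on most."""
    spread = committee_uncertainty(labeled, pool, members, seed)
    return sorted(range(len(pool)), key=lambda i: spread[i], reverse=True)[:n]


# Teacher-rated samples (scores 1 = poor ... 4 = excellent) and an unlabeled pool.
labeled = [((0.1, 0.2), 1.0), ((0.4, 0.4), 2.0), ((0.6, 0.7), 3.0), ((0.9, 0.9), 4.0)]
pool = [(0.2, 0.2), (0.5, 0.5), (0.8, 0.9)]
to_review = select_for_review(labeled, pool, n=2)
```

In a full loop, the pages at `to_review` would go to the experts, their confirmed scores would join `labeled`, and the committee would be retrained before the next round.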

What the numbers reveal about writing and fatigue

Using two large datasets (unruled pages that test a writer’s own sense of alignment, and ruled pages written in morning and afternoon sessions), the system uncovers patterns that match everyday classroom experience. Most pages fall into the good or excellent categories, but many still show dense regions, spacing problems, or skewed lines. On ruled paper, scores tend to dip slightly in the afternoon, and features linked to loss of focus and uneven spacing become more common, hinting at fatigue or reduced concentration. The models trained on these features track teacher scores very closely, with correlation values above 0.9. Their errors are small enough to reliably separate clearly written work from struggling handwriting, even for writers the system has never seen before.
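For readers curious how agreement between a model and teachers is usually quantified, the sketch below computes a Pearson correlation and a mean absolute error. The article does not say which correlation or error measure the authors report, so these two are assumed as common choices, and the sample scores are invented for illustration rather than taken from the study.

```python
from math import sqrt
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation: +1 means the two score lists rise and fall together perfectly."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def mean_absolute_error(x: Sequence[float], y: Sequence[float]) -> float:
    """Average size of the gap between predicted and teacher scores."""
    return sum(abs(a - b) for a, b in zip(x, y)) / len(x)


# Invented example: teacher scores on a 1-4 scale and model predictions.
teacher = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.0, 2.5, 4.0]
r = pearson_r(teacher, predicted)
mae = mean_absolute_error(teacher, predicted)
```

A high correlation alone does not guarantee small errors, since predictions can track teacher scores while being consistently offset, which is why the two numbers are typically reported together.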

From raw scores to helpful feedback

In simple terms, the researchers have built a camera‑based assistant that can "read" the visual quality of handwriting, almost as consistently as a panel of teachers, while needing far fewer expert ratings than traditional systems. By combining human judgment, carefully chosen visual features, and an active learning loop that focuses on the hardest cases, their framework turns handwritten pages into interpretable scores on neatness, spacing, and alignment. With further development, such tools could power classroom apps that flag students who need extra support, monitor fatigue or stress during exams, or support clinicians and forensic analysts who must make decisions based on how, not just what, people write.

Citation: Koushik, K.S., Nair, B.J.B., Rani, N.S. et al. HQA2LFS-handwriting quality assessment using an active learning framework in smartphones. Sci Rep 16, 8186 (2026). https://doi.org/10.1038/s41598-026-38330-z

Keywords: handwriting quality assessment, smartphone imaging, machine learning, active learning, educational technology