Clear Sky Science

Anomaly-based intrusion detection on benchmark datasets for network security: a comprehensive evaluation

Why smarter defenses matter for everyone online

Every email you send, video you stream, or bill you pay online travels through networks that are constantly probed by attackers. Security tools called intrusion detection systems act as digital alarm systems, scanning this traffic for signs of trouble. But as attacks grow more varied and sophisticated, older rule-based tools are struggling to keep up. This study explores how modern deep learning methods can power more accurate and adaptable alarms that spot both familiar and never-before-seen threats while keeping false alerts low.

From fixed rules to learning from experience

Traditional intrusion detection tools work much like antivirus software: they look for known signatures—specific patterns that match cataloged attacks. This approach is fast and reliable for familiar threats, but it fails when attackers change tactics or use so-called zero-day exploits. A newer strategy, anomaly detection, instead learns what normal network behavior looks like and flags unusual activity. That makes it better at catching novel attacks, but it risks raising too many false alarms. The authors focus on deep learning, a branch of artificial intelligence in which layered networks of simple processing units automatically learn patterns from data, aiming to combine the adaptability of anomaly detection with the reliability of signature systems.
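The difference between the two styles can be made concrete with a toy Python sketch. It is not taken from the study, which uses deep learning rather than a simple statistical threshold. The signature list, the field names (`protocol`, `port`, `pattern`, `bytes`), the traffic numbers, and the three-standard-deviation threshold are all hypothetical choices made for illustration.

```python
from statistics import mean, stdev

# Hypothetical catalogue of known attack patterns (signature approach).
KNOWN_SIGNATURES = {("tcp", 23, "root_login_burst"), ("icmp", 0, "smurf")}


def signature_alarm(record):
    """Flag a connection only if it exactly matches a cataloged attack."""
    key = (record["protocol"], record["port"], record["pattern"])
    return key in KNOWN_SIGNATURES


def fit_normal_profile(normal_byte_counts):
    """Learn what 'normal' looks like: mean and spread of one traffic feature."""
    return mean(normal_byte_counts), stdev(normal_byte_counts)


def anomaly_alarm(record, profile, threshold=3.0):
    """Flag a connection whose byte count is far from the normal profile."""
    mu, sigma = profile
    z_score = abs(record["bytes"] - mu) / sigma
    return z_score > threshold


# Toy "normal" traffic used to build the profile (made-up numbers).
profile = fit_normal_profile([500, 520, 480, 510, 490, 505, 495])

known_attack = {"protocol": "icmp", "port": 0, "pattern": "smurf", "bytes": 505}
novel_attack = {"protocol": "tcp", "port": 80, "pattern": "new_exploit", "bytes": 50_000}
big_backup = {"protocol": "tcp", "port": 22, "pattern": "scp_copy", "bytes": 9_000}

for name, rec in [("known", known_attack), ("novel", novel_attack), ("backup", big_backup)]:
    print(name, signature_alarm(rec), anomaly_alarm(rec, profile))
```

In this toy run, the cataloged attack is caught only by the signature check, the brand-new attack slips past the signatures but trips the anomaly alarm, and a legitimate but unusually large file transfer also trips the anomaly alarm: the false-alarm risk described above.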

Putting two learning engines to the test

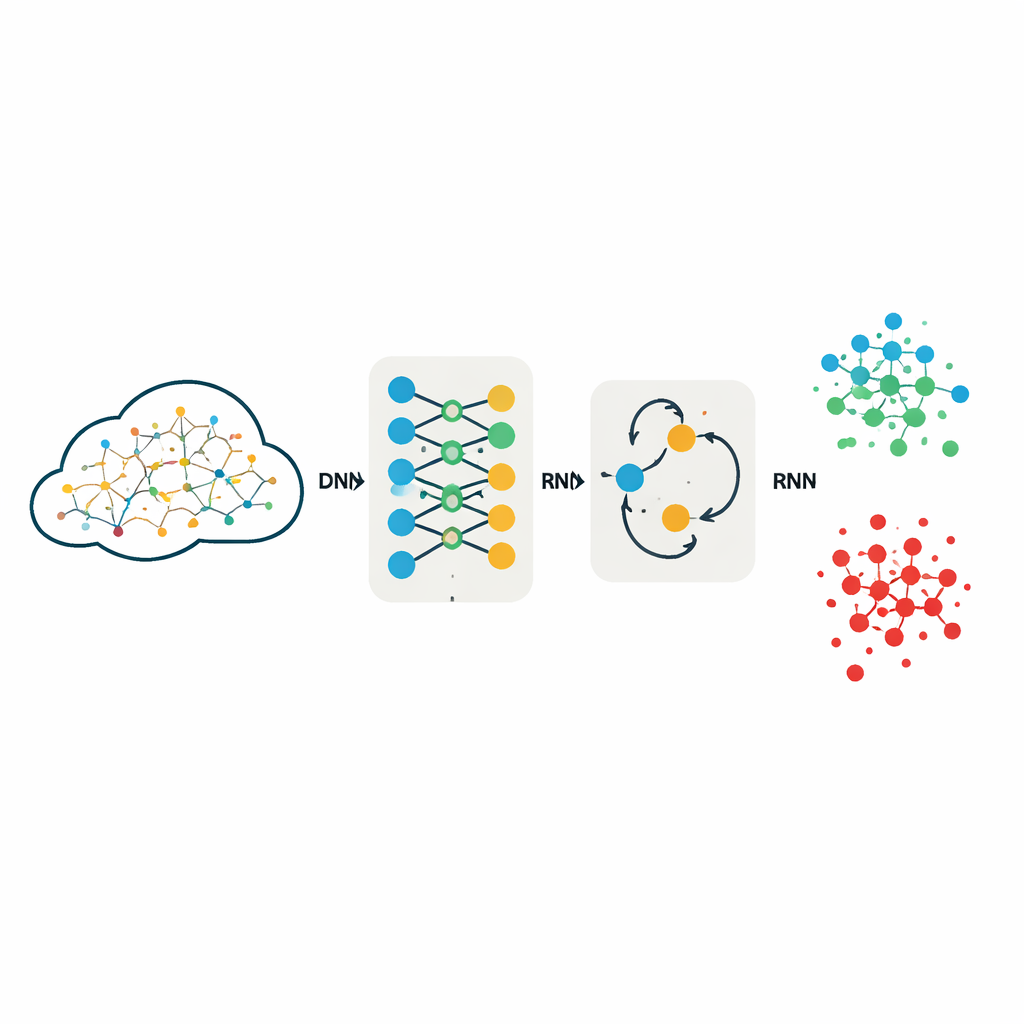

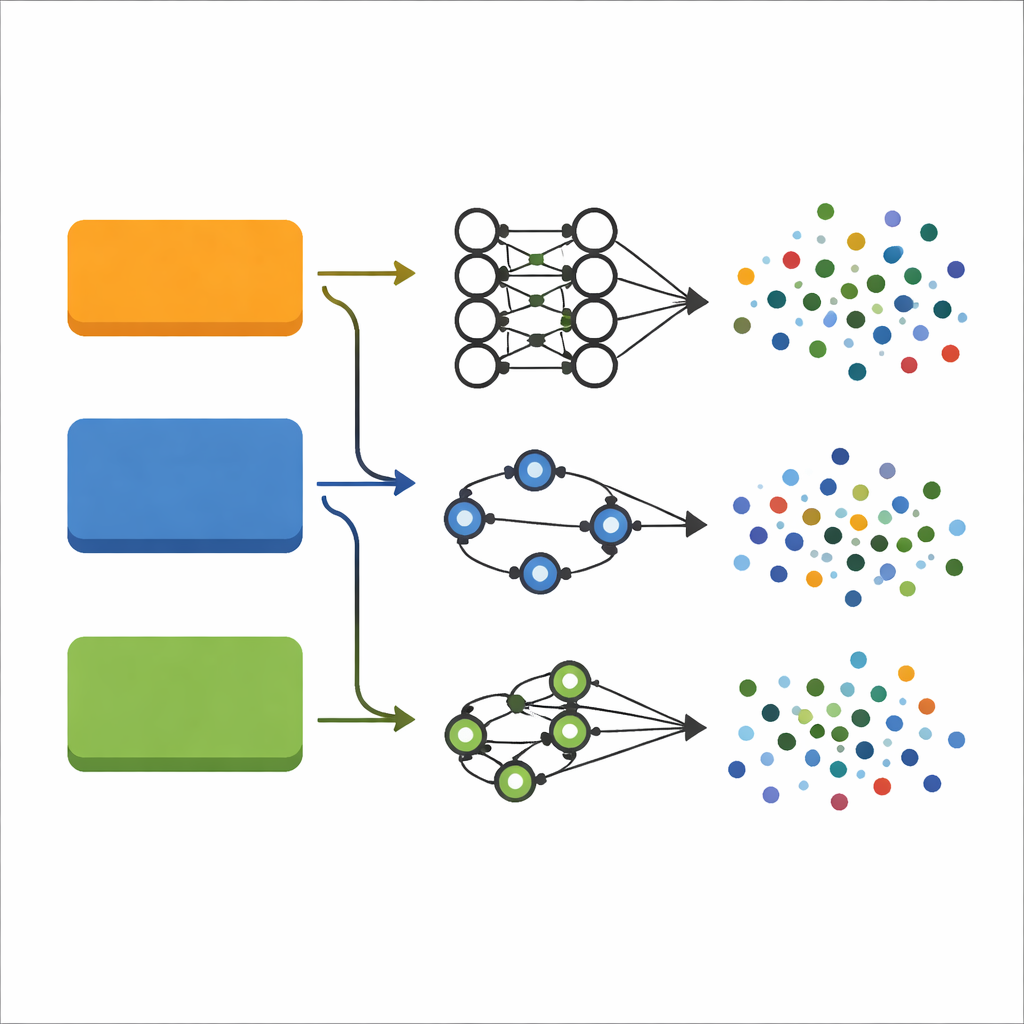

The researchers evaluate two popular deep learning models: a deep neural network (DNN), which processes each network connection as a rich numerical record, and a recurrent neural network (RNN), which adds an internal "memory" designed to capture relationships across ordered data. Instead of handcrafting features, they feed these models full sets of measurements describing each network connection, after converting text fields to numbers and scaling all values. Both models are trained and tested in exactly the same way on three widely used benchmark collections of network traffic: KDDCup99, NSL-KDD, and UNSW-NB15, which together cover a broad range of attack types, from flooding a server with traffic (DoS) to stealthy attempts to gain extra user privileges.
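The preprocessing step can be sketched in a few lines of plain Python. The summary says only that text fields are converted to numbers and all values are scaled. The choice of one-hot encoding and min-max scaling here is an assumption, as are the field names (`duration`, `protocol_type`, `src_bytes`, modeled on KDD-style records) and the tiny sample; this is not the authors' published pipeline.

```python
def encode_row(row, categories):
    """Turn each text field into 0/1 indicator columns (one-hot encoding)."""
    out = {k: float(v) for k, v in row.items() if k not in categories}
    for column, values in categories.items():
        for value in values:
            # A category never seen in training simply gets all zeros.
            out[f"{column}={value}"] = 1.0 if row[column] == value else 0.0
    return out


def fit_preprocessor(rows, text_columns):
    """Learn category lists and per-column min/max from the training rows only."""
    categories = {c: sorted({r[c] for r in rows}) for c in text_columns}
    encoded = [encode_row(r, categories) for r in rows]
    columns = list(encoded[0])
    lows = {c: min(r[c] for r in encoded) for c in columns}
    highs = {c: max(r[c] for r in encoded) for c in columns}
    return categories, columns, lows, highs


def transform(rows, prep):
    """One-hot encode, then min-max scale every column to [0, 1]."""
    categories, columns, lows, highs = prep
    vectors = []
    for row in rows:
        enc = encode_row(row, categories)
        vec = []
        for c in columns:
            span = highs[c] - lows[c]
            # Constant columns become 0; values outside the training range are clipped.
            scaled = (enc[c] - lows[c]) / span if span else 0.0
            vec.append(min(max(scaled, 0.0), 1.0))
        vectors.append(vec)
    return vectors


# Hypothetical mini training set with one text field.
train = [
    {"duration": 0, "protocol_type": "tcp", "src_bytes": 181},
    {"duration": 2, "protocol_type": "udp", "src_bytes": 239},
    {"duration": 4, "protocol_type": "icmp", "src_bytes": 1032},
]
prep = fit_preprocessor(train, ["protocol_type"])
X = transform(train, prep)
print(prep[1])  # column order of the numeric vectors
print(X)
```

Fitting the category lists and min/max values on training data only, then reusing them on test data, keeps information from the test set from leaking into the model.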

How the study was carefully set up

To make the comparison fair and repeatable, the team keeps the model designs intentionally simple and transparent. The DNN uses three fully connected layers to transform the 40–42 input features into predictions over either five or ten traffic categories, such as "normal" or different attack families. The RNN uses a lightweight recurrent layer followed by a final decision layer, treating each record as a very short sequence so it can still model interactions among features. Both models use the same activation function and a widely adopted optimization strategy known for stable learning. Crucially, the authors do not discard features to shrink the data; earlier work showed that aggressive feature reduction can throw away subtle clues that are vital for distinguishing rare but dangerous attacks.
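A stripped-down forward pass for both designs is sketched below in plain Python. Everything specific here is hypothetical: the hidden-layer widths, ReLU as the shared activation, the random initialization, and especially the way the RNN slices a 42-feature record into seven chunks of six features, which is only one possible reading of "a very short sequence". Training (loss and optimizer) is omitted, so the outputs are untrained class probabilities.

```python
import math
import random

N_FEATURES = 42    # upper end of the 40-42 input features mentioned above
N_CLASSES = 10     # UNSW-NB15 setting; 5 for KDDCup99 and NSL-KDD
HIDDEN = (64, 32)  # hypothetical hidden-layer widths, not from the study


def relu(v):
    return max(0.0, v)


def softmax(z):
    """Turn raw scores into class probabilities (max subtracted for stability)."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def init_layer(n_in, n_out, rng):
    """Random weights (He-style scale) and zero biases for one dense layer."""
    scale = math.sqrt(2.0 / n_in)
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(x, layer, activation=None):
    """Fully connected layer: out[j] = activation(sum_i W[j][i] * x[i] + b[j])."""
    weights, bias = layer
    out = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]
    return [activation(v) for v in out] if activation else out


def build_dnn(rng):
    """Three dense layers: 42 -> 64 -> 32 -> 10."""
    sizes = (N_FEATURES, *HIDDEN, N_CLASSES)
    return [init_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]


def dnn_predict(x, layers):
    h = x
    for layer in layers[:-1]:
        h = dense(h, layer, relu)          # hidden layers
    return softmax(dense(h, layers[-1]))   # decision layer


def build_rnn(rng, chunk=6, hidden=32):
    return {
        "chunk": chunk,
        "input": init_layer(chunk, hidden, rng),          # W_x and bias
        "recurrent": init_layer(hidden, hidden, rng)[0],  # W_h
        "output": init_layer(hidden, N_CLASSES, rng),     # decision layer
    }


def rnn_predict(x, rnn):
    """Read one record as a short sequence of equal-sized feature chunks."""
    chunk = rnn["chunk"]
    if len(x) % chunk:
        raise ValueError("feature count must be a multiple of the chunk size")
    w_x, b = rnn["input"]
    w_h = rnn["recurrent"]
    h = [0.0] * len(b)
    for start in range(0, len(x), chunk):
        step = x[start:start + chunk]
        # h_t = relu(W_x x_t + W_h h_{t-1} + b): the memory carries earlier chunks forward
        h = [relu(sum(w * v for w, v in zip(w_x[j], step))
                  + sum(u * hk for u, hk in zip(w_h[j], h))
                  + b[j])
             for j in range(len(h))]
    return softmax(dense(h, rnn["output"]))


rng = random.Random(0)
record = [rng.random() for _ in range(N_FEATURES)]  # stand-in for one scaled record
print(dnn_predict(record, build_dnn(rng)))
print(rnn_predict(record, build_rnn(rng)))
```

Setting `N_CLASSES` to 5 gives the KDDCup99 and NSL-KDD setting. In a real system, the weights would be learned by an optimizer from labeled traffic rather than drawn at random.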

What the results say about accuracy and reliability

On the older KDDCup99 and NSL-KDD datasets, both models deliver strikingly high performance: accuracies exceed 99% with false alarm rates under 1%. In other words, almost all malicious connections are correctly caught, while very few legitimate connections are mistakenly flagged. On UNSW-NB15, a more modern and challenging dataset with ten distinct classes, performance drops somewhat, as expected, but remains strong: the DNN reaches about 96% accuracy, while the RNN trails at roughly 82%. Detailed per-class scores show that the DNN not only classifies common attacks well but also handles rare categories, such as worms (in UNSW-NB15) and user-to-root attacks (in KDDCup99 and NSL-KDD), with high F1-scores, a measure that balances catching attacks (recall) against avoiding false alarms (precision). In additional experiments, a more complex transformer-based model actually performs worse, suggesting that extra architectural sophistication does not automatically yield better security.
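The headline measures can be computed from a model's predictions roughly as follows. The labels are made-up toy data, not results from the study, and "false alarm rate" is taken here to mean the share of genuinely normal connections flagged as an attack, which is the usual definition but an assumption about this paper. The F1-score is computed per class as 2PR / (P + R), where P is precision and R is recall.

```python
def accuracy(y_true, y_pred):
    """Share of all connections whose predicted class is correct."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def false_alarm_rate(y_true, y_pred, normal="normal"):
    """Share of truly normal connections wrongly flagged as some attack."""
    preds_on_normal = [p for t, p in zip(y_true, y_pred) if t == normal]
    return sum(p != normal for p in preds_on_normal) / len(preds_on_normal)


def f1_score(y_true, y_pred, cls):
    """F1 for one class: harmonic mean of precision and recall."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == cls and p == cls for t, p in pairs)  # correctly labeled as cls
    fp = sum(t != cls and p == cls for t, p in pairs)  # wrongly labeled as cls
    fn = sum(t == cls and p != cls for t, p in pairs)  # cls instances missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Made-up labels for eight connections (not data from the study).
y_true = ["normal", "normal", "normal", "normal", "dos", "dos", "worms", "worms"]
y_pred = ["normal", "normal", "normal", "dos", "dos", "dos", "worms", "normal"]

print(accuracy(y_true, y_pred))          # 0.75
print(false_alarm_rate(y_true, y_pred))  # 0.25
print(f1_score(y_true, y_pred, "dos"))   # precision 2/3, recall 1
print(f1_score(y_true, y_pred, "worms")) # precision 1, recall 1/2
```

The worms example shows why per-class F1 matters for rare attacks: the toy model is perfectly precise on worms yet misses half of them, and an F1 of about 0.67 exposes that gap even though overall accuracy looks respectable.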

What this means for safer networks

The study concludes that well-designed but relatively simple deep learning models can form the backbone of practical intrusion detection systems. By training directly on full-featured benchmark datasets and carefully tuning their learning process, the DNN in particular achieves state-of-the-art accuracy with low false positives across a wide variety of attack types. For everyday users, this translates into security tools that are better at spotting both routine and unusual threats without constantly crying wolf. The authors suggest that future work can build on this foundation by refining recurrent models, exploring selective feature reduction for speed, and combining deep feature extractors with traditional classifiers, moving us closer to intrusion detection that is both powerful and efficient in real-world networks.

Citation: Kumar, L.K.S., Nethi, S.R., Uyyala, R. et al. Anomaly-based intrusion detection on benchmark datasets for network security: a comprehensive evaluation. Sci Rep 16, 8507 (2026). https://doi.org/10.1038/s41598-026-38317-w

Keywords: intrusion detection, network security, deep learning, anomaly detection, cyberattacks