Clear Sky Science

An improved YOLOv11 network for marine debris detection in underwater environment

Why spotting underwater trash matters

Far below the ocean’s surface, plastic bags, bottles, fishing lines and other debris quietly build up. This litter harms marine life, clogs sensitive habitats and can even interfere with underwater robots that scientists use to study and protect the sea. The paper summarized here describes a smarter computer-vision system that helps cameras and robots automatically find and label underwater trash in real time, even in murky, cluttered water.

The challenge of seeing clearly under the sea

Unlike clear daylight photos on land, underwater images are often dark, hazy and tinted blue or green. Light fades quickly with depth, sand and plankton cloud the water, and trash items are often small, partly hidden or look similar to rocks and plants. Traditional image-processing methods struggle in these conditions, and even modern deep-learning detectors can miss tiny objects or mistake background texture for debris. Yet accurate and fast detection is crucial for mapping pollution, steering cleanup robots and tracking how marine trash changes over time.

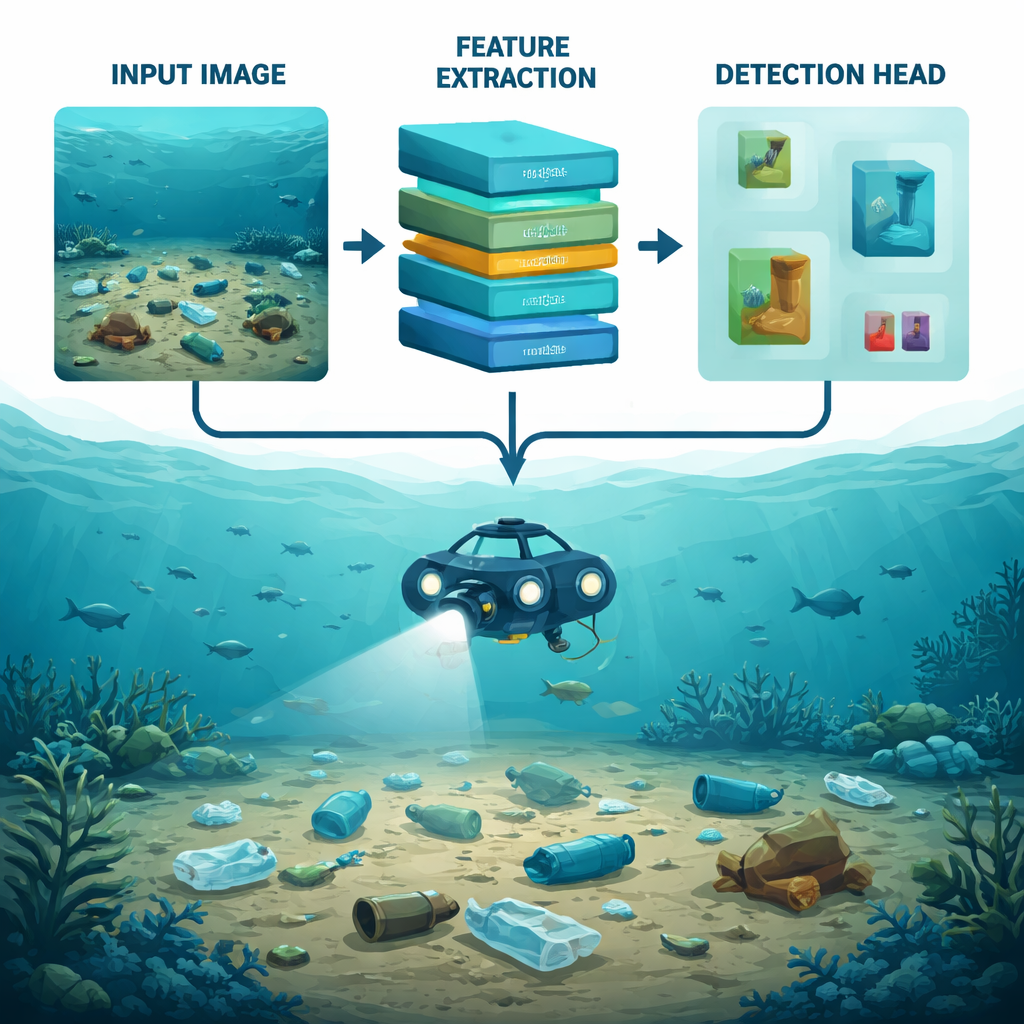

Building on a fast vision workhorse

The authors build on YOLOv11, a recent member of the “You Only Look Once” family of object detectors. YOLO models are popular because they scan an image once and predict the locations and types of many objects in real time. However, the standard YOLOv11 design was created for more typical scenes, like streets or indoor photos, not the visually harsh underwater world. To close this gap, the researchers redesign two key parts of the network: how it first extracts visual patterns from an image, and how it later decides which parts are important trash objects and which are just noisy background.

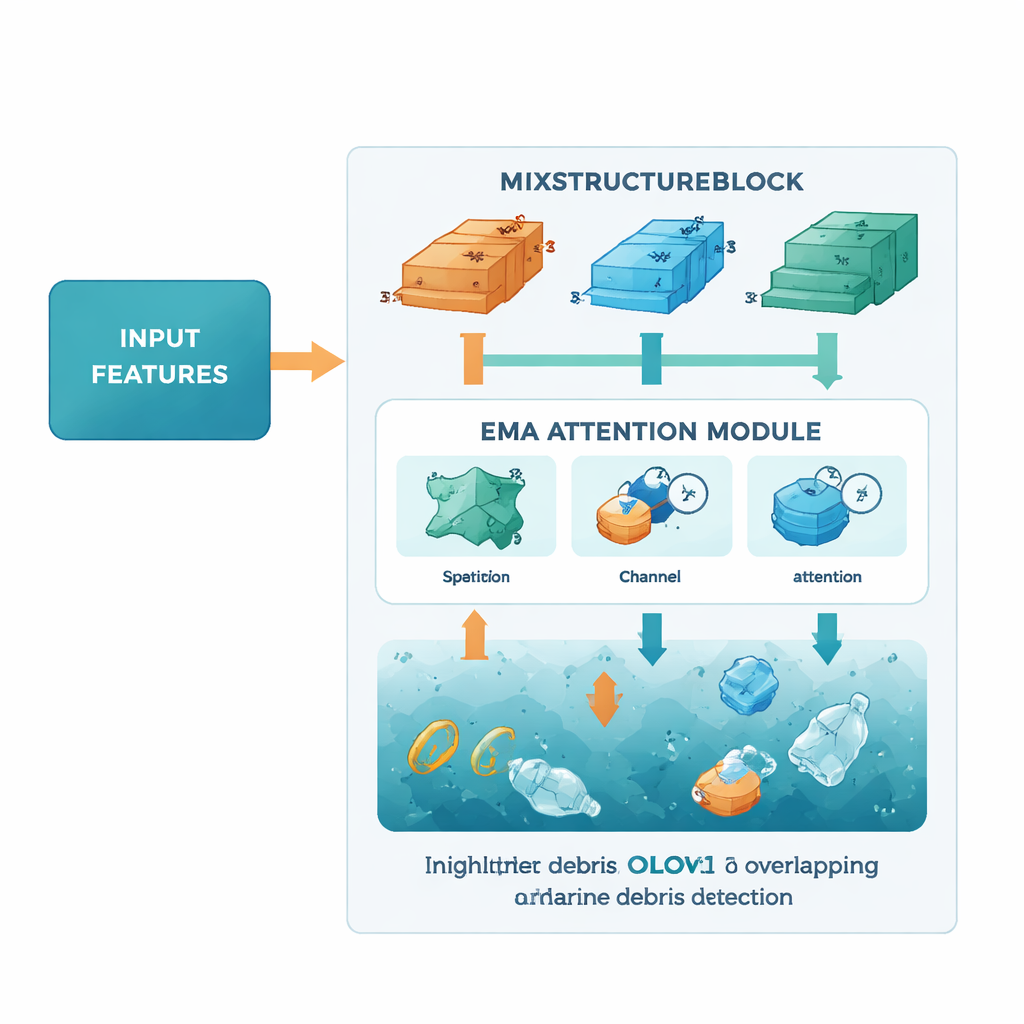

A new way to pick out details at many sizes

The first improvement is a module called the MixStructureBlock, which replaces a standard building block in the YOLOv11 backbone. Instead of using one fixed pattern of filters, MixStructureBlock runs several branches in parallel that look at the scene with different “window sizes” and spacing (in convolution terms, different kernel sizes and dilation rates). This helps the network notice both fine details, like the edge of a bottle cap, and larger shapes, like a drifting bag. On top of that, the block includes simple attention mechanisms that learn to emphasize informative colors and locations while downplaying unhelpful background patches. The result is a richer, cleaner set of features that makes small, faint pieces of debris easier to spot.
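To make the idea of parallel branches with different window sizes and spacing concrete, here is a minimal one-dimensional sketch in plain Python. It is not the authors' MixStructureBlock: the three-tap averaging filters, the dilation values 1, 2 and 3, and the function names are all assumptions chosen for illustration, and a real block would use learned two-dimensional filters.

```python
def effective_kernel(kernel_size: int, dilation: int) -> int:
    """Width in pixels spanned by a kernel whose taps are `dilation` apart."""
    return kernel_size + (kernel_size - 1) * (dilation - 1)


def dilated_conv1d(signal, weights, dilation):
    """Zero-padded 1-D filter that keeps the output the same length as the input."""
    half = (len(weights) // 2) * dilation
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for j, w in enumerate(weights):
            idx = i - half + j * dilation  # input position read by tap j
            if 0 <= idx < len(signal):
                acc += w * signal[idx]
        out.append(acc)
    return out


def multi_branch(signal, branches):
    """Run every (weights, dilation) branch on the same input and average them."""
    outputs = [dilated_conv1d(signal, w, d) for w, d in branches]
    return [sum(vals) / len(outputs) for vals in zip(*outputs)]


# Hypothetical settings: the same 3-tap averaging filter at three spacings.
BRANCHES = [([1 / 3] * 3, 1), ([1 / 3] * 3, 2), ([1 / 3] * 3, 3)]

for weights, dilation in BRANCHES:
    # Spans of 3, 5 and 7 pixels from the same three taps.
    print(dilation, effective_kernel(len(weights), dilation))

row = [0, 0, 0, 0, 1, 0, 0, 0, 0]  # one bright pixel in a dark row
print(multi_branch(row, BRANCHES))
```

The narrow branch responds only next to the bright pixel, while the wider branches pick it up from farther away, so the averaged output carries both fine and coarse context without adding more taps per branch.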

Teaching the network where to focus

The second upgrade is an Efficient Multi-scale Attention (EMA) module, added later in the network where detections are made. EMA looks at the feature maps both across space and across channels, effectively asking two questions at once: “Where in the image is something important happening?” and “Which types of patterns are most relevant right now?” By pooling information at multiple scales with computationally cheap operations, EMA sharpens the network’s focus on likely trash regions, such as overlapping objects or dim items far from the camera, while keeping the overall model compact and fast enough for real-time use on embedded hardware.
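The two questions, "where?" and "which patterns?", can be illustrated with a deliberately simplified gating sketch. This is not the EMA module itself, which relies on learned layers and multi-scale pooling. Here the weights come from fixed averages passed through a sigmoid, and every function name is hypothetical.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def channel_weights(fmap):
    """One weight per channel from its global average ("which patterns?")."""
    return [
        sigmoid(sum(sum(row) for row in ch) / (len(ch) * len(ch[0])))
        for ch in fmap
    ]


def spatial_weights(fmap):
    """One weight per pixel from the mean across channels ("where?")."""
    c, h, w = len(fmap), len(fmap[0]), len(fmap[0][0])
    return [
        [sigmoid(sum(fmap[k][i][j] for k in range(c)) / c) for j in range(w)]
        for i in range(h)
    ]


def apply_attention(fmap):
    """Scale every value by its channel weight and its pixel weight."""
    cw = channel_weights(fmap)
    sw = spatial_weights(fmap)
    return [
        [[fmap[k][i][j] * cw[k] * sw[i][j] for j in range(len(sw[0]))]
         for i in range(len(sw))]
        for k in range(len(fmap))
    ]


# Toy feature map laid out as channels x height x width.
features = [
    [[4.0, 0.0], [0.0, 0.0]],  # channel 0: strong response at the top-left
    [[0.0, 0.0], [0.0, 0.0]],  # channel 1: silent
]
print(channel_weights(features))
print(spatial_weights(features))
print(apply_attention(features))
```

In this toy input the active channel and the top-left pixel both receive larger weights than their neighbors, which is the kind of selective emphasis an attention module is meant to learn from data.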

Putting the system to the test

To judge their design, the team trained and evaluated the model on TrashCan, a public collection of underwater images drawn from deep-sea footage archived by Japan’s marine research agency, JAMSTEC. One version of the dataset labels debris by specific object type (like cup, bag or metal pipe), while another groups items by material (like plastic or fabric). On both versions, the improved network outperforms several strong baselines, including the original YOLOv11, earlier marine-debris systems and other underwater-focused YOLO variants. It not only detects more debris correctly, especially small and crowded items, but also does so with a model size of only about 5 megabytes, which is well suited to power-limited underwater vehicles.
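For a rough sense of what a 5-megabyte model means, the short calculation below converts file size into an approximate number of learned weights. The summary does not say how the weights are stored, so the precisions listed and the use of decimal megabytes are assumptions, and file overhead is ignored.

```python
BYTES_PER_MB = 1_000_000  # decimal megabytes (assumption)

# Bytes needed to store one weight at common numeric precisions.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def approx_param_count(size_mb: float, dtype: str) -> int:
    """Rough weight count for a model file of `size_mb` stored as `dtype`."""
    return round(size_mb * BYTES_PER_MB / BYTES_PER_PARAM[dtype])


for dtype in BYTES_PER_PARAM:
    print(dtype, approx_param_count(5, dtype))
```

Under these assumptions a 5-megabyte file holds on the order of one to five million weights, depending on the storage precision.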

What this means for cleaner oceans

In plain terms, the study shows that carefully rethinking how an AI “looks” at underwater images can make a real difference in finding trash beneath the waves. By combining multi-scale pattern extraction with attention to the most informative regions, the proposed system spots more debris while staying efficient enough for real-time use. Deployed on camera systems and underwater robots, such technology could help scientists and environmental agencies map pollution hot spots, guide cleanup efforts and monitor whether policies to reduce marine litter are working, bringing us a step closer to healthier oceans.

Citation: Yuanwei, J., Yijiang, D., Xuemei, W. et al. An improved YOLOv11 network for marine debris detection in underwater environment. Sci Rep 16, 7074 (2026). https://doi.org/10.1038/s41598-026-38305-0

Keywords: marine debris detection, underwater robotics, object detection, deep learning, ocean pollution