Clear Sky Science

NeuroAction: a neuroevolutionary approach to reinforcement learning for autonomous vehicles

Why smarter self-driving styles matter

Most of us imagine self-driving cars as calm, perfectly rational drivers. But today’s systems tend to chase a single blend of goals—like not crashing while getting you there quickly—and that blend is baked in by engineers. NeuroAction, the approach described in this paper, aims to give autonomous cars something closer to human flexibility: the ability to choose from many safe driving styles, from cautious "baby-on-board" behavior to brisk highway cruising, without retraining the car each time.

From one-size-fits-all to many safe options

Current deep reinforcement learning systems for driving learn by trial and error: they observe the road, take actions like steering and acceleration, and get a single numerical reward that mixes together different aims such as speed, safety, and lane position. To adjust the system, engineers must design that single reward very carefully. If they weight speed too highly, the car may drive aggressively; if they overemphasize safety, it may crawl along. Changing preferences later usually means going back and retraining a large neural network from scratch, which is slow, memory-hungry, and sensitive to technical settings.
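To make the weighting problem concrete, here is a minimal sketch of a single blended reward. The function name, the score values, and the weights are all hypothetical and chosen only for illustration; they are not taken from the paper or from any particular simulator.

```python
def scalar_reward(speed_score, safety_score, lane_score, weights):
    """Collapse three per-step scores (each assumed to lie in [0, 1]) into one number."""
    w_speed, w_safety, w_lane = weights
    return w_speed * speed_score + w_safety * safety_score + w_lane * lane_score


# Two hypothetical driving moments (illustrative values only).
fast_but_risky = dict(speed_score=0.9, safety_score=0.4, lane_score=0.5)
slow_but_safe = dict(speed_score=0.3, safety_score=1.0, lane_score=0.5)

# Two hypothetical weightings an engineer might try: (speed, safety, lane).
speed_heavy = (0.7, 0.2, 0.1)
safety_heavy = (0.2, 0.7, 0.1)

for label, weights in [("speed-heavy", speed_heavy), ("safety-heavy", safety_heavy)]:
    risky = scalar_reward(**fast_but_risky, weights=weights)
    safe = scalar_reward(**slow_but_safe, weights=weights)
    preferred = "fast_but_risky" if risky > safe else "slow_but_safe"
    print(f"{label}: risky={risky:.2f}, safe={safe:.2f}, preferred={preferred}")
```

The same two moments are ranked in opposite order depending on the weights, which is why an agent trained on one blend typically has to be retrained when the preferred blend changes.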

Breaking driving into simple goals

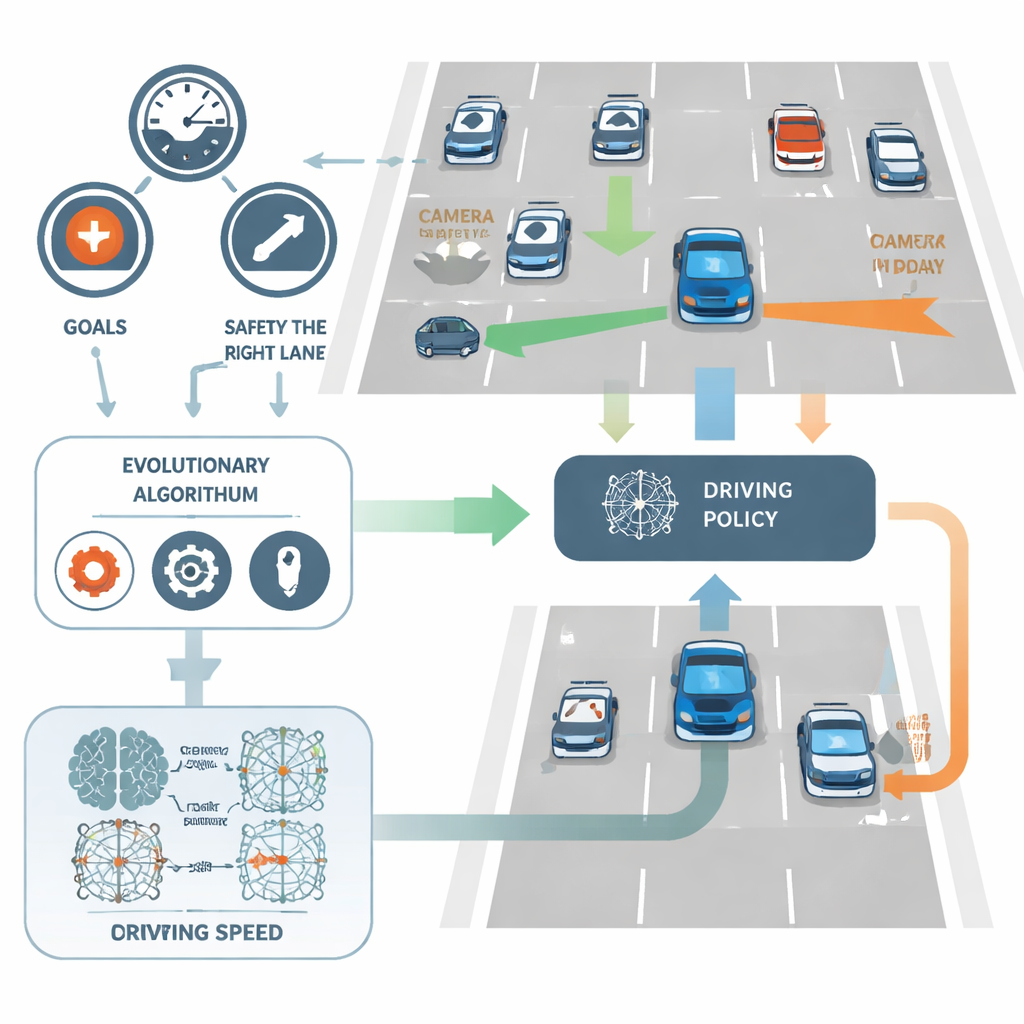

NeuroAction tackles this by splitting the driving task into several clear objectives instead of one. In the study, the car’s virtual driver is judged independently on three things: how fast it travels within a safe range, how faithfully it stays in the rightmost (typically safer) lane, and how well it avoids collisions. Rather than combining these into a single score, the method treats them as separate yardsticks. Behind the scenes, each possible driving policy—the neural network that turns sensor input into steering and speed decisions—is evaluated along all three axes at once.

Let evolution search for better drivers

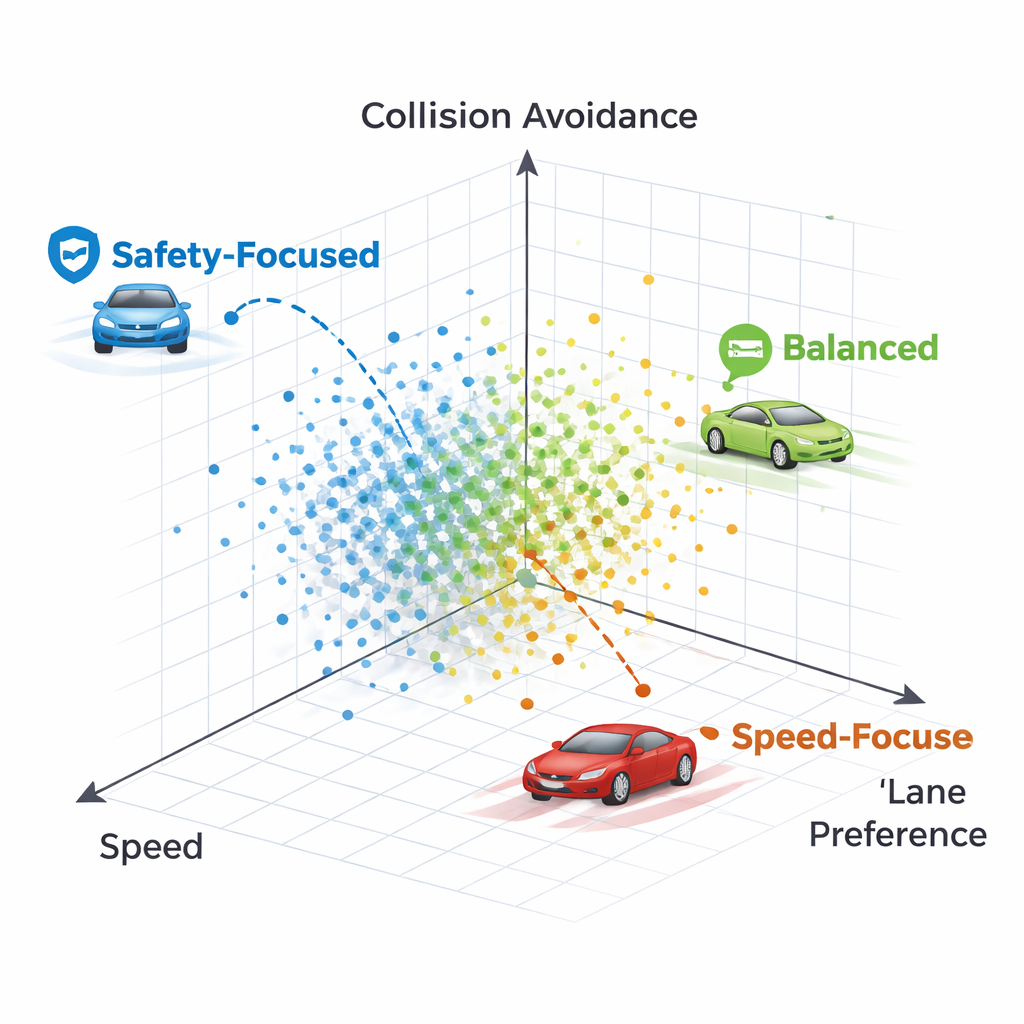

Instead of fine-tuning network weights with the standard backpropagation technique, NeuroAction uses ideas borrowed from biological evolution. A population of different driving policies is created and tested in a simulated highway environment. Policies that strike good trade-offs between speed, lane discipline, and safety are kept and recombined, while poorer ones are discarded. Over many generations, this evolutionary process discovers a whole frontier of strong solutions—known as a Pareto front—where no policy can be improved on one goal without sacrificing at least one of the others.
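The idea of a Pareto front can be sketched in a few lines. The example below assumes each policy has already been scored on three objectives where higher is better (speed, right-lane keeping, collision avoidance); the numbers are made up for illustration. It shows only the dominance test, not a full evolutionary algorithm such as NSGA-II, which additionally ranks, selects, and recombines policies.

```python
def dominates(a, b):
    """True if score vector a is at least as good as b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(scores):
    """Keep only the score vectors that no other vector dominates."""
    return [s for s in scores if not any(dominates(other, s) for other in scores)]


# Hypothetical (speed, right_lane, safety) scores for four candidate policies.
population_scores = [
    (0.9, 0.2, 0.80),   # fast, rarely in the right lane
    (0.4, 0.9, 0.90),   # slow, disciplined lane keeper
    (0.3, 0.8, 0.70),   # worse than the policy above on every objective
    (0.6, 0.6, 0.95),   # balanced
]

front = pareto_front(population_scores)
print(front)
```

Here the third policy is dropped because the second beats it on all three objectives, while the remaining three each win on at least one objective and so all stay on the front.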

Comparing evolutionary and gradient-based learning

The researchers applied NeuroAction to a widely used 2D highway simulator, using a standard neural-network-based driving agent. They then optimized the agent’s parameters with several established multi-objective evolutionary algorithms, comparing how well each could cover the range of desirable trade-offs. A key performance measure, the “hypervolume” of the discovered frontier, captures both how good and how diverse the solutions are. One algorithm, NSGA-II, achieved the best overall coverage, while a close relative, NSGA-III, produced particularly consistent results across repeated runs.
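As a rough illustration of what hypervolume measures, the sketch below computes the volume of objective space that a small front covers relative to a reference point, assuming all objectives are maximized. It uses plain inclusion-exclusion over boxes, which is exact but only practical for a handful of points; it is not the routine used in the paper, and the reference point and scores are hypothetical.

```python
from itertools import combinations
from math import prod


def hypervolume(points, ref):
    """Exact volume of the union of boxes spanning from ref up to each point (maximization)."""
    total = 0.0
    for k in range(1, len(points) + 1):
        sign = 1 if k % 2 == 1 else -1
        for subset in combinations(points, k):
            # The intersection of several boxes is the box up to their component-wise minimum.
            corner = [min(p[d] for p in subset) for d in range(len(ref))]
            total += sign * prod(max(c - r, 0.0) for c, r in zip(corner, ref))
    return total


ref_point = (0.0, 0.0, 0.0)                       # assumed worst-case score on every objective
narrow_front = [(1.0, 0.5, 1.0)]
wider_front = [(1.0, 0.5, 1.0), (0.5, 1.0, 1.0)]

print(hypervolume(narrow_front, ref_point))
print(hypervolume(wider_front, ref_point))
```

Adding a second, different trade-off enlarges the covered volume, whereas adding a policy that is dominated by an existing one leaves it unchanged. That is why a larger hypervolume signals a front that is both better and more varied.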

What different driving styles look like

By inspecting individual policies on the Pareto front, the authors show that each point corresponds to a recognizably different driving style. One policy clings to the right lane at almost any cost, sacrificing speed and eventually colliding with a very slow vehicle ahead: an overcautious strategy that values lane preference too highly. Another policy initially changes lanes but then returns to a clear right lane, maintaining higher speed while still avoiding crashes. Overall, the evolutionary methods produce a spectrum of strategies ranging from conservative, lane-keeping drivers to more assertive but still safe cruisers, all available simultaneously without retraining.
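Once a front of policies exists, choosing a style becomes a lookup rather than a training run. The sketch below assumes one simple way to do that, ranking the stored policies by a preference-weighted sum of their scores; the policy names, scores, and preference weights are hypothetical, and the paper may select policies differently.

```python
def pick_policy(front, preference):
    """Return the stored policy whose scores best match the given preference weights."""
    def weighted_score(entry):
        return sum(w * v for w, v in zip(preference, entry["scores"]))
    return max(front, key=weighted_score)


# Hypothetical front; scores are (speed, right_lane, safety), higher is better.
stored_front = [
    {"name": "cautious", "scores": (0.3, 0.9, 0.99)},
    {"name": "balanced", "scores": (0.6, 0.6, 0.95)},
    {"name": "brisk", "scores": (0.9, 0.3, 0.90)},
]

baby_on_board = (0.1, 0.3, 0.6)   # assumed preference: safety first
in_a_hurry = (0.7, 0.1, 0.2)      # assumed preference: speed first

print(pick_policy(stored_front, baby_on_board)["name"])
print(pick_policy(stored_front, in_a_hurry)["name"])
```

Switching between the two preferences only changes which stored policy is returned; no network weights are updated.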

What this means for future self-driving cars

To a non-specialist, the central message is that NeuroAction turns the training of self-driving cars into a search for many good options instead of one fixed behavior. This makes it possible to select a driving policy to match the situation (slow and ultra-safe when carrying children, faster when you are in a hurry) while still respecting safety constraints. Although the current experiments are in simulation and use simplified objectives, the framework points toward more adaptable, preference-aware autonomous vehicles that can offer personalized yet reliable driving styles grounded in multi-objective optimization.

Citation: Aboyeji, E., Ajani, O.S., Fenyom, I. et al. NeuroAction: a neuroevolutionary approach to reinforcement learning for autonomous vehicles. Sci Rep 16, 7403 (2026). https://doi.org/10.1038/s41598-026-38269-1

Keywords: autonomous driving, reinforcement learning, evolutionary algorithms, multi-objective optimization, self-driving cars