Clear Sky Science

Multi-level attention DeepLab V3+ with EfficientNetB0 for GI tract organ segmentation in MRI scans

Sharper Aiming at Tumors

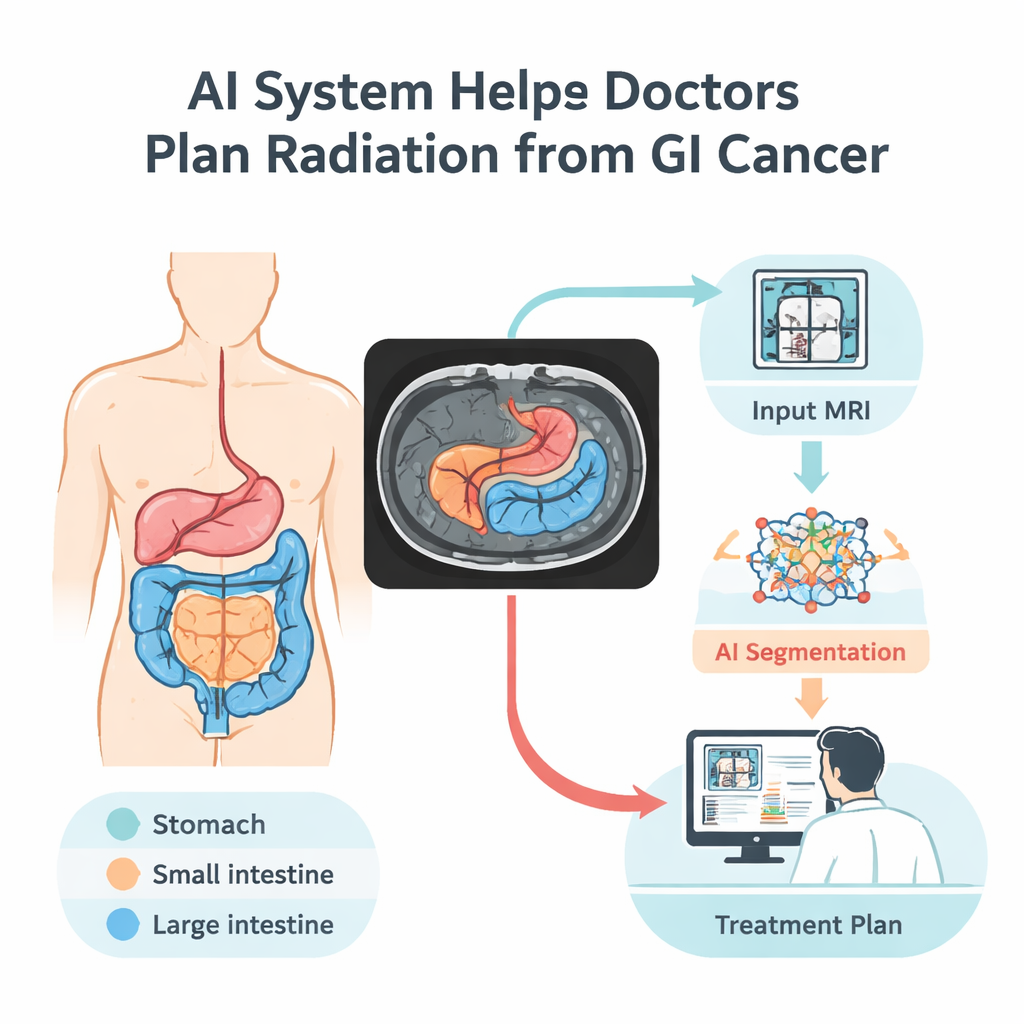

When doctors treat cancers of the digestive system with radiation, they face a delicate balancing act: hit the tumor hard while sparing nearby healthy organs like the stomach and intestines. Today, outlining those organs by hand on each magnetic resonance imaging (MRI) scan can take up to an hour per patient per day. This study introduces a computer vision system that automatically traces these organs in MRI images, promising faster, more precise treatment planning and fewer side effects for patients.

Why Mapping the Gut Matters

Gastrointestinal cancers are common and often deadly, with overall survival hovering around 30 percent. Radiation therapy is a mainstay of treatment, but the digestive tract is packed tightly inside the abdomen, and healthy organs can shift slightly from day to day. To avoid damaging the stomach, small intestine, and large intestine, specialists must see exactly where they are before each treatment session. Manual outlining is slow and prone to variation between experts. An automated, reliable way to draw these boundaries could shorten appointments, allow doctors to treat more patients, and improve the safety and accuracy of radiation doses.

Teaching Computers to Read MRI Scans

The researchers built an artificial intelligence model that learns to recognize three key digestive organs on MRI scans: the stomach, small intestine, and large intestine. They trained it on the UW–Madison GI Tract dataset, the only public collection with detailed organ outlines on abdominal MRI. This dataset includes 38,496 images from 85 patients, along with carefully prepared labels that mark where each organ appears—or where no organ is present. To make the most of this relatively small sample, the team split the data by patient (so the model never sees the same person in both training and testing) and expanded the dataset by flipping, rotating, brightening, and gently warping images. These controlled changes help the system cope with real-world variations in patient position, image brightness, and subtle shape differences.
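The patient-level split and one of the augmentations described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' pipeline: the function names, the slice identifiers, the 20 percent test fraction, and the toy data are all assumptions made for the example.

```python
import random

def split_by_patient(slice_ids, patient_of, test_fraction=0.2, seed=0):
    """Split slices so that every patient lands in exactly one subset."""
    # Shuffle patients, not slices, so no person appears on both sides.
    patients = sorted({patient_of[s] for s in slice_ids})
    rng = random.Random(seed)
    rng.shuffle(patients)
    n_test = max(1, round(len(patients) * test_fraction))
    test_patients = set(patients[:n_test])
    train = [s for s in slice_ids if patient_of[s] not in test_patients]
    test = [s for s in slice_ids if patient_of[s] in test_patients]
    return train, test

def flip_horizontal(image, mask):
    """Mirror an image and its label mask together so they stay aligned."""
    return [row[::-1] for row in image], [row[::-1] for row in mask]

# Toy data (hypothetical): 5 patients with 4 slices each.
patient_of = {f"p{p}_s{s}": f"p{p}" for p in range(5) for s in range(4)}
train_ids, test_ids = split_by_patient(list(patient_of), patient_of)

# The same flip is applied to the image and to its organ mask.
flipped_image, flipped_mask = flip_horizontal([[1, 2, 3]], [[0, 0, 1]])
```

Splitting on patient identifiers rather than on individual slices is what prevents leakage: neighboring slices from one person look very similar, so a slice-level split would make test scores look better than they really are.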

How the New AI Model Sees Patterns

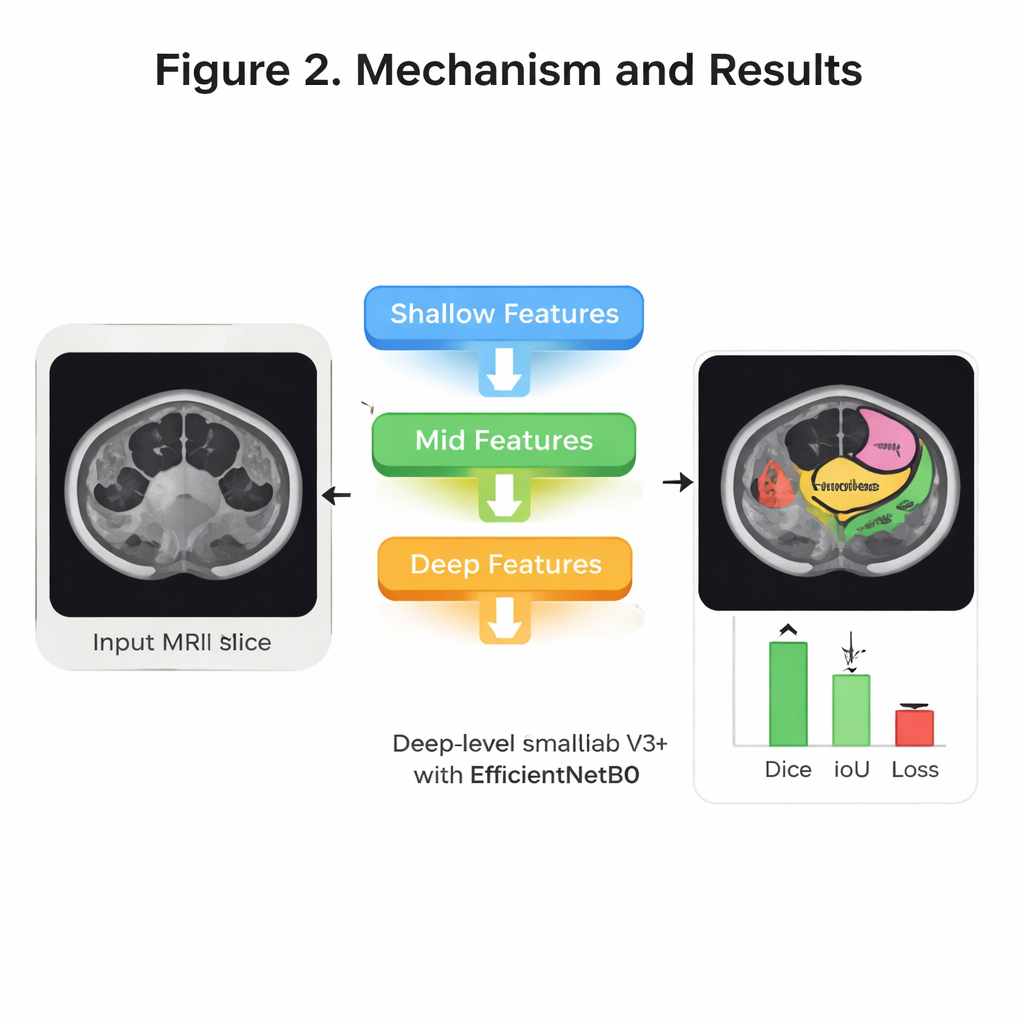

Inside the model, several ideas from modern image recognition are combined to sharpen its “eye” for anatomy. First, a compact network called EfficientNet B0 scans each image and builds up layers of visual features—from simple edges to complex organ shapes—while keeping computing demands modest. Next, a structure known as DeepLab V3+ looks at the image at multiple scales, a bit like zooming in and out to understand both fine detail and overall context. On top of this, the authors add an “attention” mechanism at several levels of detail. In simple terms, attention helps the system decide which parts of the image and which internal signals deserve more weight, so it can focus on subtle but important cues that distinguish, for example, the stomach from loops of small bowel. Finally, a decoder stage stitches these clues back together into a clean, full-size mask showing the three organs.
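To make the idea of attention concrete, here is a small sketch of one common form, a channel gate in the style of squeeze-and-excitation. It is an assumption chosen for illustration; the summary does not specify the exact attention design used in the paper, and the function name and weights below are hypothetical.

```python
import math

def channel_attention(feature_maps, weights):
    """Rescale each channel by a learned gate between 0 and 1.

    feature_maps: list of C channels, each a 2D list of floats.
    weights: C x C matrix (hypothetical learned parameters).
    """
    # Squeeze: summarize each channel by its average value.
    means = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in feature_maps]
    # Excite: mix the summaries and squash each result with a sigmoid.
    gates = []
    for row in weights:
        z = sum(w * m for w, m in zip(row, means))
        gates.append(1.0 / (1.0 + math.exp(-z)))
    # Reweight: channels with gates near 1 pass through, near 0 are muted.
    scaled = [[[g * v for v in r] for r in ch] for g, ch in zip(gates, feature_maps)]
    return scaled, gates

# Two 2x2 channels; the toy weights favor channel 0 and suppress channel 1.
features = [[[1.0, 1.0], [1.0, 1.0]], [[1.0, 1.0], [1.0, 1.0]]]
scaled, gates = channel_attention(features, [[4.0, 0.0], [0.0, -4.0]])
```

In a trained network the weights are learned from data, so the gates end up emphasizing the internal signals that are most useful for telling one organ from another.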

Testing Accuracy and Efficiency

The team systematically tuned how they trained the system, trying different optimization methods, numbers of training epochs (full passes over the training data), and ways of splitting the data for validation. Their best configuration used an optimizer called RMSprop, four-fold cross-validation, and 30 epochs of training. On held-out test patients, the model correctly labeled more than 99 percent of pixels overall and showed very strong overlap with expert-drawn outlines. A widely used overlap measure, the Dice score, reached about 94 percent on average across the three organs, while a related and stricter measure, Intersection over Union, reached about 92 percent. Just as important for hospital use, the system is relatively lightweight: it has about 8.3 million trainable parameters and can process a typical 224×224 MRI slice in roughly 31 milliseconds (about 32 slices per second), fast enough for near real-time support during daily treatment planning.
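The two overlap measures mentioned above have simple definitions for a single organ mask. The sketch below computes both for binary masks stored as nested lists; the function name, the toy masks, and the convention of scoring two empty masks as a perfect match are assumptions for illustration, not details taken from the study.

```python
def dice_and_iou(pred, truth):
    """Return (Dice, IoU) for two binary masks given as 2D lists of 0/1."""
    # Count pixels marked in both masks, and in each mask separately.
    inter = sum(p * t for pr, tr in zip(pred, truth) for p, t in zip(pr, tr))
    pred_area = sum(map(sum, pred))
    truth_area = sum(map(sum, truth))
    union = pred_area + truth_area - inter
    if union == 0:
        # Assumed convention: two empty masks count as a perfect match.
        return 1.0, 1.0
    dice = 2 * inter / (pred_area + truth_area)
    iou = inter / union
    return dice, iou

# Toy example: the prediction covers 3 pixels, the expert outline 2, overlap 2.
pred = [[1, 1, 0], [0, 1, 0]]
truth = [[1, 1, 0], [0, 0, 0]]
dice, iou = dice_and_iou(pred, truth)  # dice = 4/5, iou = 2/3
```

For any single pair of masks the Dice score is never lower than Intersection over Union, which is why the two reported averages differ even though they describe the same predictions.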

What This Could Mean for Patients

In everyday terms, this study shows that a carefully designed AI can reliably trace the stomach and intestines on MRI images, closely matching expert-drawn outlines while working far faster and more consistently. That capability could help radiation oncologists adjust beams more precisely around sensitive tissues, reducing unwanted damage and side effects during treatment. While the current model was trained on scans from one center and mostly healthy anatomy, it provides a strong foundation for future systems that include diseased organs and data from multiple hospitals. With further testing and refinement, tools like this could become routine assistants in the radiation planning room, quietly ensuring that life-saving X-rays land exactly where they are needed most.

Citation: Sharma, N., Gupta, S., Al-Yarimi, F.A.M. et al. Multi-level attention DeepLab V3+ with EfficientNetB0 for GI tract organ segmentation in MRI scans. Sci Rep 16, 7546 (2026). https://doi.org/10.1038/s41598-026-38247-7

Keywords: gastrointestinal cancer, MRI segmentation, radiation therapy planning, deep learning in medicine, medical image analysis