Clear Sky Science

Explainable active reinforcement deep learning improves lung cancer detection from CT images

Why this matters for patients and families

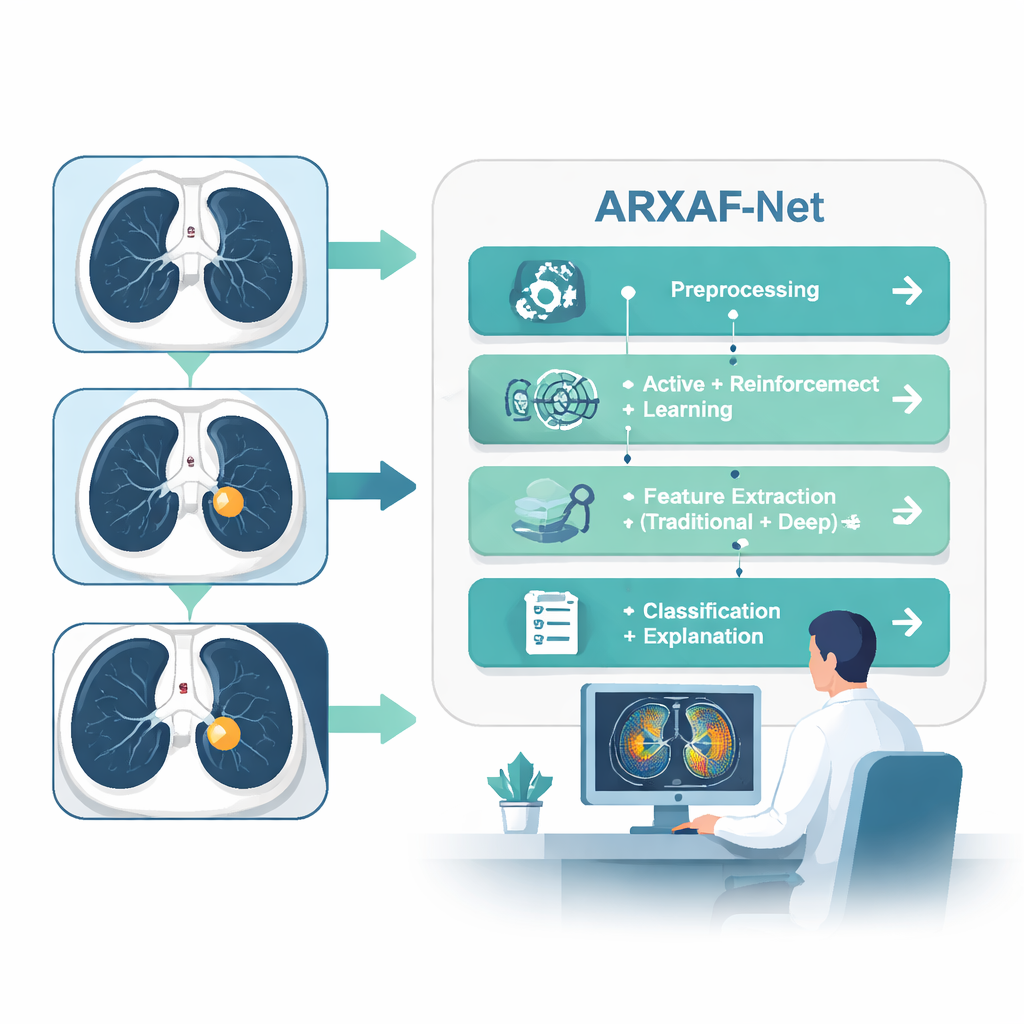

Lung cancer is one of the deadliest cancers, largely because it is often found too late. Doctors rely on CT scans to detect small nodules in the lungs, but reading thousands of images is exhausting and error-prone. This paper presents a new computer system, called ARXAF‑Net, that aims to catch lung cancer earlier and more accurately while also showing doctors why it reached each decision. That mix of high accuracy, fewer missed cancers, and clear visual explanations could make AI a safer, more trusted helper in the clinic.

Teaching computers to learn from the right scans

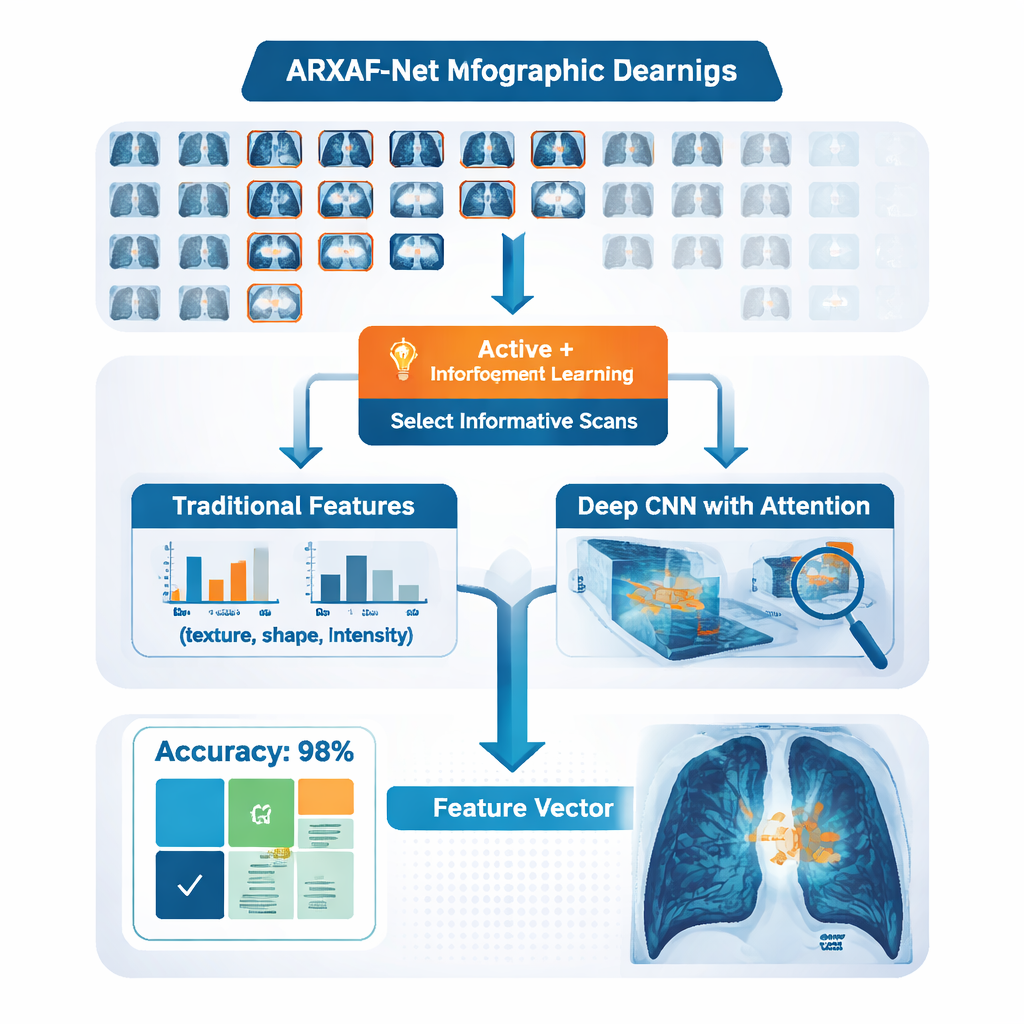

Most powerful AI systems need huge numbers of carefully labeled images, which in medicine means many hours of work by expert radiologists. ARXAF‑Net tackles this problem with a strategy that makes the computer picky about which images need a label at all. It starts with a modest set of CT scans that are already labeled as cancer or non‑cancer. The model then looks at thousands of unlabeled scans and estimates how uncertain it is about each one. Instead of labeling everything, it selects only the most confusing or informative cases and passes them to a special decision-making module inspired by reinforcement learning, a technique also used in game‑playing AIs. This module learns, step by step, how to assign reliable labels to these tricky scans, gradually building a much larger, high‑quality training set without requiring experts to label every image.
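For readers who want to see the "picky" selection step in concrete terms, here is a minimal sketch in Python. It assumes the model outputs a cancer probability for each unlabeled scan and that uncertainty is measured with binary entropy; the summary does not say which uncertainty measure the authors use, so both the measure and the function names are illustrative. The reinforcement-learning labeling module is not sketched here.

```python
import math

def entropy(p_cancer):
    """Binary entropy (in bits) of a predicted cancer probability."""
    if p_cancer <= 0.0 or p_cancer >= 1.0:
        return 0.0  # the model is completely sure, so there is no uncertainty
    q = 1.0 - p_cancer
    return -(p_cancer * math.log2(p_cancer) + q * math.log2(q))

def select_most_uncertain(probabilities, k):
    """Return the indices of the k scans the model is least sure about."""
    ranked = sorted(range(len(probabilities)),
                    key=lambda i: entropy(probabilities[i]),
                    reverse=True)
    return ranked[:k]

# Hypothetical model outputs: P(cancer) for five unlabeled scans.
probs = [0.98, 0.52, 0.03, 0.40, 0.91]
picked = select_most_uncertain(probs, k=2)
print(picked)  # [1, 3]: the scans with probabilities closest to 0.5
```

Scans with probabilities near 0.5 score highest, so they are the ones passed on for labeling, while confident predictions near 0 or 1 are left alone.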

Blending human-crafted clues with deep learning

ARXAF‑Net does not rely on a single type of image cue. The system extracts traditional "handcrafted" features that radiologists and image scientists have used for years—such as how rough or smooth a region looks, how bright it is, and what shape a possible nodule takes. At the same time, a deep neural network analyzes the raw CT pixels and automatically learns complex patterns linked to cancer, helped by an "attention" mechanism that teaches the network to focus on the most informative parts of the lungs. All of these measurements are carefully scaled and combined into a single compact fingerprint for each scan. The authors then apply feature-selection methods to keep only the most useful elements of this fingerprint, reducing noise and keeping the system efficient.
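The scale, combine, and select steps described above can be illustrated with a small Python sketch. Everything specific in it is an assumption made for illustration: min-max scaling, the toy feature values, and the selection rule (keep the features whose average differs most between cancer and non-cancer scans). The summary does not name the scaling or feature-selection methods the authors actually use.

```python
def min_max_scale(rows):
    """Rescale every feature column to the range [0, 1]."""
    n_features = len(rows[0])
    lows = [min(r[j] for r in rows) for j in range(n_features)]
    highs = [max(r[j] for r in rows) for j in range(n_features)]
    return [
        [(v - lo) / (hi - lo) if hi > lo else 0.0
         for v, lo, hi in zip(r, lows, highs)]
        for r in rows
    ]

def fuse(handcrafted, deep):
    """Concatenate the two scaled feature sets, scan by scan."""
    return [h + d for h, d in zip(min_max_scale(handcrafted),
                                  min_max_scale(deep))]

def top_features(fused, labels, k):
    """Keep the k features whose class means differ most (a toy rule)."""
    def mean(values):
        return sum(values) / len(values)
    gaps = []
    for j in range(len(fused[0])):
        pos = [row[j] for row, y in zip(fused, labels) if y == 1]
        neg = [row[j] for row, y in zip(fused, labels) if y == 0]
        gaps.append(abs(mean(pos) - mean(neg)))
    return sorted(range(len(gaps)), key=lambda j: gaps[j], reverse=True)[:k]

# Hypothetical values: two handcrafted features (roughness, brightness)
# and one learned deep feature for each of four scans.
handcrafted = [[10.0, 200.0], [30.0, 210.0], [20.0, 205.0], [40.0, 195.0]]
deep = [[0.1], [0.9], [0.2], [0.8]]
labels = [0, 1, 0, 1]  # 1 = cancer, 0 = non-cancer

fingerprints = fuse(handcrafted, deep)
kept = top_features(fingerprints, labels, k=2)
print(kept)  # [2, 0]: the deep feature and roughness separate the classes best
```

Scaling first matters because raw brightness values in the hundreds would otherwise swamp a deep feature that lives between 0 and 1.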

From numbers to clear answers and heatmaps

Once each CT image has its fingerprint, ARXAF‑Net tests several types of classifiers—both classic machine learning methods and modern deep networks—to decide whether the image shows cancer. The best-performing setup turns out to be a relatively simple convolutional neural network equipped with attention, fed by the combined traditional and deep features. On a curated dataset of 30,020 CT images (evenly split between cancer and non‑cancer), this combined system reaches a striking test accuracy of about 99.9%, with very high sensitivity (catching almost all cancers) and near‑perfect specificity (rarely flagging healthy lungs as diseased). Just as important, the authors measure how long training and testing take, showing that the model can run fast enough to be practical in hospital settings.
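To make the three headline measures concrete, the short Python sketch below computes accuracy, sensitivity, and specificity from the four possible outcomes of a yes/no test. The counts are invented for illustration and are not the paper's results.

```python
def screening_metrics(tp, fn, tn, fp):
    """Compute standard screening measures from confusion-matrix counts.

    tp: cancers correctly flagged     fn: cancers missed
    tn: healthy scans correctly cleared   fp: healthy scans wrongly flagged
    """
    total = tp + fn + tn + fp
    return {
        "accuracy": (tp + tn) / total,      # share of all scans classified correctly
        "sensitivity": tp / (tp + fn),      # share of cancers that were caught
        "specificity": tn / (tn + fp),      # share of healthy scans that were cleared
    }

# Hypothetical counts for 100 cancer scans and 100 non-cancer scans.
metrics = screening_metrics(tp=95, fn=5, tn=98, fp=2)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

On a dataset split evenly between the two classes, as here, accuracy is simply the average of sensitivity and specificity, which is why all three numbers are worth reporting together.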

Making AI decisions visible to radiologists

A major barrier to using AI in medicine is trust: doctors are reluctant to rely on a "black box" whose reasoning they cannot see. ARXAF‑Net addresses this by building explainability directly into its design. Using a technique called Grad‑CAM, the system overlays a colored heatmap onto each CT scan, highlighting the regions that influenced its decision most strongly. Three experienced radiologists reviewed hundreds of these heatmaps. They checked whether the highlighted areas matched real tumor regions and whether any suspicious spots were missed. With the heatmaps turned on, the radiologists’ own accuracy rose from about 97% to nearly 100%, and their reading time fell by about a quarter. Quantitative tests also showed strong alignment between the AI’s focus and the experts’ markings, suggesting that the system is looking at clinically meaningful structures rather than random image noise.
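The core of Grad‑CAM is compact enough to sketch in plain Python. Each feature map from the network's last convolutional layer is weighted by the average gradient of the class score with respect to that map, the weighted maps are summed, and negative values are clipped to zero. The tiny 2×2 maps below are made up, and the overlap score (intersection over union against an expert's mask) is an assumed stand-in, since the summary does not name the alignment measure the authors used.

```python
def grad_cam(activations, gradients):
    """Combine feature maps into one heatmap, Grad-CAM style.

    activations, gradients: lists of 2-D maps (one per channel), same shape.
    """
    rows, cols = len(activations[0]), len(activations[0][0])
    # Channel weight = average gradient over that channel's map.
    weights = [sum(sum(r) for r in g) / (rows * cols) for g in gradients]
    heat = [[0.0] * cols for _ in range(rows)]
    for w, a in zip(weights, activations):
        for i in range(rows):
            for j in range(cols):
                heat[i][j] += w * a[i][j]
    # Clip negatives: keep only regions that support the predicted class.
    return [[max(0.0, v) for v in row] for row in heat]

def iou(heatmap, expert_mask, threshold):
    """Overlap between the thresholded heatmap and an expert's marking."""
    inter = union = 0
    for h_row, m_row in zip(heatmap, expert_mask):
        for h, m in zip(h_row, m_row):
            hot = h >= threshold
            inter += hot and m == 1
            union += hot or m == 1
    return inter / union if union else 0.0

# Hypothetical two-channel example on a 2x2 grid.
activations = [[[1.0, 0.0], [0.0, 2.0]],
               [[0.0, 3.0], [1.0, 0.0]]]
gradients = [[[0.5, 0.5], [0.5, 0.5]],
             [[-1.0, -1.0], [-1.0, -1.0]]]
heat = grad_cam(activations, gradients)
print(heat)  # [[0.5, 0.0], [0.0, 1.0]]

expert_mask = [[0, 0], [0, 1]]  # 1 marks the tumor region drawn by an expert
print(iou(heat, expert_mask, threshold=0.4))  # 0.5
```

In a real system the heatmap would be upsampled to the size of the CT image and drawn as a colored overlay, which is the view the radiologists reviewed.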

What this means for future lung cancer care

To a layperson, ARXAF‑Net can be thought of as a careful assistant that learns quickly from the hardest cases, combines many types of visual clues, and then shows its work. By cutting down on the amount of expert labeling needed, it could make powerful lung‑cancer screening tools more accessible. By pairing very high accuracy with transparent heatmaps that radiologists understand, it may also help build the confidence needed to bring AI into everyday clinical practice. If similar ideas are validated on data from many hospitals and scanner types, such systems could help catch lung cancer earlier and more reliably, giving patients a better chance at timely treatment.

Citation: Nady, G., Salem, A., Badawy, O. et al. Explainable active reinforcement deep learning improves lung cancer detection from CT images. Sci Rep 16, 7510 (2026). https://doi.org/10.1038/s41598-026-38239-7

Keywords: lung cancer, CT imaging, medical AI, deep learning, explainable AI