Clear Sky Science

Evaluation of deep learning models for segmentation of hippocampus volumes from MRI images in Alzheimer’s disease

Why this research matters to families

Alzheimer’s disease slowly erodes memory and independence, and the brain changes behind it often begin long before symptoms become obvious. Doctors know that a small brain structure called the hippocampus shrinks as the disease progresses, but measuring that shrinkage by hand on brain scans is slow and difficult. This study explores whether modern artificial intelligence can automatically outline the hippocampus on MRI images and reliably estimate how much has been lost on each side of the brain, potentially giving doctors a faster, more objective window into early brain changes.

A small brain region with a big role in memory

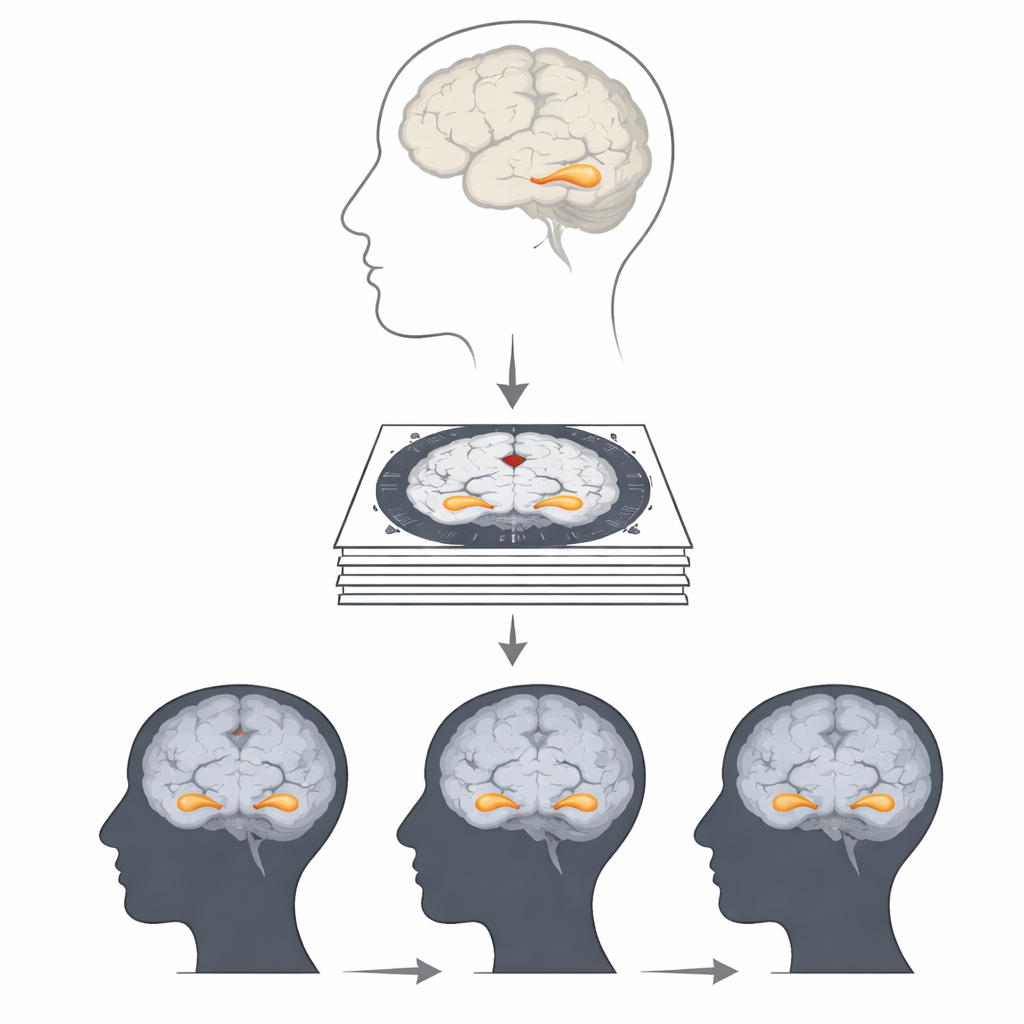

The hippocampus, tucked deep in the temporal lobes on both sides of the brain, helps us form new memories and navigate our surroundings. Earlier research has shown that its volume tends to decline in people with Alzheimer’s disease, and that loss can begin years before a formal diagnosis. The left hippocampus is more closely tied to verbal and autobiographical memories, while the right side plays a larger role in spatial memory and navigation. Tracking how the size of each side changes over time could therefore reveal not only whether disease is present, but how it might affect day-to-day thinking and functioning.

Why measuring the hippocampus is so hard

On an MRI scan, the hippocampus appears as a small, intricately shaped structure, just a tiny part of each image slice. Traditionally, experts trace its boundaries by hand across 25 to 30 slices, then combine those areas to calculate volume. This manual approach is considered the gold standard, but it demands specialized training, takes considerable time, and is hard to apply to the thousands of scans collected in large studies or busy clinics. Existing automated software can handle larger, simpler brain regions well, yet often struggles to capture the fine details of the hippocampus consistently, especially across different scanners and image qualities.
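To make "combine those areas to calculate volume" concrete, here is a minimal sketch of the usual slice-by-slice arithmetic: count the outlined pixels on each slice, convert that count to an area using the pixel size, add the areas up, and multiply by the slice thickness. The function names, the pixel spacing and the slice thickness below are illustrative assumptions, not values or code taken from the study, and the sketch assumes the slices are contiguous and do not overlap.

```python
def slice_area_mm2(mask, pixel_spacing_mm):
    """Area of one outlined slice: number of labeled pixels times the area of one pixel.

    mask: 2D grid (list of rows) where truthy values mark hippocampus pixels.
    pixel_spacing_mm: (row_spacing, column_spacing) in millimetres (assumed known from the scan).
    """
    row_mm, col_mm = pixel_spacing_mm
    n_pixels = sum(1 for row in mask for value in row if value)
    return n_pixels * row_mm * col_mm


def volume_mm3(masks, pixel_spacing_mm, slice_thickness_mm):
    """Approximate volume by summing slice areas and multiplying by slice thickness.

    Assumes contiguous, non-overlapping slices of equal thickness (a simplifying assumption).
    """
    total_area = sum(slice_area_mm2(m, pixel_spacing_mm) for m in masks)
    return total_area * slice_thickness_mm


if __name__ == "__main__":
    # Two tiny made-up slices, each with 4 labeled pixels of 1 mm x 1 mm.
    toy_masks = [[[1, 1], [1, 1]], [[1, 1], [1, 1]]]
    vol = volume_mm3(toy_masks, pixel_spacing_mm=(1.0, 1.0), slice_thickness_mm=1.5)
    print(vol, "mm^3 =", vol / 1000, "mL")
```

Real hippocampal masks span 25 to 30 slices rather than two, but the arithmetic is the same; dividing by 1,000 converts cubic millimetres to millilitres.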

Putting deep learning to the test

To tackle this challenge, the researchers evaluated three deep learning models designed to find and outline objects in images. They used MRI scans from 300 people in the Alzheimer’s Disease Neuroimaging Initiative: 100 with Alzheimer’s disease, 100 with mild cognitive impairment (a possible early stage), and 100 healthy older adults. After a neurologist carefully labeled the hippocampus on thousands of image slices, the team trained the models to learn the visual patterns that define this structure. They compared performance using several standard accuracy measures, focusing on how well each model’s predicted outlines overlapped with the expert labels.
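The summary does not name the exact overlap measures used, but two of the most common ones in medical image segmentation are the Dice coefficient and intersection-over-union (IoU). The sketch below assumes those two and computes them for a predicted outline and an expert outline on one slice; it illustrates the general idea and is not the study's evaluation code.

```python
def dice_and_iou(pred, truth):
    """Overlap between a predicted mask and an expert mask (both 2D grids of 0/1).

    Dice = 2 * |overlap| / (|pred| + |truth|)
    IoU  = |overlap| / |pred OR truth|
    Both range from 0 (no overlap) to 1 (identical outlines).
    """
    # Collect the (row, column) coordinates of labeled pixels in each mask.
    pred_px = {(r, c) for r, row in enumerate(pred) for c, v in enumerate(row) if v}
    truth_px = {(r, c) for r, row in enumerate(truth) for c, v in enumerate(row) if v}

    overlap = len(pred_px & truth_px)
    total = len(pred_px) + len(truth_px)
    union = len(pred_px | truth_px)

    # If both masks are empty, treat them as perfectly matching.
    dice = 2 * overlap / total if total else 1.0
    iou = overlap / union if union else 1.0
    return dice, iou


if __name__ == "__main__":
    predicted = [[1, 1, 0], [0, 1, 0]]
    expert = [[1, 0, 0], [0, 1, 1]]
    print(dice_and_iou(predicted, expert))
```

Because a small structure like the hippocampus covers only a few pixels per slice, a handful of misplaced border pixels can noticeably lower these scores, which is one reason hippocampal segmentation is a demanding test.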

The winning model and what it revealed

Among the three approaches, a model called U-Net clearly performed best at drawing accurate borders around the hippocampus on both sides of the brain. It achieved the highest overlap with expert labels across all three groups, outperforming a popular object-detection model known as YOLO-v8 and another advanced method called DeepLab-v3. Once trained, the U-Net model was used to segment the hippocampus in a separate test set of images and to calculate volumes. The results showed a clear pattern: people with Alzheimer’s had the smallest hippocampal volumes, those with mild cognitive impairment had intermediate volumes, and healthy controls had the largest. In every group, the left side tended to be slightly smaller than the right.

Subtle differences between left and right

By comparing the two sides directly, the researchers also examined how symmetrical the hippocampus was in each group. They found that in healthy older adults, the right side was noticeably larger than the left, giving the highest asymmetry. In contrast, people with Alzheimer’s disease and those with mild cognitive impairment showed smaller overall volumes and only slight differences between left and right. This suggests that as disease progresses, both hippocampi shrink and their volumes become more similar, a pattern that may carry information about how memory and other cognitive abilities are changing.
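One common way to put a number on left-right asymmetry is to divide the difference between the two volumes by their average. The summary does not say which formula the researchers used, so the convention below, (right minus left) divided by the mean of the two sides and expressed as a percentage, is an assumption chosen for illustration. The volumes in the usage lines are made up and are not results from the study.

```python
def asymmetry_index(left_ml, right_ml):
    """Signed left-right asymmetry in percent (assumed convention, not from the study).

    Positive values mean the right hippocampus is larger than the left;
    0 means the two sides are the same size.
    """
    mean = (left_ml + right_ml) / 2
    if mean <= 0:
        raise ValueError("volumes must be positive")
    return (right_ml - left_ml) / mean * 100


if __name__ == "__main__":
    # Hypothetical volumes in millilitres, for illustration only.
    print(asymmetry_index(3.0, 3.2))  # right noticeably larger
    print(asymmetry_index(2.5, 2.55))  # smaller volumes, more similar sides
```

Dividing by the average rather than reporting the raw difference keeps the index comparable between people whose hippocampi differ in overall size, which matters when comparing healthy adults with people whose hippocampi have already shrunk.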

What this means for future care

For non-specialists, the take-home message is that artificial intelligence can now match expert performance in a tedious but crucial step: outlining the hippocampus on brain scans. In this study, the U-Net model proved especially reliable for this task, allowing rapid calculation of hippocampal volume on both sides of the brain. If further validated in larger and more diverse datasets, such tools could help clinicians track early brain changes more easily, support earlier and more confident diagnosis, and monitor how well treatments slow or alter disease progression. The work brings us closer to using routine MRI scans, enhanced by deep learning, as a practical biomarker for Alzheimer’s disease in everyday clinical practice.

Citation: Pusparani, Y., Lin, CY., Jan, YK. et al. Evaluation of deep learning models for segmentation of hippocampus volumes from MRI images in Alzheimer’s disease. Sci Rep 16, 7878 (2026). https://doi.org/10.1038/s41598-026-38220-4

Keywords: Alzheimer’s disease, hippocampus volume, brain MRI, deep learning segmentation, U-Net