Clear Sky Science · en

Ensemble-based high-performance deep learning models for medical image retrieval in breast cancer detection

Why smarter scans matter for breast health

Breast cancer is one of the most common cancers in women, and ultrasound scans are a key tool for spotting suspicious lumps early. But doctors must now sift through growing archives of medical images, and computer tools that could help often struggle to truly “understand” what they see. This study introduces a smarter image-search engine for breast ultrasound that not only classifies scans with high accuracy and retrieves similar past cases, but also shows doctors which parts of the image guided its decisions.

From simple pictures to helpful comparisons

Hospitals now store huge numbers of breast ultrasound scans, making it hard and time‑consuming to locate past cases that resemble a new patient’s image. Earlier content‑based image retrieval systems, which search an archive by comparing the images themselves, relied on basic traits like brightness or texture, and these often failed to match how radiologists reason about disease. The authors aim to close this gap by training a deep learning system on a widely used collection of 830 breast ultrasound images, grouped into normal tissue, non‑cancerous (benign) tumors, and cancerous (malignant) tumors. Their goal is twofold: classify a new scan into one of these three groups and then automatically retrieve similar past scans to guide diagnosis.

Teaching a hybrid AI to see patterns

The team builds a “hybrid” model that combines three components, each playing a different role. A convolutional network specializes in reading the spatial patterns in an ultrasound image, such as the shape of a lump or how sharply its edges stand out. A recurrent network, more often used for sequences like speech, is adapted to treat rows of pixels as an ordered signal, helping the system notice subtle changes across the image. On top of these, an explainable AI component produces heatmaps that highlight the image regions most responsible for a decision, so clinicians can check whether the model is focusing on the tumor rather than on irrelevant background.
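
The idea of reading an image row by row can be sketched in a few lines. This is a minimal illustration, not the authors' architecture: it assumes a plain single-layer (Elman-style) recurrent network applied directly to pixel rows, with random weights and the hypothetical helper names `init_rnn` and `rnn_over_rows`. Each row updates a hidden state, h_t = tanh(W_x·x_t + W_h·h_(t-1) + b), so the final state depends on the order in which the rows appear.

```python
import math
import random


def init_rnn(input_size, hidden_size, seed=0):
    """Create small random weights for a single-layer Elman-style RNN.

    Sizes and the uniform initialisation are hypothetical choices for illustration.
    """
    rng = random.Random(seed)
    w_x = [[rng.uniform(-0.5, 0.5) for _ in range(input_size)] for _ in range(hidden_size)]
    w_h = [[rng.uniform(-0.5, 0.5) for _ in range(hidden_size)] for _ in range(hidden_size)]
    b = [0.0] * hidden_size
    return w_x, w_h, b


def rnn_over_rows(image, w_x, w_h, b):
    """Read a grayscale image top to bottom, one pixel row per time step.

    Each row is the input x_t; the hidden state h carries a summary of the rows seen so far:
        h_t = tanh(W_x x_t + W_h h_{t-1} + b)
    Returns the final hidden state as a feature vector.
    """
    hidden_size = len(b)
    h = [0.0] * hidden_size  # start with an empty "memory"
    for row in image:
        # The whole new state is computed from the previous h before h is replaced.
        h = [
            math.tanh(
                sum(w * x for w, x in zip(w_x[i], row))
                + sum(w * hp for w, hp in zip(w_h[i], h))
                + b[i]
            )
            for i in range(hidden_size)
        ]
    return h


if __name__ == "__main__":
    # Toy 4x4 "image" with pixel intensities between 0 and 1.
    toy = [
        [0.0, 0.1, 0.2, 0.3],
        [0.0, 0.0, 0.9, 0.9],
        [0.5, 0.5, 0.5, 0.5],
        [1.0, 0.0, 1.0, 0.0],
    ]
    weights = init_rnn(input_size=4, hidden_size=3, seed=1)
    print(rnn_over_rows(toy, *weights))
```

Because the hidden state is carried from one row to the next, feeding the same rows in reverse order generally gives a different summary, which is what lets a recurrent layer pick up on changes down the image. In a trained model the weights would be learned rather than random.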

Cleaning, expanding, and organizing the data

Before training, the researchers carefully prepare the ultrasound images. They remove duplicates and unhelpful borders, convert the scans to a common gray‑scale format, crop away blank regions, and resize everything to a standard small square so the model can process the data efficiently. Each image is labeled as normal, benign, or malignant, and accompanying mask images outline the exact tumor regions. Because medical datasets are usually small, the team artificially expands the training set by rotating, flipping, zooming, and adjusting the contrast of images, growing it from 548 to 3,840 images. This controlled variation is meant to help the network cope with the many ways real tumors can appear on different machines and in different patients.
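
A rough sketch of the cleaning and expansion steps, on images stored as lists of pixel rows with values between 0 and 1. Everything here is an assumption for illustration: the blank-pixel threshold, the nearest-neighbour resizing, the zoom and contrast factors, and the helper names are hypothetical, and rotation is left out because small-angle rotation needs interpolation code that would lengthen the example. This recipe makes five versions of each image, so it does not reproduce the paper's exact expansion from 548 to 3,840 images.

```python
def crop_blank_border(image, threshold=0.05):
    """Remove outer rows/columns whose pixels are all at or below `threshold` (near-black)."""
    rows = [i for i, row in enumerate(image) if any(p > threshold for p in row)]
    cols = [j for j in range(len(image[0])) if any(row[j] > threshold for row in image)]
    if not rows or not cols:
        return image  # nothing but background: leave unchanged
    return [row[cols[0]:cols[-1] + 1] for row in image[rows[0]:rows[-1] + 1]]


def resize_nearest(image, out_h, out_w):
    """Nearest-neighbour resize: each output pixel copies the closest input pixel."""
    h, w = len(image), len(image[0])
    return [[image[i * h // out_h][j * w // out_w] for j in range(out_w)] for i in range(out_h)]


def preprocess(image, size):
    """Crop blank borders, then resize to a size x size square."""
    return resize_nearest(crop_blank_border(image), size, size)


def flip_horizontal(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def zoom_in(image, factor):
    """Crop the central 1/factor of the image and stretch it back to the original size."""
    h, w = len(image), len(image[0])
    ch, cw = max(1, int(h / factor)), max(1, int(w / factor))
    top, left = (h - ch) // 2, (w - cw) // 2
    center = [row[left:left + cw] for row in image[top:top + ch]]
    return resize_nearest(center, h, w)


def adjust_contrast(image, factor):
    """Scale each pixel's distance from the mean intensity, clipping results to [0, 1]."""
    pixels = [p for row in image for p in row]
    mean = sum(pixels) / len(pixels)
    return [[min(1.0, max(0.0, mean + factor * (p - mean))) for p in row] for row in image]


def augment(image):
    """Return the original plus four label-preserving variants (hypothetical recipe)."""
    return [
        image,
        flip_horizontal(image),
        zoom_in(image, 1.2),
        adjust_contrast(image, 1.2),  # slightly more contrast
        adjust_contrast(image, 0.8),  # slightly less contrast
    ]
```

Each variant keeps the original label (normal, benign, or malignant); in a real pipeline the matching tumor mask would need the same flip or zoom so it still lines up with the image.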

How the system classifies and searches

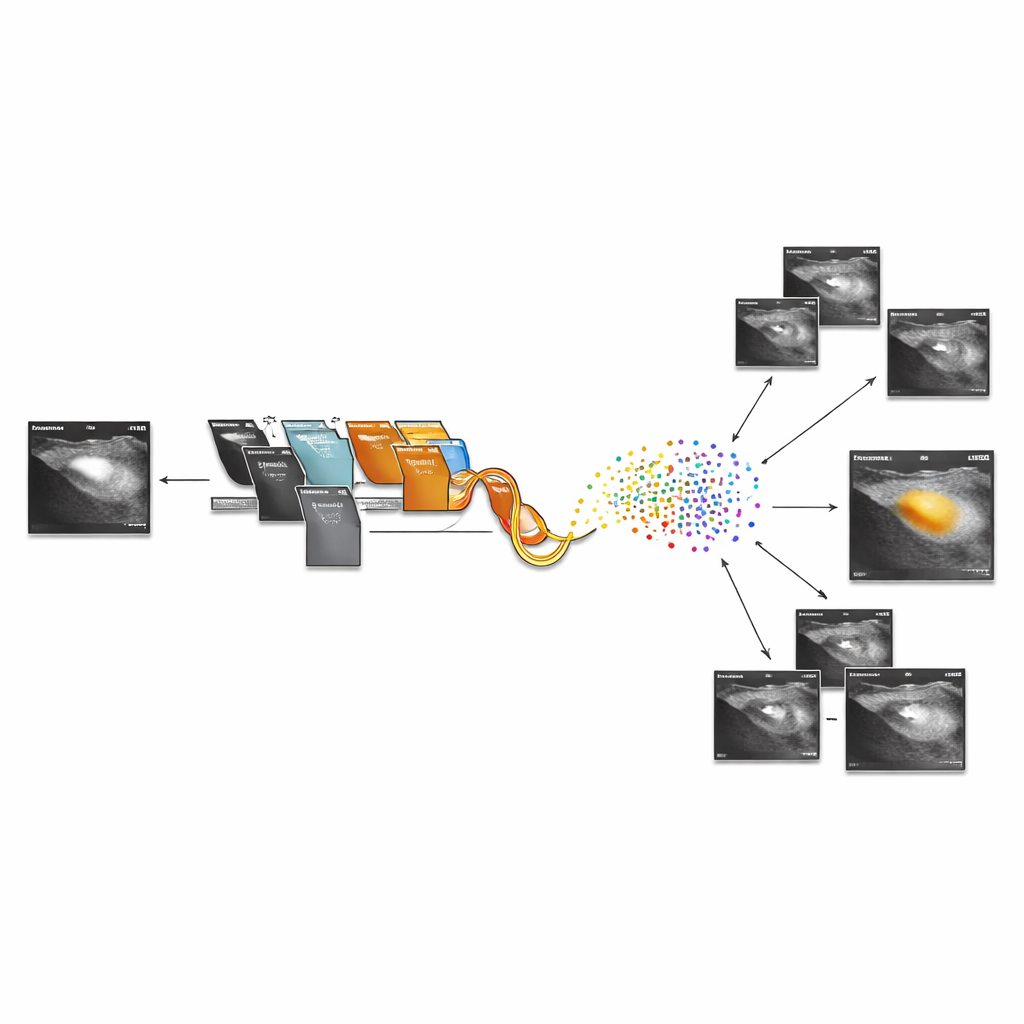

Once trained, the hybrid model turns each ultrasound scan into a compact numerical fingerprint taken from the second‑to‑last layer of the network. Images with similar fingerprints tend to show similar tissue patterns, so the team can compute simple distances between these fingerprints to find the closest matches in the database. The system first predicts whether the new scan is normal, benign, or malignant, then retrieves visually and clinically similar cases, giving the radiologist a gallery of reference images. The explainability module overlays warm‑colored regions on the original scan, showing where the network “looked” to reach its conclusion, which can build trust and support teaching and second opinions.
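
The retrieval step amounts to a nearest-neighbour search over fingerprints. The sketch below assumes each stored case is a tuple of a hypothetical case ID, its label, and a fingerprint vector, and uses plain Euclidean distance; the source only says "simple distances", so the exact measure the authors use may differ (cosine distance is a common alternative). The function names are illustrative.

```python
import math


def euclidean(a, b):
    """Straight-line distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def retrieve_similar(query, database, k=3):
    """Return the k stored cases whose fingerprints are closest to the query.

    `database` is a list of (case_id, label, fingerprint) tuples; fingerprints are
    assumed to be feature vectors from the network's second-to-last layer.
    Results are (distance, case_id, label), nearest first.
    """
    scored = [(euclidean(query, fp), case_id, label) for case_id, label, fp in database]
    scored.sort(key=lambda item: item[0])  # smallest distance = most similar
    return scored[:k]


if __name__ == "__main__":
    # Toy three-number fingerprints; real ones would be much longer.
    archive = [
        ("case-a", "benign", [0.1, 0.9, 0.0]),
        ("case-b", "malignant", [0.9, 0.1, 0.3]),
        ("case-c", "normal", [0.0, 0.0, 1.0]),
        ("case-d", "benign", [0.3, 0.7, 0.1]),
    ]
    for dist, case_id, label in retrieve_similar([0.15, 0.85, 0.05], archive, k=2):
        print(f"{case_id} ({label}): distance {dist:.3f}")
```

With a large archive, an indexed search would replace this linear scan, but the principle is the same: the smaller the distance, the more alike the two scans look to the network.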

What the results mean for patients

In tests on the breast ultrasound dataset, the hybrid approach reaches about 99% classification accuracy and outperforms several leading deep learning models that rely on a single architecture. It also performs consistently across multiple train‑test splits, suggesting that its accuracy is not a fluke of one particular way of dividing the data. For patients, this means that, in the future, a radiologist could not only get an accurate computer‑assisted reading of an ultrasound, but also instantly see similar past cases and which parts of the image raised concern. While the authors note that broader clinical trials and tests on other imaging types are still needed, their work points toward more transparent, dependable, and efficient use of AI in breast cancer detection.
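
Checking stability across splits means repeating the train-test division with different random seeds, retraining each time, and looking at the spread of the resulting accuracies. The helpers below are a hypothetical sketch: the source does not say how its splits were drawn, so keeping each class's share equal in every split (stratification) and the function names are assumptions.

```python
import random
import statistics
from collections import defaultdict


def stratified_split(labels, test_fraction, seed):
    """Split sample indices into train/test, keeping each class's proportion roughly equal."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train, test = [], []
    for indices in by_class.values():
        indices = indices[:]  # copy so the grouping itself is not reordered
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)


def accuracy(predicted, actual):
    """Fraction of predictions that match the true labels."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def summarize(scores):
    """Mean and sample standard deviation of per-split accuracies (needs two or more splits)."""
    return statistics.mean(scores), statistics.stdev(scores)
```

A full run would loop over several seeds, train a fresh model on each training set, call `accuracy` on its test predictions, and pass the list of scores to `summarize`; a small standard deviation is what "stable behavior" would look like in numbers.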

Citation: Fawzy, A.E., Almandouh, M.E., Herajy, M. et al. Ensemble-based high-performance deep learning models for medical image retrieval in breast cancer detection. Sci Rep 16, 8723 (2026). https://doi.org/10.1038/s41598-026-38218-y

Keywords: breast ultrasound, medical image retrieval, deep learning, breast cancer detection, explainable AI