Clear Sky Science

A hybrid deep learning framework using convolutional and transformer models for robust plant disease classification

Why spotting sick plants matters

Plant diseases quietly destroy a huge share of the world’s food every year, cutting yields, hurting farmers’ incomes, and threatening food security. Catching these diseases early is hard: fields are large, expert plant doctors are scarce, and many symptoms are subtle. This paper explores how a new type of artificial intelligence can learn to recognize dozens of leaf diseases from simple photos, offering a path toward smartphone or field-camera tools that help farmers act before problems spread.

From human guesswork to digital eyes

Traditional diagnosis relies on people visually inspecting leaves and sometimes sending samples to a lab. That process is slow, subjective, and often unavailable in rural regions. Over the past decade, researchers have trained computer programs to read leaf images instead. Earlier systems either required engineers to hand-design visual cues, or used deep learning models called convolutional neural networks that excel at picking up textures, colors, and edges. These methods improved accuracy but still struggled when disease signs were faint, spread across the leaf, or looked similar across different illnesses. The new study asks whether combining two modern AI approaches can deliver more reliable answers in these difficult cases.

Blending two ways of seeing

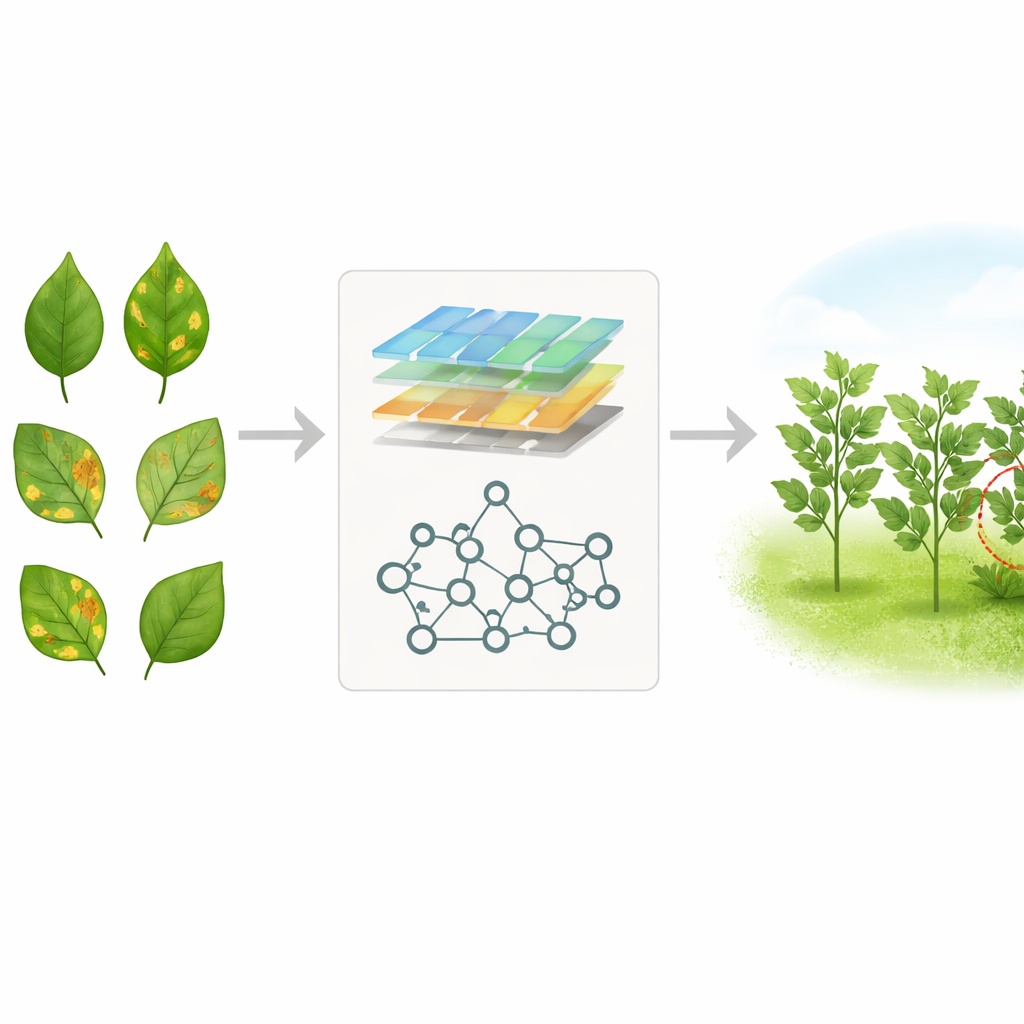

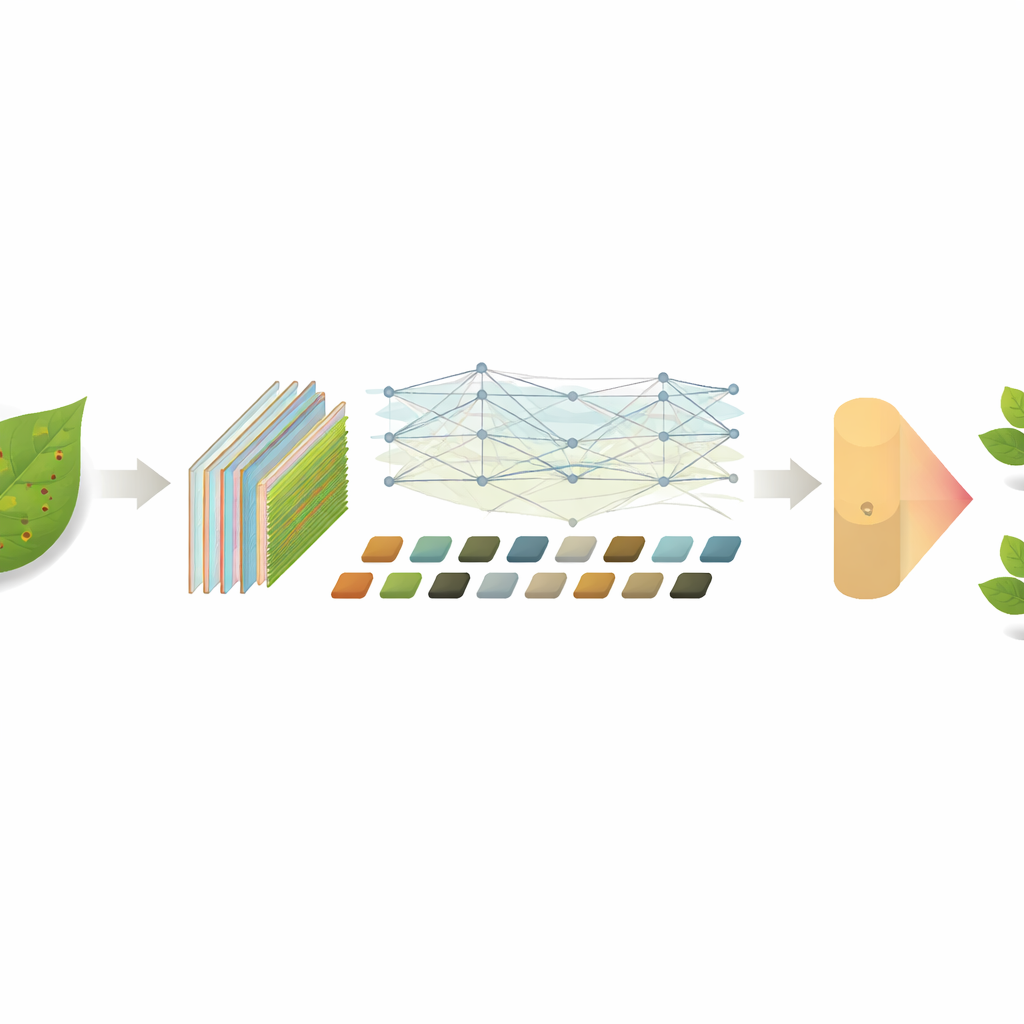

The authors build a hybrid system that fuses a convolutional network with a newer image model known as a vision transformer. The first part, EfficientNet-B7, acts like a magnifying glass, scanning leaf photos for fine details such as tiny spots, veins, and color shifts. Its output is then reshaped and passed to a transformer (ViT-B16), which is designed to notice how different regions of the image relate to each other across long distances. By turning the detailed features into a series of small patches and letting the transformer weigh how each patch interacts with all the others, the system can understand both local blemishes and the overall pattern on the leaf surface. This combination aims to mimic how a skilled agronomist looks closely at a lesion while also considering its placement and surroundings.
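The hand-off from convolutional features to the transformer can be illustrated with a toy sketch. It is not the authors' implementation: the real system uses EfficientNet-B7 and ViT-B16 with learned projections, multiple attention heads, and positional information, none of which appear here. The function names, the 2×2 feature map, and the use of identity projections are all assumptions chosen to keep the example small.

```python
import math

def feature_map_to_tokens(feature_map):
    """Flatten an H x W grid of C-dimensional feature vectors into H*W tokens."""
    return [vec for row in feature_map for vec in row]

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Single-head self-attention with identity projections (assumption:
    a trained transformer would apply learned query/key/value matrices)."""
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    output = []
    for query in tokens:
        # Score this patch against every patch, including itself.
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in tokens]
        weights = softmax(scores)
        # Each output token is a weighted mix of all patches.
        mixed = [sum(w * tok[d] for w, tok in zip(weights, tokens)) for d in range(dim)]
        output.append(mixed)
    return output

# Hypothetical 2 x 2 feature map with 3 channels, standing in for CNN output.
feature_map = [
    [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
    [[0.0, 0.0, 1.0], [1.0, 1.0, 0.0]],
]
tokens = feature_map_to_tokens(feature_map)
attended = self_attention(tokens)
```

Each entry in `attended` blends information from every patch, which is the mechanism that lets a transformer relate a lesion in one corner of a leaf to discoloration in another.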

Teaching the system with thousands of leaves

To train and test their model, the researchers used a large public collection of 21,534 images showing 38 different conditions, including many diseases and healthy leaves from crops such as apple, tomato, grape, and corn. They resized the photos to a common size and applied data augmentation (rotations, flips, and zooms) to simulate the messy conditions of real fields. The model starts from general visual patterns learned on existing image data and is then fine-tuned on this plant collection. Throughout training, the team tracks not just overall accuracy but also how often the system correctly identifies each disease and how well it avoids false alarms, making sure performance holds up across both common and rarer classes.
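The rotations, flips, and zooms mentioned above can be sketched on a tiny grid of pixel values. This is only an illustration under stated assumptions: the article does not give the authors' augmentation settings, so the 90-degree rotation steps, the 2× centre zoom, and every function name here are hypothetical.

```python
import random

def rotate90(image):
    """Rotate a 2D image (list of rows) 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]

def flip_horizontal(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]

def center_zoom(image, factor=2):
    """Crop the central 1/factor region, then enlarge it by repeating pixels."""
    height, width = len(image), len(image[0])
    crop_h, crop_w = height // factor, width // factor
    top, left = (height - crop_h) // 2, (width - crop_w) // 2
    crop = [row[left:left + crop_w] for row in image[top:top + crop_h]]
    return [
        [crop[r // factor][c // factor] for c in range(crop_w * factor)]
        for r in range(crop_h * factor)
    ]

def augment(image, rng):
    """Apply a random combination of the three transforms."""
    out = image
    if rng.random() < 0.5:
        out = flip_horizontal(out)
    for _ in range(rng.randrange(4)):  # 0 to 3 quarter turns
        out = rotate90(out)
    if rng.random() < 0.5:
        out = center_zoom(out)
    return out

# Hypothetical 4 x 4 "photo" whose pixel values are just 0..15.
image = [[4 * r + c for c in range(4)] for r in range(4)]
variant = augment(image, random.Random(0))
```

Each call to `augment` yields a slightly different view of the same leaf, so the model sees more variety than the raw collection contains.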

How well the hybrid approach performs

When evaluated on unseen images, the hybrid model correctly classifies plant health and disease in 98.13 percent of cases, and maintains high scores on precision, recall, and the F1 score, which balances the two. It handles both healthy leaves and difficult diseases, though very early symptoms remain more challenging. The authors compare their system with a range of popular alternatives, including stand‑alone convolutional networks, pure transformer models, lightweight mobile networks, fast detectors like YOLO, and classic tools such as support vector machines and random forests. Across these head‑to‑head tests, the hybrid consistently comes out on top, edging out even strong competitors that use only EfficientNet or ensembles of multiple networks.
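For readers who want to see how these scores are defined, here is a minimal sketch that computes accuracy along with per-class precision, recall, and F1 from two lists of labels. The class names and predictions are invented for illustration and are not results from the study.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def per_class_metrics(y_true, y_pred):
    """Precision, recall, and F1 for every class seen in either list."""
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)  # false alarms
        fn = sum(1 for t, p in pairs if t == label and p != label)  # missed cases
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        report[label] = {"precision": precision, "recall": recall, "f1": f1}
    return report

# Hypothetical labels for six test leaves.
y_true = ["healthy", "healthy", "rust", "rust", "blight", "blight"]
y_pred = ["healthy", "rust", "rust", "rust", "blight", "healthy"]

overall = accuracy(y_true, y_pred)
report = per_class_metrics(y_true, y_pred)
```

In this toy case the overall accuracy is 4 out of 6, yet the per-class view shows that every "rust" leaf was found while half of the "blight" leaves were missed, which is why per-class scores matter alongside a single headline number.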

What this means for farms and food

In practical terms, the study shows that combining two complementary AI “views” of an image—sharp local detail and broad context—can significantly improve automatic plant disease detection. While the current system still expects fairly clear photos and runs best on machines with graphics processors, the same design ideas could be adapted into lighter versions for smartphones, drones, or low‑cost field devices. As these tools mature, they could give farmers rapid, on‑the‑spot guidance about what is attacking their crops and where, supporting earlier treatment, reduced chemical use, and more stable harvests. For everyday readers, the key message is that smarter cameras and algorithms are becoming powerful allies in protecting the world’s food supply.

Citation: Jawed, M.M., Tufail, F.A., Ahmed, M.Z. et al. A hybrid deep learning framework using convolutional and transformer models for robust plant disease classification. Sci Rep 16, 9704 (2026). https://doi.org/10.1038/s41598-026-38209-z

Keywords: plant disease detection, deep learning, vision transformer, precision agriculture, image classification