Clear Sky Science

Building user trust in AI chatbots for customer service through human-like cues and perceived reliability

Why talking to machines feels more human

Many of us now turn to chat windows instead of call centers when we need help with an order, a bill, or a late delivery. These helpers are often chatbots—computer programs that talk back in everyday language. Yet people still wonder: Can I really trust a machine with my problems and my personal information? This study looks closely at how real shoppers in Pakistan and China decide whether to trust customer service chatbots, and what makes these digital helpers feel both caring and reliable.

Digital helpers on the front line

Companies worldwide are embracing chatbots because they are fast, always available, and cheaper than large call-center teams. In countries like Pakistan and China, where online shopping is growing rapidly, chatbots increasingly answer questions, track orders, and handle complaints. But surveys show that many users come away disappointed, describing chatbots as cold, unhelpful, or hard to trust. The researchers behind this paper wanted to move beyond statistics and hear detailed stories from users themselves, especially in these two fast-changing but under-studied markets.

Listening to real conversations

To uncover how trust forms or breaks, the team interviewed 28 people who had recently used chatbots for customer service, 18 in Pakistan and 10 in China. Rather than running a questionnaire, they held in-depth conversations in local languages, asking participants to describe good and bad experiences with chatbots. The interviews were carefully transcribed, translated, and examined using a step‑by‑step method called thematic analysis. This approach allowed the researchers to group hundreds of comments into broader patterns, revealing two big ingredients of trust: how human the chatbot feels, and how capable it seems.

When a chatbot feels like a person

The first ingredient is emotional. Users described trusting chatbots more when the interaction felt natural, warm, and personal. Smooth back‑and‑forth conversation signaled that the chatbot was “paying attention.” Simple touches—friendly greetings, a polite tone, or well‑placed emojis—made the experience feel less mechanical. People especially valued moments when the chatbot seemed to understand their frustration, responded kindly, or remembered details from past chats. These qualities created a sense of social presence, as if a real person were on the other side. At the same time, some users felt uneasy when the chatbot acted “too human,” or when personalisation felt intrusive, showing that warmth works best when it feels authentic and not overdone.

When a chatbot proves it can do the job

The second ingredient is practical. Even the friendliest chatbot quickly loses trust if it gives wrong answers, responds slowly, or behaves inconsistently from one visit to the next. Participants placed high value on accurate information, quick replies, and steady performance across multiple conversations. They also appreciated clear signals about what the chatbot could and could not do, and liked it when the system handed them off to a human agent instead of guessing or hiding its limits. Concerns about privacy were strong: users wanted reassurance that their personal and payment details were stored safely and not being misused. When these practical needs were met, emotional touches like empathy and humour felt genuine rather than like a mask.

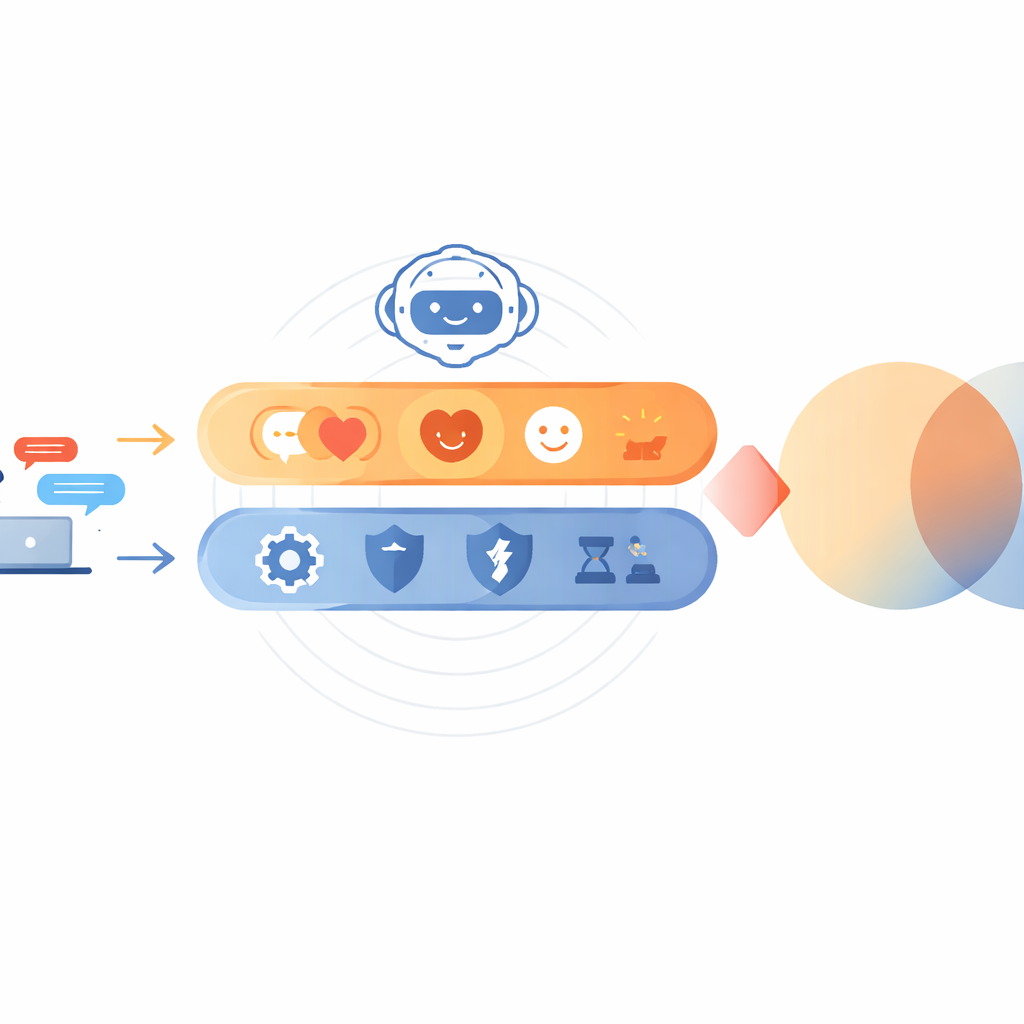

Two kinds of trust working together

By combining these stories, the authors propose a simple but powerful picture of how trust in chatbots develops. Emotional trust—sparked by human‑like conversation, empathy, and personal attention—helps people feel comfortable giving the chatbot a chance. Practical trust—built on accuracy, speed, consistency, and data protection—keeps them coming back. In the settings studied, especially in Pakistan, people often relied first on emotional signals to overcome their uncertainty about new technology, then judged the system on its performance. For businesses, the message is clear: a trustworthy chatbot must be both heart and brain. It should talk like a considerate human while acting like a careful, competent professional. When both sides work together, customers are more likely to treat these digital helpers not as cold machines, but as dependable partners in their everyday shopping lives.

Citation: Wang, S., Fatima, N., Shahbaz, M. et al. Building user trust in AI chatbots for customer service through human-like cues and perceived reliability. Sci Rep 16, 7860 (2026). https://doi.org/10.1038/s41598-026-38179-2

Keywords: AI chatbots, customer trust, customer service, anthropomorphism, digital customer experience