Estimating the odds ratio from the output scores of machine learning models: possibilities and limitations

Why this matters for health and AI

Doctors and public health researchers increasingly turn to artificial intelligence to discover how environmental factors, such as temperature or air pollution, affect our health. But while modern machine‑learning tools are powerful at predicting who might get sick, they often fail to answer a more basic question that clinicians and policymakers care about: how much does a given exposure raise or lower risk? This study tackles that gap by showing how to translate the opaque output of popular machine‑learning models into the familiar odds ratios that underpin much of medical and epidemiologic decision‑making.

From black‑box scores to understandable risk

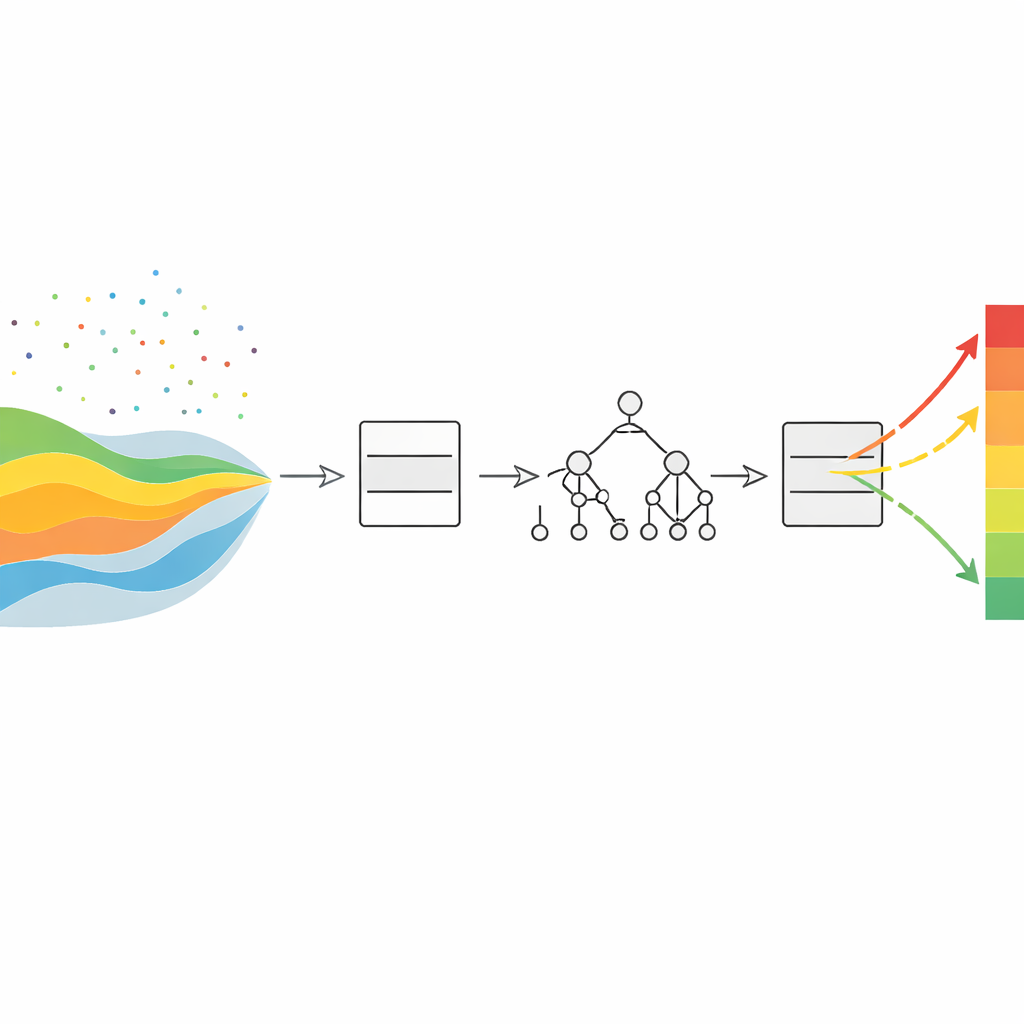

In traditional epidemiology, a workhorse method called logistic regression links an exposure (for example, cold weather) and a health outcome (such as hospital admission) while controlling for other factors like age or pollution. Its main strength is interpretability: it directly provides an odds ratio, which tells us how many times higher (or lower) the odds of illness are in one group compared with another. Modern machine‑learning methods such as random forests and gradient boosting can capture far more complex patterns in data, but they usually return scores without a straightforward meaning for risk, making it difficult to report results in a language clinicians trust. The authors set out to connect these two worlds.
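To make the odds ratio concrete, here is a minimal sketch in Python. The counts are hypothetical and are not taken from the study, and the function name `odds_ratio` is only illustrative. For a single yes/no exposure with no other variables in the model, the logistic regression coefficient equals the natural logarithm of this ratio.

```python
import math

def odds_ratio(exposed_cases, exposed_noncases, unexposed_cases, unexposed_noncases):
    """Odds of the outcome among the exposed divided by odds among the unexposed."""
    odds_exposed = exposed_cases / exposed_noncases
    odds_unexposed = unexposed_cases / unexposed_noncases
    return odds_exposed / odds_unexposed

# Hypothetical counts: 30 of 100 people admitted on cold days, 20 of 100 on other days.
or_cold = odds_ratio(30, 70, 20, 80)

# In an unadjusted logistic regression with one binary exposure,
# the fitted coefficient would be the log of this odds ratio.
beta_cold = math.log(or_cold)

print(f"odds ratio = {or_cold:.2f}, log odds ratio = {beta_cold:.2f}")
```

An odds ratio above 1 means higher odds of the outcome in the exposed group, and a value below 1 means lower odds.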

New ways to read risk from machine‑learning models

The researchers proposed ten different ways to recover odds ratios from the scores produced by machine‑learning classifiers. Eight of them are "hybrid" estimators: they start from the model’s raw or calibrated scores (numbers between zero and one that reflect how likely each person is to have the outcome) and then multiply a simple summary of those scores by an adjustment factor derived from a conventional logistic regression model. This factor accounts for differences in age, season, and other background variables between exposed and unexposed groups. The two remaining estimators rely on partial dependence functions, a tool that asks, in effect, "what would the model predict if everyone had exposure level A versus level B, while everything else stayed as observed?" By comparing these two sets of predictions, the authors obtain a model‑based odds ratio that reflects the machine‑learning model’s view of the data.
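The partial dependence idea can be sketched in a few lines of Python. This is one plausible reading of the description above, not the authors' exact estimators. The scoring function `toy_score`, the feature names `cold` and `age`, and the four example rows are all hypothetical stand-ins for a fitted classifier and real data.

```python
import math

def toy_score(row):
    """Hypothetical stand-in for a fitted model's predicted probability."""
    logit = -2.0 + 0.7 * row["cold"] + 0.03 * (row["age"] - 70)
    return 1.0 / (1.0 + math.exp(-logit))

def partial_dependence(score, rows, feature, value):
    """Average prediction when `feature` is set to `value` for every row,
    with all other variables left as observed."""
    preds = [score({**row, feature: value}) for row in rows]
    return sum(preds) / len(preds)

def pd_odds_ratio(score, rows, feature, level_a, level_b):
    """Odds at exposure level B divided by odds at exposure level A."""
    p_a = partial_dependence(score, rows, feature, level_a)
    p_b = partial_dependence(score, rows, feature, level_b)
    return (p_b / (1 - p_b)) / (p_a / (1 - p_a))

# Hypothetical data: exposure indicator and age for four people.
rows = [
    {"cold": 0, "age": 66},
    {"cold": 1, "age": 72},
    {"cold": 0, "age": 80},
    {"cold": 1, "age": 91},
]

or_pd = pd_odds_ratio(toy_score, rows, "cold", 0, 1)
print(f"partial-dependence odds ratio = {or_pd:.3f}")
```

Because the predictions are averaged before the odds are taken, the result is a population-averaged odds ratio. In this toy setup it is slightly closer to 1 than `exp(0.7)`, the odds ratio built into `toy_score` for any one individual.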

Testing the methods on real health questions

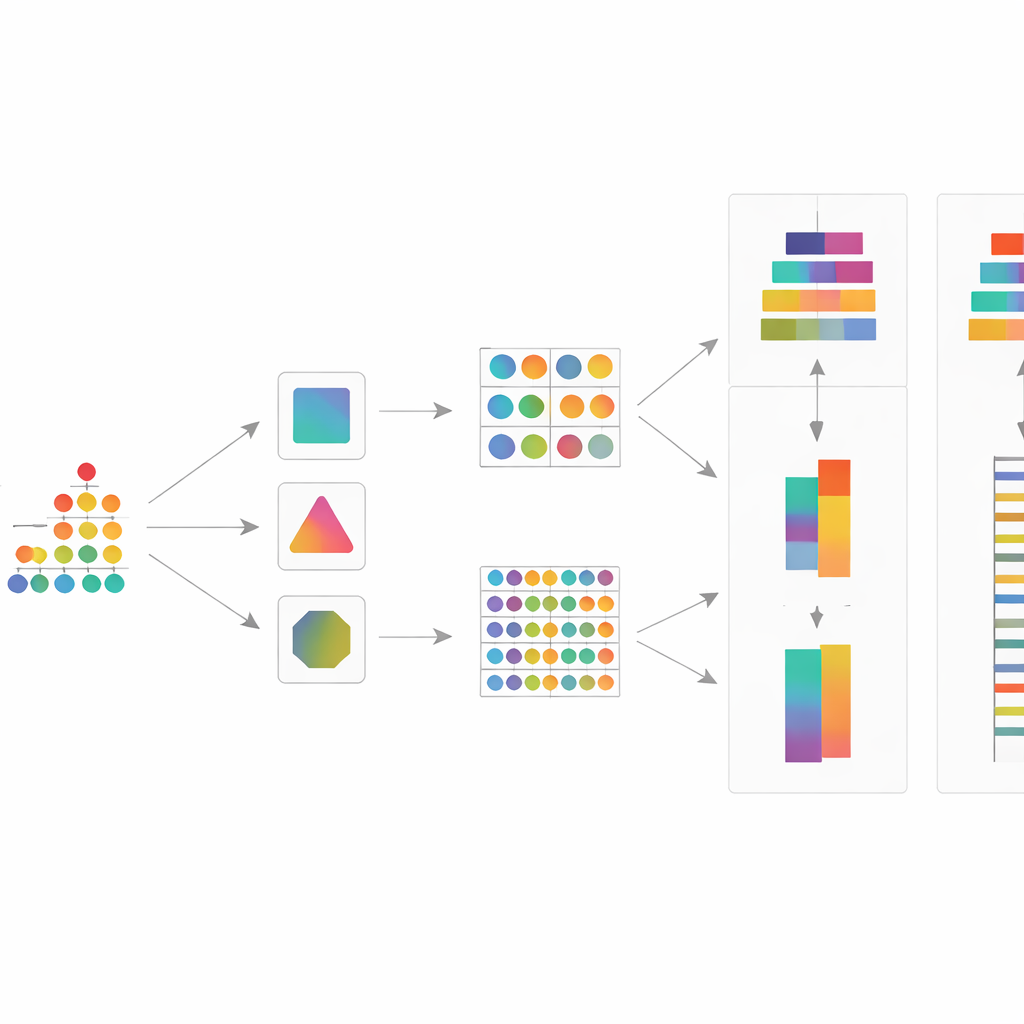

To see how well these ideas work, the team applied them to three models—logistic regression, random forest, and gradient boosting—on two large epidemiologic datasets from Israel. One followed older adults admitted to hospital with respiratory or cardiovascular problems, focusing on whether unusually low temperatures increased the chance of admission. The other traced more than 160,000 infants to examine whether higher prenatal temperatures were linked to overweight at age two. For each combination of dataset and model, they calculated ten odds‑ratio estimates and their uncertainty ranges, and compared the results with those from standard logistic regression, treating it as a practical benchmark.

Which machine‑learning tools behaved best

A key step in the study was "calibration": reshaping the raw scores of machine‑learning models so that, for instance, among people assigned a 20% risk, about one in five truly have the outcome. The authors tested three common calibration methods and found that a simple technique called isotonic regression often brought random forest and gradient‑boosting scores closest to well‑behaved probabilities. When these calibrated scores were fed into the odds‑ratio estimators, an important pattern emerged. Odds ratios derived from gradient boosting tended to line up well with those from logistic regression: about 87% of estimates fell inside the logistic model’s 95% confidence range, and their uncertainty intervals were often somewhat narrower. In contrast, random forests showed erratic behavior: many predictions collapsed to 0 or 1, which made several odds‑ratio estimates unstable or misleading, even after calibration.
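For readers curious how isotonic calibration works, the following is a minimal sketch of the standard pool-adjacent-violators procedure in plain Python. It is an illustration under simplifying assumptions (all scores are distinct, and the scores and outcomes are made up), not the study's implementation. The function name `isotonic_fit` is hypothetical.

```python
def isotonic_fit(scores, outcomes):
    """Pool-adjacent-violators: map scores to non-decreasing calibrated values.

    Assumes all scores are distinct. Returns the sorted scores and the
    calibrated value for each of them.
    """
    pairs = sorted(zip(scores, outcomes))
    blocks = []  # each block holds [sum of outcomes, number of points]
    for _, y in pairs:
        blocks.append([float(y), 1])
        # Merge backwards while an earlier block has a higher mean than the next one.
        while len(blocks) > 1 and blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]:
            total, count = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
    fitted = []
    for total, count in blocks:
        fitted.extend([total / count] * count)
    return [s for s, _ in pairs], fitted

# Hypothetical raw model scores and observed outcomes (1 = had the outcome).
scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
outcomes = [0, 0, 1, 0, 0, 1, 1, 1]

sorted_scores, calibrated = isotonic_fit(scores, outcomes)
for s, c in zip(sorted_scores, calibrated):
    print(f"raw score {s:.1f} -> calibrated {c:.2f}")
```

In this toy example the three middle scores are pooled to a shared value of one third, matching the observed outcome rate in that range. Several calibrated values also land exactly on 0 or 1, where the odds p / (1 - p) are zero or undefined, which hints at why scores that collapse to the extremes can destabilize odds-ratio estimates.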

What this means for using AI in public health

The study demonstrates that it is possible to enjoy the predictive power of modern machine‑learning models without sacrificing interpretability, at least under common conditions in environmental health research. When paired with careful calibration and the proposed estimators, gradient‑boosting models can provide odds ratios that are comparable to, and sometimes more precise than, those from classic logistic regression. However, not all machine‑learning algorithms are equally suited to this task: random forests, in particular, may require extra caution or alternative strategies when used to estimate effect sizes. For policymakers and clinicians, the key takeaway is that advanced AI methods need not remain black boxes—if used thoughtfully, they can yield clear, familiar measures of risk that support real‑world decisions.

Citation: Nirel, R., Bauman, N., Morin, E. et al. Estimating the odds ratio from the output scores of machine learning models: possibilities and limitations. Sci Rep 16, 8922 (2026). https://doi.org/10.1038/s41598-026-38150-1

Keywords: odds ratio, machine learning, epidemiology, risk estimation, temperature and health