Clear Sky Science

Quantitative fat-fraction analysis of the rotator cuff muscles on clinical sagittal and coronal T1-weighted MRI using deep learning algorithms

Why Shoulder Muscle Fat Matters

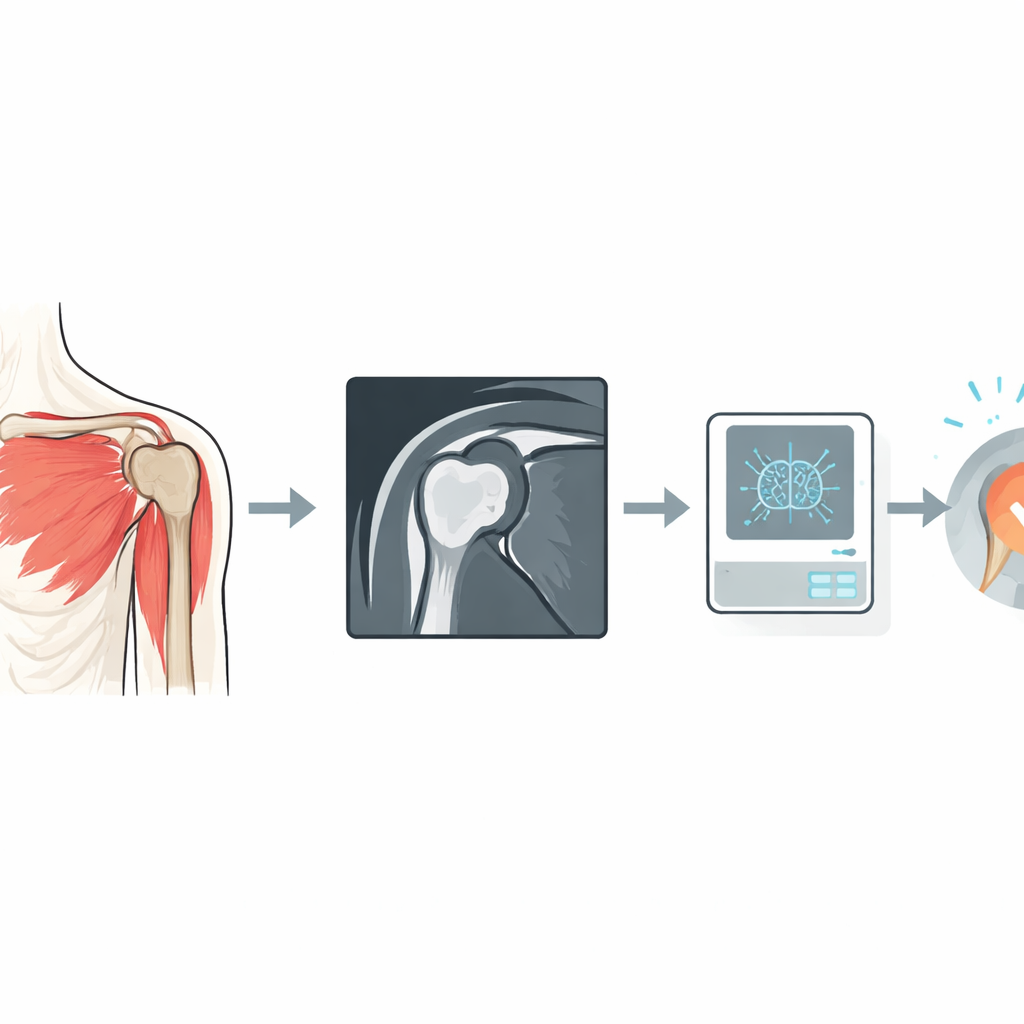

When a tendon in the shoulder’s rotator cuff tears, surgeons can often repair it, but the muscle’s condition strongly influences whether that repair will last. One key warning sign is how much fat has crept into the damaged muscle. In routine practice, doctors judge this by eye on a single slice of a shoulder scan, using a coarse five-grade scale. This study explores how modern image analysis, powered by deep learning, could turn routine shoulder scans into precise 3D maps of muscle fat, helping doctors better predict who will benefit from surgery and how to plan it.

The Problem With Blurry Information

Today, most surgeons rely on standard magnetic resonance imaging (MRI) of the shoulder to assess rotator cuff muscles. On these images, fat looks bright and muscle darker, and a widely used grading system ranks each muscle from “no fat” to “more fat than muscle.” But this judgment is made on a single angled slice of the shoulder, the so-called Y-view, and different experts often disagree on the exact grade. In patients whose torn tendons have retracted (pulled back), that single slice may no longer capture the same part of the muscle from one person to the next, making comparisons even harder. Past research has also shown that what you see in one slice does not reliably represent the entire three-dimensional muscle.

A Better Way to See Fat in Muscles

Radiologists already have a more precise MRI technique, known as Dixon imaging, that can measure the percentage of fat, or fat fraction, in each tiny volume element (voxel) throughout the muscle. These scans reveal that fat is unevenly distributed and can vary along the length of the muscle. However, Dixon scans are not part of routine shoulder imaging in most hospitals. The authors of this study asked whether a computer could learn to infer the same detailed fat information directly from the standard MRIs that patients already receive. They assembled data from 99 adults with rotator cuff tears who had both routine T1-weighted MRIs and specialized Dixon scans of the same shoulder, covering all four key rotator cuff muscles.
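In Dixon imaging, the signal is separated into a fat image F and a water image W, and the voxel-wise fat fraction is commonly computed as FF = F / (F + W). The sketch below shows that arithmetic with NumPy. It assumes the fat and water magnitude images are already reconstructed and co-registered; the function name and the small `eps` guard for empty background voxels are illustrative choices, not the authors' processing pipeline.

```python
import numpy as np

def fat_fraction_map(fat, water, eps=1e-6):
    """Voxel-wise signal fat fraction in percent: 100 * F / (F + W).

    Hypothetical helper; assumes `fat` and `water` are co-registered
    magnitude images of the same shape.
    """
    fat = np.asarray(fat, dtype=float)
    water = np.asarray(water, dtype=float)
    total = fat + water
    # Background voxels with (almost) no signal get 0 % instead of a
    # division by zero.
    ff = np.where(total > eps, fat / np.maximum(total, eps), 0.0)
    return 100.0 * ff

# Toy 2x2 slice: a lean voxel, a half-fat voxel, pure water, empty background.
fat = np.array([[10.0, 50.0], [0.0, 0.0]])
water = np.array([[90.0, 50.0], [100.0, 0.0]])
ff = fat_fraction_map(fat, water)  # values 10, 50, 0 and 0 percent
```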

Teaching an Algorithm to Read Between the Pixels

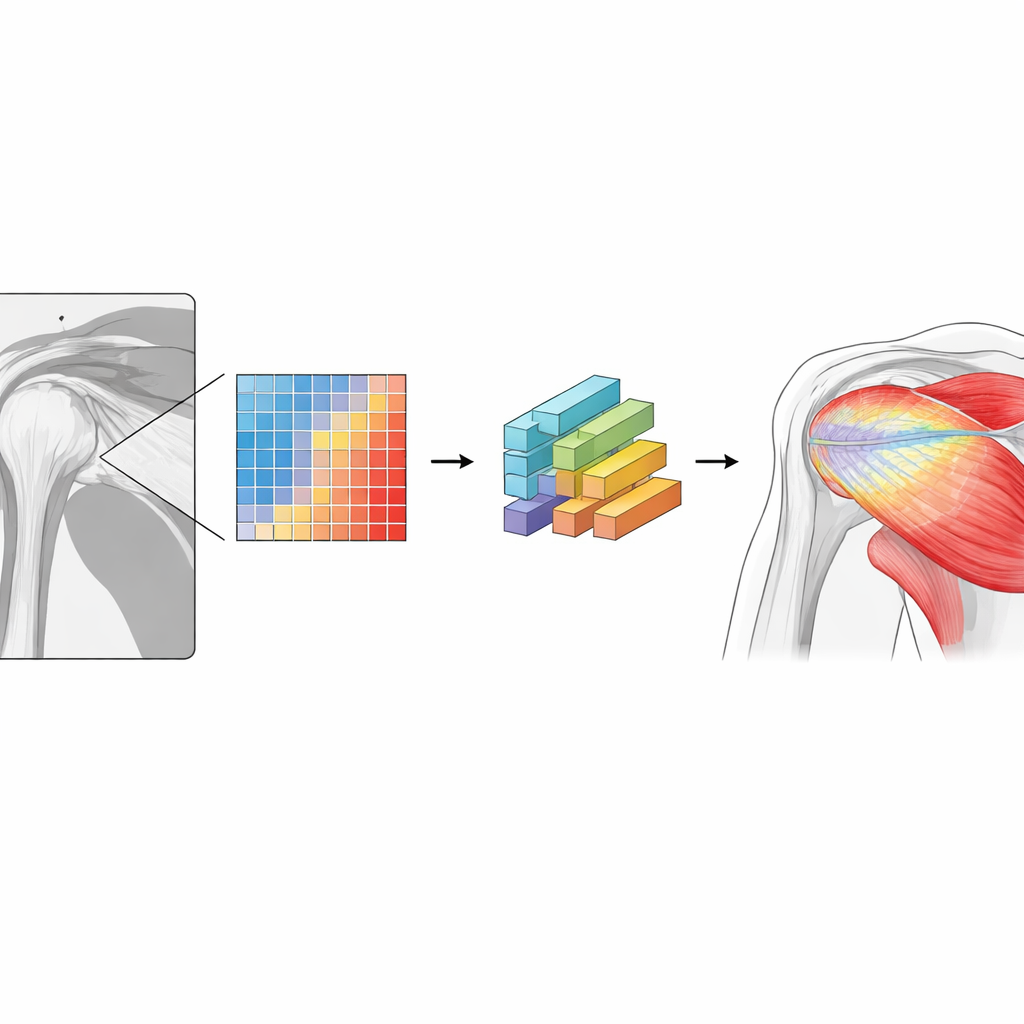

The team first used a previously validated deep learning tool to automatically outline the shoulder bones and each rotator cuff muscle on the standard MRIs. They then aligned the routine scans with the Dixon images so that each voxel in the standard MRI could be matched to its true fat percentage from the Dixon scan. Instead of simply labelling each voxel as “fat” or “muscle,” they divided fat content into five ranges, from almost no fat to very high fat. A 3D neural network was trained to predict, for every voxel inside the muscles, which of these five ranges it belonged to, based only on its appearance in the standard MRI. The network was trained on 75 shoulders and tested on the remaining 24, for both sagittal (side-on) and coronal (front-facing) scans.
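The labelling step above can be sketched as binning the registered Dixon fat fraction of each muscle voxel into five ordinal classes, with voxels outside the muscle mask marked so that a training loss can ignore them. The summary does not give the authors' five ranges, so the boundaries in `BIN_EDGES` are hypothetical, as are the helper names and the `-1` ignore label. The case-level 75/24 split is likewise only an illustration of splitting by shoulder rather than by voxel.

```python
import numpy as np

# Hypothetical class boundaries in percent fat; the authors' actual five
# ranges are not stated in this summary.
BIN_EDGES = np.array([10.0, 25.0, 50.0, 75.0])
IGNORE = -1  # label for voxels outside the muscle mask (excluded from the loss)

def fat_classes(ff_percent, muscle_mask):
    """Map a registered Dixon fat-fraction volume to 5 ordinal classes (0..4).

    <10 % -> 0, 10-25 % -> 1, 25-50 % -> 2, 50-75 % -> 3, >=75 % -> 4.
    """
    labels = np.digitize(ff_percent, BIN_EDGES)
    return np.where(muscle_mask, labels, IGNORE)

def split_cases(n_cases=99, n_train=75, seed=0):
    """Random split by shoulder (case), e.g. 75 training and 24 test cases.

    Splitting whole cases keeps voxels from one shoulder out of both sets.
    """
    order = np.random.default_rng(seed).permutation(n_cases)
    return np.sort(order[:n_train]), np.sort(order[n_train:])

# Usage: five muscle voxels and one voxel outside the mask.
ff = np.array([5.0, 12.0, 30.0, 60.0, 90.0, 40.0])
mask = np.array([True, True, True, True, True, False])
labels = fat_classes(ff, mask)  # classes 0, 1, 2, 3, 4 and -1 (ignored)
train_ids, test_ids = split_cases()
```

A per-case split matters here because neighbouring voxels from the same shoulder are highly correlated; mixing them across training and test sets would make test performance look better than it is.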

Sharper Numbers, Muscle by Muscle

Once the network learned this task, the researchers could convert its voxel-by-voxel predictions into an average fat percentage for each muscle. Compared with the reference values from Dixon imaging, the errors were small: typically within about 1–2 percentage points, with the largest errors around 2–4 percentage points depending on the muscle and scan direction. Crucially, this multi-level approach clearly outperformed a traditional “binary” method that classifies each voxel as either all fat or all muscle based on a simple threshold. That older style of measurement underestimated overall fat content by around 6 percentage points, which in some muscles amounted to roughly half of the true fat. The new method also captured how fat is distributed along each muscle, revealing that while the level averaged across patients may stay fairly stable along the muscle, individual patients can show strong local variations that a single slice would miss.
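Why a binary threshold tends to underestimate fat can be illustrated with a toy calculation. The sketch assumes each predicted class is converted back to a representative percentage (here the midpoints of hypothetical bins 0/10/25/50/75/100 %; the summary does not say how the authors did this), compares that with a binary rule that counts a voxel as 100 % fat only above a hypothetical 50 % threshold, and computes a slice-by-slice profile along one axis of the muscle. All names and values are illustrative, not the study's method or data.

```python
import numpy as np

# Hypothetical representative fat % per class (midpoints of assumed bins).
CLASS_VALUES = np.array([5.0, 17.5, 37.5, 62.5, 87.5])

def multilevel_fat_percent(pred_classes, mask):
    """Mean muscle fat % from per-voxel class predictions (0..4)."""
    return float(CLASS_VALUES[pred_classes[mask]].mean())

def binary_fat_percent(ff_percent, mask, threshold=50.0):
    """Binary method: each voxel counts as 100 % fat or 0 %, by threshold."""
    return float(100.0 * np.mean(ff_percent[mask] >= threshold))

def fat_profile(pred_classes, mask, axis=0):
    """Mean fat % per slice along `axis`; NaN where a slice has no muscle."""
    other = tuple(i for i in range(mask.ndim) if i != axis)
    values = np.where(mask, CLASS_VALUES[np.clip(pred_classes, 0, 4)], 0.0)
    counts = mask.sum(axis=other)
    sums = values.sum(axis=other)
    return np.where(counts > 0, sums / np.maximum(counts, 1), np.nan)

# Toy muscle: 8 voxels at 20 % fat and 2 voxels at 60 % fat (true mean 28 %).
ff = np.array([20.0] * 8 + [60.0] * 2)
mask = np.ones(ff.shape, dtype=bool)
pred = np.digitize(ff, [10.0, 25.0, 50.0, 75.0])  # stand-in for network output
multi = multilevel_fat_percent(pred, mask)  # 26.5 %
binary = binary_fat_percent(ff, mask)       # 20.0 %
```

In this toy case the multi-level estimate (26.5 %) stays close to the true 28 %, while the binary rule (20 %) discards all the fat held in the many partly fatty voxels below the threshold. This illustrates the direction of the reported bias; it does not reproduce the study's numbers.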

What This Could Mean for Patients

For people facing rotator cuff surgery, the difference between a rough visual score and a precise 3D measurement may translate into a clearer prognosis and more tailored treatment. This work shows that a deep learning algorithm can turn the standard shoulder MRIs already collected in clinics into near-quantitative fat maps, without extra scan time or special equipment. Although the method still needs testing on more diverse scanners and hospitals, it offers a path toward automated, consistent assessment of muscle quality. In the future, such detailed maps of where fat sits within a muscle could help surgeons decide when a repair is likely to succeed, refine surgical techniques, and ultimately improve outcomes for patients with painful shoulder tears.

Citation: Hess, H., Oswald, A., Daneshvar, K. et al. Quantitative fat-fraction analysis of the rotator cuff muscles on clinical sagittal and coronal T1-weighted MRI using deep learning algorithms. Sci Rep 16, 8821 (2026). https://doi.org/10.1038/s41598-026-38108-3

Keywords: rotator cuff, muscle fat, MRI, deep learning, shoulder surgery