Clear Sky Science

UncerTrans: uncertainty-aware temporal transformer for early action prediction

Why seeing actions early can keep us safe

Imagine a home robot that can tell, from just the first flick of a wrist, whether someone is about to pour hot water safely into a mug or accidentally knock the kettle over. In factories, hospitals, and smart homes, machines increasingly share space with people, and reacting only after an accident starts is too late. This paper introduces UncerTrans, a new AI system that not only predicts what a person is likely to do based on the very beginning of an action, but also tells us how sure it is about its own guess—an ability that is vital when human safety is on the line.

From watching to forecasting human actions

Most current computer-vision systems recognize what someone is doing only after the action is nearly finished: they classify a complete video clip as “cutting vegetables” or “picking up a cup.” That’s helpful for later analysis, but not for preventing burns, collisions, or falls. Early action prediction tackles a harder problem: deciding what full action is coming after seeing only 10–20% of it. The challenge is that many actions look similar at the start—reaching toward a kettle might mean pouring a drink or bumping it over—so a system must work with little information and still avoid dangerous mistakes.

Teaching a machine to focus on the right moments

UncerTrans addresses this by using a temporal transformer, a modern neural network architecture originally developed for language. Instead of reading words in a sentence, it looks at short snippets of video over time. The model breaks an early action sequence into a handful of segments and uses an attention mechanism to decide which moments matter most. Recent frames are given extra weight, echoing our intuition that the latest movement usually reveals the clearest intent. This design lets the system pick up both fine details, like finger motion, and broader patterns, like the path of an arm, even when it sees only a fraction of the full action.
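The recency-weighted attention described above can be illustrated with a small sketch. This is not the authors' implementation: the linear recency bonus, the `recency_strength` parameter, and both function names are assumptions made for illustration, and a real temporal transformer would learn its relevance scores rather than receive them as input.

```python
import math

def recency_weighted_attention(scores, recency_strength=0.5):
    """Turn per-segment relevance scores into attention weights.

    A linear bonus (hypothetical, for illustration) is added so that
    later segments receive more weight before the softmax is applied.
    """
    n = len(scores)
    biased = [s + recency_strength * i / max(n - 1, 1)
              for i, s in enumerate(scores)]
    # Numerically stable softmax: subtract the maximum before exponentiating.
    peak = max(biased)
    exps = [math.exp(b - peak) for b in biased]
    total = sum(exps)
    return [e / total for e in exps]

def pool_segments(features, weights):
    """Weighted average of per-segment feature vectors."""
    dim = len(features[0])
    return [sum(w * f[d] for w, f in zip(weights, features))
            for d in range(dim)]

# Four equally relevant segments: the recency bonus alone orders the weights.
weights = recency_weighted_attention([0.0, 0.0, 0.0, 0.0], recency_strength=1.0)
summary = pool_segments([[1.0, 0.0], [0.5, 0.5], [0.2, 0.8], [0.0, 1.0]], weights)
```

With equal relevance scores, the weights grow from the earliest segment to the latest, so the pooled summary leans toward the most recent motion.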

Getting a machine to admit when it is unsure

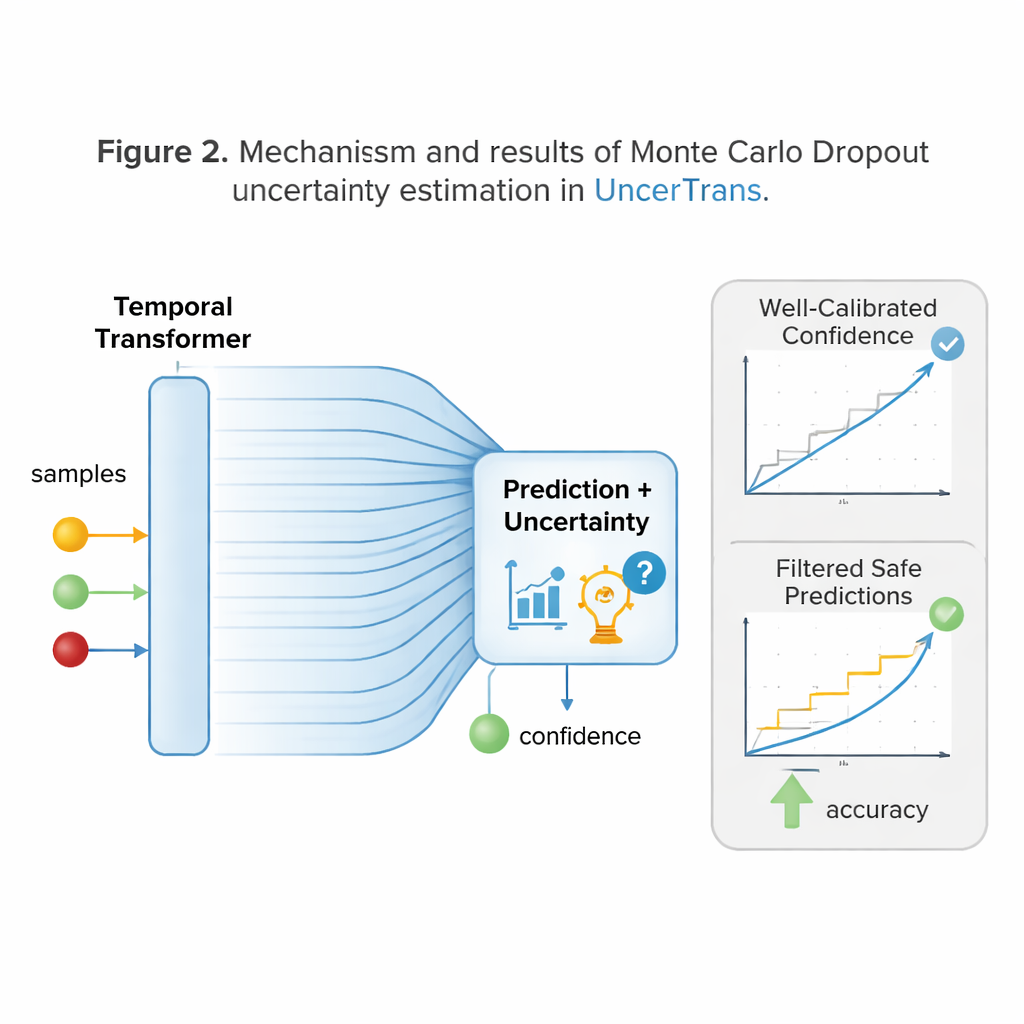

A key innovation of UncerTrans is that it does not stop at a single hard answer. Instead, it runs the same input through the network many times using a technique called Monte Carlo dropout. Each run drops different internal connections at random, producing a slightly different prediction. By looking at how much these predictions disagree, the system can estimate its own uncertainty: tightly clustered predictions signal high confidence, while scattered ones flag doubt. UncerTrans further separates uncertainty caused by limited training experience from uncertainty caused by noise in the video itself, and it adjusts how many test runs it performs on the fly, using more when the first samples look ambiguous and fewer when they already agree.
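A minimal sketch of this sampling loop is shown below. It assumes a common entropy-based decomposition (uncertainty from limited training is measured as the entropy of the averaged prediction minus the average entropy of the individual runs); the summary does not say which decomposition UncerTrans uses. The callable `stochastic_forward` is a hypothetical stand-in for one forward pass with dropout left on, and `min_runs`, `max_runs`, and `epistemic_tol` are illustrative values.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def decompose(samples):
    """Split uncertainty across a list of sampled probability vectors.

    aleatoric: average entropy of each run (noise in the input itself)
    epistemic: extra entropy caused by disagreement between runs
    """
    n = len(samples)
    classes = len(samples[0])
    mean = [sum(s[c] for s in samples) / n for c in range(classes)]
    aleatoric = sum(entropy(s) for s in samples) / n
    epistemic = max(entropy(mean) - aleatoric, 0.0)
    return mean, aleatoric, epistemic

def adaptive_mc_predict(stochastic_forward, min_runs=5, max_runs=30,
                        epistemic_tol=0.05):
    """Draw more samples only while the runs still disagree."""
    samples = [stochastic_forward() for _ in range(min_runs)]
    mean, aleatoric, epistemic = decompose(samples)
    while epistemic > epistemic_tol and len(samples) < max_runs:
        samples.append(stochastic_forward())
        mean, aleatoric, epistemic = decompose(samples)
    return mean, aleatoric, epistemic, len(samples)
```

When every run returns nearly the same probabilities, the loop stops at `min_runs`; when the runs keep contradicting each other, it continues until `max_runs` and reports a high disagreement score.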

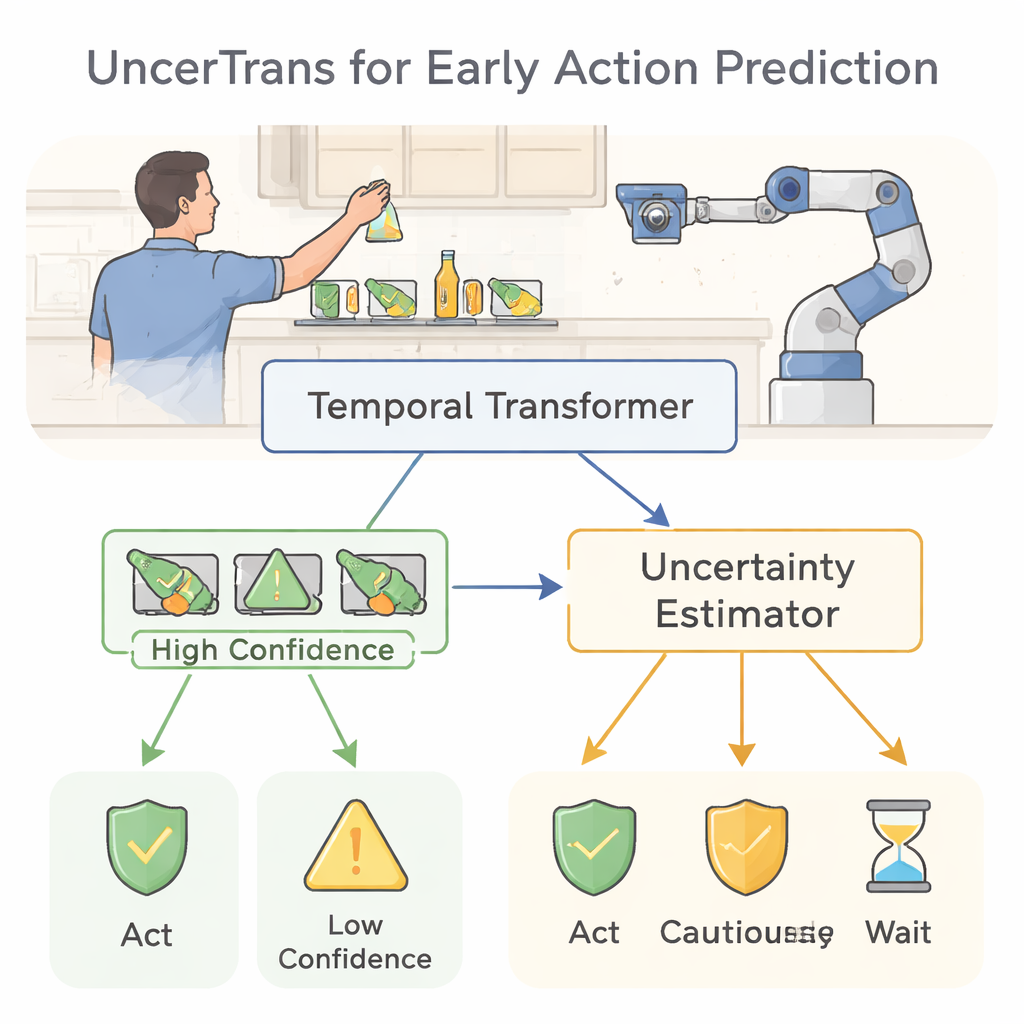

Turning confidence into safer decisions

Knowing when you might be wrong is only useful if it changes your behavior. UncerTrans converts its confidence estimates into practical choices. For predictions with low uncertainty, the system can act decisively—such as triggering a warning or moving a robot arm out of harm’s way. When uncertainty is moderate, it can choose safer, conservative behaviors, like slowing a robot or asking for more information. If uncertainty is very high, it can refuse to decide at all and simply keep watching. Tests on a large “first-person” kitchen video dataset show that UncerTrans predicts upcoming actions more accurately than several strong alternatives, especially when only the first 10% of an action is visible. Notably, when it discards just the 30% most uncertain cases, the accuracy on the remaining predictions rises to about 84%, demonstrating the real value of uncertainty-aware filtering.
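The three-tier behavior and the filtering experiment can be sketched as follows. The thresholds `low` and `high`, the response labels, and both function names are hypothetical; the summary does not give the values or the exact rule UncerTrans uses.

```python
def choose_response(uncertainty, low=0.2, high=0.6):
    """Map an uncertainty score to one of three behaviors.

    The thresholds are illustrative and would need tuning per application.
    """
    if uncertainty < low:
        return "act"                 # e.g. trigger a warning, move the arm
    if uncertainty < high:
        return "act_conservatively"  # e.g. slow down, ask for more input
    return "keep_watching"           # abstain until more frames arrive

def accuracy_after_rejection(records, reject_fraction=0.3):
    """Accuracy on what remains after dropping the most uncertain cases.

    records: list of (uncertainty, is_correct) pairs.
    """
    ranked = sorted(records, key=lambda r: r[0])
    keep = len(ranked) - int(len(ranked) * reject_fraction)
    kept = ranked[:keep]
    if not kept:
        raise ValueError("no predictions left after rejection")
    return sum(1 for _, correct in kept if correct) / len(kept)

# Toy data: the errors are concentrated among the uncertain predictions.
toy = [(0.1 * (i + 1), i < 6) for i in range(10)]
overall = accuracy_after_rejection(toy, reject_fraction=0.0)
filtered = accuracy_after_rejection(toy, reject_fraction=0.3)
```

On this toy data, accuracy rises once the most uncertain 30% is discarded, which is the same kind of effect the reported filtering result describes; the numbers here are invented and say nothing about the paper's actual results.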

What this means for everyday human–robot teamwork

To a non-specialist, the message is straightforward: UncerTrans is a step toward machines that not only guess our next move from limited clues but also know when those guesses are trustworthy. By combining a time-sensitive vision model with an internal “confidence meter,” the system can react faster and more safely in cluttered, real-world environments such as kitchens, factories, and care facilities. While the method still carries computational costs and will need further refinement, it offers a promising blueprint for future robots and monitoring systems that anticipate dangers early, respond cautiously when uncertain, and ultimately fit more safely into human spaces.

Citation: Zhai, X., Liu, Y. UncerTrans: uncertainty-aware temporal transformer for early action prediction. Sci Rep 16, 7068 (2026). https://doi.org/10.1038/s41598-026-38107-4

Keywords: early action prediction, human-robot collaboration, uncertainty in AI, transformer vision models, safe intelligent systems