Clear Sky Science

Research on positioning method in parcel sorting in disordered logistics

Why Smarter Parcel Sorting Matters

Every online order you place sets off a hidden ballet of boxes in massive logistics centers. Before a parcel can speed toward your doorstep, it must be found, picked up, measured, scanned and routed—often from a chaotic pile of mixed packages. Today, much of that first "unpacking the chaos" still relies on human workers doing repetitive, tiring tasks. This paper presents a new vision-based method that helps robots reliably find where to grab each parcel in a jumble, moving one step closer to fully automated, faster and less labor‑intensive parcel sorting.

From Messy Piles to Robot-Friendly Data

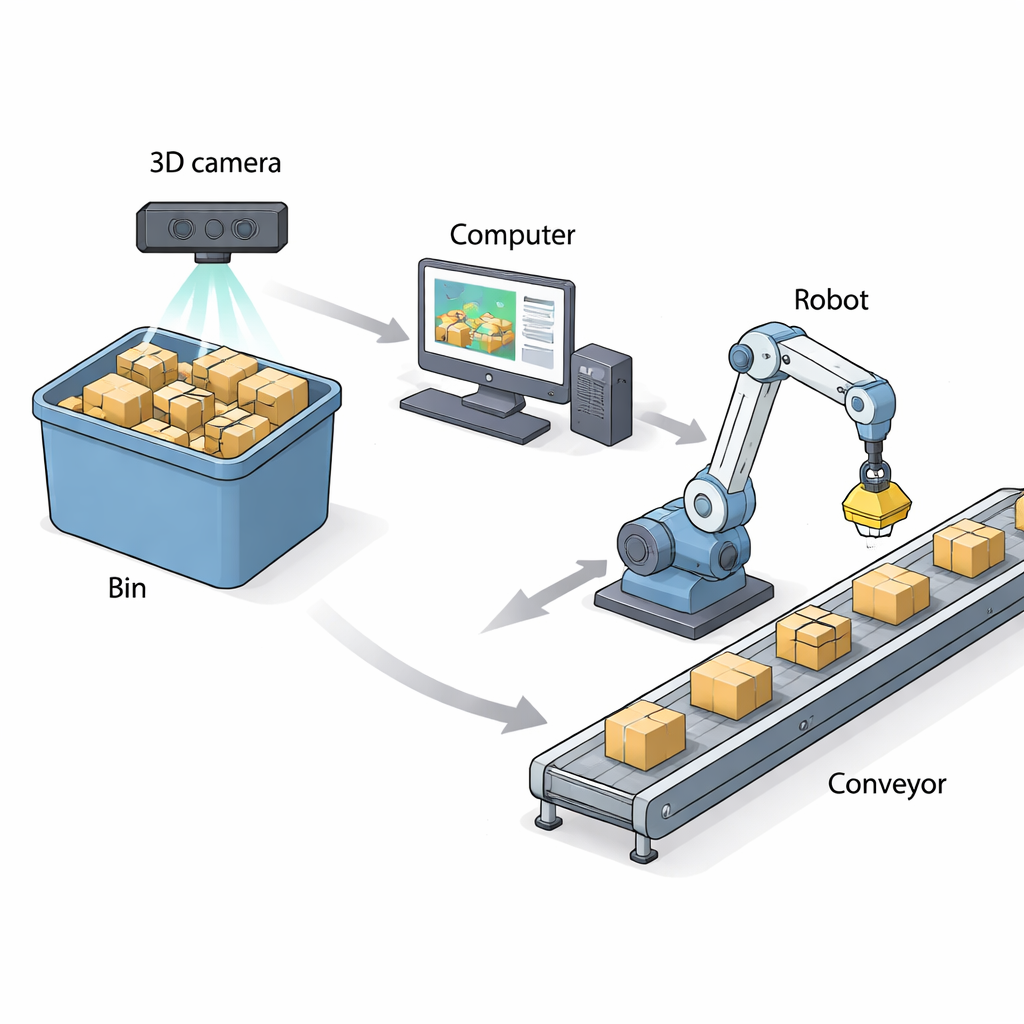

The researchers focus on what they call "disordered" logistics parcels: everyday boxes and soft mailers tossed into bins in no particular order, sometimes squashed or bent by stacking. To replace human workers in this messy environment, a robot first needs to know exactly where to reach and how to orient its gripper on the surface of a target package. The team builds a system around a 3D camera that captures both a color image and a depth map of the top layer of parcels. A modern recognition network (based on YOLOv8) spots individual parcels in the color image, while the depth map reveals their three‑dimensional shape. This combination allows the computer to pick the best parcel to grab next—one that is not too occluded and far enough from the bin’s edges—before computing an accurate grasp point.
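For readers who like to see the logic spelled out, the selection step can be sketched in a few lines of Python. This is an illustrative sketch only, not the authors' code. The `Detection` fields, the `pick_target` function, the 20-pixel edge margin, the 20% occlusion limit, the use of the image border as a stand-in for the bin edge, and the rule of preferring the parcel nearest the camera are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Detection:
    """One parcel found in the color image (hypothetical structure)."""
    x_min: float      # bounding box, in pixels
    y_min: float
    x_max: float
    y_max: float
    depth_mm: float   # mean camera-to-surface distance from the depth map
    occlusion: float  # fraction of the parcel hidden by others, 0.0 to 1.0


def pick_target(detections: List[Detection],
                image_width: float,
                image_height: float,
                edge_margin: float = 20.0,   # assumed value, in pixels
                max_occlusion: float = 0.2   # assumed value
                ) -> Optional[Detection]:
    """Return the parcel to grab next, or None if no parcel qualifies."""
    candidates = [
        d for d in detections
        if d.occlusion <= max_occlusion
        and d.x_min >= edge_margin
        and d.y_min >= edge_margin
        and d.x_max <= image_width - edge_margin
        and d.y_max <= image_height - edge_margin
    ]
    if not candidates:
        return None
    # Assumption: the parcel closest to the camera lies on top of the pile.
    return min(candidates, key=lambda d: d.depth_mm)


parcels = [
    Detection(5, 40, 120, 160, 600.0, 0.0),     # too close to the edge
    Detection(200, 100, 330, 220, 650.0, 0.5),  # heavily occluded
    Detection(300, 250, 420, 360, 700.0, 0.1),  # acceptable
    Detection(100, 300, 220, 420, 720.0, 0.0),  # acceptable, but deeper
]
target = pick_target(parcels, image_width=640, image_height=480)
print(target)
```

In this made-up scene the first two parcels are rejected by the filters, and the third is chosen because it is the nearer of the two that remain.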

Finding a Stable Grasp Point with Three Points and a Shadow

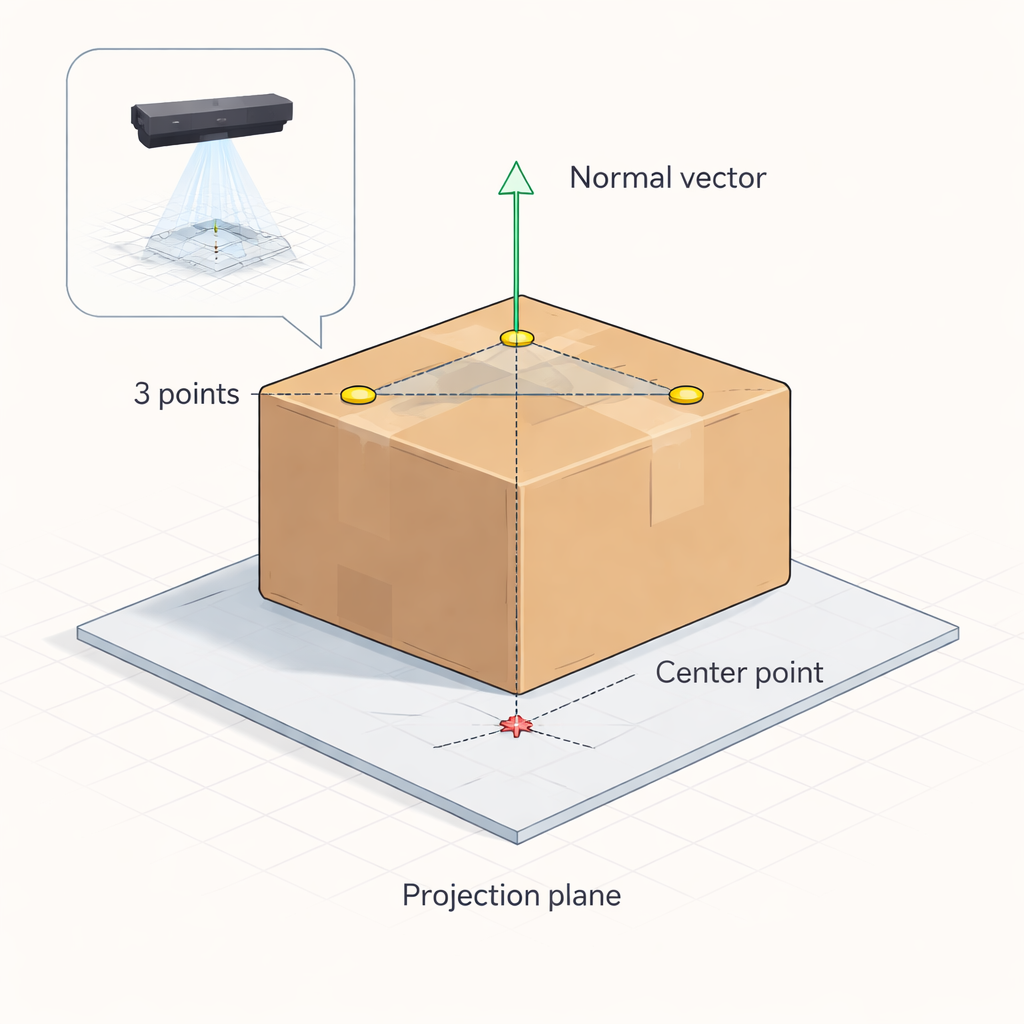

At the heart of the method is a geometric trick the authors call a three‑point orientation–projection centering algorithm. Once a target parcel is chosen, the system randomly selects three points on its upper surface from the depth data. Provided they do not all lie on one straight line, these three points define a plane, just as three pins on a tabletop define that table’s tilt. From this plane, the algorithm calculates a "normal" direction: a straight line sticking out perpendicular to the parcel’s surface. In parallel, the system uses the parcel’s four top corners in the image to infer the geometric center of its projected outline, similar to finding the center of a rectangle’s shadow. Combining the plane’s orientation with this center position yields a precise 3D grasp location and the tilt of the parcel’s top surface, which can then guide a robot’s suction cup or gripper.
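The geometry described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `plane_normal` and `grasp_pose` are hypothetical, all coordinates are assumed to be in one frame with the z axis pointing up, the outline center is taken as the average of the four corners, and its height is found by dropping that center vertically onto the fitted plane.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def plane_normal(p1: Vec3, p2: Vec3, p3: Vec3) -> Vec3:
    """Unit normal of the plane through three non-collinear points."""
    n = _cross(_sub(p2, p1), _sub(p3, p1))
    length = math.sqrt(n[0] ** 2 + n[1] ** 2 + n[2] ** 2)
    if length < 1e-9:
        raise ValueError("the three points are collinear and define no plane")
    n = (n[0] / length, n[1] / length, n[2] / length)
    # Assumed convention: z points up, so flip the normal to point upward.
    return n if n[2] >= 0 else (-n[0], -n[1], -n[2])


def grasp_pose(surface_points: Sequence[Vec3],
               corners_xy: Sequence[Tuple[float, float]]):
    """Return (grasp point, unit normal, tilt in degrees)."""
    p1, p2, p3 = surface_points
    n = plane_normal(p1, p2, p3)
    if abs(n[2]) < 1e-9:
        raise ValueError("surface is vertical; cannot project along z")
    # Center of the projected outline: average of the four corners.
    cx = sum(x for x, _ in corners_xy) / len(corners_xy)
    cy = sum(y for _, y in corners_xy) / len(corners_xy)
    # Height of the plane above (cx, cy), from n . (p - p1) = 0.
    cz = p1[2] - (n[0] * (cx - p1[0]) + n[1] * (cy - p1[1])) / n[2]
    tilt_deg = math.degrees(math.acos(min(1.0, n[2])))
    return (cx, cy, cz), n, tilt_deg


# Made-up example: a top surface that rises 1 mm for every 10 mm along x.
points = [(0.0, 0.0, 100.0), (10.0, 0.0, 101.0), (0.0, 10.0, 100.0)]
corners = [(0.0, 0.0), (20.0, 0.0), (20.0, 10.0), (0.0, 10.0)]
grasp, normal, tilt = grasp_pose(points, corners)
print(grasp, normal, tilt)
```

For this invented surface the grasp point lands at the middle of the outline, about 1 mm above the first sampled point, and the tilt is a little under 6 degrees.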

Handling Squashed and Bulging Packages

Real parcels are not perfect blocks: bubble‑wrapped mailers sag, soft bags bulge, and cardboard boxes can warp under load. A simple flat‑surface assumption would fail in these cases. To address this, the authors extend their math to distinguish between three situations: nearly flat packages, convex (bulging) tops, and concave (sagging) surfaces. By comparing the highest and lowest depth values on a parcel’s surface, the system first decides whether it is significantly deformed. If so, it analyzes how the deformed surface intersects with an imagined reference plane and fits an approximate ellipse to that intersection. From this, it solves for an "optimal" plane that best represents a stable grasping surface—even if the real top sags or bulges—and then projects the key grasp point back onto that plane.
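The first decision in that process, sorting a surface into flat, bulging or sagging, can be illustrated with a short sketch. The article only states that the highest and lowest depth values are compared, so everything else here is an assumption: the function name `classify_top_surface`, the 3 mm tolerance, the idea that heights are measured relative to a reference plane, and the rule that a center higher than the average height means a convex top. The ellipse fitting and the search for an "optimal" plane are not attempted.

```python
from typing import Sequence


def classify_top_surface(heights_mm: Sequence[float],
                         center_height_mm: float,
                         flat_tolerance_mm: float = 3.0) -> str:
    """Label a parcel top as 'flat', 'convex' or 'concave'.

    heights_mm: sampled surface heights above a reference plane
                (larger values are higher); hypothetical input format.
    center_height_mm: height sampled at the middle of the parcel.
    flat_tolerance_mm: assumed threshold, not a value from the paper.
    """
    spread = max(heights_mm) - min(heights_mm)
    if spread <= flat_tolerance_mm:
        return "flat"
    mean_height = sum(heights_mm) / len(heights_mm)
    # Assumed rule: a raised middle means bulging, a sunken middle means sagging.
    return "convex" if center_height_mm > mean_height else "concave"


# Invented samples for three parcels.
carton = classify_top_surface([0.0, 1.0, 0.5, 0.8], center_height_mm=0.6)
stuffed_bag = classify_top_surface([0.0, 0.5, 8.0, 7.5, 1.0], center_height_mm=8.0)
sagging_mailer = classify_top_surface([10.0, 9.5, 2.0, 3.0, 9.0], center_height_mm=2.0)
print(carton, stuffed_bag, sagging_mailer)
```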

Putting the Algorithm to the Test

To check whether the math works in practice, the team built a testbed with a six‑axis industrial robot, a 3D camera and a custom laser‑and‑probe device. First, they marked the true geometric center of each test parcel’s top surface and used two laser beams to pinpoint that physical location in space. Next, they let their vision algorithm compute its own estimate of the same center and commanded the robot to move a second probe to that computed point. By measuring the tiny offset between the two probe tips, they could calculate the positioning error. Tests with both rigid wooden box models and realistic packaging materials—corrugated cartons, bubble mailers and plastic bags, in sizes up to 250×250 mm—showed a maximum positioning error of about 1.7 millimeters and average errors close to 1 millimeter per axis. The full computation for each parcel took roughly 17.5 milliseconds, fast enough for high‑throughput sorting lines.
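The error bookkeeping behind such a test is simple arithmetic, sketched below. The function names and the trial numbers are invented for illustration and are not the paper's data. The sketch also assumes that the "maximum" error is the straight-line distance between the two probe tips, a detail this summary does not spell out.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def axis_errors(reference: Point, estimate: Point) -> Tuple[float, ...]:
    """Absolute offset between the two probe tips along x, y and z (mm)."""
    return tuple(abs(e - r) for r, e in zip(reference, estimate))


def summarize(trials: Sequence[Tuple[Point, Point]]):
    """Return (mean error per axis, largest straight-line error) in mm."""
    errors = [axis_errors(ref, est) for ref, est in trials]
    mean_per_axis = tuple(
        sum(e[i] for e in errors) / len(errors) for i in range(3)
    )
    max_distance = max(math.sqrt(sum(c * c for c in e)) for e in errors)
    return mean_per_axis, max_distance


# Invented measurements: (laser-marked center, vision-computed center), in mm.
trials = [
    ((100.0, 50.0, 20.0), (100.9, 49.2, 20.5)),
    ((150.0, 80.0, 35.0), (149.0, 81.0, 34.0)),
    ((120.0, 60.0, 25.0), (120.6, 60.8, 25.0)),
]
mean_per_axis, max_distance = summarize(trials)
print(mean_per_axis, max_distance)
```

Reporting both numbers is useful because a method can look good on average along each axis while still producing an occasional larger miss in space.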

What This Means for Future Warehouses

In plain terms, the study demonstrates that a robot equipped with a 3D camera and this three‑point, projection‑based algorithm can reliably figure out where and how to grab packages from a messy bin with millimeter‑level precision. While strong deformations in very soft packages still reduce accuracy somewhat, the method remains robust enough for realistic warehouse conditions. As parcel volumes continue to climb and labor shortages persist, such algorithms could enable safer, less monotonous work by shifting the heaviest and most repetitive sorting tasks from people to machines—while helping to keep the growing world of e‑commerce running smoothly.

Citation: Han, Y., Zhang, F., He, A. et al. Research on positioning method in parcel sorting in disordered logistics. Sci Rep 16, 7524 (2026). https://doi.org/10.1038/s41598-026-38092-8

Keywords: 3D vision, parcel sorting, robotic picking, logistics automation, object localization