Clear Sky Science

Integrating simplified Swin-T with modified EFS-Net for attention-guided underwater pipelines segmentation in complex underwater environments

Why watching the seafloor matters

Hidden beneath the waves, vast networks of pipelines and cables carry the oil, gas, and electric power that modern societies rely on. If these underwater pipelines crack, corrode, or shift, the result can be costly shutdowns and severe pollution. Today, much of the inspection work is done by human operators who watch hours of murky video from underwater robots. This paper presents a new artificial intelligence (AI) system that can automatically pick out pipelines in difficult underwater images, even when the images are dim or speckled with “sea snow,” or the pipes are partly buried in sand. This step toward reliable, automated inspection could make offshore energy and infrastructure safer and cheaper to maintain.

Seeing clearly in a murky world

Underwater imagery is notoriously hard for computers to interpret. Light fades quickly with depth, colors shift toward green and blue, and floating particles create haze and snow-like specks. Classical image-processing techniques, which rely on sharp edges and clean contrast, tend to fail when the pipeline is covered by sand, obscured by plants, or blurred by fog. Deep learning has improved matters, and several popular neural networks can already spot pipes in specific datasets. Yet those systems usually specialize in one type of water condition or camera setup. When they face a new environment (different water, lighting, or background), their accuracy drops sharply. The central challenge is to build a model that is both accurate and adaptable while still efficient enough to run in real-world inspection systems.

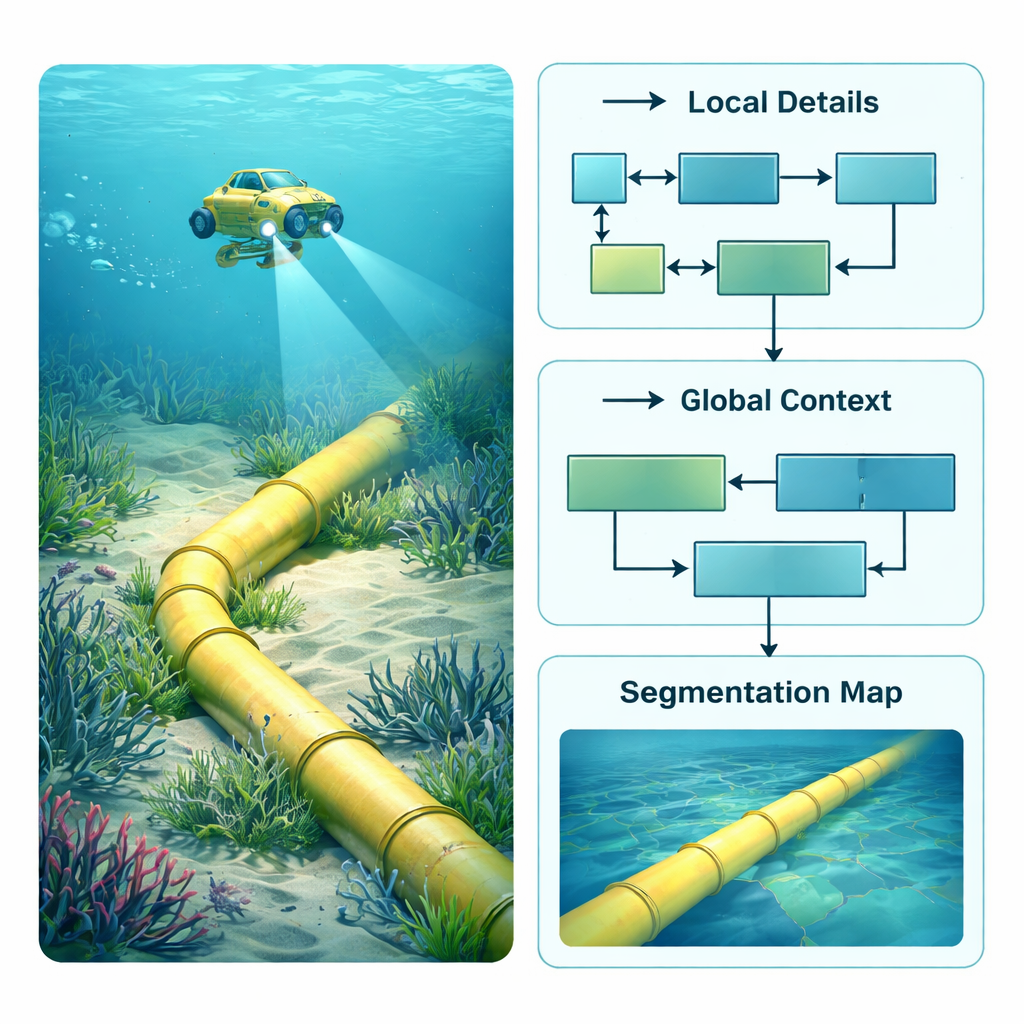

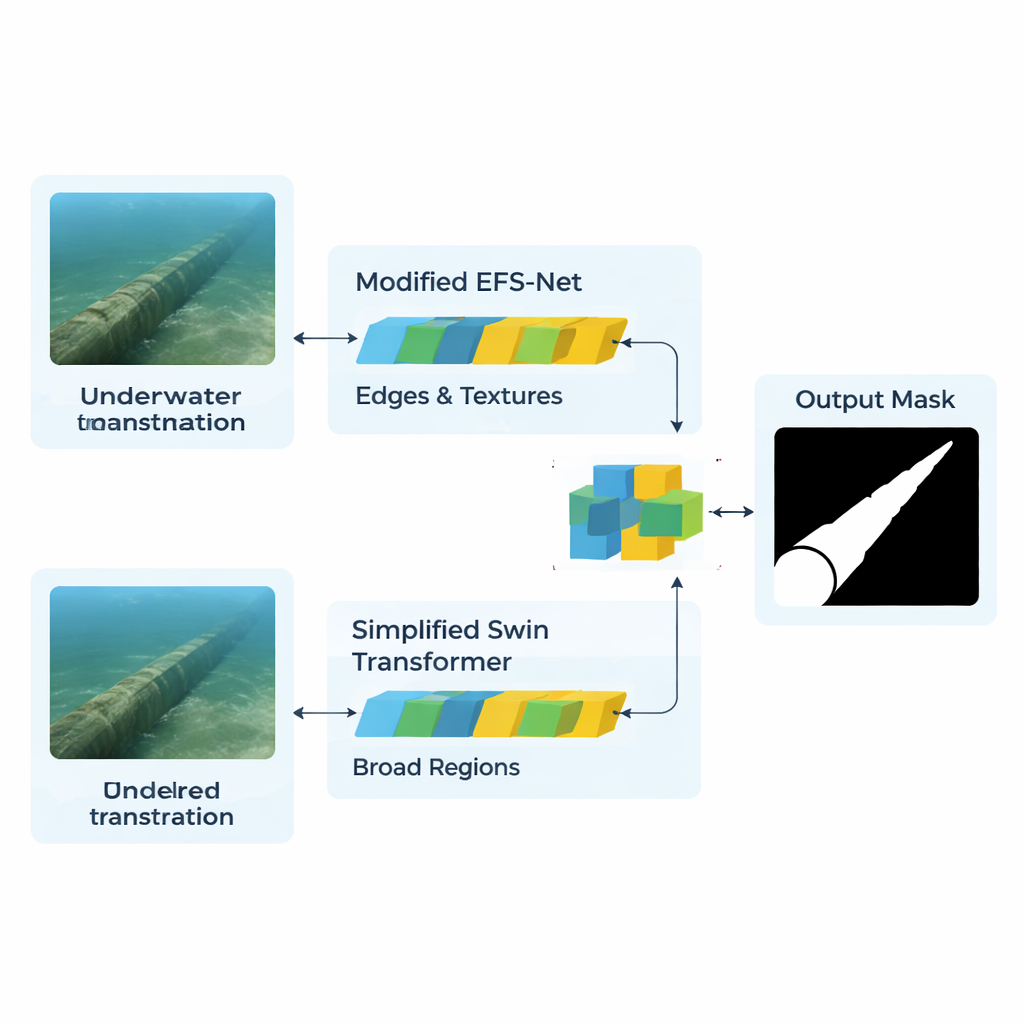

A two-brain approach to underwater images

The authors tackle this by building a hybrid AI architecture that combines two very different “ways of seeing.” One branch, based on a streamlined version of the Swin Transformer, acts like a wide-angle observer. It scans the whole frame to understand large-scale patterns, such as the overall route of a pipe across the seabed. The second branch, adapted from a model called EFS-Net and powered by an EfficientNet backbone, behaves like a magnifying glass. It concentrates on fine details—edges, textures, and thin structures that reveal where the pipeline begins and the sand or vegetation ends. Both branches process the same resized images and convert them into internal feature maps that describe what the network thinks might be meaningful structures in each region of the picture.

Letting attention decide what matters

Simply stacking the outputs of these two branches would create a tangle of redundant information. Instead, the model uses an “attention” mechanism to decide, pixel by pixel, which details are worth focusing on. A three-head cross-attention module compares the features from the detail-focused branch with the features from the context-focused branch. In essence, the detail branch asks targeted questions—“Is this edge part of a pipeline?”—while the context branch supplies global clues—“Does a line in this position and direction make sense as part of a pipe?” An additional refinement step, called CBAM, further boosts the signal from likely pipeline regions and dampens background noise such as rocks, algae, or suspended particles. A decoder network then gradually rebuilds a full-size mask that marks each pixel as pipeline or not.
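To make the cross-attention idea concrete, here is a minimal NumPy sketch, not the authors' implementation. It assumes the detail branch supplies the queries and the context branch supplies the keys and values, as the description above suggests. The function name `cross_attention`, the feature sizes, and the random projection matrices (standing in for learned weights) are all hypothetical, and the CBAM refinement and decoder are left out.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the max for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(detail, context, num_heads=3, seed=0):
    """Fuse detail features (N, D) with context features (M, D).

    Queries come from the detail branch; keys and values come from the
    context branch. Random matrices stand in for learned projections.
    """
    n, d = detail.shape
    assert d % num_heads == 0, "feature size must divide evenly across heads"
    head_dim = d // num_heads
    rng = np.random.default_rng(seed)
    w_q, w_k, w_v, w_o = (rng.standard_normal((d, d)) / np.sqrt(d) for _ in range(4))

    def split_heads(x):
        # (T, D) -> (H, T, head_dim)
        return x.reshape(x.shape[0], num_heads, head_dim).transpose(1, 0, 2)

    q = split_heads(detail @ w_q)
    k = split_heads(context @ w_k)
    v = split_heads(context @ w_v)

    # Each detail position scores every context position, per head.
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(head_dim)  # (H, N, M)
    weights = softmax(scores, axis=-1)
    heads = weights @ v                                     # (H, N, head_dim)

    # Concatenate the heads again and apply the output projection.
    merged = heads.transpose(1, 0, 2).reshape(n, d)
    return merged @ w_o, weights

# Hypothetical sizes: 16 detail positions, 4 context positions, 12 channels.
detail = np.random.default_rng(1).standard_normal((16, 12))
context = np.random.default_rng(2).standard_normal((4, 12))
fused, weights = cross_attention(detail, context)
print(fused.shape, weights.shape)
```

Each row of `weights` sums to 1, so every detail position receives a weighted blend of the context features. In a trained network those weights would reflect which global cues support a local edge being part of a pipe.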

Putting the system to the test

To judge whether this design works in practice, the researchers assembled a large and demanding dataset named HOMOMO. It contains more than 120,000 color images of real seabed pipelines taken along 1.2 kilometers of pipe under varied and often hostile conditions: low light, sea fog, floating “snow,” sand drifts, and heavy plant growth. They trained their model on part of this collection and then compared it with widely used systems such as UNet, DeepLab, SwinUNet, TransUNet, Mask2Former, and several versions of the YOLO object detector. On HOMOMO, their hybrid model segmented pipeline pixels with a mean intersection-over-union (IoU, the overlap between the predicted and true regions divided by their combined area) of about 98%, substantially higher than the best competing method. Just as importantly, when tested without retraining on two very different image sources, a synthetic Roboflow dataset and real-world YouTube footage, the model still performed strongly, showing that it can cope with new cameras and water conditions.
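The intersection-over-union score quoted above can be computed for a binary pipeline mask as in the short sketch below. The helper names `iou` and `mean_iou` are illustrative, and averaging over the two classes (pipeline and background) is an assumption. This summary does not say how the paper averages the score.

```python
import numpy as np

def iou(pred, target):
    """Intersection-over-union of two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    union = np.logical_or(pred, target).sum()
    if union == 0:
        # Both masks are empty; treating this as a perfect match is a convention.
        return 1.0
    return float(np.logical_and(pred, target).sum() / union)

def mean_iou(pred, target):
    """Average IoU over the pipeline class and the background class (assumed convention)."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    return (iou(pred, target) + iou(~pred, ~target)) / 2

# Toy 2x4 masks: 1 marks a pipeline pixel, 0 marks background.
target = [[0, 1, 1, 0],
          [0, 1, 1, 0]]
pred = [[0, 1, 1, 1],
        [0, 1, 0, 0]]

print(iou(pred, target))       # 3 shared pixels / 5 pixels in the union = 0.6
print(mean_iou(pred, target))  # the background IoU is also 3 / 5, so the mean is 0.6
```

A score near 98% means the predicted and true regions overlap almost completely. Even the single missed pixel and single false alarm in this toy example pull the score down to 60%.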

What this means for the real ocean

For non-specialists, the takeaway is that this AI system can reliably outline underwater pipelines in video frames that are too noisy and inconsistent for conventional methods. By blending a global view of the scene with a sharp eye for edges and textures, and by using attention to fuse these perspectives, the model achieves high accuracy without requiring massive computing power. In practical terms, such a tool could help autonomous robots continuously monitor long stretches of subsea infrastructure, flagging possible damage or burial for human review. While it still struggles with pipes that are extremely thin or completely hidden, the approach marks an important step toward safer, more automated inspection of the hidden plumbing that supports modern energy and communication networks.

Citation: Hosseini, N., Mohanna, F. & Moghimi, M.K. Integrating simplified Swin-T with modified EFS-Net for attention-guided underwater pipelines segmentation in complex underwater environments. Sci Rep 16, 6987 (2026). https://doi.org/10.1038/s41598-026-38081-x

Keywords: underwater pipelines, image segmentation, deep learning, marine inspection, transformer networks