Clear Sky Science

Learning-aided Artificial Bee Colony with neural knowledge transfer for global optimization

Smarter digital swarms for hard problems

Many of today’s toughest challenges—from tuning solar panels to planning delivery routes—boil down to searching through enormous possibility spaces for the best answer. Swarm-inspired algorithms, which mimic how bees or birds explore their environment, are widely used for this kind of search. But classic swarms mostly rely on chance rather than memory. This paper introduces a way to make a popular bee-based algorithm genuinely “learn” from experience, turning it from a clever guesser into a data-driven problem solver.

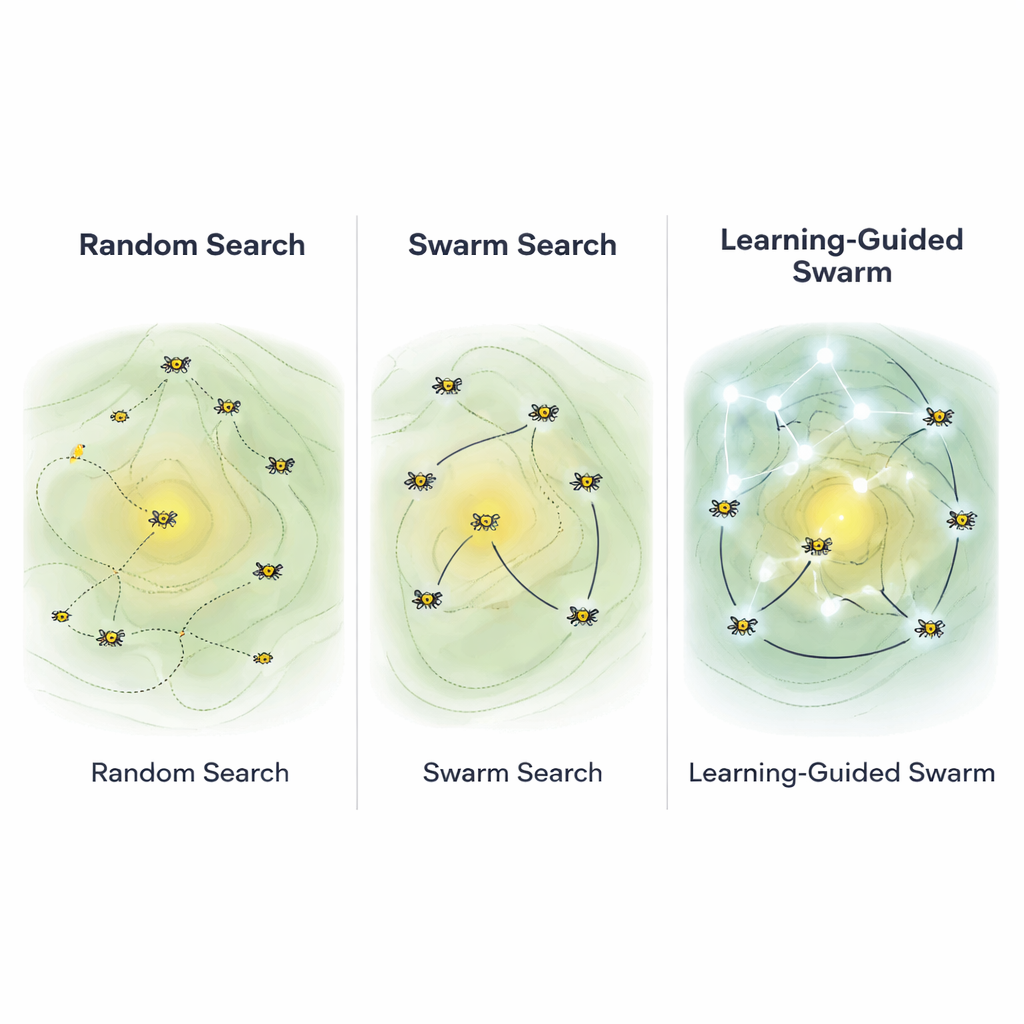

From blind wandering to guided exploration

Traditional search methods can be imagined as hikers stumbling around a foggy mountain range, hoping to find the highest peak. A basic “random search” samples points anywhere and improves only very slowly. More advanced evolutionary algorithms, including the Artificial Bee Colony (ABC) method, use rules inspired by natural selection and foraging: some virtual bees explore new areas, others exploit good spots, and poor locations are abandoned. Yet even these methods largely ignore the rich history of what worked before. Each new move is chosen with little regard for the patterns of past success, which can slow progress or leave the search stuck on a mediocre hill instead of the true summit.
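To see the baseline concretely, here is a minimal Python sketch of the classic ABC loop, written from the standard textbook description rather than from this paper's code. The function name `abc_minimize` and its parameters (`n_food`, `limit`, `max_cycles`) are hypothetical. Employed bees each try one neighboring position, onlooker bees revisit good sources more often, and a scout replaces any source that has failed to improve for more than `limit` trials. Nothing in this loop remembers which kinds of moves succeeded, which is the gap the paper sets out to fill.

```python
import random


def abc_minimize(f, dim, bounds, n_food=10, limit=20, max_cycles=200, seed=0):
    """Classic Artificial Bee Colony (ABC) minimizer: employed, onlooker, scout phases.

    Illustrative sketch; names and defaults are hypothetical, not from the paper.
    """
    rng = random.Random(seed)
    lo, hi = bounds

    def random_solution():
        return [rng.uniform(lo, hi) for _ in range(dim)]

    foods = [random_solution() for _ in range(n_food)]
    costs = [f(x) for x in foods]
    trials = [0] * n_food  # how long each source has gone without improving
    b = min(range(n_food), key=lambda j: costs[j])
    best_x, best_c = foods[b][:], costs[b]

    def try_neighbor(i):
        nonlocal best_x, best_c
        # Move one coordinate of source i relative to a random partner k != i.
        k = rng.choice([j for j in range(n_food) if j != i])
        d = rng.randrange(dim)
        phi = rng.uniform(-1.0, 1.0)
        cand = foods[i][:]
        cand[d] = min(hi, max(lo, cand[d] + phi * (cand[d] - foods[k][d])))
        c = f(cand)
        if c < costs[i]:  # greedy selection: keep the better of old and new
            foods[i], costs[i], trials[i] = cand, c, 0
            if c < best_c:
                best_x, best_c = cand[:], c
        else:
            trials[i] += 1

    for _ in range(max_cycles):
        for i in range(n_food):  # employed bees: one trial per source
            try_neighbor(i)
        # Onlooker bees favour good sources (standard ABC fitness transform).
        fits = [1.0 / (1.0 + c) if c >= 0 else 1.0 + abs(c) for c in costs]
        for _ in range(n_food):
            try_neighbor(rng.choices(range(n_food), weights=fits)[0])
        # Scout bee: abandon the most stagnant source once it exceeds the limit.
        s = max(range(n_food), key=lambda j: trials[j])
        if trials[s] > limit:
            foods[s] = random_solution()
            costs[s], trials[s] = f(foods[s]), 0
            if costs[s] < best_c:
                best_x, best_c = foods[s][:], costs[s]

    return best_x, best_c
```

For example, `abc_minimize(lambda v: sum(t * t for t in v), dim=2, bounds=(-5.0, 5.0))` searches the bowl-shaped sphere function, whose best value is 0 at the origin. The best solution is tracked separately so that a scout abandoning a stagnant source can never discard the best point found so far.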

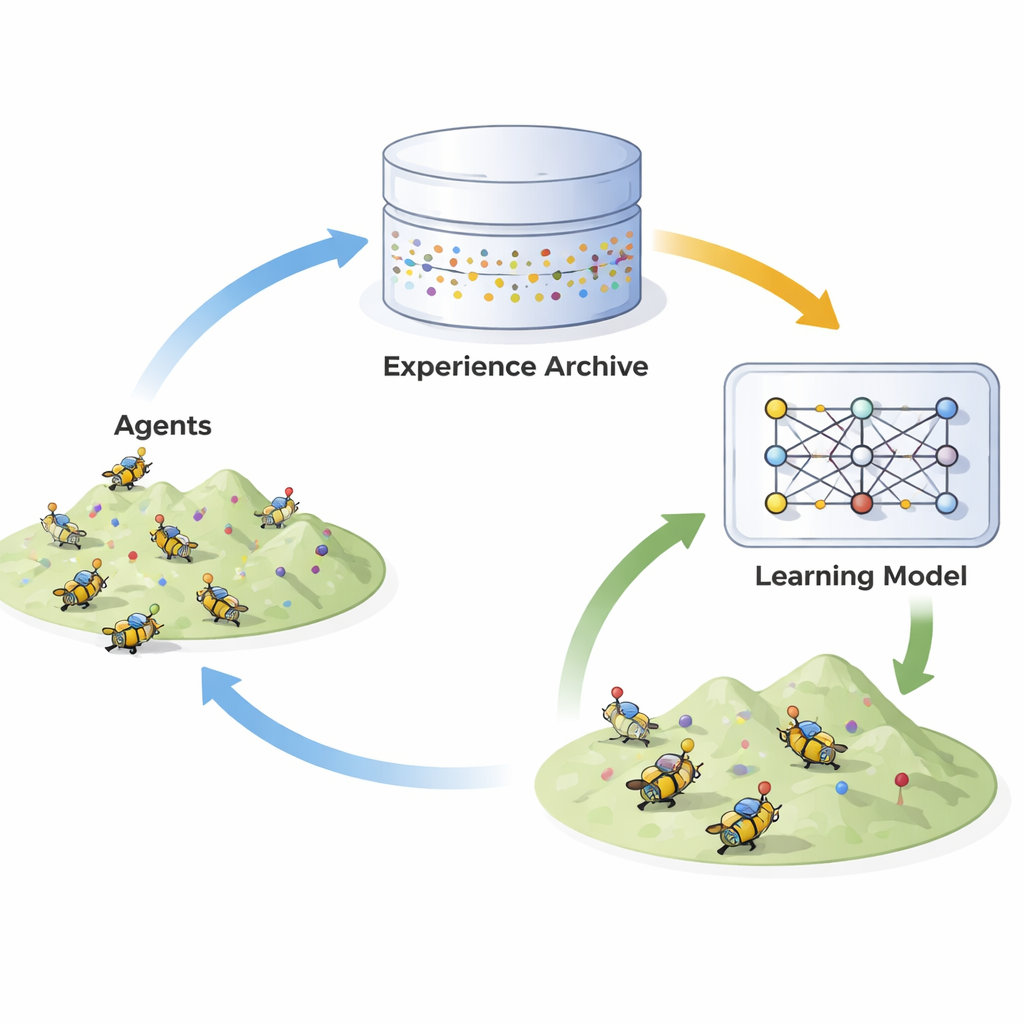

Teaching bees to remember and predict

The authors propose Learning-Aided Artificial Bee Colony (LA-ABC), which upgrades the standard bee algorithm with a simple artificial neural network—a kind of mathematical brain. As the digital bees search, the algorithm records “successful moves”: whenever a new candidate solution clearly improves upon an older one, the pair is stored in a rolling archive. These examples form an experience bank that captures how good solutions tend to evolve. The neural network is trained online, during the run, to learn a mapping from “before” to “after”: given a promising solution, it predicts how to nudge it toward an even better one.
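The experience bank and the before-to-after mapping can be pictured with the sketch below. It is an illustrative assumption, not the authors' implementation: the class names `SuccessArchive` and `TinyMLP`, the archive capacity, the one-hidden-layer tanh architecture, and the choice to predict a displacement (so the output is `x + delta(x)`) are all hypothetical. A `deque` with `maxlen` gives the rolling behavior for free, and plain stochastic gradient descent stands in for whatever training rule the paper actually uses.

```python
import math
import random
from collections import deque


class SuccessArchive:
    """Hypothetical rolling store of (before, after) pairs where 'after' improved."""

    def __init__(self, capacity=200):
        self.pairs = deque(maxlen=capacity)  # oldest pairs drop out automatically

    def record(self, before, after, cost_before, cost_after, min_gain=1e-12):
        # Keep only clear improvements (minimization: lower cost is better).
        if cost_before - cost_after > min_gain:
            self.pairs.append((list(before), list(after)))


class TinyMLP:
    """Hypothetical one-hidden-layer tanh net mapping x -> x + delta(x), trained by SGD."""

    def __init__(self, dim, hidden=8, lr=0.01, seed=0):
        rng = random.Random(seed)
        self.dim, self.hidden, self.lr = dim, hidden, lr
        self.w1 = [[rng.gauss(0, 0.3) for _ in range(dim)] for _ in range(hidden)]
        self.b1 = [0.0] * hidden
        self.w2 = [[rng.gauss(0, 0.3) for _ in range(hidden)] for _ in range(dim)]
        self.b2 = [0.0] * dim

    def _forward(self, x):
        h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
             for row, b in zip(self.w1, self.b1)]
        delta = [sum(w * hj for w, hj in zip(row, h)) + b
                 for row, b in zip(self.w2, self.b2)]
        return h, delta

    def predict(self, x):
        _, delta = self._forward(x)
        return [xi + di for xi, di in zip(x, delta)]

    def train_step(self, x, target):
        # Squared-error loss on the predicted displacement versus (target - x).
        h, delta = self._forward(x)
        err = [d - (t - xi) for d, t, xi in zip(delta, target, x)]
        # Backpropagate into the hidden layer before w2 is modified.
        grad_h = [sum(err[o] * self.w2[o][j] for o in range(self.dim)) * (1 - h[j] ** 2)
                  for j in range(self.hidden)]
        for o in range(self.dim):
            for j in range(self.hidden):
                self.w2[o][j] -= self.lr * err[o] * h[j]
            self.b2[o] -= self.lr * err[o]
        for j in range(self.hidden):
            for i in range(self.dim):
                self.w1[j][i] -= self.lr * grad_h[j] * x[i]
            self.b1[j] -= self.lr * grad_h[j]

    def fit(self, archive, epochs=5):
        # Online training: a few passes over the current archive contents.
        for _ in range(epochs):
            for before, after in archive.pairs:
                self.train_step(before, after)
```

During a run, one might call `net.fit(archive)` for a few epochs after each cycle so the model keeps up with the latest successes; retraining on a small, bounded archive is what keeps such a learning step cheap.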

Two paths: chance versus learned guidance

Once this learning engine is in place, LA-ABC runs in two alternating modes. In one mode, the bees behave like the original ABC, using random-like rules to preserve exploration and avoid overconfidence. In the other mode, the algorithm calls on its learned model. For a chosen bee, the neural network suggests an improved location, and a light touch of randomness is added so the swarm does not become rigid or overfit to early data. A control knob decides how often the learning-guided path is used, balancing broad search with focused refinement. This design allows the swarm to benefit from its accumulated knowledge while still probing new, unexplored regions.
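One way to express the two paths in code is a single proposal function that flips a biased coin. This is a hedged sketch: `propose`, `p_learn` (standing in for the article's control knob) and `sigma` (the light touch of randomness) are hypothetical names, and the learned predictor is passed in as any callable, such as a trained network's prediction function. The classic branch uses the standard ABC one-coordinate move; how the paper actually schedules the two modes may differ.

```python
import random


def propose(x, partner, predict, p_learn, sigma, bounds, rng):
    """Return (candidate, mode) for one bee; mode is 'learned' or 'classic'.

    Hypothetical helper: p_learn is the probability of taking the learned path.
    """
    lo, hi = bounds
    if predict is not None and rng.random() < p_learn:
        # Learned path: model suggestion plus light Gaussian jitter.
        guess = predict(x)
        cand = [g + rng.gauss(0.0, sigma) for g in guess]
        mode = "learned"
    else:
        # Classic ABC path: perturb one coordinate relative to a partner.
        cand = list(x)
        d = rng.randrange(len(x))
        cand[d] += rng.uniform(-1.0, 1.0) * (x[d] - partner[d])
        mode = "classic"
    # Clip the candidate back into the search box.
    return [min(hi, max(lo, c)) for c in cand], mode
```

In a full loop, the candidate from either path would presumably face the same keep-if-better test as in classic ABC, and any improvement would be written to the experience archive, so both kinds of moves feed the memory. Raising `p_learn` favors focused refinement; lowering it keeps the swarm exploring.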

Putting learned swarms to the test

To see whether learning truly helps, the authors benchmark LA-ABC on dozens of mathematical testbeds known to be challenging: smooth and rugged landscapes, single-peak and many-peak scenarios, and complex mixes of both. They compare it to a dozen leading algorithms, including improved versions of Differential Evolution, Particle Swarm Optimization, and other knowledge-aided and reinforcement-learning-based swarms. Across most tests, LA-ABC reaches better solutions faster and more reliably, a result confirmed by several statistical tests. The authors then apply the method to a practical engineering task: estimating hidden electrical parameters of photovoltaic (solar) models. Here, LA-ABC recovers parameter values that not only match physical expectations, such as realistic resistances and diode behavior, but also reproduce real measurement data with especially low error.
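To make the solar-cell task concrete: a common formulation in this field is the single-diode model, in which the current I measured at voltage V satisfies I = I_ph - I_0 * (exp((V + I * R_s) / (n * V_t)) - 1) - (V + I * R_s) / R_sh, with photocurrent I_ph, diode saturation current I_0, series and shunt resistances R_s and R_sh, ideality factor n, and thermal voltage V_t. Estimating the parameters then means minimizing the root-mean-square residual over measured (V, I) pairs. The summary does not say which model variants or objective the paper uses, so treat this as an assumption; the function names and the default cell temperature of 306.15 K are illustrative.

```python
import math

K_B = 1.380649e-23     # Boltzmann constant, J/K
Q_E = 1.602176634e-19  # elementary charge, C


def single_diode_residual(v, i, params, temp_k=306.15):
    """Implicit single-diode equation rearranged so that 0 means a perfect fit.

    params = (i_ph, i_0, r_s, r_sh, n); names and ordering are hypothetical.
    """
    i_ph, i_0, r_s, r_sh, n = params
    vt = K_B * temp_k / Q_E  # thermal voltage, about 26 mV near room temperature
    v_d = v + i * r_s        # voltage across the diode and shunt branches
    return i_ph - i_0 * (math.exp(v_d / (n * vt)) - 1.0) - v_d / r_sh - i


def rmse(params, measurements, temp_k=306.15):
    """Root-mean-square residual over measured (V, I) pairs: the cost to minimize."""
    total = sum(single_diode_residual(v, i, params, temp_k) ** 2
                for v, i in measurements)
    return math.sqrt(total / len(measurements))
```

Any of the optimizers discussed above could then minimize `rmse(params, measurements)` within box bounds on the five parameters. Because the model is implicit in I, the residual form avoids solving for the current at every evaluation.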

Why this matters for real-world technology

The study shows that giving swarm algorithms a modest learning component can significantly sharpen their search power without making them unwieldy. LA-ABC keeps the simplicity and flexibility that made the original bee algorithm popular, while adding a memory of past successes that gently steers future decisions. For non-experts, the takeaway is that many optimization tools used behind the scenes in engineering, energy, logistics, and even machine learning can be made more efficient by weaving in small, focused learning modules. Instead of endlessly guessing, these digital swarms start to behave more like seasoned explorers—remembering where they have been and using that experience to climb toward better solutions.

Citation: Saini, G., Jadon, S.S. & Chaube, S. Learning-aided Artificial Bee Colony with neural knowledge transfer for global optimization. Sci Rep 16, 7019 (2026). https://doi.org/10.1038/s41598-026-38028-2

Keywords: swarm intelligence, artificial bee colony, neural networks, optimization, solar energy