Clear Sky Science

APMSR: an intelligent QA system for synthetic biology empowered by adaptive prompting and multi-source knowledge retrieval

Smarter Answers for a New Kind of Biology

Synthetic biology promises cleaner fuels, greener factories, and new medical treatments, but the science behind it grows so fast that even experts struggle to keep up. This study introduces APMSR, a smart question‑answering system designed to help researchers quickly find reliable answers about a key biofuel microbe, Zymomonas mobilis. By blending large language models with carefully chosen online and offline sources, the system aims to give precise, up‑to‑date answers instead of confident but incorrect guesses.

The Challenge of Asking Good Questions

Scientists already rely on search engines and online databases, but these tools often return long lists of papers rather than direct answers. Large language models (LLMs) can speak fluently about many topics, yet in fast‑moving fields like synthetic biology they may miss recent discoveries or simply make things up. The authors focus on the practical problem of answering expert‑level questions about Z. mobilis, a bacterium prized for turning sugars into ethanol efficiently. In this setting, wrong answers are not just annoying—they can send experiments and investments in the wrong direction.

Guiding the AI with the Right Instructions

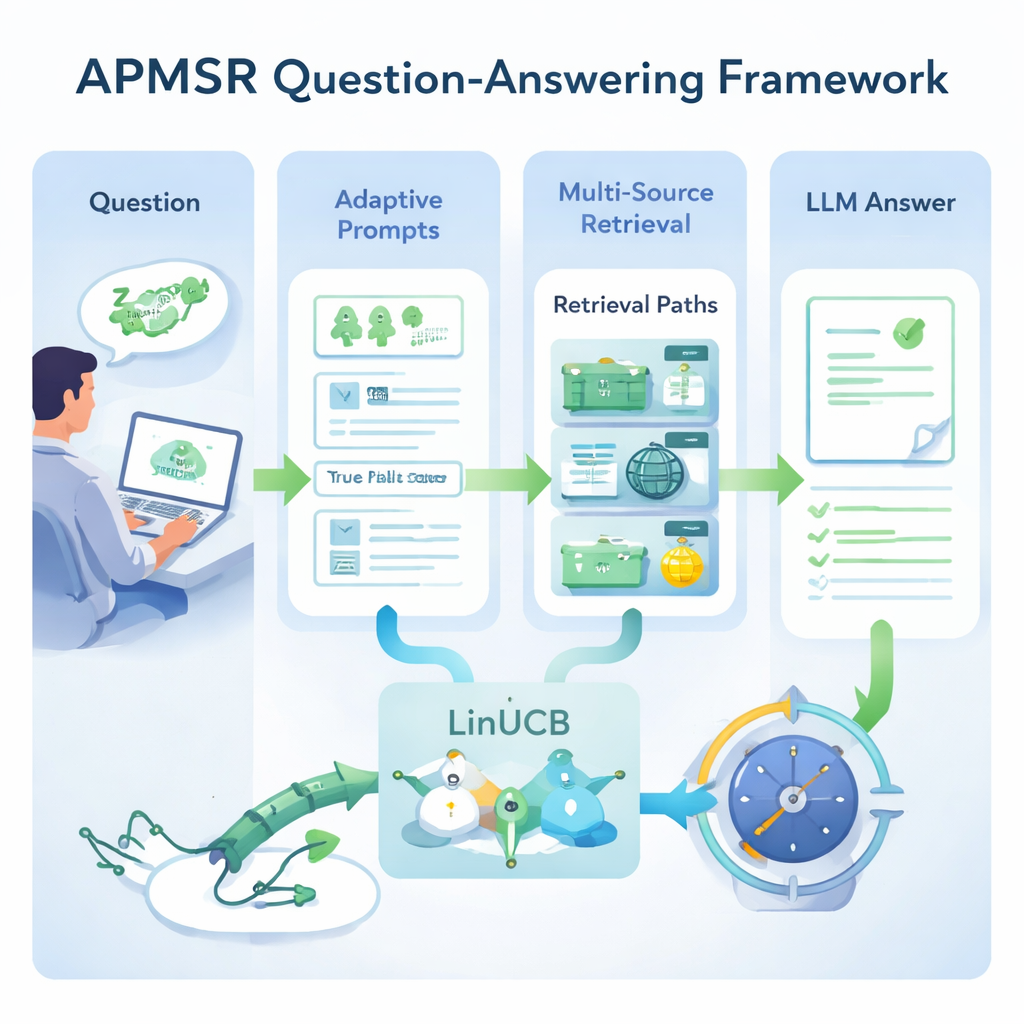

A central idea in APMSR is that how you ask the model matters as much as what you ask. Instead of using a single, fixed instruction, the system first asks the LLM to identify what kind of question it is seeing—for example, a multiple‑choice item or a true/false statement. Once the type is recognized, APMSR automatically picks a matching “prompt template” that tells the model how to reason and how to format its answer. Multiple‑choice questions, for instance, are nudged to compare options carefully, while true/false questions are steered toward checking the correctness of a statement and explaining why. This adaptive prompting helps keep the model focused and reduces meandering, off‑topic replies.
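The two-step flow described above (classify the question, then pick a matching template) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the template wording, the label names, the fallback type, and the `fake_llm` stand-in are all hypothetical and are not taken from the paper.

```python
from typing import Callable

# Hypothetical templates; the actual prompt wording used by APMSR is not given here.
TEMPLATES = {
    "multiple_choice": (
        "Compare each option against the evidence, then reply with the "
        "letter of the single best option.\n\nQuestion: {question}"
    ),
    "true_false": (
        "Check whether the statement is correct, reply True or False, "
        "and briefly explain why.\n\nStatement: {question}"
    ),
}
DEFAULT_TYPE = "true_false"  # assumed fallback when the label is unrecognized


def build_prompt(question: str, llm: Callable[[str], str]) -> str:
    """Ask the model for the question type, then fill the matching template."""
    classify = (
        "Classify the question as 'multiple_choice' or 'true_false'. "
        "Reply with the label only.\n\n" + question
    )
    label = llm(classify).strip().lower()
    if label not in TEMPLATES:
        label = DEFAULT_TYPE
    return TEMPLATES[label].format(question=question)


def fake_llm(prompt: str) -> str:
    """Stand-in classifier so the sketch runs without a real model."""
    return "multiple_choice" if "A)" in prompt else "true_false"


print(build_prompt("Z. mobilis converts sugars into ethanol.", fake_llm))
```

In a real deployment `llm` would wrap a call to an actual model. Keeping it as a plain callable makes the template selection easy to test in isolation.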

Choosing the Best Place to Look for Facts

Good instructions alone are not enough; the system must also look in the right places. APMSR connects to three kinds of information sources: a local library of curated scientific papers, live web resources, and a hybrid that merges both. For each user query, the system treats these three choices as competing “paths” and chooses among them with LinUCB, a contextual‑bandit algorithm designed to balance exploiting options that have paid off before against exploring less‑tested ones. LinUCB scores each path according to how it performed on previous questions, then selects the path most likely to yield a correct answer while still occasionally trying alternatives. Over time, this feedback loop teaches the system which combinations of sources tend to be most trustworthy for different question styles.
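To make the path selection concrete, here is a minimal sketch of the standard disjoint LinUCB rule applied to the three paths. Each path keeps its own linear reward model, and the chosen path is the one with the highest estimated reward plus an uncertainty bonus. The feature vector (a one-hot question type), the value of `alpha`, and the reward convention are assumptions made for illustration; the paper's actual features and settings may differ.

```python
import math

PATHS = ["local", "web", "hybrid"]  # the three retrieval paths described above


class LinUCB:
    """Disjoint LinUCB: one ridge-regression reward model per path."""

    def __init__(self, n_arms, dim, alpha=1.0):
        self.alpha = alpha  # exploration strength (assumed value)
        # Keep A^-1 per arm (A starts as the identity) plus the reward vector b.
        self.a_inv = [[[float(i == j) for j in range(dim)] for i in range(dim)]
                      for _ in range(n_arms)]
        self.b = [[0.0] * dim for _ in range(n_arms)]

    @staticmethod
    def _matvec(m, v):
        return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]

    def score(self, arm, x):
        """Estimated reward theta.x plus the bonus alpha * sqrt(x^T A^-1 x)."""
        a_inv_x = self._matvec(self.a_inv[arm], x)
        theta = self._matvec(self.a_inv[arm], self.b[arm])
        mean = sum(t * xi for t, xi in zip(theta, x))
        width = max(0.0, sum(xi * vi for xi, vi in zip(x, a_inv_x)))
        return mean + self.alpha * math.sqrt(width)

    def select(self, x):
        scores = [self.score(arm, x) for arm in range(len(self.b))]
        return scores.index(max(scores))

    def update(self, arm, x, reward):
        """A += x x^T via a Sherman-Morrison update of A^-1; b += reward * x."""
        v = self._matvec(self.a_inv[arm], x)
        denom = 1.0 + sum(xi * vi for xi, vi in zip(x, v))
        m = self.a_inv[arm]
        for i in range(len(x)):
            for j in range(len(x)):
                m[i][j] -= v[i] * v[j] / denom
        self.b[arm] = [bi + reward * xi for bi, xi in zip(self.b[arm], x)]


# Toy usage: the context is a one-hot question type (an assumed feature choice).
bandit = LinUCB(n_arms=len(PATHS), dim=2)
x_multiple_choice = [1.0, 0.0]
arm = bandit.select(x_multiple_choice)
bandit.update(arm, x_multiple_choice, reward=1.0)  # 1.0 = answer judged correct
```

The bonus term shrinks as a path accumulates observations for a given kind of question. That is why rarely used paths still get tried now and then, while well-tested, high-scoring paths are chosen most of the time.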

Putting the System to the Test

To see whether these ideas actually help, the team built a specialized test set of 220 expert questions about Z. mobilis, split evenly between multiple‑choice and true/false formats, all derived from peer‑reviewed studies. They compared three setups: a bare LLM with no external documents, a standard retrieval‑augmented system using only a local database, and their full APMSR design. Accuracy rose from 54% for the bare model to 80% with standard retrieval, and then to 93% once adaptive prompts and the LinUCB‑based path selector were added. The optimized system also outperformed an existing synthetic‑biology‑focused model called SynBioGPT by about 19 percentage points, suggesting that clever orchestration of prompts and retrieval can matter more than simply training a bigger model.
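As a quick check on what these numbers imply, the short sketch below recomputes the step-by-step gains and the matching error rates. It uses only the three accuracy figures reported above; the function names are illustrative.

```python
# Accuracy figures (percent) as reported in the text above.
ACCURACY = {"bare LLM": 54, "standard retrieval": 80, "full APMSR": 93}


def point_gains(acc):
    """Percentage-point gain of each setup over the one listed before it."""
    names = list(acc)
    return {cur: acc[cur] - acc[prev] for prev, cur in zip(names, names[1:])}


def error_rates(acc):
    """Share of questions answered incorrectly, in percent."""
    return {name: 100 - value for name, value in acc.items()}


print(point_gains(ACCURACY))
print(error_rates(ACCURACY))
```

Retrieval alone accounts for 26 percentage points of improvement, and adaptive prompting plus path selection add another 13. Seen as error rates, the share of wrong answers falls from 46% to 20% and then to 7%.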

What This Means for Future Lab Work

For non‑specialists, the main takeaway is that the authors have built a sort of “research co‑pilot” that not only talks in fluent language but also knows when to check multiple sources and how to structure its own thinking. By tuning both the way questions are framed and the way information is gathered, APMSR sharply cuts down on misleading answers in a complex, rapidly evolving field. While the current system is focused on a single microbe and on quiz‑style questions, the same approach could be extended to broader areas of biology and beyond, helping scientists, engineers, and perhaps eventually clinicians ask better questions and receive more trustworthy answers from AI tools.

Citation: Wang, J., Cao, Z., Tian, Z. et al. APMSR: an intelligent QA system for synthetic biology empowered by adaptive prompting and multi-source knowledge retrieval. Sci Rep 16, 7331 (2026). https://doi.org/10.1038/s41598-026-38006-8

Keywords: synthetic biology, question answering, large language models, retrieval augmented generation, Zymomonas mobilis