Clear Sky Science

Stable approach based diagonal recurrent quantum neural networks for identification of nonlinear systems

Teaching Machines to Understand Messy Systems

Many of the systems that shape our lives—from electric motors in appliances to turbulent airflow and even some medical processes—behave in complicated, nonlinear ways. That means small changes in their inputs can produce surprisingly large or chaotic responses. Predicting and controlling such systems is hard, yet vital for efficiency, safety, and energy savings. This paper introduces a new kind of learning machine that blends ideas from quantum computing, neural networks, and stability theory to model these tricky behaviors more accurately and reliably.

Why Ordinary Models Fall Short

Traditional modeling tools often assume that cause and effect are tidy and proportional, which works well for simple or nearly linear systems. But many real systems have memory, feedback, and thresholds that make their behavior highly nonlinear. Classic neural networks have helped, yet they come with their own problems. Feed-forward networks, where information travels only one way, are good at static tasks but struggle when past signals matter. Recurrent networks, which loop information back on itself to create memory, can capture time-varying behavior but tend to be hard to train, prone to instability, and computationally heavy when every neuron talks to every other.

Blending Quantum Ideas with Simple Loops

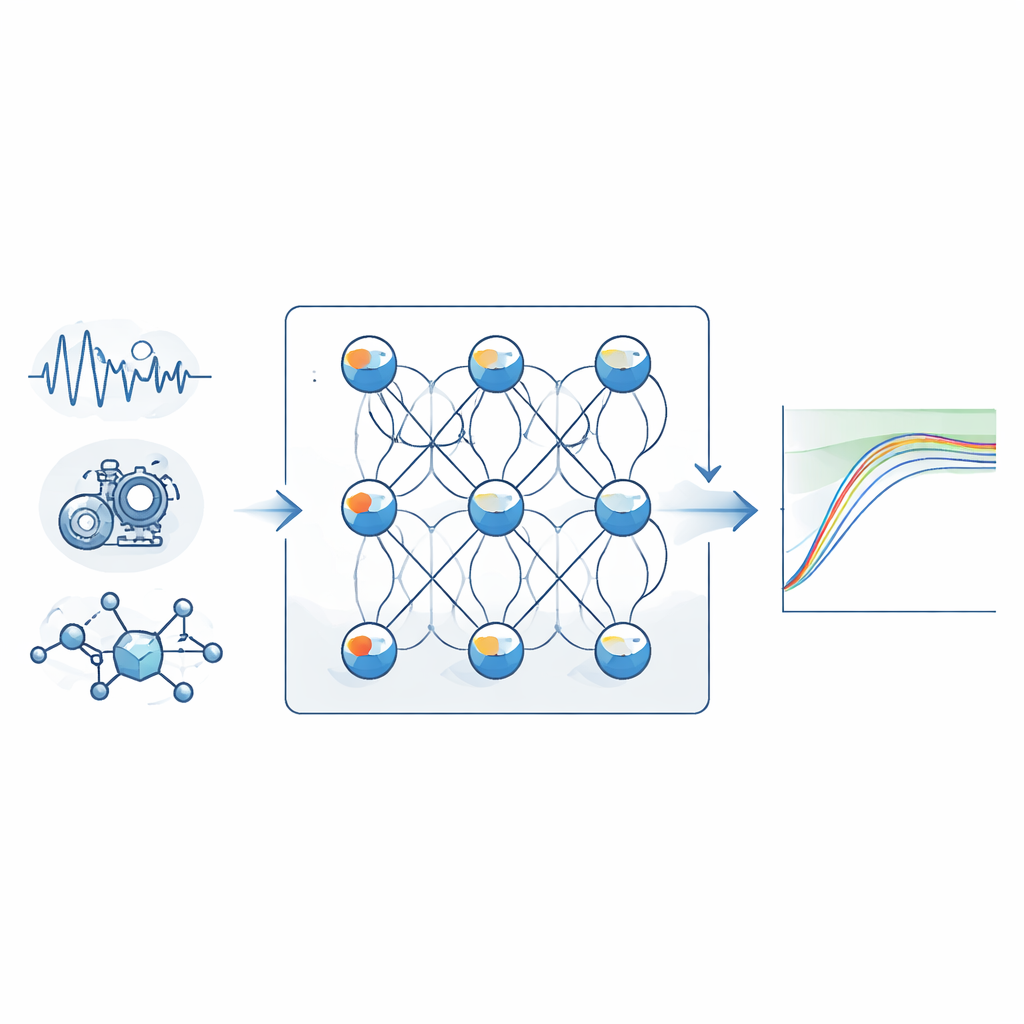

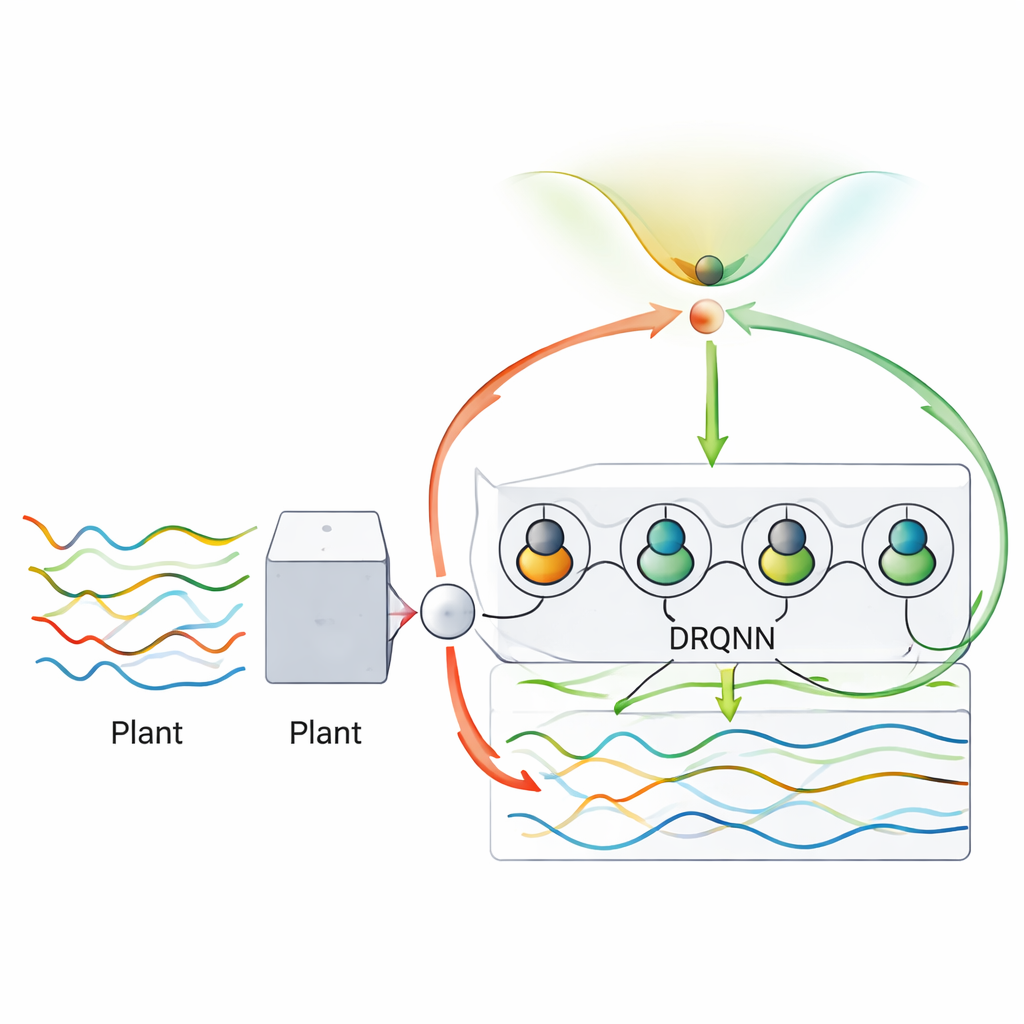

The authors propose a diagonal recurrent quantum neural network with Lyapunov stability, abbreviated DRQNN-LS. At its heart, this network still looks like a familiar three-layer neural net: inputs, a hidden layer, and an output. But two twists make it special. First, the hidden units behave like simplified quantum bits, whose internal states are described using phase-like quantities rather than plain numbers. This quantum-inspired representation lets each unit encode richer information in a compact way, improving the network’s ability to approximate complex relationships. Second, instead of a tangle of feedback links, each hidden neuron only feeds back into itself. This “diagonal” recurrence preserves the memory needed to follow time-varying patterns while drastically reducing the number of connections that must be tuned.
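To make the "diagonal" idea concrete, here is a minimal Python sketch of a hidden layer in which every unit feeds back only into itself. It is an illustration under stated assumptions, not the authors' model: the class name, the weight ranges, and above all the activation (a sine of a phase-like quantity standing in for the quantum-inspired unit) are hypothetical choices, and the paper's actual formulas may differ.

```python
import math
import random
from typing import List, Sequence


class DiagonalRecurrentLayer:
    """Toy network whose hidden units each feed back only into themselves."""

    def __init__(self, n_inputs: int, n_hidden: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        # Input weights: one row per hidden unit.
        self.w_in: List[List[float]] = [
            [rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
            for _ in range(n_hidden)
        ]
        # Diagonal recurrence: a single self-feedback weight per hidden unit.
        self.w_self: List[float] = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        # Output weights for one scalar output.
        self.w_out: List[float] = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        # Previous hidden outputs; this is the network's memory.
        self.state: List[float] = [0.0] * n_hidden

    def step(self, x: Sequence[float]) -> float:
        new_state = []
        for w_row, w_d, h_prev in zip(self.w_in, self.w_self, self.state):
            # Phase-like internal quantity (assumed form, for illustration only).
            phase = sum(w * xi for w, xi in zip(w_row, x)) + w_d * h_prev
            # A bounded, periodic readout of the phase stands in for the
            # quantum-inspired activation described in the text.
            new_state.append(math.sin(phase))
        self.state = new_state
        return sum(w * h for w, h in zip(self.w_out, self.state))


def recurrent_weight_count(n_hidden: int, diagonal: bool) -> int:
    """Number of feedback weights inside the hidden layer."""
    return n_hidden if diagonal else n_hidden * n_hidden


net = DiagonalRecurrentLayer(n_inputs=2, n_hidden=8)
outputs = [net.step([math.sin(0.1 * k), 0.5]) for k in range(5)]
print(recurrent_weight_count(8, diagonal=True),
      recurrent_weight_count(8, diagonal=False))
```

The helper at the end shows where the savings come from: with 8 hidden units, a fully connected recurrent layer needs 64 feedback weights, while the diagonal version needs only 8.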

Keeping Learning Stable and Under Control

A major challenge in training any recurrent network is keeping learning stable: if the weights change too aggressively, the model’s output can explode or oscillate; if they change too slowly, training takes forever and may get stuck. Here the authors rely on Lyapunov stability theory, a mathematical framework originally developed for analyzing the stability of dynamical systems. They construct a special energy-like function that combines the modeling error and the size of the network parameters. By carefully deriving how this function changes over time, they obtain automatic rules for updating the network’s weights and internal parameters so that the overall “energy” can only decrease. This yields adaptive learning rates that speed up or slow down on their own, guaranteeing convergence without hand-tuning.
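The flavor of this argument can be seen in a simplified textbook version. The sketch below is not the paper's derivation: it assumes an energy function built from the modeling error alone (the authors' function also includes the parameter sizes), a plain gradient update, and a first-order approximation of how the error changes from one step to the next. The symbols $e$, $V$, $W$, $g$, and $\eta$ are introduced here for illustration.

```latex
\begin{aligned}
e(k) &= y(k) - \hat{y}(k), \qquad V(k) = \tfrac{1}{2}\,e^{2}(k),\\
W(k+1) &= W(k) + \eta\, e(k)\, g(k), \qquad g(k) = \frac{\partial \hat{y}(k)}{\partial W},\\
e(k+1) &\approx e(k) - g(k)^{\top}\,\bigl[W(k+1) - W(k)\bigr]
        = \bigl(1 - \eta\,\lVert g(k)\rVert^{2}\bigr)\, e(k),\\
\Delta V(k) &= V(k+1) - V(k)
        \approx -\tfrac{1}{2}\,\eta\,\lVert g(k)\rVert^{2}\,
          \bigl(2 - \eta\,\lVert g(k)\rVert^{2}\bigr)\, e^{2}(k),\\
\Delta V(k) &< 0 \quad\Longleftrightarrow\quad
        0 < \eta < \frac{2}{\lVert g(k)\rVert^{2}}
        \qquad \bigl(e(k) \neq 0,\ g(k) \neq 0\bigr).
\end{aligned}
```

Under these assumptions the "energy" shrinks at every step as long as the learning rate stays below a bound that depends on the current gradient. That is why a rate chosen this way adapts on its own: it can be large when the gradient is small and must shrink when the gradient is large.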

Putting the New Network to the Test

To show that DRQNN-LS is more than a neat idea on paper, the authors test it on three very different tasks. First, they model a mathematical nonlinear system with known behavior, checking how closely the network can track its output. Second, they tackle the chaotic Hénon map, a classic benchmark where tiny changes in initial conditions can produce wildly different trajectories. Third, they apply the method to real data from a small direct-current motor, a noisy, practical device whose internal workings are not fully known. In each case they compare the new approach with several existing neural models, including classical diagonal recurrent networks and earlier quantum-inspired versions trained with simpler gradient-based rules.
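The sensitivity of the chaotic benchmark is easy to reproduce. The short Python sketch below iterates the map in its standard textbook form, x' = 1 - a·x² + y and y' = b·x, with the classic parameters a = 1.4 and b = 0.3. These values, the starting points, and the function name are assumptions made for illustration; the summary does not state the exact configuration used in the paper.

```python
import math
from typing import List, Tuple


def henon_trajectory(x0: float, y0: float, steps: int,
                     a: float = 1.4, b: float = 0.3) -> List[Tuple[float, float]]:
    """Iterate x' = 1 - a*x^2 + y, y' = b*x and return every visited point."""
    points = [(x0, y0)]
    x, y = x0, y0
    for _ in range(steps):
        # Both new values are computed from the old (x, y) pair.
        x, y = 1.0 - a * x * x + y, b * x
        points.append((x, y))
    return points


# Two starting points that differ by one part in a billion.
base = henon_trajectory(0.0, 0.0, steps=100)
nudged = henon_trajectory(1e-9, 0.0, steps=100)

# Straight-line distance between the two trajectories at every step.
gaps = [math.hypot(p[0] - q[0], p[1] - q[1]) for p, q in zip(base, nudged)]
print(f"initial gap: {gaps[0]:.1e}, largest gap: {max(gaps):.3f}")
```

Both trajectories stay on the same bounded attractor, yet the distance between them grows from a billionth to roughly the size of the attractor itself. A model that must predict such a signal is punished for even tiny errors, which is what makes the map a demanding test.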

Better Accuracy, Robustness, and Noise Tolerance

Across all three examples, DRQNN-LS consistently produces lower prediction errors and a better fit to the true signals than the competing methods, even when the data are deliberately corrupted with substantial noise. The new model requires somewhat more computation per step, because it tracks recurrent quantum-inspired states and evaluates the Lyapunov-based update, but the running times remain small enough for real-time use on modern processors. The results suggest that combining a streamlined recurrent structure, quantum-style neuron states, and mathematically guaranteed stable learning yields a powerful and practical tool for understanding and predicting nonlinear dynamics in the real world.

What This Means Going Forward

For non-specialists, the takeaway is that we are learning how to build smarter, more trustworthy digital twins of messy physical systems. DRQNN-LS offers a way to let a machine learn the behavior of a complex process directly from data while ensuring that its learning does not “blow up” or wander unpredictably. That combination of flexibility and stability could prove valuable in fields ranging from industrial control and robotics to power systems and perhaps even biological or medical modeling, where nonlinear behavior and noisy measurements are the norm.

Citation: Khalil, H., Elshazly, O. & Shaheen, O. Stable approach based diagonal recurrent quantum neural networks for identification of nonlinear systems. Sci Rep 16, 8274 (2026). https://doi.org/10.1038/s41598-026-37973-2

Keywords: nonlinear system identification, quantum neural network, recurrent neural network, Lyapunov stability, chaotic dynamics modeling