Clear Sky Science

A deep learning framework for breast cancer diagnosis using Swin Transformer and Dual-Attention Multi-scale Fusion Network

Why this matters for patients and doctors

Breast cancer is one of the most common cancers in women, and mammograms are the main tool for catching it early. Yet reading these X‑ray images is hard, even for experts, and small warning signs can be missed. This study presents a new artificial intelligence (AI) system designed to help radiologists spot breast cancer more reliably by combining two powerful ways of “looking” at mammograms: one that sees the big picture and one that zooms in on tiny details.

The challenge of seeing both the forest and the trees

Modern AI systems already assist in reading medical images, but most rely on a single type of model. Convolutional neural networks are good at spotting local patterns, such as sharp edges or small bright spots. Vision transformers, a newer family of models, excel at understanding relationships across the whole image. Mammograms, however, demand both abilities at once: cancer may show up as tiny calcium specks or subtle distortions, but its meaning depends on how those signs fit into the overall breast structure. At the same time, real mammography datasets are relatively small and often unbalanced, with far fewer cancer cases than normal exams, making it easy for AI systems to overfit or become biased.

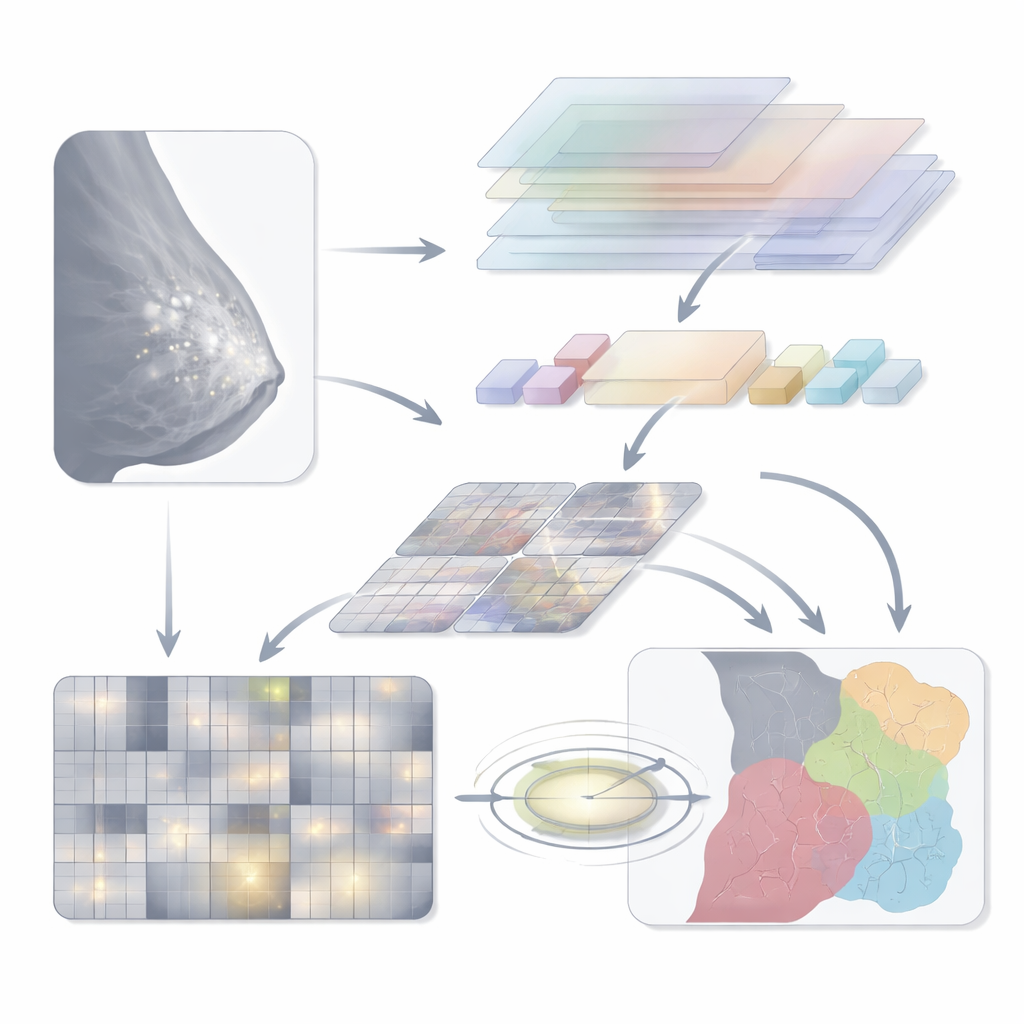

A two-track AI that looks wide and zooms deep

The authors introduce a hybrid model they call Swin‑DAMFN, built explicitly to combine global and local analysis. One branch is based on the Swin Transformer. It divides the mammogram into small windows, computes attention within each window, and shifts the window grid between layers so that information can flow across window boundaries. Alternating regular and shifted windows lets the model build up long‑range context: how different parts of the breast relate to each other. The second branch is a custom convolutional network, the Dual‑Attention Multi‑scale Fusion Network (DAMFN), tuned to pick up extremely fine details such as microcalcifications and slight tissue distortions. Within it, specialized blocks analyze the image at multiple scales and directions. Attention modules then emphasize the regions that look most clinically informative while downplaying background tissue.
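To make the idea of windows concrete, here is a minimal sketch in plain Python of the window partitioning and cyclic shift used by Swin Transformers. It works on a toy grid of numbers that stands in for image patches. The function names `partition_windows` and `cyclic_shift` are hypothetical, and the attention computed inside each window is omitted. This illustrates the general technique and is not the authors' code.

```python
def partition_windows(grid, window):
    """Split a 2-D grid (list of rows) into non-overlapping window x window tiles."""
    h, w = len(grid), len(grid[0])
    assert h % window == 0 and w % window == 0, "grid must divide evenly into windows"
    tiles = []
    for top in range(0, h, window):
        for left in range(0, w, window):
            # Each tile keeps its own rows and columns; attention would run inside it.
            tiles.append([row[left:left + window] for row in grid[top:top + window]])
    return tiles


def cyclic_shift(grid, shift):
    """Roll the grid up and to the left by `shift` cells before re-partitioning.

    Swin-style models typically use shift = window // 2 in alternate layers.
    """
    rows = grid[shift:] + grid[:shift]                 # roll rows upward
    return [row[shift:] + row[:shift] for row in rows]  # roll columns leftward


# Toy 4x4 "image" whose cells are numbered 0..15, split into 2x2 windows.
grid = [[r * 4 + c for c in range(4)] for r in range(4)]
print(partition_windows(grid, 2)[0])
print(partition_windows(cyclic_shift(grid, 1), 2)[0])
```

With a 4×4 grid and 2×2 windows, the first window after the shift holds cells 5, 6, 9 and 10, which originally sat in four different windows. This is how the shifted layers let neighboring windows exchange information.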

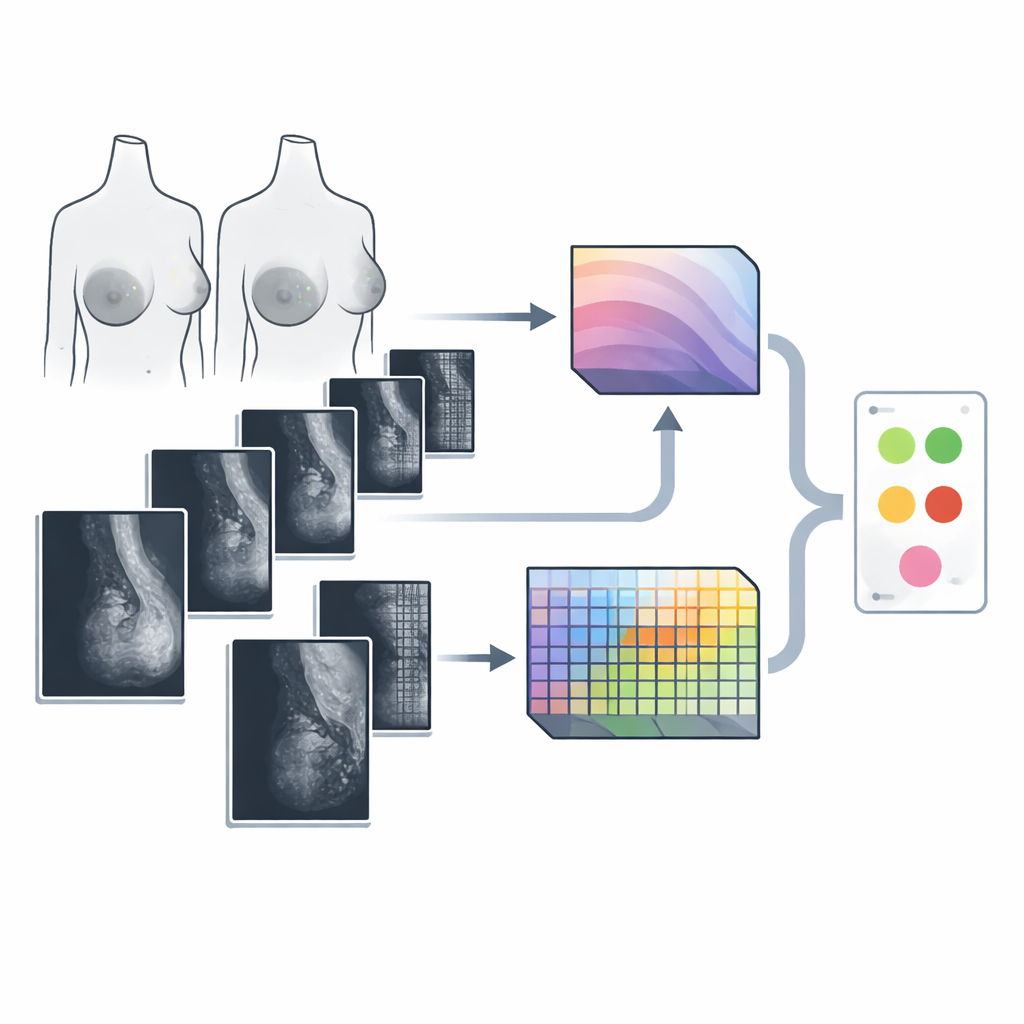

Teaching the system with more and richer images

Because real mammography datasets are limited and skewed toward non‑cancer cases, the researchers enriched the training data in two complementary ways. First, they used a conditional generative adversarial network (GAN), a generative model that can be told which class of image to produce, to synthesize realistic mammogram patches, especially for the underrepresented malignant categories. These generated images help balance the classes and expose the model to more variations in how disease appears. Second, they applied photometric changes (small, random adjustments to brightness, contrast, and sharpness) to both real and synthetic images. This pushes the AI to rely on genuine anatomical patterns rather than superficial differences in lighting or noise, improving its ability to generalize to new scans.
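The two augmentation ideas can be sketched as follows, assuming grayscale patches stored as lists of rows with pixel values in [0, 1]. `photometric_jitter` applies a random brightness shift and contrast scaling, and leaves out sharpness for brevity. `synthetic_quota` computes how many generated images each class would need to match the largest class. Both function names, the jitter ranges, and the class counts in the example are hypothetical, and the conditional GAN itself is not shown.

```python
import random


def photometric_jitter(patch, rng, max_brightness=0.1, max_contrast=0.2):
    """Randomly shift brightness and rescale contrast of a grayscale patch in [0, 1]."""
    pixels = [p for row in patch for p in row]
    mean = sum(pixels) / len(pixels)
    delta = rng.uniform(-max_brightness, max_brightness)    # additive brightness shift
    gain = rng.uniform(1 - max_contrast, 1 + max_contrast)  # contrast factor around the mean
    # Stretch or squeeze values around the mean, shift them, then clip back to [0, 1].
    return [[min(1.0, max(0.0, (p - mean) * gain + mean + delta)) for p in row]
            for row in patch]


def synthetic_quota(class_counts):
    """Number of synthetic images each class would need to match the largest class."""
    target = max(class_counts.values())
    return {label: target - n for label, n in class_counts.items()}


rng = random.Random(0)  # seeded so the example is reproducible
print(photometric_jitter([[0.2, 0.4], [0.6, 0.8]], rng))
print(synthetic_quota({"normal": 300, "benign": 120, "malignant": 80}))
```

For the hypothetical counts of 300 normal, 120 benign and 80 malignant patches, `synthetic_quota` returns 0, 180 and 220 respectively. Because the contrast factor is always positive, the jitter never reverses the order of pixel intensities, so the relative structure of the tissue is preserved.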

How the pieces work together during diagnosis

During analysis, a preprocessed mammogram is fed into both branches simultaneously. The Swin Transformer produces a compact summary of global structure, while DAMFN outputs a detailed map of local features. The two outputs are aligned in size and fused into a single representation. A lightweight “triplet attention” block then refines this fusion by modeling interactions between the channel dimension and each spatial dimension (height and width), as well as between height and width themselves, steering the model’s focus toward areas that are most likely to contain disease. Finally, a simple classification head averages the refined features and produces a prediction over several classes, such as normal tissue, benign findings, or different types of malignant lesions.
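The fusion step can be sketched on tiny nested-list feature maps of shape [channels][height][width]. Several details here are assumptions and do not come from the paper. The larger local map is average-pooled down to the global map's size, and the two are fused by concatenating channels. A simple per-channel sigmoid gate stands in for triplet attention, which this sketch does not reproduce. All function names and the classifier weights are hypothetical.

```python
import math


def avg_pool_to(fmap, out_h, out_w):
    """Average-pool every channel of a [C][H][W] map down to [C][out_h][out_w]."""
    h, w = len(fmap[0]), len(fmap[0][0])
    sh, sw = h // out_h, w // out_w  # assumes H and W divide evenly
    return [[[sum(ch[i * sh + a][j * sw + b] for a in range(sh) for b in range(sw)) / (sh * sw)
              for j in range(out_w)] for i in range(out_h)] for ch in fmap]


def channel_gate(fmap):
    """Reweight each channel by sigmoid(its mean): a simple stand-in, NOT triplet attention."""
    gated = []
    for ch in fmap:
        mean = sum(sum(row) for row in ch) / (len(ch) * len(ch[0]))
        weight = 1.0 / (1.0 + math.exp(-mean))
        gated.append([[v * weight for v in row] for row in ch])
    return gated


def global_avg_pool(fmap):
    """Average each channel over its spatial positions, giving one number per channel."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]


def classify(vector, weights, biases):
    """Linear layer followed by a numerically stable softmax over classes."""
    logits = [sum(w * x for w, x in zip(row, vector)) + b for row, b in zip(weights, biases)]
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_and_classify(global_map, local_map, weights, biases):
    h, w = len(global_map[0]), len(global_map[0][0])
    aligned_local = avg_pool_to(local_map, h, w)  # match the global branch's spatial size
    fused = global_map + aligned_local            # concatenate along the channel axis
    refined = channel_gate(fused)
    return classify(global_avg_pool(refined), weights, biases)


global_map = [[[1.0, 1.0], [1.0, 1.0]], [[0.0, 0.0], [0.0, 0.0]]]        # 2 channels, 2x2
local_map = [[[float(r * 4 + c) for c in range(4)] for r in range(4)]]   # 1 channel, 4x4
weights = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]  # 3 classes x 3 fused channels
probs = fuse_and_classify(global_map, local_map, weights, [0.0, 0.0, 0.0])
print([round(p, 3) for p in probs])
```

In the example, the local channel has the largest mean activation, so after gating and pooling it dominates the logits and class 2 receives the highest probability. A real model would learn the attention and classifier weights during training rather than fixing them by hand.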

What the results mean in practice

The team tested Swin‑DAMFN on two widely used public datasets, CBIS‑DDSM and MIAS, and compared it against many popular deep learning models. Their system reached about 99% accuracy on CBIS‑DDSM and nearly 99% on MIAS, with similarly high sensitivity (ability to catch cancers) and specificity (ability to avoid false alarms). Ablation studies, which remove individual components to measure their contribution, showed that each part helped produce these gains: the dual branches, the attention‑based fusion, and the data‑augmentation strategy. The authors note that broader testing on diverse hospital data is still needed. Even so, the findings suggest that hybrid AI systems like Swin‑DAMFN could become valuable assistants in breast cancer screening, helping radiologists detect dangerous lesions earlier and more consistently while reducing workload and uncertainty.
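The three reported metrics come from simple counts of correct and incorrect decisions. The sketch below collapses multi-class labels into a positive group (for example, malignant) versus everything else, then computes accuracy, sensitivity and specificity. The function names, labels and counts are hypothetical. The paper's own metrics may be computed per class or averaged across classes, which this sketch does not attempt to reproduce.

```python
def confusion_counts(y_true, y_pred, positive):
    """Count TP, FP, TN, FN after collapsing labels to positive vs. negative."""
    tp = fp = tn = fn = 0
    for actual_label, predicted_label in zip(y_true, y_pred):
        actual = actual_label in positive
        flagged = predicted_label in positive
        if actual and flagged:
            tp += 1  # cancer correctly flagged
        elif actual:
            fn += 1  # cancer missed
        elif flagged:
            fp += 1  # false alarm
        else:
            tn += 1  # non-cancer correctly cleared
    return tp, fp, tn, fn


def screening_metrics(tp, fp, tn, fn):
    """Accuracy, sensitivity (recall on positives) and specificity (recall on negatives)."""
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


labels = ["normal", "benign", "malignant", "malignant", "normal"]
predictions = ["normal", "malignant", "malignant", "benign", "normal"]
print(confusion_counts(labels, predictions, positive={"malignant"}))
print(screening_metrics(tp=90, fp=5, tn=195, fn=10))
```

With hypothetical counts of 90 true positives, 10 false negatives, 195 true negatives and 5 false positives, the function gives an accuracy of 0.95, a sensitivity of 0.90 and a specificity of 0.975. A model can therefore score well on accuracy while still missing one cancer in ten, which is why sensitivity is reported separately.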

Citation: Aldawsari, M.A., Aldosari, S.J., Ismail, A. et al. A deep learning framework for breast cancer diagnosis using Swin Transformer and Dual-Attention Multi-scale Fusion Network. Sci Rep 16, 8941 (2026). https://doi.org/10.1038/s41598-026-37969-y

Keywords: breast cancer, mammography, deep learning, transformer models, medical imaging AI