Clear Sky Science

Real-time object detection for unmanned aerial vehicles based on vision transformer and edge computing

Smarter Eyes in the Sky

Unmanned aerial vehicles—drones—are rapidly becoming everyday tools for jobs like inspecting bridges, monitoring traffic, and searching for missing people. But for a drone to truly help in these time‑critical tasks, it must do more than simply film the world; it has to recognize tiny objects in real time while flying on a limited battery and a small onboard computer. This paper presents a new way to give drones sharper, faster “eyes” by combining an advanced AI technique called a vision transformer with clever use of nearby edge computers, so that small objects like pedestrians, bikes, and cars can be spotted quickly and reliably from the air.

Why Drones Struggle to See Small Details

From high above the ground, people and vehicles can shrink to just a few dozen pixels in a video frame. Traditional neural‑network systems used on drones are designed to run quickly on low‑power chips, but they often miss these tiny objects or fail when lighting or viewing angle changes. Newer vision transformer models, borrowed from the language‑processing world, are much better at understanding the whole scene at once and teasing out small details from cluttered backgrounds. The catch is that they usually demand huge computing power, far beyond what a flying platform can carry. The authors set out to close this gap: keep the transformer’s sharp vision, but slim it down enough to run in real time on a drone, and offload extra work to a nearby edge server only when conditions allow.

A Split Brain: Drone and Edge Working Together

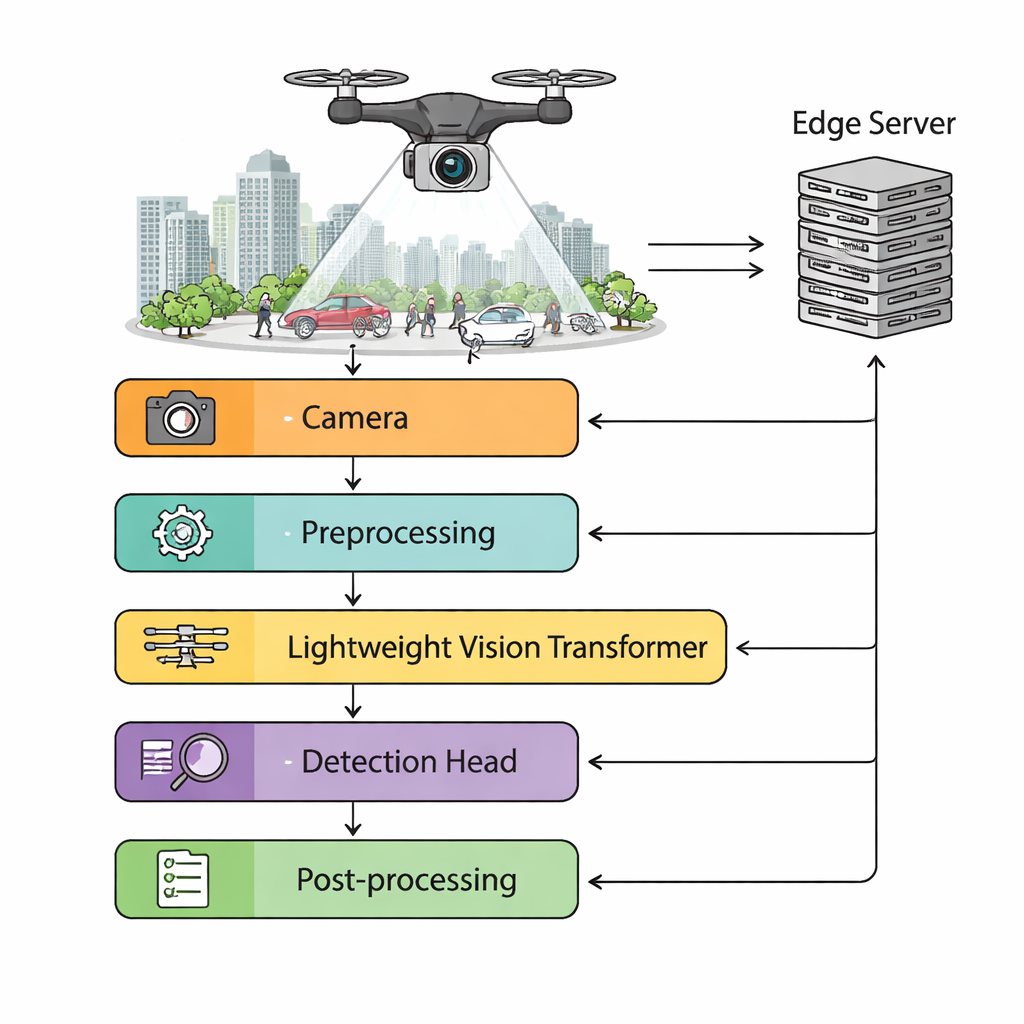

The proposed framework divides the work between the drone and a ground‑based edge computer. The drone’s camera streams high‑definition video into an onboard preprocessing module that stabilizes shaky footage, adjusts brightness, and dynamically resizes images depending on how much computing power is available. A lightweight vision transformer then extracts rich features from each frame, feeding a detection head that predicts where objects are and what they are. A scheduler monitors wireless network delay, battery level, and processing load. When the link to the ground is fast and stable, heavier tasks—such as processing batches of frames or running extra accuracy‑boosting models—can be pushed to the edge server. When the connection degrades, the system automatically switches to fully autonomous onboard processing so the drone never has to “fly blind.”
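The scheduler's decision can be pictured with a small sketch. This is only an illustration of the idea described above, not the authors' implementation: the `LinkState` fields, the threshold values, the two frame sizes, and the function names `choose_mode` and `choose_input_size` are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class LinkState:
    latency_ms: float    # measured delay to the edge server
    battery_pct: float   # remaining battery, 0 to 100
    onboard_load: float  # fraction of onboard compute in use, 0.0 to 1.0


# Hypothetical thresholds, chosen only for illustration.
MAX_LATENCY_MS = 50.0
MIN_BATTERY_PCT = 20.0
HIGH_LOAD = 0.8


def choose_mode(state: LinkState) -> str:
    """Return "edge" when the link is fast enough to offload, else "onboard"."""
    if state.latency_ms > MAX_LATENCY_MS:
        # Link degraded: keep everything on the drone so detection never stops.
        return "onboard"
    return "edge"


def choose_input_size(state: LinkState) -> int:
    """Pick a frame side length in pixels (both sizes are assumed values)."""
    under_pressure = (
        state.onboard_load > HIGH_LOAD or state.battery_pct < MIN_BATTERY_PCT
    )
    # Smaller frames cost less to process when compute or battery is tight.
    return 416 if under_pressure else 640


good_link = LinkState(latency_ms=10.0, battery_pct=80.0, onboard_load=0.3)
bad_link = LinkState(latency_ms=200.0, battery_pct=80.0, onboard_load=0.9)
print(choose_mode(good_link), choose_input_size(good_link))  # edge 640
print(choose_mode(bad_link), choose_input_size(bad_link))    # onboard 416
```

A real scheduler would likely smooth its measurements over time so that brief network hiccups do not cause rapid switching between modes; that detail is left out here to keep the sketch short.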

Trimming the Model Without Losing Its Sight

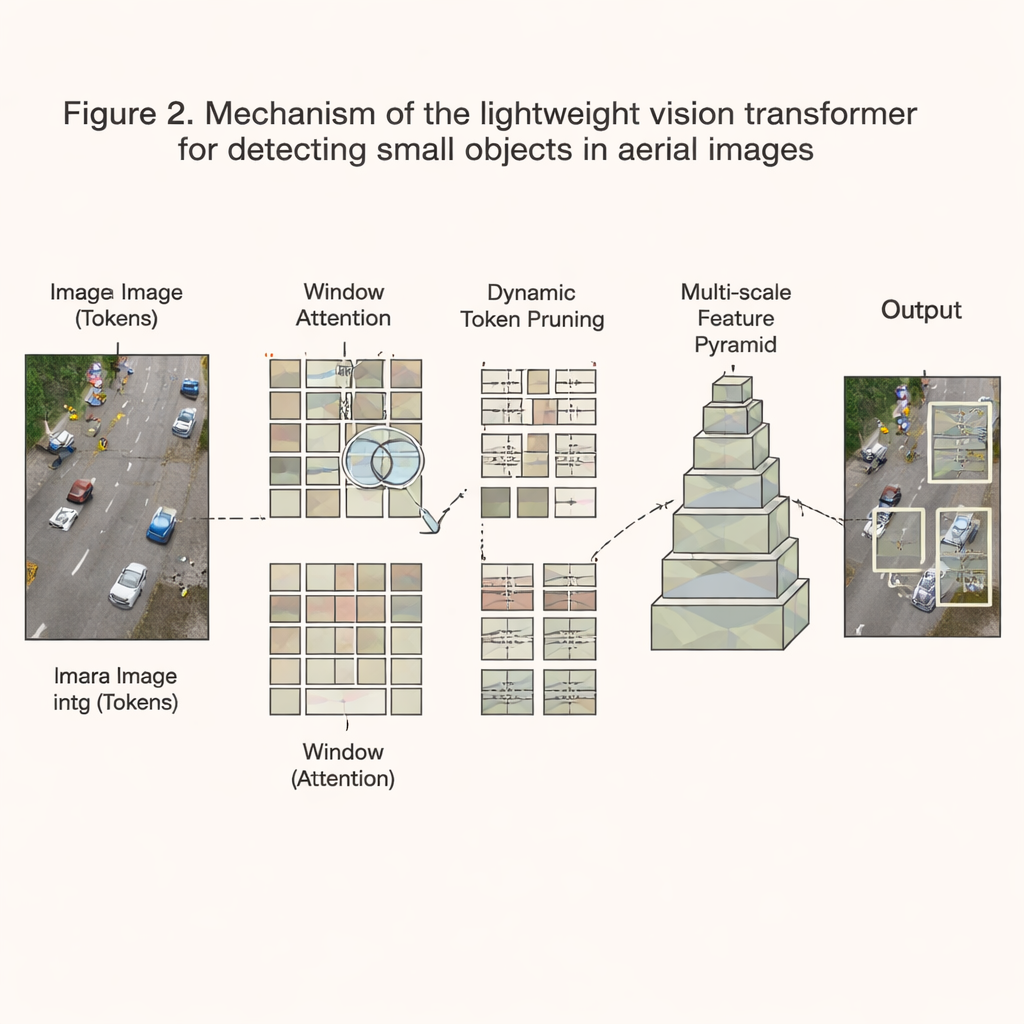

To make the transformer small and fast enough, the authors redesign its inner workings. Instead of letting every part of the image compare itself with every other part, a process whose cost grows with the square of the number of image patches, they restrict attention to local windows that slide across the picture, so the cost grows roughly in proportion to image size. On top of that, a dynamic pruning scheme constantly evaluates which regions of the image contain useful information and which are mostly empty background. Tokens (the small image patches the model works on) judged uninformative are dropped early, saving time and memory, while complex, cluttered scenes keep more detail. The model also builds a multi‑scale pyramid of features so that both tiny pedestrians and larger vehicles can be detected in the same frame. Combined with careful quantization (using fewer bits per number), channel pruning, and low‑level software optimizations, these changes cut required calculations by about two‑thirds while preserving over 94% of the original accuracy.
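Two of these ideas, local-window attention and token pruning, can be illustrated with a rough sketch. It counts token-to-token comparisons and keeps only the highest-scoring tokens. The frame size, patch size, window size, scores, and keep ratio are assumed values for illustration, and the function names are hypothetical; the paper's actual pruning rule is not reproduced here.

```python
from typing import List, Sequence


def global_attention_pairs(num_tokens: int) -> int:
    """Comparisons when every token attends to every token."""
    return num_tokens * num_tokens


def windowed_attention_pairs(num_tokens: int, window_tokens: int) -> int:
    """Comparisons when each token attends only to its local window.

    Border effects are ignored, so this is an approximation.
    """
    return num_tokens * window_tokens


def prune_tokens(scores: Sequence[float], keep_ratio: float) -> List[int]:
    """Return indices of the highest-scoring tokens, in original order."""
    keep = max(1, round(len(scores) * keep_ratio))
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:keep])


# Assumed example: a 640x640 frame cut into 16x16 patches gives 40*40 tokens.
tokens = 40 * 40
print(global_attention_pairs(tokens))            # 2560000
print(windowed_attention_pairs(tokens, 7 * 7))   # 78400

# Assumed informativeness scores for four tokens; keep the top half.
print(prune_tokens([0.1, 0.9, 0.05, 0.7], keep_ratio=0.5))  # [1, 3]
```

In this toy setting the windowed version needs about 33 times fewer comparisons than the global one, and doubling the number of tokens doubles its cost rather than quadrupling it.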

Putting the System to the Test

The team evaluates their design on a large aerial dataset assembled from public drone benchmarks and thousands of newly collected images over cities, suburbs, and rural areas in different seasons and lighting conditions. On a popular embedded computer used in drones, the NVIDIA Jetson Xavier NX, their system runs at about 39 frames per second—fast enough for real‑time operation—while achieving higher accuracy than widely used lightweight detectors such as YOLO variants. In particular, it is significantly better at spotting small objects, with roughly a seven‑percentage‑point gain in average precision over conventional convolutional networks. Week‑long field trials on a commercial drone platform show that the system maintains performance despite camera vibration, changing illumination, and fluctuating wireless connectivity, and that it can smoothly shift between edge‑assisted and fully onboard modes during real flights.

What This Means for Real‑World Drone Missions

In plain terms, this work shows that it is possible to give drones sharper and more reliable vision without strapping a data‑center‑grade computer to them. By redesigning the vision transformer to be lean, selectively focusing on the most informative parts of each image, and teaming the drone with a nearby edge server when possible, the authors deliver a detector that sees more, misses less, and still runs in real time within tight power and memory budgets. This makes tasks like search and rescue, disaster assessment, and infrastructure inspection safer and more effective, because drones can better pick out small, critical details—such as a stranded person or a damaged cable—exactly when every second counts.

Citation: Zhu, W., Chen, K. Real-time object detection for unmanned aerial vehicles based on vision transformer and edge computing. Sci Rep 16, 6814 (2026). https://doi.org/10.1038/s41598-026-37938-5

Keywords: drones, object detection, edge computing, vision transformer, real-time imaging