Clear Sky Science

A novel deep-learning model to convert DAS strain to geophone particle velocity: application to PoroTomo data from the Brady geothermal field

Listening to Earthquakes with Internet-Style Cables

What if the same kind of fiber-optic cables that carry our internet traffic could also act as giant strings of thousands of earthquake sensors? This study explores exactly that idea. The authors show how a modern artificial intelligence (AI) model can turn the raw, hard-to-interpret signals from fiber-optic cables into the more familiar motion readings used by seismologists, potentially making seismic monitoring cheaper, denser, and easier to deploy in harsh or crowded environments.

Why Fiber-Optic Ears Are Hard to Understand

Distributed Acoustic Sensing (DAS) turns ordinary fiber-optic cables into continuous lines of sensors that respond to tiny stretches and squeezes in the ground. Instead of a few hundred standalone instruments spread over a field, DAS can provide thousands of measurement points along a single cable. That density is a major advantage for tracking how seismic waves move through the Earth. But there is a catch: DAS measures how much the cable is strained, while traditional seismometers, called geophones, record how fast the ground moves. Most existing seismology methods are built for geophone-style motion, not for strain. Strain also exaggerates small-scale irregularities near the surface, making the data noisy and less consistent from place to place. Converting DAS strain into geophone-like ground motion is therefore essential, yet standard physics-based recipes for doing so often rely on strong assumptions about wave behavior and cable geometry, and on having co-located reference sensors available.
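To make the idea of a physics-based recipe concrete, here is a minimal sketch of the simplest textbook case, not necessarily the conversion used in the study. It assumes a single plane wave traveling along the cable at a known apparent speed `c`, in which case along-fiber particle velocity is `v = -c * strain`. The function name and all numeric values are illustrative assumptions.

```python
import numpy as np

def strain_to_velocity_plane_wave(strain, apparent_velocity):
    """Convert axial strain to along-fiber particle velocity via v = -c * strain.

    Only valid for a single plane wave moving along the fiber with
    apparent velocity c (an assumption that real data often violate).
    """
    return -apparent_velocity * np.asarray(strain)

# Synthetic check with displacement u(x, t) = sin(2*pi*f*(t - x/c)).
c = 2000.0                      # assumed apparent velocity, m/s (illustrative)
f = 5.0                         # wave frequency, Hz (illustrative)
x = 100.0                       # position along the fiber, m
t = np.linspace(0.0, 1.0, 1001)
phase = 2 * np.pi * f * (t - x / c)

strain = -(2 * np.pi * f / c) * np.cos(phase)   # du/dx
velocity_true = 2 * np.pi * f * np.cos(phase)   # du/dt

velocity_est = strain_to_velocity_plane_wave(strain, c)
max_err = np.max(np.abs(velocity_est - velocity_true))
```

The recipe works perfectly here only because the synthetic wave satisfies the plane-wave assumption exactly. With several overlapping waves, or an unknown or frequency-dependent speed, a single scale factor no longer suffices, which is the gap the learned conversion is meant to fill.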

Using AI to Translate Between Two Ways of Hearing

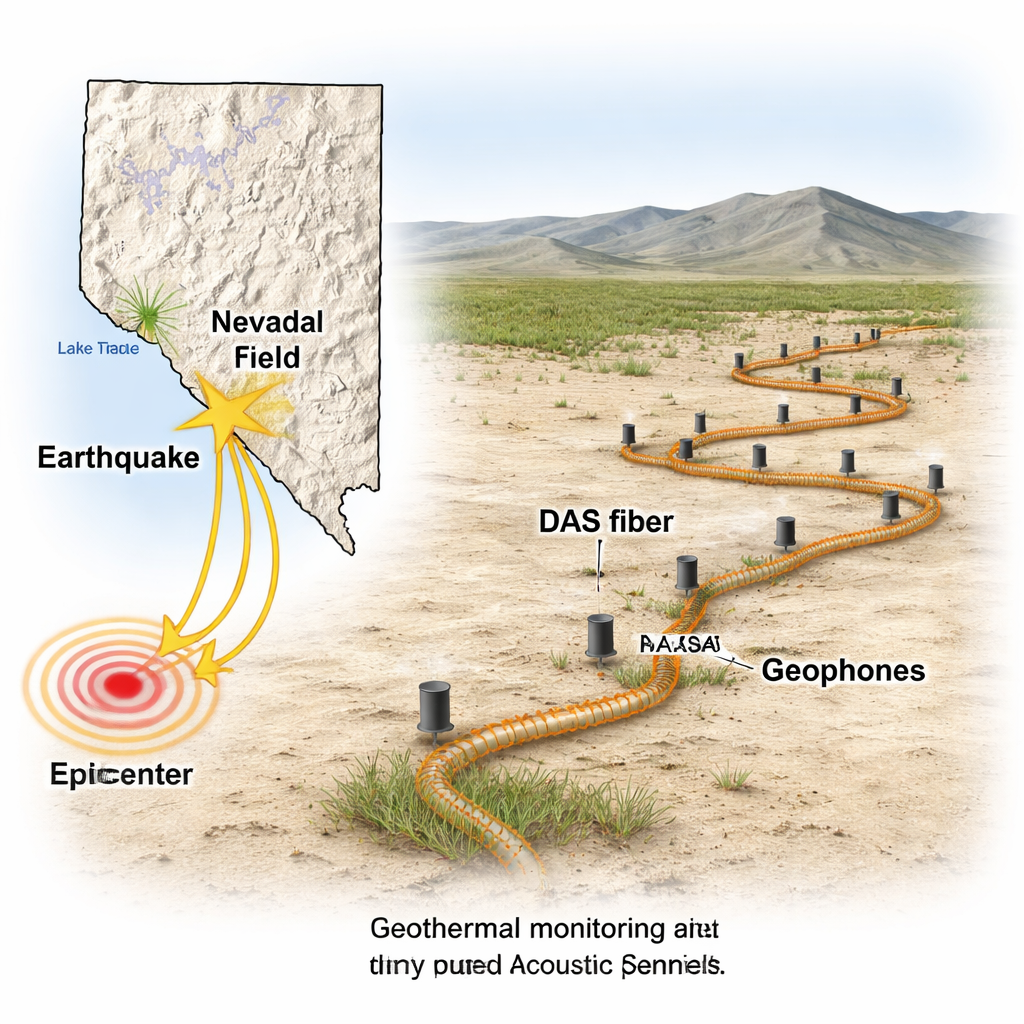

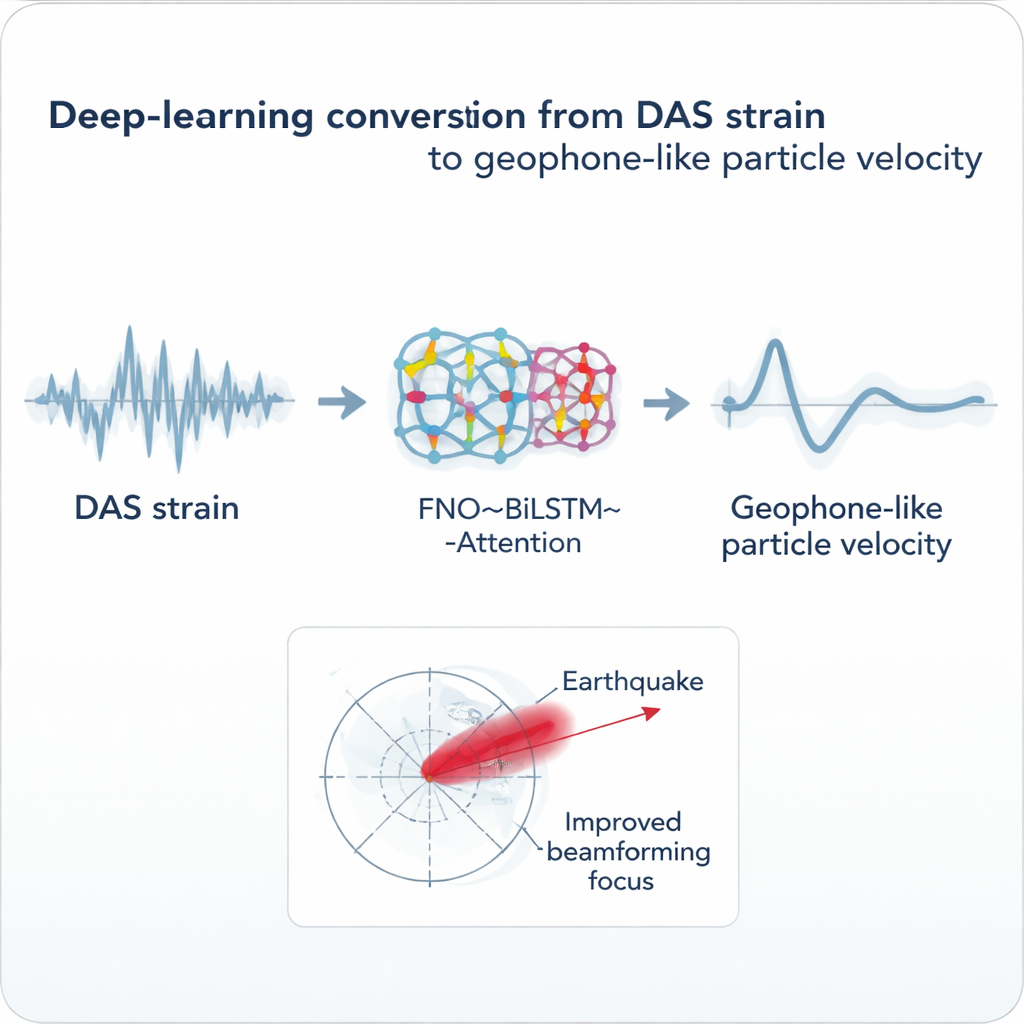

The researchers developed a deep-learning model that acts as a translator between DAS strain and geophone particle velocity. They trained it on data from the PoroTomo experiment at the Brady Hot Springs geothermal field in Nevada, where an 8.4-kilometer zigzag fiber-optic cable was deployed alongside a grid of 238 three-component geophones. For 112 locations where geophones lay very close to the cable, they paired each geophone’s horizontal motion trace with the ten nearest DAS channels. The model, which combines a Fourier Neural Operator (to capture spatial patterns along the cable), a bidirectional recurrent network (to understand time evolution), and an attention mechanism (to focus on the most informative parts of each signal), learned to predict what the geophone would have recorded based solely on the DAS strain input.
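The pairing step described above can be sketched as follows. This is a hedged illustration on synthetic coordinates, not the authors' preprocessing code: the function names, the straight-cable layout, and the array shapes are all assumptions made for the example.

```python
import numpy as np

def nearest_channels(geophone_xy, channel_xy, k=10):
    """Return indices of the k DAS channels closest to each geophone.

    geophone_xy: (n_geophones, 2) and channel_xy: (n_channels, 2), in meters.
    """
    diff = geophone_xy[:, None, :] - channel_xy[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)          # (n_geophones, n_channels)
    return np.argsort(dist, axis=1)[:, :k]

def build_pairs(das, geophone, idx):
    """Assemble (input, target) training pairs.

    das: (n_channels, n_samples), geophone: (n_geophones, n_samples).
    Returns inputs of shape (n_geophones, k, n_samples) and the targets.
    """
    return das[idx], geophone

# Hypothetical layout: a straight cable with 1 m channel spacing.
rng = np.random.default_rng(0)
channel_xy = np.column_stack([np.arange(200.0), np.zeros(200)])
geophone_xy = np.array([[50.2, 1.0], [120.7, -2.0]])

das = rng.standard_normal((200, 500))   # stand-in for DAS strain traces
geo = rng.standard_normal((2, 500))     # stand-in for geophone traces

idx = nearest_channels(geophone_xy, channel_xy, k=10)
inputs, targets = build_pairs(das, geo, idx)
```

Each training example is then a small block of ten neighboring strain traces paired with one geophone trace, which is the kind of input-output pair a model like the one described would learn from.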

How Well the AI Translator Works

To judge performance, the authors compared the AI-generated waveforms with the actual geophone data using standard measures of error and similarity. They also checked how consistently the predictions matched across many examples. The hybrid architecture clearly outperformed a simpler design that dropped the Fourier component: errors were roughly twenty times smaller on average, and the similarity to true geophone traces was consistently very high. In the frequency domain, where scientists analyze which pitches of vibration are present, the AI-produced particle velocities closely matched the geophone spectra across the full range of interest for both P-waves and S-waves. By contrast, a conventional physics-based conversion method agreed well only at low frequencies, missing important details at higher frequencies where DAS behavior is more complicated.
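The article does not name the exact metrics, so the sketch below uses two common choices as assumptions: mean squared error for the error measure and the Pearson correlation coefficient for similarity, plus an amplitude spectrum for the frequency-domain comparison. The traces are synthetic and the function names are hypothetical.

```python
import numpy as np

def mse(pred, true):
    """Mean squared error between a predicted and a reference trace."""
    return float(np.mean((pred - true) ** 2))

def pearson(pred, true):
    """Pearson correlation coefficient (1.0 means identical shape)."""
    return float(np.corrcoef(pred, true)[0, 1])

def amplitude_spectrum(trace, dt):
    """One-sided amplitude spectrum of a real-valued trace sampled every dt seconds."""
    freqs = np.fft.rfftfreq(len(trace), d=dt)
    return freqs, np.abs(np.fft.rfft(trace))

# Synthetic stand-ins: a 10 Hz "geophone" trace and a slightly noisy "prediction".
rng = np.random.default_rng(0)
dt = 0.001
t = np.arange(1000) * dt
true_trace = np.sin(2 * np.pi * 10.0 * t)
pred_trace = true_trace + 0.01 * rng.standard_normal(t.size)

err = mse(pred_trace, true_trace)
cc = pearson(pred_trace, true_trace)
freqs, amp = amplitude_spectrum(pred_trace, dt)
peak_freq = freqs[np.argmax(amp)]
```

Time-domain scores like these can look good even when high-frequency content is wrong, which is why comparing spectra, as the authors did, is a useful complementary check.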

Putting the Converted Data to Work

The real test is whether the converted signals are useful for downstream tasks. The team applied a beamforming technique, known as MUSIC, that uses an array of sensors to estimate the direction and apparent speed of incoming seismic waves. Previous work at the same site showed that raw DAS strain rate was too incoherent for reliable beamforming: the waves looked smeared, and the results were poor compared with the nodal geophone array. The new AI-based conversion tells a different story. When the authors ran beamforming on the AI-predicted particle velocity along the cable, the method recovered a sharp estimate of the earthquake’s backazimuth and wave speed, matching or even slightly improving upon the performance of the geophones and surpassing the physics-based DAS conversion. The improvement arises from both the higher spatial density of DAS channels and the AI model’s ability to suppress incoherent noise while preserving the coherent motion that matters for seismic analysis.
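For readers curious how MUSIC beamforming works, here is a minimal narrowband sketch on synthetic data. It is not the authors' implementation: the random sensor layout, the 10 Hz frequency, the noise level, and the search grid are all illustrative assumptions. The idea is to split the sensor covariance matrix into signal and noise subspaces, then scan candidate directions and slownesses (inverse apparent speeds) for the plane wave that is most nearly orthogonal to the noise subspace.

```python
import numpy as np

def steering(positions, freq, slowness, baz_deg):
    """Plane-wave steering vector for sensors at (east, north) positions in meters.

    The wave arrives from backazimuth baz (clockwise from north),
    so it propagates toward baz + 180 degrees.
    """
    baz = np.deg2rad(baz_deg)
    s_vec = -slowness * np.array([np.sin(baz), np.cos(baz)])
    delays = positions @ s_vec                    # arrival delay per sensor, s
    return np.exp(-2j * np.pi * freq * delays)

def music_spectrum(snapshots, positions, freq, slownesses, bazs, n_sources=1):
    """MUSIC pseudo-spectrum over a (slowness, backazimuth) grid.

    snapshots: (n_sensors, n_snapshots) complex Fourier coefficients at freq.
    """
    cov = snapshots @ snapshots.conj().T / snapshots.shape[1]
    _, eigvecs = np.linalg.eigh(cov)              # eigenvalues in ascending order
    noise = eigvecs[:, :-n_sources]               # noise-subspace eigenvectors
    power = np.empty((len(slownesses), len(bazs)))
    for i, s in enumerate(slownesses):
        for j, b in enumerate(bazs):
            proj = noise.conj().T @ steering(positions, freq, s, b)
            power[i, j] = 1.0 / np.vdot(proj, proj).real
    return power

# Synthetic array and a single incoming wave (all values are assumptions).
rng = np.random.default_rng(1)
positions = rng.uniform(-100.0, 100.0, size=(30, 2))
freq, true_slowness, true_baz = 10.0, 0.5e-3, 60.0   # Hz, s/m, degrees
n_snap = 50
amps = rng.standard_normal(n_snap) + 1j * rng.standard_normal(n_snap)
noise = 0.05 * (rng.standard_normal((30, n_snap))
                + 1j * rng.standard_normal((30, n_snap)))
snapshots = np.outer(steering(positions, freq, true_slowness, true_baz), amps) + noise

slownesses = np.linspace(0.2e-3, 1.0e-3, 17)
bazs = np.arange(0.0, 360.0, 5.0)
power = music_spectrum(snapshots, positions, freq, slownesses, bazs)
i, j = np.unravel_index(np.argmax(power), power.shape)
est_slowness, est_baz = slownesses[i], bazs[j]
```

Because MUSIC depends on the wavefield being coherent from sensor to sensor, incoherent noise flattens the peak in `power`. That is consistent with the article's point that raw strain rate gave poor results while the converted, cleaner signals gave sharp ones.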

What This Means for Future Earth Monitoring

For non-specialists, the main takeaway is that the authors have built a smart translator that lets dense, flexible fiber-optic cables talk the same language as conventional seismic instruments. Their AI model does not replace physics, but it learns a site-specific mapping that captures messy real-world factors like cable-ground coupling and local noise. While each new installation will still need its own short calibration period with a few co-located geophones, the approach opens the possibility of turning existing and future fiber networks into powerful, high-resolution tools for earthquake monitoring, hazard assessment, and subsurface imaging. Over time, as the method is tested on more sites and more events, such AI-assisted conversions could help bring detailed seismological analysis to places where traditional sensor deployments are impractical or too expensive.

Citation: Al-Qadasi, B., Cui, Y., Waheed, U.B. et al. A novel deep-learning model to convert DAS strain to geophone particle velocity: application to PoroTomo data from the Brady geothermal field. Sci Rep 16, 7001 (2026). https://doi.org/10.1038/s41598-026-37888-y

Keywords: distributed acoustic sensing, seismology, deep learning, earthquake monitoring, fiber-optic sensors