Clear Sky Science

The enhanced EME-YOLOv11 for real-time polarizer defect detection

Why tiny flaws in screens really matter

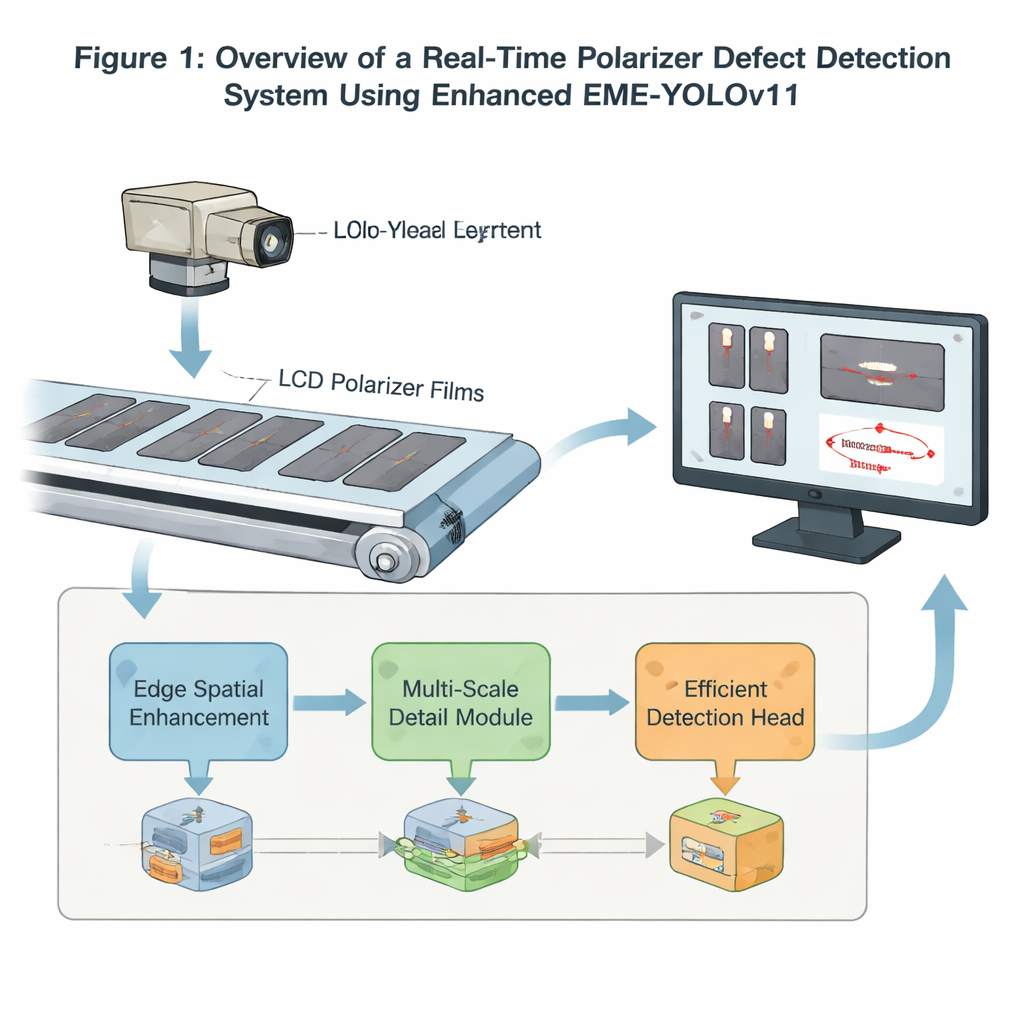

Every smartphone, laptop, and TV screen relies on a thin optical film called a polarizer to control how light passes through the display. If that film has even small specks, stains, or scratches, image quality can suffer and entire panels may be thrown away. Today, most factories still depend heavily on human inspectors or older image-processing techniques to spot these flaws, an approach that is slow, tiring, and not always reliable. This study introduces a faster artificial-intelligence system, called EME‑YOLOv11, that is designed to catch these defects in real time as panels move down the production line.

From human eyeballs to machine eyes

In the liquid crystal display (LCD) industry, the polarizer is a key component that strongly affects brightness, contrast, and viewing angle. Common defects—such as bubbles, stains, foreign particles, or tool marks—may be only a fraction of a millimeter wide, yet they can downgrade a screen or make it unusable. Traditional inspection relied on workers visually scanning panels, but people struggle to notice faint or tiny flaws for long periods, and their judgments vary with experience and fatigue. Early machine-vision systems improved on this by using cameras and handcrafted rules to measure shapes, textures, or gray levels. However, these rule-based methods break down when defect shapes change, contrast is low, or backgrounds are complex, all of which are common with polarizer films.

Letting neural networks learn what matters

Deep learning, and in particular convolutional neural networks, has transformed how computers understand images by learning useful features directly from data instead of relying on manually designed rules. Within this field, the YOLO ("You Only Look Once") family of models has become a workhorse for real-time object detection, balancing speed and accuracy in a single end-to-end framework. The authors build on the recent YOLOv11 model, which is already tuned for fast detection, and tailor it specifically for polarizer inspection. Their goal is to boost the model’s sensitivity to subtle defects, keep it light enough for industrial deployment, and still process images quickly enough to keep up with moving production lines.

Sharpening edges and zooming in on fine detail

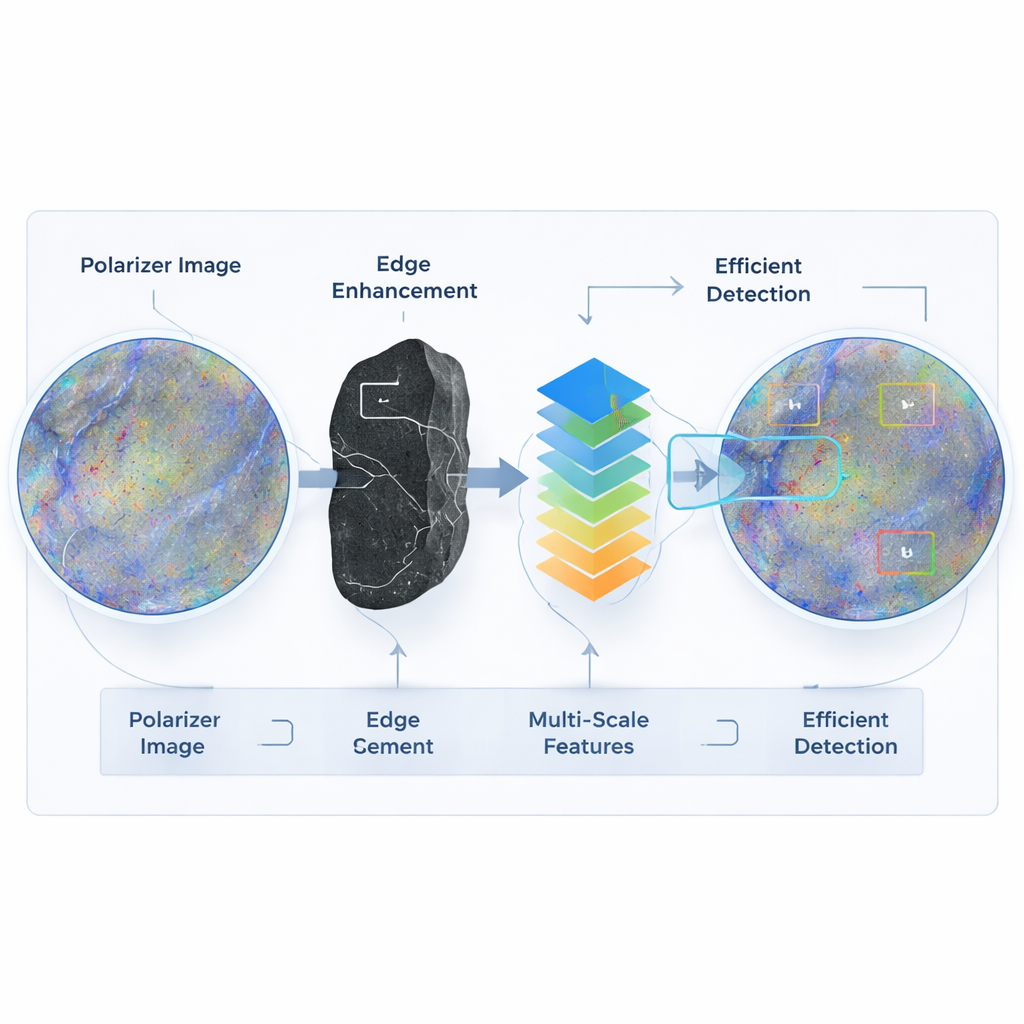

The first key improvement focuses on how the network sees edges and spatial patterns. The authors replace a standard block in YOLOv11’s backbone with a new module that runs two branches in parallel: one branch uses a Sobel operator, an efficient, classic edge filter, to emphasize sharp changes in intensity, and the other uses regular convolutions to preserve broader textures and structures. By fusing these two views and feeding them forward, the system becomes better at highlighting the faint boundaries of stains and marks that might otherwise blend into the background. A second module changes how the network handles detail at different scales. Instead of pooling, which can wash out subtle variations, the authors use dilated convolutions with several carefully chosen spacings. Because a dilated kernel spreads the same weights over a wider window, the model can capture both tiny, local features and broader context without a large increase in the number of parameters, helping it recognize small, irregular defects as well as larger ones.
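To make the two ideas in this section concrete, here is a minimal pure-Python sketch of a Sobel edge filter and of a dilated 3×3 kernel. It is an illustration under stated assumptions, not the authors' module: the function names (`correlate3x3`, `sobel_magnitude`, `receptive_field`), the toy image, and the dilation values 1, 2, and 3 are all hypothetical, and the learned convolution branch and the fusion step are left out.

```python
# Illustrative sketch only; names and values are hypothetical, not from the paper.

SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]   # responds to left-to-right intensity changes
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]    # responds to top-to-bottom intensity changes


def correlate3x3(image, kernel, dilation=1):
    """Slide a 3x3 kernel over a 2D list of numbers, with no padding.

    With dilation d, the kernel taps sit d pixels apart, so the same
    nine weights cover a (2*d + 1) x (2*d + 1) window.
    """
    height, width = len(image), len(image[0])
    span = 2 * dilation  # distance between the first and last tap
    out = []
    for r in range(height - span):
        row = []
        for c in range(width - span):
            total = 0
            for i in range(3):
                for j in range(3):
                    total += kernel[i][j] * image[r + i * dilation][c + j * dilation]
            row.append(total)
        out.append(row)
    return out


def sobel_magnitude(image):
    """Gradient magnitude from the horizontal and vertical Sobel responses."""
    gx = correlate3x3(image, SOBEL_X)
    gy = correlate3x3(image, SOBEL_Y)
    return [[(x * x + y * y) ** 0.5 for x, y in zip(row_x, row_y)]
            for row_x, row_y in zip(gx, gy)]


def receptive_field(dilation, kernel_size=3):
    """Width of the window one dilated kernel covers along each axis."""
    return dilation * (kernel_size - 1) + 1


# A faint vertical boundary: gray level 10 on the left, 12 on the right.
panel = [[10, 10, 10, 12, 12, 12] for _ in range(5)]
print(sobel_magnitude(panel)[0])                   # [0.0, 8.0, 8.0, 0.0]
print([receptive_field(d) for d in (1, 2, 3)])     # [3, 5, 7]
```

A two-level step in gray value produces a response of 8 at the boundary and 0 in the flat regions, which is the sense in which the Sobel branch highlights faint edges. The second printout shows why dilation is cheap: the window grows from 3 to 7 pixels wide while the kernel still holds only nine weights.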

Faster decisions with a leaner detection head

At the output end of the network, a redesigned “head” converts feature maps into concrete predictions about where defects are and what type they are. The authors reorganize this part into three resolution levels—fine for small flaws, medium for typical defects, and coarse for larger ones—and swap standard convolutions for grouped convolutions, which split computations into smaller, parallel chunks. The head also separates classification (what kind of defect) from box refinement (exact location). This combination reduces the number of calculations and model size while still improving accuracy. In tests on a real factory dataset of nearly 4,000 polarizer images, the enhanced EME‑YOLOv11 outperformed not only the original YOLOv11 but also other popular one‑stage and transformer-based detectors, achieving higher precision and recall with fewer floating‑point operations and fewer parameters.
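The saving from grouped convolutions can be checked with simple arithmetic. The sketch below counts weights and multiply-accumulate operations for one convolution layer. The helper names (`conv_params`, `conv_macs`), the 128-channel width, the group count of 4, and the 80×80 feature map are hypothetical values chosen for illustration; the article does not give the actual layer sizes.

```python
# Illustrative arithmetic only; layer sizes are assumptions, not from the paper.

def conv_params(in_channels, out_channels, kernel_size, groups=1):
    """Number of weights in a 2D convolution layer (bias terms ignored).

    With `groups` > 1, each output channel only sees in_channels / groups
    input channels, so the weight count shrinks by that factor.
    """
    if in_channels % groups or out_channels % groups:
        raise ValueError("channel counts must be divisible by groups")
    return out_channels * (in_channels // groups) * kernel_size * kernel_size


def conv_macs(in_channels, out_channels, kernel_size, out_h, out_w, groups=1):
    """Multiply-accumulate operations: every weight is used once per output pixel."""
    return conv_params(in_channels, out_channels, kernel_size, groups) * out_h * out_w


standard = conv_params(128, 128, 3)            # ordinary 3x3 convolution
grouped = conv_params(128, 128, 3, groups=4)   # same layer split into 4 groups

print(standard)             # 147456
print(grouped)              # 36864
print(standard // grouped)  # 4
print(conv_macs(128, 128, 3, 80, 80))            # 943718400
print(conv_macs(128, 128, 3, 80, 80, groups=4))  # 235929600
```

Under these assumptions, splitting the layer into four groups cuts both the weights and the per-image arithmetic by a factor of four. The trade-off is that channels in different groups no longer mix within that layer, which is one reason accuracy gains from such a change have to be confirmed experimentally, as the authors do on their factory dataset.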

What this means for everyday screens

Put simply, EME‑YOLOv11 is a more accurate and more efficient set of “machine eyes” for polarizer inspection. By sharpening edges, preserving fine details, and streamlining the decision-making layers, it catches more true defects while remaining fast enough for real factory use. Although the current tests were run on a high-end graphics card, the compact design points toward future deployment on embedded devices installed directly on production lines. If such systems are widely adopted, manufacturers could waste fewer panels, stabilize quality, and cut costs, all of which would ultimately improve the reliability and appearance of the screens people use every day.

Citation: Liu, R., Jing, C., Zhang, T. et al. The enhanced EME-YOLOv11 for real-time polarizer defect detection. Sci Rep 16, 7414 (2026). https://doi.org/10.1038/s41598-026-37884-2

Keywords: polarizer defects, industrial inspection, deep learning, YOLO object detection, machine vision