Clear Sky Science

A real-time industrial safety automation using YOLO architectures leveraging diverse chromatic domains

Smarter Eyes on the Factory Floor

Hidden flaws in metal welds can turn sturdy machines, bridges, or pipelines into silent hazards. Traditionally, trained inspectors peer at glowing seams of metal, trying to spot tiny cracks or gaps before they become accidents. This paper explores how artificial intelligence can take on much of that watchful work, using fast image-recognition software to examine welds in real time, even as parts roll past on a conveyor belt. By comparing several versions of a popular AI detector called YOLO and testing how different ways of representing color affect its vision, the researchers show a path toward safer, more efficient factories.

Why Spotting Bad Welds Is So Hard

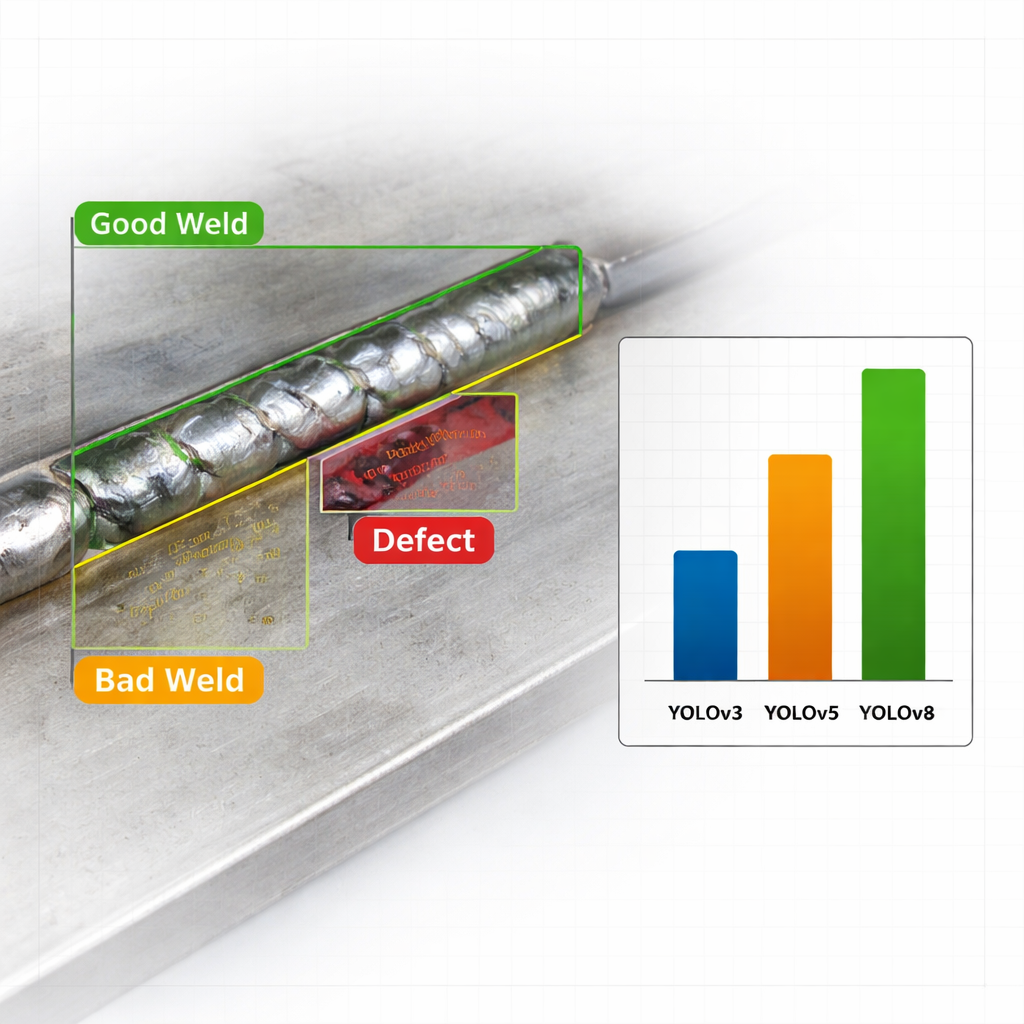

On a busy production line, welds vary in shape, shine, and background clutter. A single image might contain multiple welds and defects, which makes simple image classification ("good" or "bad" overall) far too crude. Instead, the system must both find and label specific problem areas along a seam. The authors focus on three practical categories—good weld, bad weld, and outright defect—because each calls for a different response, from accepting a part to immediate rework. They use a publicly available dataset of more than six thousand annotated weld images, ensuring that the AI is trained and tested on a realistic range of surfaces, lighting conditions, and flaw types.
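
A detector returns a list of labeled boxes rather than one verdict per image, so some rule is needed to turn those boxes into a response for each part. The paper's exact policy is not described here. The following is a minimal sketch that assumes hypothetical class names (`good_weld`, `bad_weld`, `defect`), a hypothetical confidence cutoff of 0.25, and an invented mapping in which the most severe confident label decides the action.

```python
# Hypothetical class names, ordered from least to most severe.
SEVERITY = {"good_weld": 0, "bad_weld": 1, "defect": 2}
# Hypothetical responses; a real line would define these with its quality engineers.
ACTION = {"good_weld": "accept", "bad_weld": "hold for review", "defect": "send to rework"}


def part_decision(detections, min_conf=0.25):
    """Turn one image's detections into a single action for the part.

    detections: list of (label, confidence, (x1, y1, x2, y2)) tuples.
    Returns (action, flagged), where flagged lists the kept non-good regions.
    """
    kept = [d for d in detections if d[1] >= min_conf]  # drop low-confidence boxes
    if not kept:
        return "no weld detected", []
    worst = max(kept, key=lambda d: SEVERITY[d[0]])[0]  # most severe label wins
    flagged = [d for d in kept if d[0] != "good_weld"]
    return ACTION[worst], flagged
```

For an image holding one good seam and one defect, `part_decision([("good_weld", 0.91, (10, 40, 120, 80)), ("defect", 0.64, (130, 42, 170, 78))])` returns `("send to rework", [("defect", 0.64, (130, 42, 170, 78))])`. Keeping the flagged boxes, not just the verdict, is what a whole-image classifier cannot provide: it tells an inspector where along the seam to look.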

Teaching Machines to Look Once and Decide

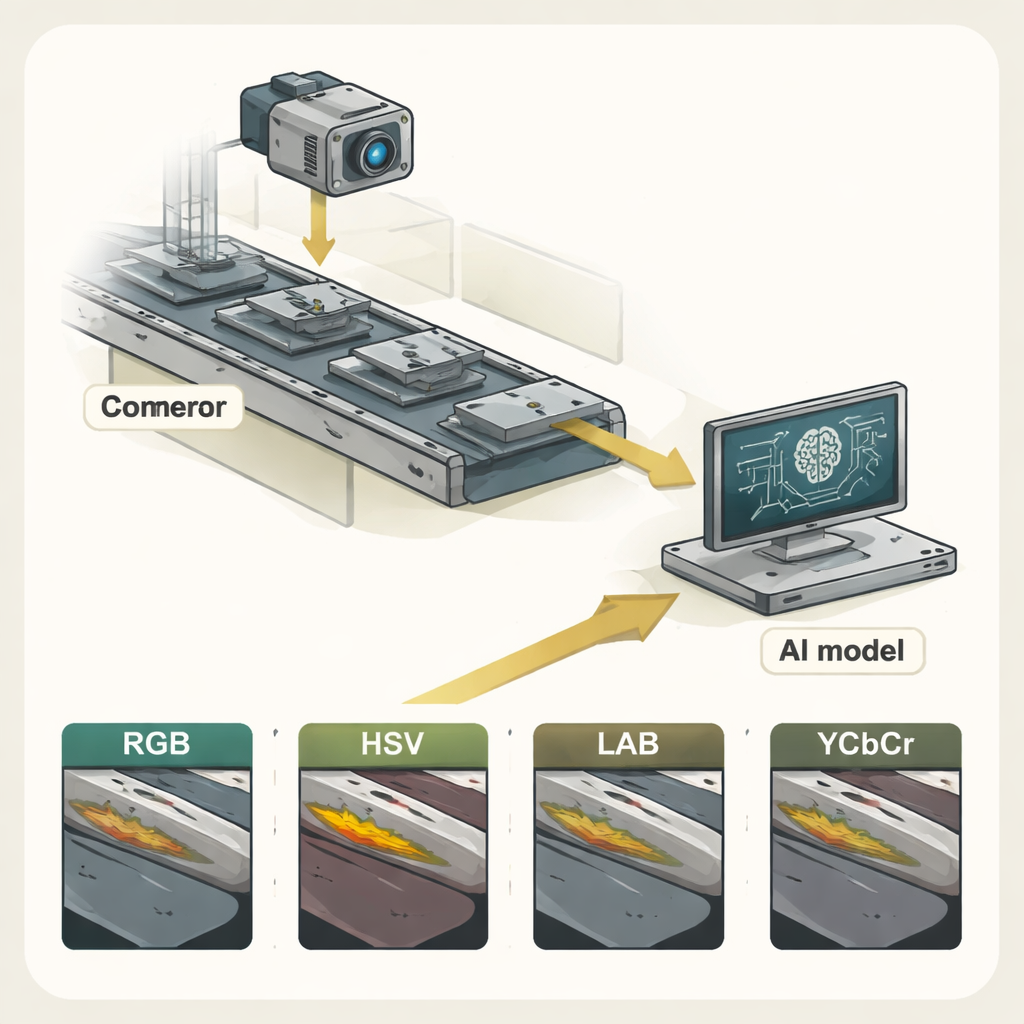

The study centers on the YOLO ("You Only Look Once") family of object-detection models, known for scanning an image in a single pass and drawing boxes around what they find. The researchers compare three generations: YOLOv3, YOLOv5, and the newest of the three, YOLOv8. Each successive version refines the network design and training recipe to improve speed and accuracy. Because lighting on real factory floors varies widely, the team also represents every weld image in four color spaces (RGB, the familiar red–green–blue, plus HSV, LAB, and YCbCr) and trains a separate model on each. This multi-color-space approach lets them ask a pointed question: does changing how color is encoded help the AI see defects more clearly?
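
The paper's exact conversion routine is not described here. A minimal per-pixel sketch using standard textbook formulas shows what "the same pixel in four color spaces" means. It assumes sRGB input with a D65 white point for LAB, full-range BT.601 (the JPEG convention) for YCbCr, and Python's built-in `colorsys` for HSV. Real pipelines would convert whole images with a library such as OpenCV, whose 8-bit outputs are scaled differently (for example, hue stored as 0 to 179).

```python
import colorsys


def _srgb_to_linear(c):
    """Undo the sRGB gamma curve for one 8-bit channel (0-255 -> 0.0-1.0)."""
    c = c / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def rgb_to_hsv(r, g, b):
    """Hue, saturation, value, each in [0, 1], via the standard library."""
    return colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)


def rgb_to_lab(r, g, b):
    """CIE L*a*b*, assuming sRGB input and a D65 white point."""
    rl, gl, bl = (_srgb_to_linear(v) for v in (r, g, b))
    # Linear sRGB -> CIE XYZ (D65 primaries)
    x = 0.4124564 * rl + 0.3575761 * gl + 0.1804375 * bl
    y = 0.2126729 * rl + 0.7151522 * gl + 0.0721750 * bl
    z = 0.0193339 * rl + 0.1191920 * gl + 0.9503041 * bl

    def f(t):
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    # Normalize by the D65 reference white, then apply the L*a*b* transfer curve
    fx, fy, fz = f(x / 0.95047), f(y / 1.0), f(z / 1.08883)
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)


def rgb_to_ycbcr(r, g, b):
    """Full-range BT.601 YCbCr as used by JPEG; chroma centered on 128."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def to_color_spaces(pixel):
    """Express one 8-bit RGB pixel in the four color spaces compared in the study."""
    r, g, b = pixel
    return {
        "RGB": (r, g, b),
        "HSV": rgb_to_hsv(r, g, b),
        "LAB": rgb_to_lab(r, g, b),
        "YCbCr": rgb_to_ycbcr(r, g, b),
    }
```

For pure red, `to_color_spaces((255, 0, 0))["HSV"]` gives `(0.0, 1.0, 1.0)`, and the LAB lightness comes out near 53. HSV, LAB, and YCbCr all split brightness from color, which is why they are natural candidates when lighting varies.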

Color, Speed, and Accuracy in Action

Across all experiments, one pattern is clear: the newest model, YOLOv8, outperforms its predecessors. When trained on standard RGB images, YOLOv8 achieves a mean average precision of 0.592 at an overlap threshold of 0.5 (mAP@0.5), noticeably higher than YOLOv3 and YOLOv5 under the same conditions. Under this metric, a predicted box counts as correct only if it carries the right label and its intersection over union (IoU) with a hand-labeled region is at least 0.5. In practical terms, a higher score means the model is better at both finding and correctly labeling weld regions. The model is also extremely fast, processing roughly 138 images per second on a modern graphics card, or about 7 milliseconds per image, far above the 30 frames per second (about 33 milliseconds per frame) often used as a real-time benchmark. Among the color spaces, RGB consistently gives the strongest results for all three YOLO versions, while HSV, LAB, and YCbCr trail behind. Those alternative encodings separate brightness from color information and can make certain visual features stand out, but in these experiments that did not translate into better detection than plain RGB.
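
The mAP@0.5 figure combines two ideas. First, a prediction counts as a hit only if its IoU with a not-yet-matched labeled box of the same class is at least 0.5. Second, average precision summarizes the precision-recall trade-off as detections are ranked by confidence, and mAP averages that over classes. A minimal pure-Python sketch follows, assuming boxes given as corner coordinates (x1, y1, x2, y2). It uses VOC-style all-point interpolation. Other evaluators, including the COCO toolkit, sample the curve at 101 recall points instead, so numbers from this sketch need not match the paper's toolkit exactly.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds, gts, thr=0.5):
    """AP for one class.

    preds: list of (image_id, score, box); gts: dict image_id -> list of boxes.
    """
    n_gt = sum(len(v) for v in gts.values())
    if n_gt == 0:
        return 0.0
    matched = {k: [False] * len(v) for k, v in gts.items()}
    tps = []
    # Greedy matching: highest-confidence predictions claim ground truth first
    for img, _score, box in sorted(preds, key=lambda p: p[1], reverse=True):
        best, best_j = 0.0, -1
        for j, g in enumerate(gts.get(img, [])):
            o = iou(box, g)
            if o > best:
                best, best_j = o, j
        if best_j >= 0 and best >= thr and not matched[img][best_j]:
            matched[img][best_j] = True
            tps.append(1)  # true positive
        else:
            tps.append(0)  # false positive (poor overlap or duplicate)
    recalls, precisions, tp_cum = [], [], 0
    for i, t in enumerate(tps, start=1):
        tp_cum += t
        recalls.append(tp_cum / n_gt)
        precisions.append(tp_cum / i)
    # All-point interpolation: make precision non-increasing from right to left
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_r = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_r) * p  # area under the precision-recall curve
        prev_r = r
    return ap


def mean_ap(per_class, thr=0.5):
    """per_class: dict class_name -> (preds, gts). Returns mAP at the IoU threshold."""
    aps = [average_precision(p, g, thr) for p, g in per_class.values()]
    return sum(aps) / len(aps)
```

A box shifted halfway off its target, such as `iou((0, 0, 10, 10), (5, 0, 15, 10))`, scores only 1/3 and would count as a miss at the 0.5 threshold, which is why mAP@0.5 rewards both correct labels and tight localization.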

From Laboratory Tests to the Factory Edge

To demonstrate real-world feasibility, the authors deploy a streamlined YOLOv8 model on a Raspberry Pi-based edge device linked to a conveyor belt and camera. As welded parts move beneath the lens, the system captures frames, cleans them up through basic preprocessing, and runs detection locally, classifying each weld as good, bad, or defective. Results are logged to a database and displayed on a dashboard for inspectors, who can see live defect markers and long-term quality trends. On top of that, the framework can generate recommendations, such as suggesting tweaks to welding speed or voltage, or flagging when equipment maintenance might be needed based on recurring defects.
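
The edge loop described above (capture, preprocess, detect, log, surface trends) can be sketched in a few lines. This is a hypothetical illustration, not the authors' code. The detector is passed in as a plain function; on the device it might wrap an Ultralytics YOLO model's prediction call. Python's built-in `sqlite3` stands in for whatever database the system actually uses. The 33 ms budget comes from the 30 FPS real-time benchmark. The "too many recent defects" maintenance rule, with its window and threshold, is invented for illustration.

```python
import sqlite3
import time
from collections import Counter, deque

FRAME_BUDGET_MS = 1000 / 30  # 30 FPS real-time target, about 33 ms per frame


def init_db(path=":memory:"):
    """Open a SQLite database with one row per detection (hypothetical schema)."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS detections "
        "(ts REAL, frame_id INTEGER, label TEXT, confidence REAL, latency_ms REAL)"
    )
    return conn


def inspect_stream(frames, detect, conn, window=50, alert_ratio=0.2):
    """Run detect() on each frame, log results, and flag recurring defects.

    frames: iterable of images; detect: callable returning [(label, confidence), ...].
    Returns (slow_frames, alerts): the number of frames over the time budget, and
    the frame ids at which the recent defect share reached alert_ratio.
    """
    recent = deque(maxlen=window)  # labels of the most recent detections
    slow_frames, alerts = 0, []
    for frame_id, frame in enumerate(frames):
        start = time.perf_counter()
        dets = detect(frame)  # preprocessing and model inference happen in here
        latency_ms = (time.perf_counter() - start) * 1000
        if latency_ms > FRAME_BUDGET_MS:
            slow_frames += 1
        now = time.time()
        conn.executemany(
            "INSERT INTO detections VALUES (?, ?, ?, ?, ?)",
            [(now, frame_id, label, conf, latency_ms) for label, conf in dets],
        )
        recent.extend(label for label, _ in dets)
        # Hypothetical maintenance rule: too large a share of recent detections are defects
        if len(recent) == window and Counter(recent)["defect"] / window >= alert_ratio:
            alerts.append(frame_id)
            recent.clear()  # start a fresh window after each alert
    conn.commit()
    return slow_frames, alerts
```

Logging every detection with its timestamp and latency lets a dashboard query both live defect markers and long-term trends from the same table, while the latency column shows whether the device is keeping within its real-time budget.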

What This Means for Safer Manufacturing

For a layperson, the key outcome is straightforward: this work shows that a lightweight, modern AI model can flag risky welds quickly in realistic industrial conditions, especially when it uses ordinary RGB camera images. YOLOv8 proves accurate enough to pick out clearly bad welds, fast enough on a graphics card to keep up with high-speed production lines, and compact enough to run on modest edge hardware close to the machines. The authors argue that this kind of automated, color-aware inspection can reduce human error, catch problems earlier, and support safer, more consistent manufacturing. Future refinements, such as richer training data and better handling of subtler defect types, could make these digital inspectors an everyday part of industrial safety.

Citation: Pati, N., Sharma, A., Gourisaria, M.K. et al. A real-time industrial safety automation using YOLO architectures leveraging diverse chromatic domains. Sci Rep 16, 7253 (2026). https://doi.org/10.1038/s41598-026-37869-1

Keywords: welding defect detection, industrial safety automation, YOLOv8, real-time computer vision, edge AI