Clear Sky Science

Deep reinforcement learning-driven multi-objective optimization and its applications on lighting infrastructure operation and maintenance strategy

Smarter Lights for Safer Tunnels

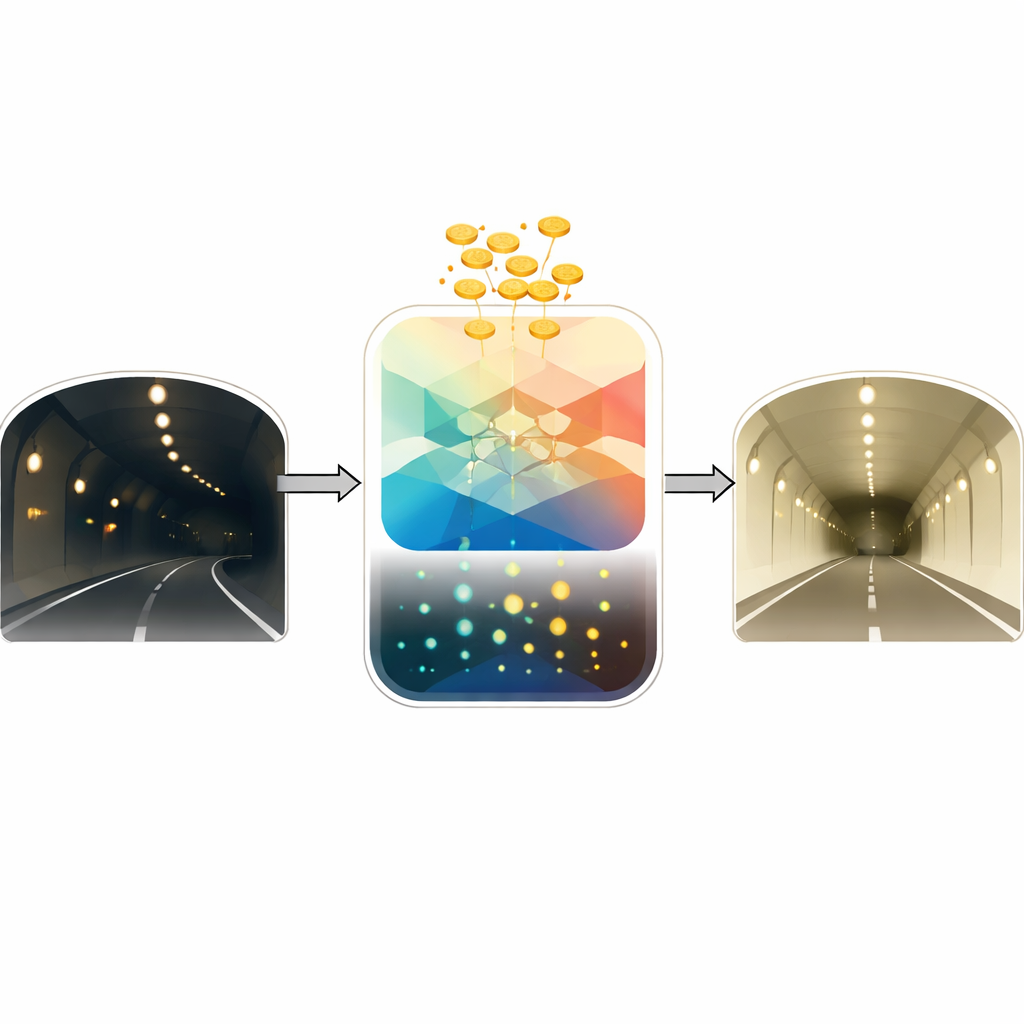

Driving through a long highway tunnel, we take it for granted that the lights will stay bright and steady. Yet keeping thousands of fixtures working safely, without wasting money on unnecessary repairs, is a complex juggling act. This paper presents a new way to manage tunnel lighting that uses artificial intelligence to continuously balance two competing goals: keeping the lights reliable for drivers and keeping the overall costs under control.

Why Tunnel Lights Are Hard to Manage

Tunnel lighting is critical for traffic safety. When lamps age or circuits fail, the light level can suddenly drop, making it harder for drivers to judge distance and speed and increasing the risk of crashes. Traditional maintenance relies on fixed schedules, simple thresholds, or single-goal rules such as “minimize cost” or “maximize lamp life.” These approaches do not cope well with real tunnels, where conditions change over time, thousands of fixtures age at different rates, and safety and cost often pull in opposite directions. The authors argue that what is needed is a method that can constantly learn from data and adapt decisions as the system changes.

Teaching a Digital Agent to Maintain the Lights

The researchers build a digital “agent” that learns how and when to repair, replace, or adjust tunnel lights by interacting with a simulated tunnel. This agent is based on deep reinforcement learning, a branch of AI where a system tries actions, observes the outcome, and gradually learns strategies that maximize a reward. In this case, the reward combines operating cost (energy use, labor, spare parts, and safety penalties) and system health (the probability that lamps continue to work reliably). The agent sees a detailed picture of the tunnel: the brightness of each fixture, whether it is failing, the surrounding light environment, and signs of degradation over time. At each step it chooses one action per lamp (do nothing, brighten, dim, repair, or replace) and receives feedback on how these choices affect both cost and reliability.
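To make the agent's feedback loop concrete, here is a minimal Python sketch of one decision step. Only the five actions and the ingredients of the cost (energy, labor, spare parts, safety penalties) come from the description above. The `Lamp` fields, the numbers in `ACTION_COST`, and the `energy_price` and `safety_penalty` defaults are illustrative assumptions, not values from the paper, and the sketch does not model how an action changes a lamp afterwards.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    """The five per-lamp actions named in the text."""
    NOTHING = 0
    BRIGHTEN = 1
    DIM = 2
    REPAIR = 3
    REPLACE = 4


# Hypothetical labor-plus-parts cost of each action, in arbitrary units.
ACTION_COST = {
    Action.NOTHING: 0.0,
    Action.BRIGHTEN: 0.0,
    Action.DIM: 0.0,
    Action.REPAIR: 5.0,
    Action.REPLACE: 20.0,
}


@dataclass
class Lamp:
    brightness: float   # fraction of rated output, 0.0 to 1.0 (assumed scale)
    reliability: float  # probability the lamp keeps working
    failed: bool        # True if the lamp is currently dark


def step_feedback(lamps, actions, energy_price=1.0, safety_penalty=50.0):
    """Return (total_cost, mean_reliability) for one decision step."""
    total_cost = 0.0
    for lamp, action in zip(lamps, actions):
        total_cost += ACTION_COST[action]       # labor and spare parts
        if lamp.failed:
            total_cost += safety_penalty        # a dark lamp is a safety risk
        else:
            total_cost += energy_price * lamp.brightness  # energy use
    mean_reliability = sum(lamp.reliability for lamp in lamps) / len(lamps)
    return total_cost, mean_reliability
```

The function deliberately returns cost and reliability as two separate signals instead of one blended score, so that different trade-offs between them can be explored later.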

Capturing How Lights Wear Out

To give the agent a realistic world to learn in, the authors first build a mathematical model of how tunnel lights degrade. They use a type of random-walk process (a Wiener process) that captures both the steady drift toward failure and the uncertainty from real-world conditions like temperature swings. Using four years of operating data from more than 2,000 LED fixtures in a 7‑kilometer tunnel in Yunnan Province, they compress many sensor readings into a single “health” indicator and show that this degradation model closely matches reality. It predicts how the chance of failure grows over time and how much remaining life a lamp likely has. This model feeds into the simulated environment where the learning agent practices maintenance strategies without risking real drivers.
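For readers who want to see what such a model computes, the sketch below uses the textbook form of a Wiener process with constant drift: degradation starts at zero, rises on average at rate `drift`, wobbles with volatility `sigma`, and the lamp is counted as failed the first time it crosses `threshold`. The closed-form reliability is the standard first-passage result for this process. The paper's exact model may differ (for example in how drift is defined), and all parameter values here are made up for illustration.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def reliability(t: float, drift: float, sigma: float, threshold: float) -> float:
    """Probability that degradation has NOT yet crossed `threshold` by time t.

    Assumes X(t) = drift * t + sigma * B(t) with X(0) = 0 and drift > 0,
    so the failure time follows an inverse Gaussian distribution.
    """
    if t <= 0.0:
        return 1.0
    spread = sigma * math.sqrt(t)
    stay_below = normal_cdf((threshold - drift * t) / spread)
    # Correction for paths that crossed the threshold and came back down.
    crossed_back = math.exp(2.0 * drift * threshold / sigma**2) * normal_cdf(
        -(threshold + drift * t) / spread
    )
    return stay_below - crossed_back


def mean_remaining_life(current: float, drift: float, threshold: float) -> float:
    """Expected time until the threshold is reached from the current level."""
    return max(threshold - current, 0.0) / drift


# Illustrative (hypothetical) parameters, time measured in months.
for month in (10, 50, 100):
    print(month, round(reliability(month, drift=0.02, sigma=0.1, threshold=1.0), 3))
```

With these made-up numbers the chance of survival falls steadily as the months pass, which is the qualitative behavior the article describes: failure risk grows over time, and the expected remaining life shrinks as the health indicator approaches the threshold.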

Balancing Cost and Reliability at the Same Time

A key contribution of the work is that it treats cost and reliability as equally important goals rather than collapsing them into a single number with one fixed weighting. The authors turn the multi-goal problem into many simpler subproblems, each representing a different trade-off between low cost and high reliability. For each subproblem, the learning agent finds a good strategy; taken together, these strategies form a “frontier” of best possible compromises. To speed this process up, the team lets neighboring subproblems share what they have learned whenever their trade-offs are similar, instead of training each one from scratch. They also reshape the reliability measure so that the learning process becomes especially sensitive when the system is near dangerous failure levels, nudging the agent to respond more aggressively before safety is threatened.
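The decomposition idea can be sketched in a few lines of Python. This is a generic illustration, not the authors' implementation: it assumes each subproblem is defined by a pair of weights, that "neighboring" means having the closest weights, and that cost and reliability have both been scaled to the range 0 to 1. All function names are hypothetical.

```python
def weight_vectors(n):
    """n evenly spaced (w_cost, w_reliability) pairs, each summing to 1."""
    return [(i / (n - 1), 1 - i / (n - 1)) for i in range(n)]


def neighbours(weights, index, k):
    """Indices of the k subproblems whose weights are closest to weights[index]."""
    others = [j for j in range(len(weights)) if j != index]
    return sorted(others, key=lambda j: abs(weights[j][0] - weights[index][0]))[:k]


def scalarize(weight, cost, reliability):
    """Score of one subproblem (higher is better); assumes inputs in [0, 1]."""
    w_cost, w_reliability = weight
    return -w_cost * cost + w_reliability * reliability


def pareto_front(points):
    """Keep the (cost, reliability) points that no other point beats outright."""
    front = []
    for cost, rel in points:
        dominated = any(
            (c <= cost and r >= rel) and (c < cost or r > rel)
            for c, r in points
        )
        if not dominated:
            front.append((cost, rel))
    return sorted(front)


weights = weight_vectors(5)
print(neighbours(weights, 2, 2))  # subproblems that could share what they learn
print(pareto_front([(0.2, 0.6), (0.5, 0.9), (0.6, 0.8), (0.3, 0.6)]))
```

In a full system, each subproblem would train its own policy against its `scalarize` score and could start from the parameters of the subproblems returned by `neighbours` instead of from scratch. `pareto_front` then picks out the strategies that form the frontier of best compromises.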

What the New Strategy Achieves

When tested against several common tunnel maintenance strategies (such as fixed‑interval inspections, brightness‑based triggers, and rules based on failure rates), the new approach delivers a better balance between safety and spending. It cuts overall maintenance and operating costs by nearly 30 percent while keeping reliability high and preventing the learning agent from becoming either too cautious or too reckless. The parameter‑sharing scheme also makes training more efficient, reducing computation time and improving the coverage of possible cost–reliability trade-offs. For a layperson, the takeaway is that this method uses data and adaptive learning to decide when and where to intervene in a tunnel, so that lights stay safe for drivers while taxpayers or operators pay less over the system’s lifetime.

Citation: Wang, Z., Tang, J., Wei, P. et al. Deep reinforcement learning-driven multi-objective optimization and its applications on lighting infrastructure operation and maintenance strategy. Sci Rep 16, 8989 (2026). https://doi.org/10.1038/s41598-026-37811-5

Keywords: tunnel lighting, predictive maintenance, reinforcement learning, infrastructure reliability, multi-objective optimization