Clear Sky Science

A comparative analysis of the performance of large Language models in the dentistry specialty examination

Why smart chatbots matter for future dentists

Artificial intelligence is rapidly changing how doctors and dentists learn and work. One of the most visible tools is the conversational chatbot powered by a large language model, the same kind of technology behind many popular AI assistants. This study asked a simple but important question: if dental students turned to these tools while preparing for a highly competitive specialty exam, how well would the chatbots actually answer its oral and maxillofacial radiology questions?

Testing AI on a real exam

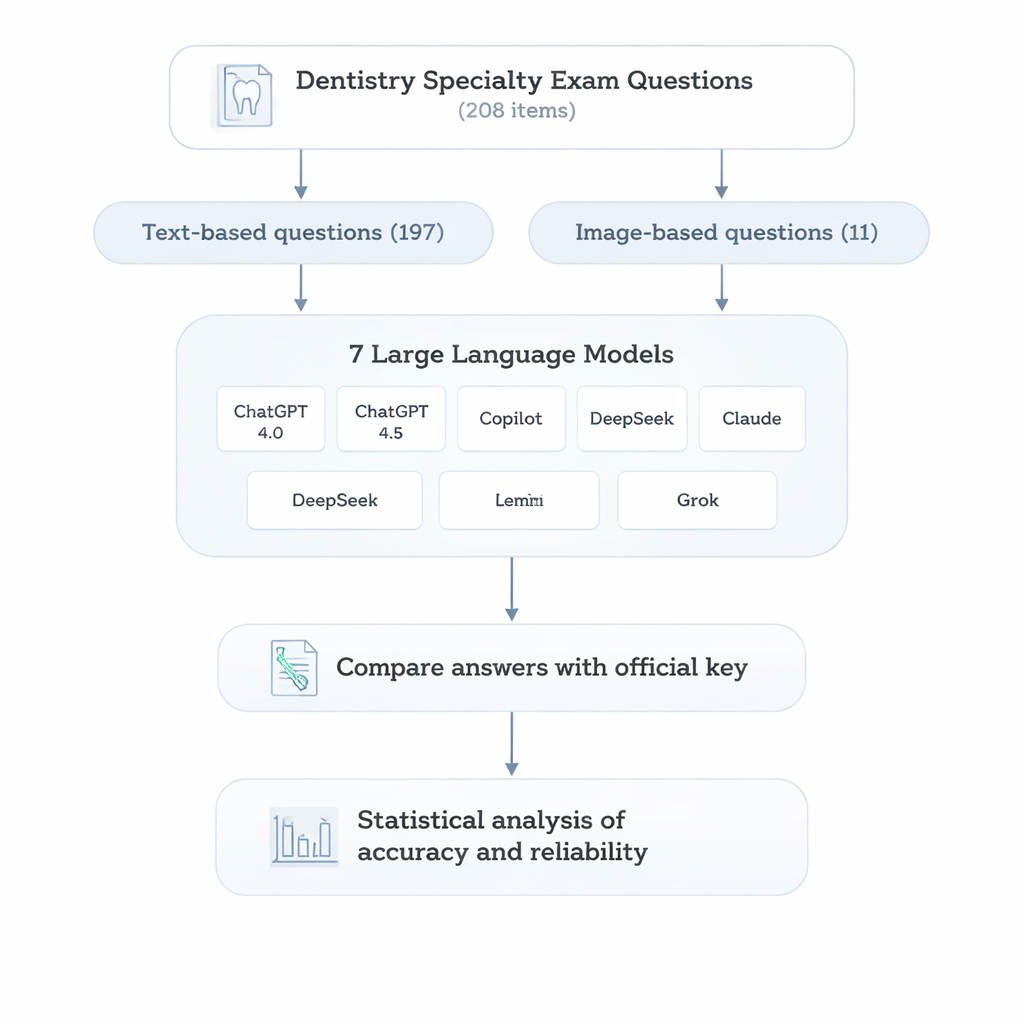

To find out, the researchers turned to the Dentistry Specialization Entrance Examination (DUS) in Türkiye, which helps determine who can enter advanced training programs. From past years of this nationwide test, they selected 208 multiple-choice questions covering topics that radiology specialists must master, from radiation physics and imaging techniques to jaw tumors and sinus diseases. Most of the questions were text-only, but a smaller set required interpreting radiographic images, mirroring real-life diagnostic work.

Seven chatbots take the same challenge

The team then posed every question, in Turkish, to seven widely used AI chatbots based on different large language models: two versions of ChatGPT, plus Gemini, Copilot, DeepSeek, Claude, and Grok. Each question was entered on its own so that earlier questions and answers could not carry over and influence later responses. A second researcher compared every AI answer with the official answer key and marked each as right or wrong. Finally, the authors used standard statistical tests to compare the models overall and within specific subject areas.

Who scored highest—and where they stumbled

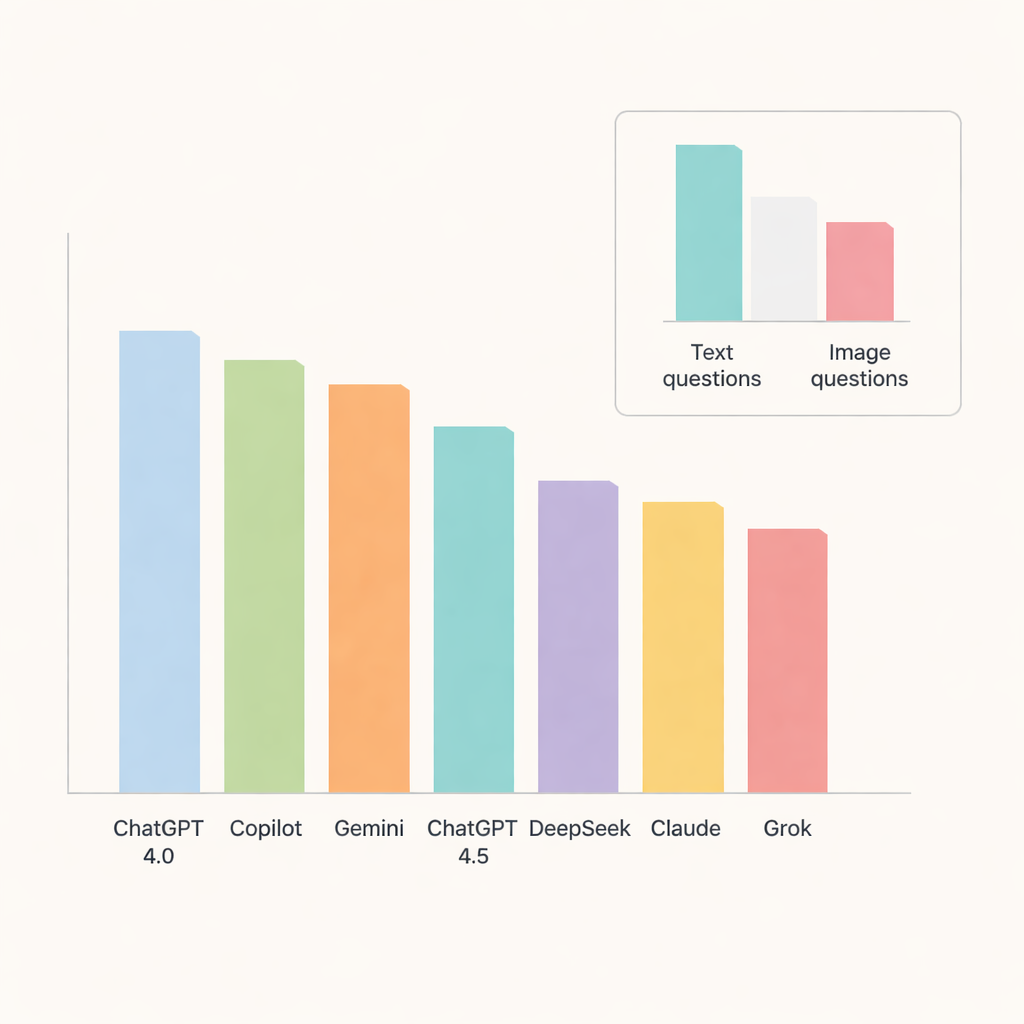

Among all the chatbots, ChatGPT 4.0 stood out, answering about 91 percent of questions correctly. Copilot and Gemini followed closely, with accuracy in the mid-to-high 80-percent range, while ChatGPT 4.5, DeepSeek, Claude, and Grok trailed somewhat further behind. When the researchers broke the results down by topic, the models did especially well on oral pathology and salivary gland diseases, where accuracy approached or exceeded 90 percent. In contrast, radiographic anatomy and soft tissue calcifications proved noticeably harder, pulling scores down across systems and hinting at areas where AI still struggles with fine-grained detail.

Pictures remain harder than words

A key test was whether the chatbots could handle images as well as text. Here, their limitations became clear. Accuracy dropped sharply on image-based questions, even for the best-performing models. ChatGPT 4.0, Gemini, and Copilot led this category but still answered only about two-thirds of visual questions correctly. DeepSeek performed worst on images, getting just over one-third right. For most models, the gap between text and image performance was large enough to be statistically significant, underscoring that interpreting medical images remains a tough task for today's general-purpose AI.

What this means for students and patients

The study’s bottom line is that modern chatbots can be powerful helpers in dental education, especially for reviewing facts and practicing exam-style questions in radiology. However, even the strongest systems make enough mistakes—particularly in visually demanding or highly specific topics—that they cannot safely replace expert judgment. For students and clinicians, these tools are best seen as smart study partners or decision aids, not as stand-alone authorities. Used with appropriate caution and oversight, they may speed learning and broaden access to high-quality explanations, while the final responsibility for diagnosis and treatment remains firmly with trained professionals.

Citation: Geduk, G., Hasırcı, U.C., Kusay, D.D. et al. A comparative analysis of the performance of large Language models in the dentistry specialty examination. Sci Rep 16, 6739 (2026). https://doi.org/10.1038/s41598-026-37800-8

Keywords: dental education, artificial intelligence, large language models, oral and maxillofacial radiology, medical examinations