Clear Sky Science · en

Simulated depression risk classification from Parkinson’s voice features using a self-attention-enhanced MLP architecture

Why the sound of a voice matters

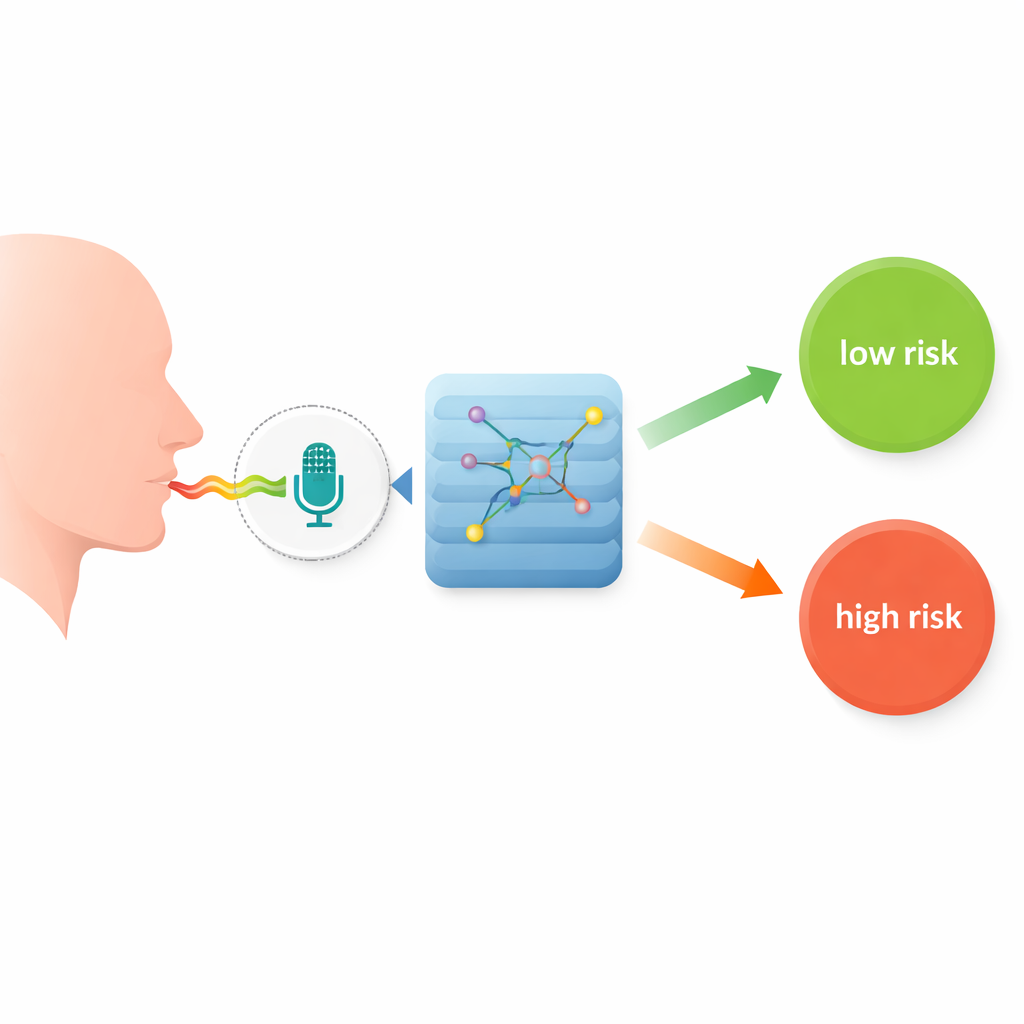

For many people living with Parkinson’s disease, the most noticeable changes are tremors or slower movement. But less visible changes, like mood and motivation, can quietly erode quality of life. Depression is common in Parkinson’s and often goes unrecognized. This study explores a surprisingly simple idea: could brief voice recordings, analyzed by an artificial intelligence (AI) system, help flag who might be at higher risk of depression, without the need for invasive tests or lengthy questionnaires?

Listening for hidden signals

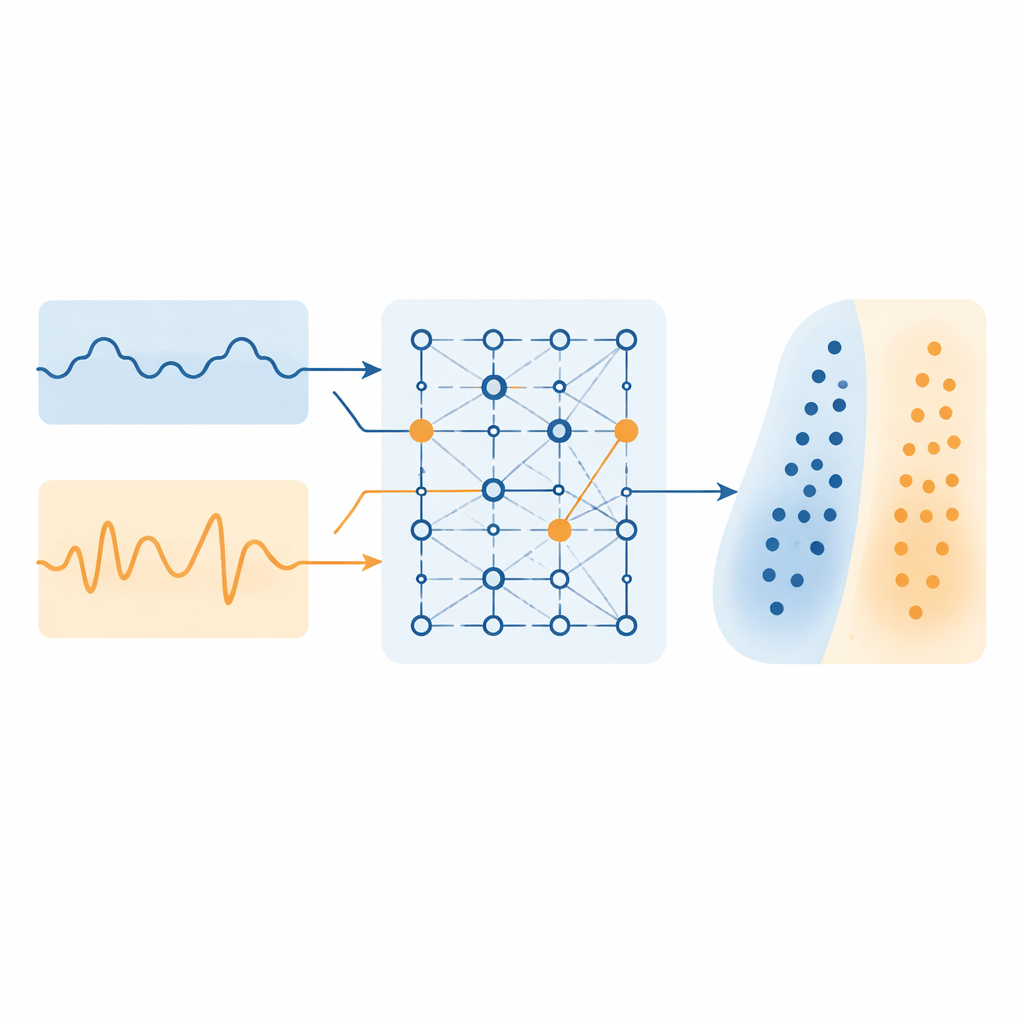

Parkinson’s disease affects the brain circuits that control not just movement, but also speech and emotion. As a result, the way a person speaks can subtly change. The authors focus on two measurable aspects of voice. One is how “clean” and steady the tone is compared to background noise, and the other is how much the pitch wobbles from one moment to the next. Healthier, more energetic voices tend to be clearer and more stable, while voices affected by low mood or reduced drive can become breathier and less controlled. By turning these aspects into numerical “voice biomarkers,” the researchers aim to capture mental health clues that are otherwise easy to miss.
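To make the idea of a pitch "wobble" concrete, here is a minimal sketch of one common way to quantify it, often called local jitter: the average change between consecutive pitch periods, divided by the average period. The summary does not say which exact measure the authors used, so the function name `local_jitter` and the sample values below are illustrative assumptions only.

```python
def local_jitter(periods):
    """Mean absolute difference between consecutive pitch periods,
    relative to the mean period (a unitless ratio)."""
    if len(periods) < 2:
        raise ValueError("need at least two pitch periods")
    diffs = [abs(b - a) for a, b in zip(periods, periods[1:])]
    mean_diff = sum(diffs) / len(diffs)
    mean_period = sum(periods) / len(periods)
    return mean_diff / mean_period

# Made-up pitch periods in seconds (about 100 Hz), for illustration only.
steady = [0.0100, 0.0100, 0.0100, 0.0100]
wobbly = [0.0100, 0.0104, 0.0097, 0.0103]

print(local_jitter(steady))  # 0.0
print(local_jitter(wobbly) > local_jitter(steady))  # True
```

A perfectly steady voice scores zero, and the score grows as the cycle-to-cycle variation in pitch increases.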

Turning raw sound into usable data

The study uses a publicly available collection of voice recordings from 195 people, some with Parkinson’s and some without. Each person sustained a simple vowel sound, and computer algorithms broke these recordings down into 22 detailed acoustic measurements. Before any AI model was trained, the team cleaned and standardized the data so that each feature could be fairly compared across individuals. They then focused on the two key voice measures and used simple cutoff values to place people into two groups: lower depression risk if the voice was both relatively clear and pitch-stable, and higher risk otherwise. The authors emphasize that these labels simulate risk for research purposes and are not the same as a clinical diagnosis made by a doctor.
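The standardization and labeling steps described above might look roughly like the sketch below. The cutoff values, the names `CLARITY_CUTOFF` and `INSTABILITY_CUTOFF`, and the sample recordings are all hypothetical; the summary does not state the thresholds the authors chose or whether they were applied before or after standardization.

```python
from statistics import mean, pstdev

def standardize(values):
    """Z-score a list of numbers: zero mean, unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

# Hypothetical cutoffs, chosen for illustration only.
CLARITY_CUTOFF = 20.0        # clarity score must be at least this
INSTABILITY_CUTOFF = 0.005   # pitch instability must be at most this

def simulated_risk(clarity, pitch_instability):
    """Return 0 (lower risk) only if the voice is both clear and
    pitch-stable; otherwise return 1 (higher risk)."""
    is_clear = clarity >= CLARITY_CUTOFF
    is_stable = pitch_instability <= INSTABILITY_CUTOFF
    return 0 if (is_clear and is_stable) else 1

# Made-up recordings: (clarity, pitch_instability)
recordings = [(24.1, 0.003), (18.5, 0.004), (22.0, 0.009), (15.2, 0.012)]
labels = [simulated_risk(c, p) for c, p in recordings]
print(labels)  # [0, 1, 1, 1]
```

Because a voice must pass both checks to count as lower risk, failing either one is enough to land in the higher-risk group.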

How the AI “pays attention”

Most traditional computer models treat each voice measure as an independent piece of information. In reality, these features often work together: a slightly noisier voice might mean something different if pitch is also unstable. To capture such relationships, the researchers build a self-attention–enhanced neural network. In simple terms, the network first transforms the set of voice features into an internal representation, then uses an attention mechanism to decide which combinations of features matter most for each person. This design allows the system to weigh, for example, whether a particular pattern of noise and pitch variation is especially telling for depression risk in Parkinson’s, and to refine its prediction accordingly.
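For readers who want to see the mechanics, the sketch below shows one plausible way to combine self-attention with a small multilayer perceptron (MLP), using NumPy. It is not the authors' architecture: the layer sizes, the choice to treat each voice feature as its own "token," the mean pooling, and every parameter name are assumptions, and the weights are random rather than trained.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def init_params(n_features, d_model=8, d_hidden=16, scale=0.1):
    """Random, untrained weights; all sizes are illustrative assumptions."""
    return {
        "embed_w": rng.normal(0, scale, (n_features, d_model)),
        "embed_b": rng.normal(0, scale, (n_features, d_model)),
        "wq": rng.normal(0, scale, (d_model, d_model)),
        "wk": rng.normal(0, scale, (d_model, d_model)),
        "wv": rng.normal(0, scale, (d_model, d_model)),
        "w1": rng.normal(0, scale, (d_model, d_hidden)),
        "b1": np.zeros(d_hidden),
        "w2": rng.normal(0, scale, (d_hidden, 1)),
        "b2": np.zeros(1),
    }

def forward(params, x):
    """x: (batch, n_features) standardized features.
    Returns (risk probabilities, attention weights)."""
    # Internal representation: each scalar feature becomes a d_model-dim token.
    tokens = x[:, :, None] * params["embed_w"] + params["embed_b"]  # (B, F, D)
    q = tokens @ params["wq"]
    k = tokens @ params["wk"]
    v = tokens @ params["wv"]
    # Scaled dot-product self-attention across the feature tokens.
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(q.shape[-1])  # (B, F, F)
    attn = softmax(scores, axis=-1)  # each row sums to 1
    mixed = attn @ v                 # (B, F, D)
    pooled = mixed.mean(axis=1)      # average over features -> (B, D)
    # MLP head: one hidden ReLU layer, then a sigmoid output.
    hidden = np.maximum(0.0, pooled @ params["w1"] + params["b1"])
    logits = hidden @ params["w2"] + params["b2"]
    prob = 1.0 / (1.0 + np.exp(-logits[:, 0]))
    return prob, attn

params = init_params(n_features=22)
x = rng.normal(size=(4, 22))  # four made-up people, 22 features each
prob, attn = forward(params, x)
print(prob.shape, attn.shape)  # (4,) (4, 22, 22)
```

The `attn` array holds, for each person, a 22-by-22 grid of weights describing how strongly each feature draws on every other feature, which is the sense in which the network weighs combinations of features rather than treating them one at a time.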

Putting the model to the test

The new model is evaluated against several widely used approaches, including support vector machines, k-nearest neighbors, and other deep learning methods. All models see the same voice data and simulated risk labels, and their performance is assessed with standard measures such as accuracy and sensitivity, that is, how often they correctly identify higher-risk cases. The self-attention network comes out on top, reaching about 97% accuracy and very strong scores for both catching higher-risk individuals (sensitivity) and correctly recognizing lower-risk ones (specificity). It also trains and runs quickly, suggesting that in principle it could support near real-time screening in clinics or even remote monitoring tools.
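The measures mentioned above can be computed from simple counts of right and wrong predictions. The sketch below uses made-up labels, not the study's data, and the function names are illustrative. It reports accuracy, the share of higher-risk cases caught (often called sensitivity), and the share of lower-risk cases correctly recognized (often called specificity).

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives, where 1 = higher risk."""
    tp = fp = tn = fn = 0
    for t, p in zip(y_true, y_pred):
        if t == 1 and p == 1:
            tp += 1
        elif t == 0 and p == 1:
            fp += 1
        elif t == 0 and p == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn

def screening_metrics(y_true, y_pred):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        # Share of truly higher-risk cases that were flagged.
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        # Share of truly lower-risk cases that were left unflagged.
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
    }

# Made-up labels for eight people (1 = higher risk, 0 = lower risk).
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = screening_metrics(y_true, y_pred)
print(metrics)  # {'accuracy': 0.75, 'sensitivity': 0.75, 'specificity': 0.75}
```

Reporting all three numbers matters because a model can score high on accuracy alone while still missing many higher-risk cases if those cases are rare.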

What this could mean for patients

The study shows that a short, simple voice recording, combined with a carefully designed AI model, can carry rich information about mental health risk in people with Parkinson’s disease. Although the current labels are based on rules rather than formal psychiatric evaluations, the work points toward a future in which non-invasive, everyday signals like speech could help clinicians spot problems earlier and track changes over time. With further validation using real clinical depression scores and more varied speech samples, this kind of voice-based screening could become a practical aid for monitoring emotional well-being alongside movement symptoms in Parkinson’s care.

Citation: Arasavali, N., Ashik, M., Nirmal, V. et al. Simulated depression risk classification from Parkinson’s voice features using a self-attention-enhanced MLP architecture. Sci Rep 16, 7869 (2026). https://doi.org/10.1038/s41598-026-37773-8

Keywords: Parkinson’s disease, voice analysis, depression risk, machine learning, digital biomarkers