Clear Sky Science

A lightweight hybrid perception enhancement network for infrared image super-resolution

Sharper Heat Vision for Everyday Technology

Infrared cameras let us “see” heat in the dark, through fog, or inside machines—but the images they produce are often blurry and low in detail. This paper introduces a new way to sharpen those fuzzy thermal pictures using artificial intelligence, so that security cameras, medical scanners, and industrial inspection tools can reveal clearer, more reliable information without needing bulkier or more expensive hardware.

Why Infrared Pictures Are Hard to Make Clear

Unlike smartphone cameras, infrared sensors capture invisible heat radiation rather than visible light. That makes them invaluable in security, defense, medicine, and equipment monitoring, where they can detect people at night, spot inflammation, or reveal overheating parts. However, infrared sensors are typically low in resolution because high-end detectors are costly and power-hungry. Software methods called super-resolution try to turn a coarse, low-resolution image into a sharper one. Traditional neural networks that use convolutions are good at picking up local patterns such as small edges, but they struggle to understand how different parts of the image relate over long distances. Newer transformer-based networks can capture this wider context but are heavy, slow, and tend to miss fine details like thin lines and textures—exactly the features that matter for small targets in infrared scenes.

Blending Two Ways of Seeing

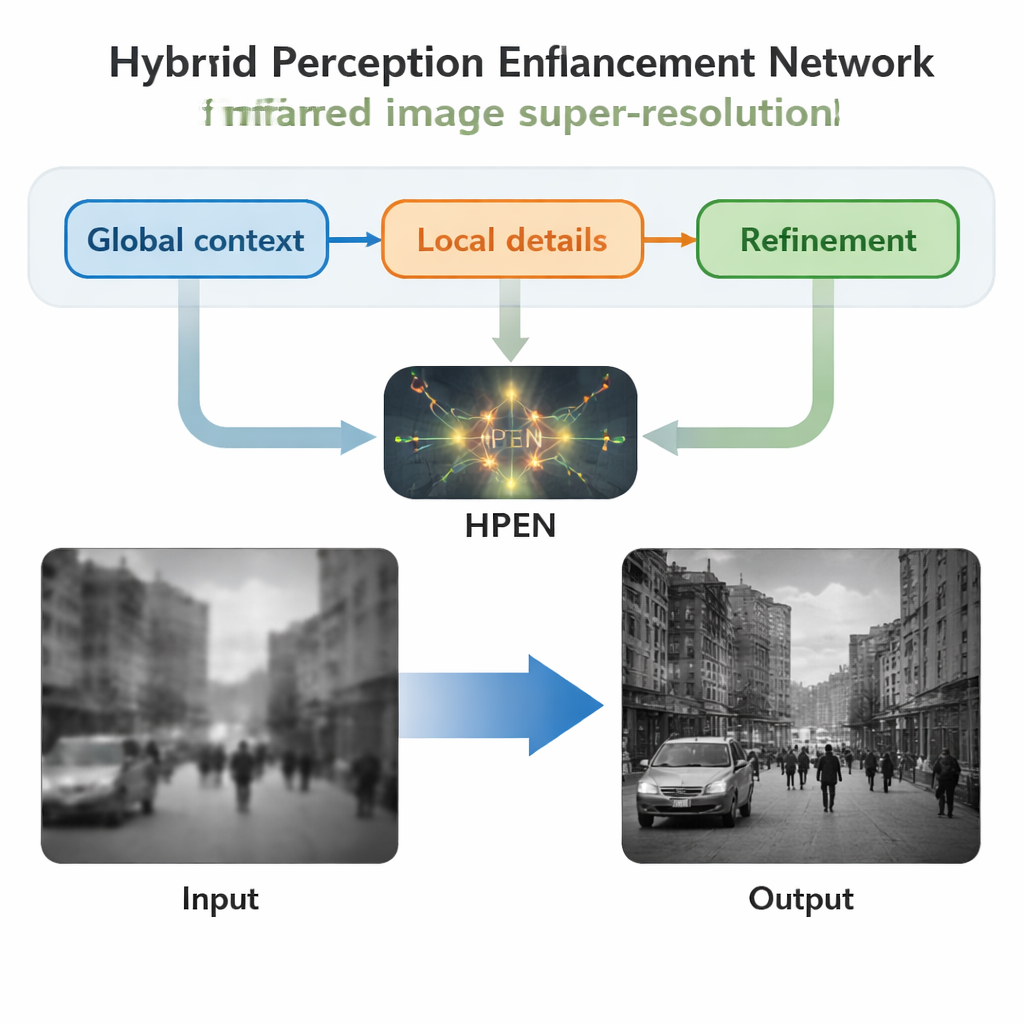

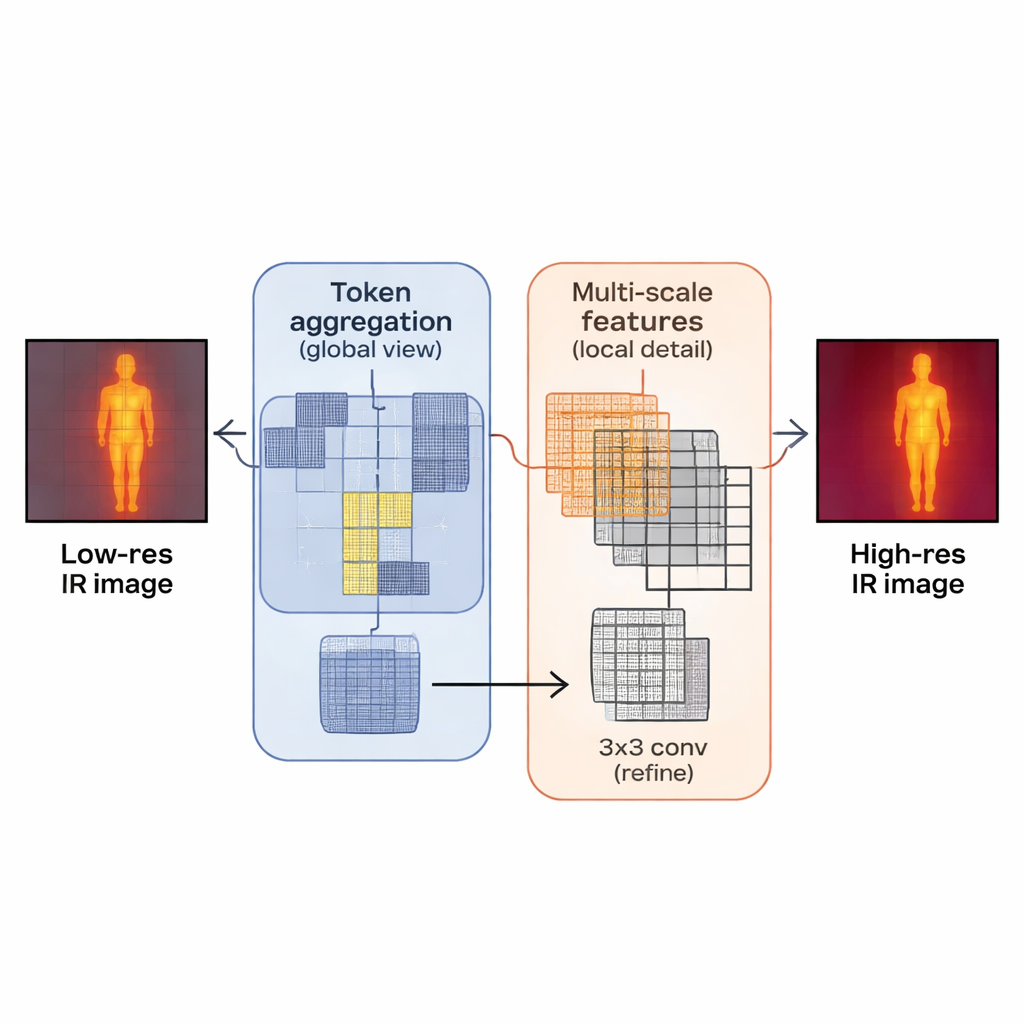

The authors propose a new model, the Hybrid Perception Enhancement Network (HPEN), designed specifically to balance detail and efficiency for infrared images. Its central building block, the Hybrid Perception Enhancement Block, combines three ideas in sequence. First, a “token aggregation” stage groups together similar patches across the image so the network can reason about the scene at a global level, rather like clustering related regions before deciding what they mean. Second, a “multi-scale feature” stage uses parallel processing paths to look at both small, fine-grained structures and slightly larger neighborhoods, helping the network keep track of edges, textures, and broader shapes at the same time. Finally, a simple 3×3 filter refines and cleans up the features, counteracting the smoothing side effects that large, global operations can introduce.
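The three stages described above can be pictured with a small NumPy sketch. It is only an illustration under loud assumptions, not the authors' implementation: the token grouping is approximated with a few k-means steps, the "learned" filters are random stand-ins, the 5×5 size of the larger path is a guess, and every function and parameter name (`token_aggregation`, `multi_scale`, `hybrid_block`, `mix`, and so on) is hypothetical.

```python
import numpy as np

def conv2d_same(x, weight):
    """Apply a k x k filter bank to an (H, W, C_in) array, keeping H and W.

    weight has shape (k, k, C_in, C_out); borders are edge-padded.
    """
    k = weight.shape[0]
    pad = k // 2
    padded = np.pad(x, ((pad, pad), (pad, pad), (0, 0)), mode="edge")
    h, w = x.shape[:2]
    out = np.zeros((h, w, weight.shape[3]))
    for dy in range(k):
        for dx in range(k):
            # Each kernel tap is a per-pixel linear map on a shifted view.
            out += padded[dy:dy + h, dx:dx + w] @ weight[dy, dx]
    return out

def token_aggregation(x, n_groups=4, n_iters=5, mix=0.5):
    """Stage 1 (assumed form): group similar pixels, then blend each pixel
    with the mean of its group so it carries image-wide context."""
    h, w, c = x.shape
    tokens = x.reshape(-1, c).astype(np.float64)
    start = np.linspace(0, len(tokens) - 1, n_groups).astype(int)
    centers = tokens[start].copy()          # deterministic initial groups
    for _ in range(n_iters):                # a few plain k-means steps
        dist = ((tokens[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
        labels = dist.argmin(axis=1)
        for g in range(n_groups):
            members = tokens[labels == g]
            if len(members) > 0:
                centers[g] = members.mean(axis=0)
    mixed = (1.0 - mix) * tokens + mix * centers[labels]
    return mixed.reshape(h, w, c)

def multi_scale(x, w_small, w_large):
    """Stage 2 (assumed form): two parallel paths with different window sizes."""
    return conv2d_same(x, w_small) + conv2d_same(x, w_large)

def hybrid_block(x, params):
    """Run the three stages in the order the article describes."""
    y = token_aggregation(x)
    y = multi_scale(y, params["small"], params["large"])
    return conv2d_same(y, params["refine"])  # Stage 3: 3x3 refinement

def init_params(channels, seed=0):
    """Random stand-ins for weights that would normally be learned."""
    rng = np.random.default_rng(seed)
    def make(k):
        return rng.normal(0.0, 0.05, size=(k, k, channels, channels))
    return {"small": make(3), "large": make(5), "refine": make(3)}

features = np.random.default_rng(1).random((12, 16, 8))
out = hybrid_block(features, init_params(8))
print(out.shape)  # (12, 16, 8)
```

The point of the sketch is the ordering: a global grouping step first, then parallel local paths at two scales, then a small filter that works only on immediate neighbors.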

Inside the New Sharpening Engine

Zooming out to the full HPEN system, the process begins by lightly processing the low-resolution infrared image to extract basic patterns. That information is then passed through a series of the hybrid blocks, each one deepening the model’s understanding of the scene by combining long-range relationships with small-scale details. A shortcut connection lets the original coarse information bypass these deeper layers, so the network can focus its efforts on reconstructing the missing high-frequency content, such as crisp edges and small hot spots. In the final stage, a compact upsampling module scales the features up to the target resolution and converts them into a sharpened infrared image of the same size as a high-quality reference. Throughout, the design is intentionally lightweight, keeping the number of operations and memory usage low enough for practical deployment on common graphics processors.
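The overall flow (light feature extraction, a stack of blocks, a shortcut around them, then upsampling) might be sketched as follows. This is a toy under stated assumptions rather than the published architecture: the upsampling step is assumed to be a pixel-shuffle rearrangement, which is common in lightweight super-resolution but is not named in this summary, the channel layout inside `pixel_shuffle` is chosen for simplicity, and the layers are random per-pixel stand-ins. All names (`super_resolve`, `pointwise`, `toy_block`) are hypothetical.

```python
import numpy as np

def pixel_shuffle(x, scale):
    """Rearrange (H, W, C * scale**2) features into (H * scale, W * scale, C)."""
    h, w, c = x.shape
    if c % (scale * scale) != 0:
        raise ValueError("channel count must be divisible by scale**2")
    c_out = c // (scale * scale)
    x = x.reshape(h, w, scale, scale, c_out)
    x = x.transpose(0, 2, 1, 3, 4)          # interleave rows and columns
    return x.reshape(h * scale, w * scale, c_out)

def pointwise(weight):
    """Per-pixel linear layer (a 1x1 convolution) standing in for a learned layer."""
    return lambda feats: feats @ weight

def toy_block(weight):
    """Placeholder for one hybrid block; the real block is far richer."""
    return lambda feats: np.tanh(feats @ weight)

def super_resolve(lr_image, shallow, blocks, expand, scale):
    shallow_feats = shallow(lr_image)        # basic patterns
    deep_feats = shallow_feats
    for block in blocks:                     # stacked blocks
        deep_feats = block(deep_feats)
    fused = shallow_feats + deep_feats       # shortcut around the deep layers
    return pixel_shuffle(expand(fused), scale)

rng = np.random.default_rng(0)
channels, scale = 8, 4
shallow = pointwise(rng.normal(0.0, 0.1, (1, channels)))
blocks = [toy_block(rng.normal(0.0, 0.1, (channels, channels))) for _ in range(3)]
expand = pointwise(rng.normal(0.0, 0.1, (channels, scale * scale)))

lr_image = rng.random((16, 20, 1))           # single-channel thermal frame
sr_image = super_resolve(lr_image, shallow, blocks, expand, scale)
print(sr_image.shape)  # (64, 80, 1)
```

Because of the shortcut, the stacked blocks only need to supply what the shallow features lack. If they output zeros, the result falls back to an upsampled version of the shallow features.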

How Well the Method Works in Practice

To test HPEN, the authors trained and evaluated it on several public infrared datasets that include city scenes, vegetation, vehicles, pedestrians, and night conditions. They compared it to many recent “lightweight” super-resolution methods that aim to be both accurate and efficient. HPEN consistently matched or slightly outperformed these rivals on standard quality measures that track how close the sharpened image is to a high-resolution reference. It was particularly strong on the harder four-times enlargement setting, where turning a very small image into a much larger one often reveals artifacts. Alongside this accuracy, HPEN used substantially fewer computations and much less graphics card memory, and it ran faster than strong transformer-based competitors. Additional tests using perceptual quality measures, which approximate how a human viewer would judge an image, showed that HPEN’s results looked the most similar to real high-resolution infrared images, with fewer washed-out edges and better-preserved textures.
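To make "how close the sharpened image is to a high-resolution reference" concrete, here is a minimal sketch of peak signal-to-noise ratio (PSNR), a widely used fidelity measure in super-resolution work. This summary does not name the exact metrics the authors used, so treating PSNR as one of them is an assumption, as are the function name and the 8-bit `max_value` default.

```python
import numpy as np

def psnr(reference, estimate, max_value=255.0):
    """Peak signal-to-noise ratio in decibels; higher means closer to the reference."""
    reference = np.asarray(reference, dtype=np.float64)
    estimate = np.asarray(estimate, dtype=np.float64)
    if reference.shape != estimate.shape:
        raise ValueError("images must have the same shape")
    mse = np.mean((reference - estimate) ** 2)   # mean squared pixel error
    if mse == 0:
        return float("inf")                      # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)

# Toy comparison: a reference frame and a copy with mild noise added.
rng = np.random.default_rng(0)
reference = rng.integers(0, 256, size=(64, 80)).astype(np.float64)
noisy = np.clip(reference + rng.normal(0.0, 5.0, reference.shape), 0, 255)
print(f"PSNR of noisy copy: {psnr(reference, noisy):.2f} dB")
```

A measure like this rewards pixel-level agreement, which is why perceptual tests are usually reported alongside it: two images can have similar PSNR while one looks noticeably more washed out.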

What This Means for Real-World Uses

For a non-specialist, the key message is that HPEN offers a smarter way to “enhance the zoom” on thermal cameras without changing the hardware. By carefully combining global context (understanding the whole scene) with local detail (preserving tiny edges and textures) in an efficient package, the method produces sharper, more informative infrared images while keeping computing costs under control. This could help surveillance systems spot people or vehicles more clearly in darkness, allow industrial inspectors to see fine cracks or hot spots on equipment, and give doctors clearer thermal patterns during non-invasive screening—all using existing sensors that suddenly see more than they did before.

Citation: Liu, Z., Tian, J., Liu, C. et al. A lightweight hybrid perception enhancement network for infrared image super-resolution. Sci Rep 16, 6572 (2026). https://doi.org/10.1038/s41598-026-37763-w

Keywords: infrared imaging, super-resolution, deep learning, image enhancement, computer vision